Jun Yamog

507 posts

Anyone here still running an old V100 (Volta)? If so, could you test my patch?

If not, you can still enjoy the comments of me being out of my depth and using Codex to get V100 working for Qwen 3.5 397B and GLM 4.5 🤣

github.com/ikawrakow/ik_l…

@davideciffa @MKay88905412917 @csujun Oh, I didn’t realize this. I will try tomorrow on my 5090. I am also tracking a bug: after lots of interactions with Hermes, and maybe close to 65k of filled context, I sometimes still get the empty reply issue. So my bug fix was incomplete.

@MKay88905412917 @csujun @jkyamog It already works with bigger quants. We optimize for the RTX 3090, so we had to focus on Q4, but if you change the model and try it on a bigger card like a 5090, it should work without problems.

@LenProkopets @davideciffa @csujun Currently getting Qwen 3.5 397B running. Please test my patch: github.com/ikawrakow/ik_l… (#issuecomment-4417797159)

@jkyamog @davideciffa @csujun Cool. I am looking forward to whatever you can do on v100s! I have 3 of them and want to use them to their full potential.

@Raitziger @davideciffa @csujun I can try tomorrow, but you might be able to get it running in Docker. I use Docker on Linux via LXC. See my config here: github.com/Luce-Org/luceb…

@davideciffa @csujun @jkyamog Luce is great. I just had to go back to Windows because I am SSD poor on my gaming PC. Had a stab at building Luce for Windows but ran into some ggml linking issue. Do you know anyone running Lucebox on Windows other than via WSL?

@kawaiiconNZ Thanks for the great con... it's been a while since I attended. I have given Zante a link to my photo album; maybe some of the photos/videos might be useful.

Jun Yamog @jkyamog

Someone @office asked me "have you learned anything yet?" Me: "No, I don't come to @kiwicon to learn, I come for the lights, fire and sheep"

Cape Reinga on the first day of 2015. Driving the length of NZ, EV power, no fossil fuel! #LeadingTheCharge

@larsmoravy Please try to fix the auto wipers. If not please offer a gradient control from low to max instead of the clunky I, II, III, IIII

@alexocheema @exolabs Thanks for explaining the memory refresh rate, I wasn’t aware of this. Also good to understand that architectures like MoE help with it. I guess the era of big VRAM is upon us. It kinda feels like 128GB is just a start.

Apple's timing could not be better with this.

The M3 Ultra 512GB Mac Studio fits perfectly with massive sparse MoEs like DeepSeek V3/R1.

2 M3 Ultra 512GB Mac Studios with @exolabs is all you need to run the full, unquantized DeepSeek R1 at home.

The first requirement for running these massive AI models is that they need to fit into GPU memory (in Apple's case, unified memory). Here's a quick comparison of how much that costs for different options (note: DIGITS is left out here since details are still unconfirmed):

NVIDIA H100: 80GB @ 3TB/s, $25,000, $312.50 per GB

AMD MI300X: 192GB @ 5.3TB/s, $20,000, $104.17 per GB

Apple M2 Ultra: 192GB @ 800GB/s, $5,000, $26.04 per GB

Apple M3 Ultra: 512GB @ 800GB/s, $9,500, $18.55 per GB

That's a 28% reduction in $ per GB from the M2 Ultra - pretty good.
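The per-GB figures above are simply price divided by memory capacity. A minimal Python sketch of that arithmetic, using only the prices and capacities quoted in the post (the dictionary and function names are illustrative, not from the post):

```python
# (memory in GB, price in USD), as quoted in the post.
devices = {
    "NVIDIA H100": (80, 25_000),
    "AMD MI300X": (192, 20_000),
    "Apple M2 Ultra": (192, 5_000),
    "Apple M3 Ultra": (512, 9_500),
}


def cost_per_gb(memory_gb, price_usd):
    """Dollars paid per GB of device memory."""
    return price_usd / memory_gb


costs = {name: cost_per_gb(*spec) for name, spec in devices.items()}
for name, cost in costs.items():
    print(f"{name}: ${cost:.2f} per GB")

# Relative drop in $ per GB going from the M2 Ultra to the M3 Ultra.
reduction = 1 - costs["Apple M3 Ultra"] / costs["Apple M2 Ultra"]
print(f"M3 Ultra vs M2 Ultra: {reduction:.2%} lower $ per GB")
```

The exact reduction works out to about 28.75%, which the post gives as 28%.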

The concerning thing here is the memory refresh rate. This is the ratio of memory bandwidth to memory of the device. It tells you how many times per second you could cycle through the entire memory on the device. This is the dominating factor for the performance of single request (batch_size=1) inference. For a dense model that saturates all of the memory of the machine, the maximum theoretical token rate is bound by this number. Comparison of memory refresh rate:

NVIDIA H100 (80GB): 37.5/s

AMD MI300X (192GB): 27.6/s

Apple M2 Ultra (192GB): 4.17/s (9x less than H100)

Apple M3 Ultra (512GB): 1.56/s (24x less than H100)
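The refresh rates listed here follow directly from the definition (memory bandwidth divided by memory capacity). A short Python sketch using the bandwidth and capacity figures quoted earlier in the post, with TB/s converted to GB/s at 1 TB = 1000 GB (the names are illustrative):

```python
# (memory in GB, bandwidth in GB/s), as quoted in the post.
devices = {
    "NVIDIA H100": (80, 3000),
    "AMD MI300X": (192, 5300),
    "Apple M2 Ultra": (192, 800),
    "Apple M3 Ultra": (512, 800),
}


def refresh_rate(memory_gb, bandwidth_gb_s):
    """How many times per second the whole memory could be read once."""
    return bandwidth_gb_s / memory_gb


rates = {name: refresh_rate(*spec) for name, spec in devices.items()}
h100 = rates["NVIDIA H100"]
for name, rate in rates.items():
    print(f"{name}: {rate:.2f}/s ({h100 / rate:.1f}x less than H100)")
```

For a dense model that fills the device's memory, this number is also the theoretical ceiling on tokens per second at batch size 1, since every weight has to be read once per token.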

Apple is trading off more memory for less memory refresh frequency, now 24x less than an H100. Another way to look at this is to analyze how much it costs per unit of memory bandwidth. Comparison of cost per GB/s of memory bandwidth (cheaper is better):

NVIDIA H100 (80GB): $8.33 per GB/s

AMD MI300X (192GB): $3.77 per GB/s

Apple M2 Ultra (192GB): $6.25 per GB/s

Apple M3 Ultra (512GB): $11.875 per GB/s
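The same comparison can be reproduced by dividing price by bandwidth and sorting. A small sketch, again using only the numbers quoted in the post (the names are illustrative):

```python
# (bandwidth in GB/s, price in USD), as quoted in the post.
devices = {
    "NVIDIA H100": (3000, 25_000),
    "AMD MI300X": (5300, 20_000),
    "Apple M2 Ultra": (800, 5_000),
    "Apple M3 Ultra": (800, 9_500),
}


def cost_per_bandwidth(bandwidth_gb_s, price_usd):
    """Dollars paid per GB/s of memory bandwidth."""
    return price_usd / bandwidth_gb_s


# Cheapest bandwidth first.
ranked = sorted(devices, key=lambda name: cost_per_bandwidth(*devices[name]))
for name in ranked:
    print(f"{name}: ${cost_per_bandwidth(*devices[name]):.3f} per GB/s")
```

Sorted this way, the M3 Ultra comes out as the most expensive option per unit of bandwidth even though it is the cheapest per GB of memory.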

There are two ways Apple wins with this approach. Both are hierarchical model structures that exploit the sparsity of model parameter activation: MoE and Modular Routing.

MoE adds multiple experts to each layer and picks the top-k of N experts in each layer, so only a fraction k/N of the experts is active per layer. The sparser the activation (the smaller the ratio k/N), the better for Apple. DeepSeek R1's ratio is small: 8/256 = 1/32. Model developers could likely push this even lower; we might see a future where k/N is something like 8/1024 = 1/128 (<1% activated parameters).

Modular Routing includes methods like DiPaCo and dynamic ensembles where a gating function activates multiple independent models and aggregates the results into one single result. For this, multiple models need to be in memory but only a few are active at any given time.

Both MoE and Modular Routing require a lot of memory but not much memory bandwidth because only a small % of total parameters are active at any given time, which is the only data that actually needs to move around in memory.
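To make the sparsity argument concrete, here is a rough Python sketch of how the active fraction k/N changes the bandwidth-bound token rate. It rests on a loud simplification that is not from the post: it treats all weights as expert weights and ignores shared layers, attention, KV cache and routing overhead, so the result is an upper bound for illustration only. The 512 GB and 800 GB/s values are the M3 Ultra figures quoted earlier; the function names are hypothetical.

```python
from fractions import Fraction


def active_fraction(k, n):
    """Fraction of experts used per layer when picking the top-k of n."""
    return Fraction(k, n)


def max_tokens_per_s(bandwidth_gb_s, weights_gb, fraction=Fraction(1)):
    """Upper bound on batch-size-1 token rate: bandwidth / bytes read per token.

    Assumes (simplification) that only `fraction` of the weights is read
    for each token and that nothing else competes for bandwidth.
    """
    return bandwidth_gb_s / (weights_gb * float(fraction))


dense = max_tokens_per_s(800, 512)                            # every weight read per token
sparse = max_tokens_per_s(800, 512, active_fraction(8, 256))  # 1/32 of weights read

print(f"8/256  = {active_fraction(8, 256)}")
print(f"8/1024 = {active_fraction(8, 1024)} ({float(active_fraction(8, 1024)):.2%})")
print(f"dense bound:  {dense:.2f} tok/s")
print(f"sparse bound: {sparse:.2f} tok/s")
```

Under these assumptions the bound scales by N/k, which is why a large but relatively slow memory pool suits sparse models far better than dense ones.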

Funny story... 2 weeks ago I had a call with one of Apple's biggest competitors. They asked if I had a suggestion for a piece of AI hardware they could build. I told them, go build a 512GB memory Mac Studio-like box for AI. Congrats Apple for doing this. Something I thought would still take you a few years to do you did today. I'm impressed.

Looking forward, there will likely be an M4 Ultra Mac Studio next year which should address my main concern since these Ultra chips use Apple UltraFusion to fuse Max dies. The M4 Max had a 36.5% increase in memory bandwidth compared to the M3 Max, so we should see something similar (or possibly more depending on the configuration) in the M4 Ultra.

@alexocheema @WSJ @naval Congrats! I've been running exo on an M4 Max and an M1 Pro. Your mission to make AI distributed is important, good on you for doing it. I hope that in the future distributed fine-tuning/training will be possible w/o doing PyTorch gymnastics.

8 months ago, exo was a hackathon project. Today it's front page of The Wall Street Journal @WSJ.

We're a real company now (I guess..?), we raised some money from a few investors like @naval, hit #1 trending on github, published at ICML, shipped an enterprise product, and we're hiring.

Our mission at @exolabs is simple. We don't want AI to be controlled by a few companies. We're making it more distributed.

@KiwiAly @MattKingNorth OK, thanks for the info. From what we understand, we have to show proof of physical damage for an ACC claim. Southern Cross said they have multiple cases like hers, where only symptoms occur but there is no physical evidence. Southern Cross has helped us with surgery, tests, etc.

@jkyamog @MattKingNorth Make sure you ask ACC for a review. This then goes to the ICRA independent authority.

@KiwiAly @MattKingNorth My wife is not counted in those numbers. ACC rejected it despite Southern Cross helping our case. ACC only accepts claims with physical injury. MRI can’t see any physical issues despite all the symptoms. 3 years on she is getting other symptoms.

This was my OIA. What is interesting is I have another Medsafe OIA, H2023033888, which shows the updated figure is now at 20,559 SERIOUS LIFE-THREATENING INJURIES OTHER THAN DEATH. Yet only 1600+ claims through ACC have been approved, for a total of $11,429,594. Their figures do not stack up! Only 1600 of 20,559 serious life-threatening injury victims have made an ACC claim or have had their ED treatment go via ACC? That doesn't seem right, and it's not.

@JoeTurco @elonmusk That has already worked for a long time. I have been using it for a while: tesla.com/en_nz/support/…

Solar power will be the vast majority of power generation in the future

ALEX @ajtourville

NEWS: Rooftop solar delivers milestone of 80.5% share of electricity generation in Western Australia 🇦🇺 Yesterday at 1:30 PM, distributed solar PV accounted for 2.12 gigawatts of output, with natural gas and coal both reduced to shares of 8.6% and 8.3% respectively.

@alexocheema @exolabs Wow, great. It would be interesting to see a benchmark of 1 M4 Max 128GB vs 2 M4 Pro 64GB. That way both get 128GB and 40 GPU cores, and we would see how much the Thunderbolt 5 overhead is.

M4 Mac Mini AI Cluster

Uses @exolabs with Thunderbolt 5 interconnect (80Gbps) to run LLMs distributed across 4 M4 Pro Mac Minis.

The cluster is small (iPhone for reference). It’s running Nemotron 70B at 8 tok/sec and scales to Llama 405B (benchmarks soon).

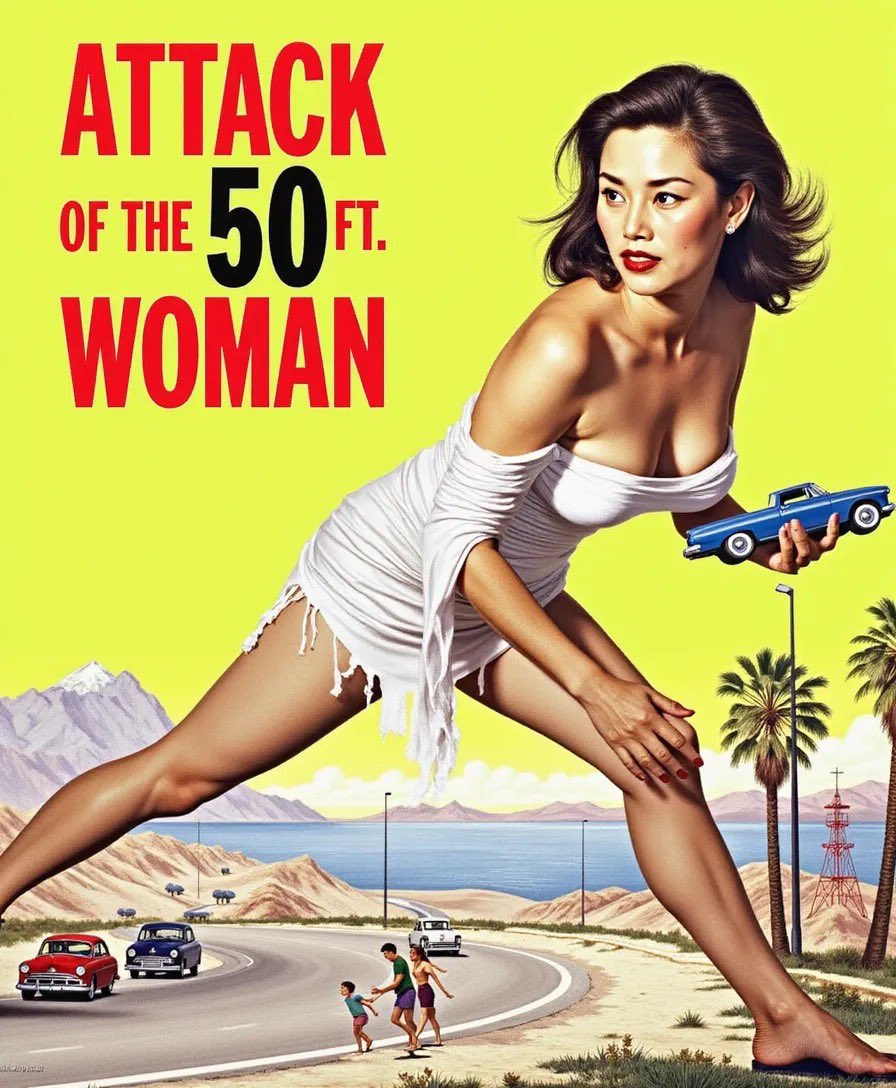

@JREOfficial Forgot the exact values, but they are close to the recommended values. LoRA scale 0.8, steps 38, guidance slightly higher. Btw that photo is my wife’s real photo. This is the generated photo.

There are so many video models now, and the top ones are all Chinese models, not Western:

- Kling AI

- Hailuo

- Qingying / Zhipu AI

Luma and Runway are way behind the Chinese video AI models IMHO

Interesting to see China miss the boat on LLMs (ChatGPT) and AI imaging (SD and Flux) but now catch back up and lead in AI video!

Fekri @fekdaoui

@levelsio + AI providers like runwayml are slowly starting to roll out their APIs. Turbo acceleration incoming
