Round 1 of the DKG v10 bounty is open. AI is shifting toward multi-agent systems, where shared and verifiable memory becomes essential. Open integrations built today will shape this future. Start now ↓

LuKu_$trac

24.2K posts

@cryptocodys Yup getting used to these random 30% pumps haha. Happen quite frequently and no surprise considering we have been sitting in our HTF accumulation zone. Just a matter of time until we launch off of it.

Please support @dmitry_charts and @OTHub_io to bring advanced DKG network stats to DKG V10! Donation wallet: 0x62eAFb57b1f4ce0737C97F56AC6d663dA16ea3f6 Details: dkgswarm.com/donate

The DKG V10 Mainnet timeline is now live! The V10 is the result of years of building, billions of Knowledge Assets, and the unstoppable contributions from publishers, stakers, and node runners across the entire @Origin_Trail ecosystem.

📅 Key dates:
Apr 8 → V10 Release Candidate
Apr 9 → Epoch snapshot
Apr 10 → Publishers begin migrating to V10
Apr 15–17 → V10 Mainnet goes live on all networks
Apr 15–17 → New Conviction System Staking UI launches (TRAC migrates to V10 conviction; stakers receive the same total emissions, now accrued 3 years faster)
Apr 20+ → Ongoing updates + bounty program

In the age of AI, shared verifiable context is the ultimate moat.

Community-built @OTHub_io has been providing @origin_trail DKG statistics to all network participants since V5. Please support @dmitry_charts so he can update othub! DKG V10 is expected to see phenomenal growth. Let's see that growth on those amazing chawts!

As we release the @origin_trail DKG v10 candidate today, we are putting the new Conviction staking mechanism, to be released on the mainnet next, under the spotlight. Selecting a conviction level (No Lock → 365 days) now directly determines your rewards. How does it work? 🧵

🇨🇭 Switzerland ranks among Europe’s safest rail systems. Keeping it that way depends on trusted data behind critical parts. Swiss Federal Railways (@RailService) uses @origin_trail to trace critical rail components using trusted, interoperable data built on @gs1 standards.

We've been quiet for months. On purpose. We signed an MOU with the Ministry of Investment and International Cooperation of the Republic of Sudan 🇸🇩 — a framework for trade finance, food security, supply chain verification, and infrastructure development. We went there because the country is rebuilding — and our technology powered by @origin_trail was built for exactly this challenge. Transparent. Verifiable. Accessible to markets traditional finance hasn't served. We're also building something bigger: 100% traceable donations 🔗— every dollar verified from source to delivery. Sudan chose to rebuild. We chose to be part of it. Interested in being part of this? DM us!! #Sudan #TradeFinance #Reconstruction #FoodSecurity #Skyocean

.@karpathy just described @origin_trail without saying it. Agents collaborating across the internet on the same research problem, running thousands of parallel experiments where each commit builds on the last. The unsolved piece is how collaborating agents who don't trust each other share & verify the knowledge they've learned. That's what context graphs on the new DKG do. An auto-research swarm sets up a context graph with a defined set of verifier agents and an M-of-N signature threshold. Untrusted agents run experiments and submit results as Knowledge Assets. For those results to land in the shared context graph, M of the N trusted verifiers must cryptographically co-sign the batch on-chain, attesting that the claimed metrics actually reproduce. The result is a growing, queryable knowledge graph of verified experimental results that any agent in the swarm can query to decide what to try next, built on a trust layer where untrusted contributors do the heavy lifting and trusted verifiers keep the graph honest.
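
The M-of-N co-signing rule described above can be sketched in a few lines. This is only an illustration under loud assumptions: `ContextGraph`, `sign`, and `submit` are hypothetical names, not the DKG API, and HMAC-SHA256 with shared keys stands in for the real on-chain signatures purely so the example runs with the standard library.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


def sign(key: bytes, batch: bytes) -> bytes:
    """Stand-in for a verifier's signature (HMAC-SHA256, not a real on-chain signature)."""
    return hmac.new(key, batch, hashlib.sha256).digest()


@dataclass
class ContextGraph:
    """Hypothetical context graph with N trusted verifiers and a threshold M."""
    verifier_keys: dict[str, bytes]  # assumed: verifier id -> signing key
    threshold: int                   # M in "M of N"
    accepted: list[bytes] = field(default_factory=list)

    def submit(self, batch: bytes, signatures: dict[str, bytes]) -> bool:
        """Accept the batch only if at least M distinct known verifiers signed it."""
        valid = {
            vid
            for vid, sig in signatures.items()
            if vid in self.verifier_keys
            and hmac.compare_digest(sig, sign(self.verifier_keys[vid], batch))
        }
        if len(valid) >= self.threshold:
            self.accepted.append(batch)
            return True
        return False


# Usage: a 2-of-3 verifier set checking one batch of experiment results.
keys = {"v1": b"key-1", "v2": b"key-2", "v3": b"key-3"}
graph = ContextGraph(verifier_keys=keys, threshold=2)
batch = b'{"experiment": "run-42", "val_loss": 0.31}'

accepted = graph.submit(
    batch,
    {"v1": sign(keys["v1"], batch), "v2": sign(keys["v2"], batch)},
)
```

Keying the signatures by verifier id means each verifier counts at most once toward the threshold, and signatures from unknown ids are ignored.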

🆕Imagine hundreds of agents working in parallel, handing off to one another and building on each other's work. Every finding becomes a cryptographically anchored Knowledge Asset: verifiable, permanent, owned by the publisher, and queryable by any agent on the network. Enter Decentralized Knowledge Graph v9, already powering AI agent swarms to be: → up to 60% faster → up to 40% cheaper than markdown handoffs. The advantage compounds as the swarm grows. Build something exciting—or simply run a hello-world OriginTrail multiplayer game to try it!

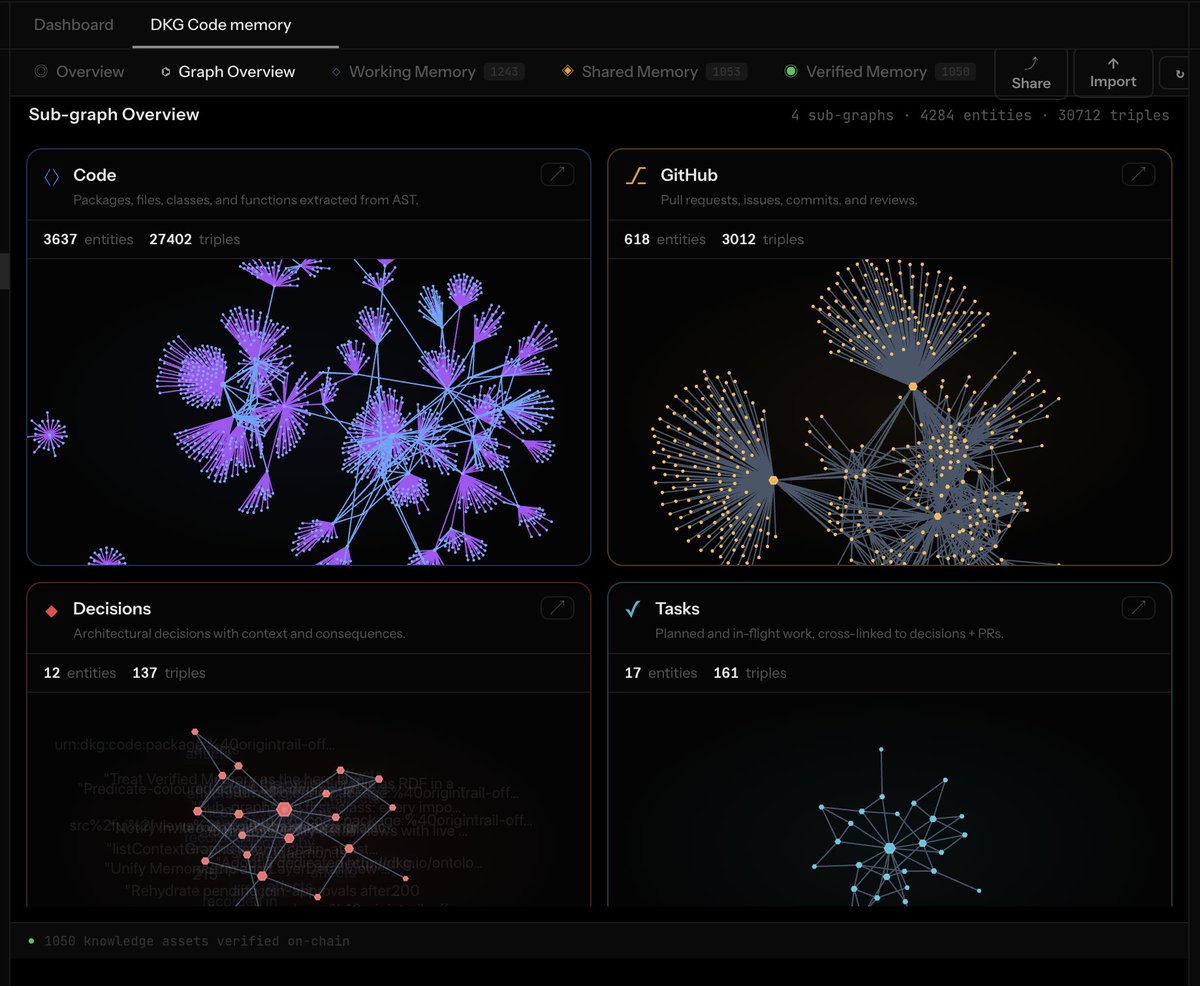

.@karpathy's model shows how LLMs turn raw research into a living knowledge base - every answer compounds into a wiki that gets smarter over time. But it has a critical gap: that wiki is local, unverifiable, and siloed to a single agent. The moment you scale to AI agent swarms with hundreds of agents collaborating across the internet, you need to answer a question Karpathy himself flagged: how do you coordinate an untrusted pool of workers?

@origin_trail's DKG V10 solves this directly. Karpathy's Wiki becomes Working Memory: per-agent, local, never leaving the node. From there, the DKG adds what's missing:
↑ Shared Working Memory: collaborative staging, gossiped across network members
↑ Long-term Memory: permanent, chain-confirmed, immutable record
↑ Verified Memory: multi-party attested, anchored on-chain, readable by the Context Oracle across all layers

What Karpathy envisioned as a smart wiki becomes trustless, multi-agent knowledge infrastructure. Every answer still compounds. But now the compounding is shared, verifiable, and owned by no single party.
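
One way to picture the four memory layers is as an ordered set of tiers that an entry moves up through. The sketch below is a toy model, not the DKG's data model: `Tier`, `Entry`, `promote`, and `leaves_node` are hypothetical names, and the one-step-at-a-time promotion is an assumption read from the upward arrows in the post.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Memory layers in the order the post lists them (lowest first)."""
    WORKING = 0         # per-agent, local, never leaves the node
    SHARED_WORKING = 1  # collaborative staging, gossiped across members
    LONG_TERM = 2       # permanent, chain-confirmed record
    VERIFIED = 3        # multi-party attested, anchored on-chain


@dataclass(frozen=True)
class Entry:
    content: str
    tier: Tier = Tier.WORKING


def promote(entry: Entry) -> Entry:
    """Return a copy of the entry one tier higher (assumed one step at a time)."""
    if entry.tier == Tier.VERIFIED:
        raise ValueError("entry is already in the highest tier")
    return Entry(entry.content, Tier(entry.tier + 1))


def leaves_node(entry: Entry) -> bool:
    """Only Working Memory stays local; every higher tier is shared in some form."""
    return entry.tier > Tier.WORKING


# Usage: a finding starts local and is promoted to shared staging.
note = Entry("lr=3e-4 beats lr=1e-3 on the small model")
staged = promote(note)
```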