Manav

1.7K posts

@manavslab

🌟Chief Experiment Officer @manavslab Hitting above the weight First Engineer @smallest_AI

Internet · Joined September 2018

1.2K Following · 206 Followers

Pinned Tweet

This week I landed in Bangalore after shutting down my company and moving into a new role. The last three years of running Atomalabs and @CrestXRHQ were a wild ride. Raw thoughts 👇

Manav retweeted

That's us! 🌍

The Artemis II crew captured beautiful, high-resolution images of our home planet during their journey to the Moon. As @Astro_Christina put it: "You guys look great."

Manav retweeted

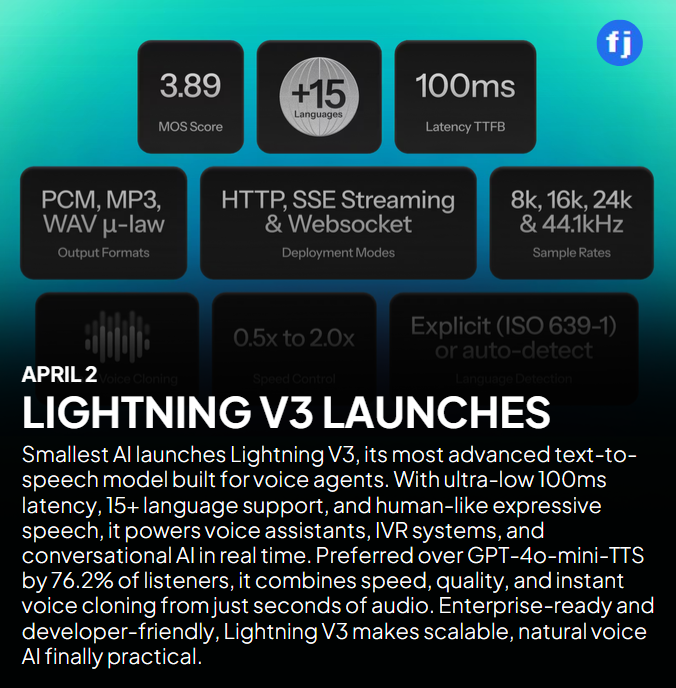

Lightning V3, our new SOTA TTS model, just launched on Product Hunt.

> 3.33 overall naturalness - beating ElevenLabs (3.2), Cartesia (3.25), OpenAI (3.14)

> ~76% win rate vs gpt-4o-mini-tts on naturalness

> 3.89 MOS - highest in conversational TTS

Play our audio against OpenAI's. Side by side. We'll wait.

Oh, and the Voice AI industry's TTS eval process is completely wrong.

Check out our Product Hunt page and our research. Link is in comments.

Manav retweeted

haha this is so fun!

Caleb @caleb_friesen

My heated convo with PB 🥜 a peanut butter concierge powered by TTS model Lightning V3.1

Manav retweeted

@smallest_AI launches Lightning V3 to redefine real-time conversational voice AI

Read more: buff.ly/smYsizc

#ArtificialIntelligence #ConversationalAI #TextToSpeech #VoiceAgents #VoiceAI

Manav retweeted

51% of people have abandoned a business entirely because of how the AI voice sounded. Lightning v3 covers 15 languages, 71% of the global population, and outperforms OpenAI on naturalness 76% of the time.

Let that sink in.

The entire voice industry has been solving the wrong problem - making voices that read text well instead of voices that can hold a conversation.

Those are two completely different things.

Reading text is clean. Predictable. Easy to benchmark. Conversation is messy. It has rhythm, hesitation, breath. Your pacing changes when you're thinking.

Most TTS models fall apart the moment you put them in a real back-and-forth. They sound great in a scripted demo and robotic on a live call.

We built Lightning v3 from scratch for the hard version of this problem.

It sounds like it's thinking. It switches between languages mid-sentence the way a real bilingual person does. It clones your voice from a 5-second clip across all 15 languages.

Want to try it? Link is in the comments.

Manav retweeted

Just built a murder mystery game using Lightning V3 + Pulse STT.

The model nailed the voice acting. Me solving the actual mystery? Not so much 😅

Either way — really enjoyed how it turned out!

@smallest_AI @kamath_sutra let's put this on our platform

Manav retweeted

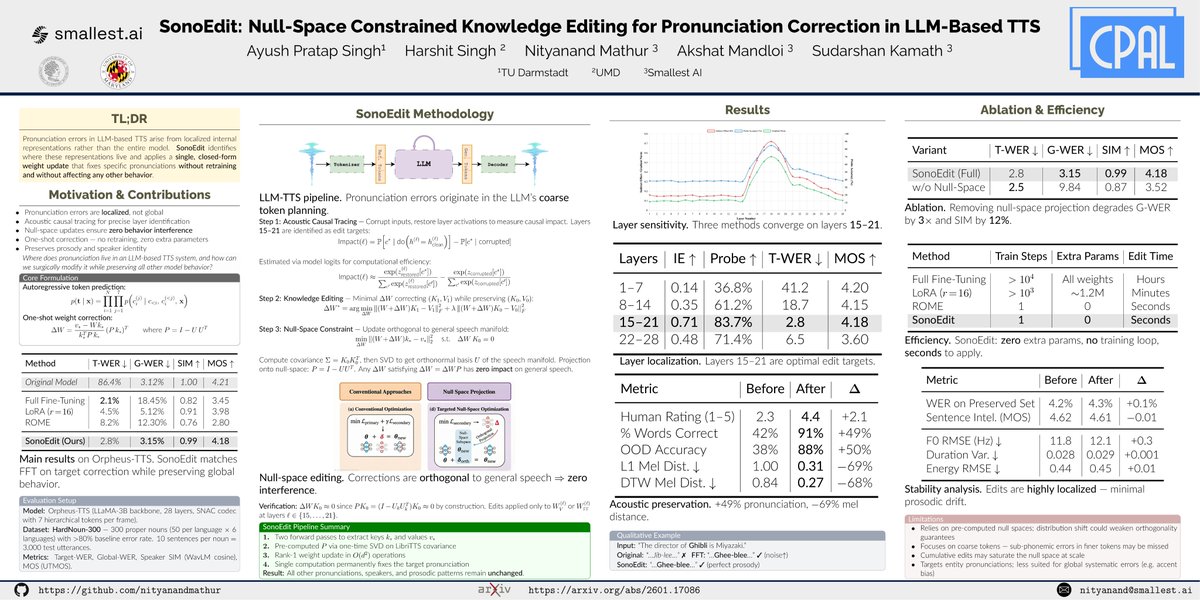

So much to learn always from the OGs 🔥🔥

Nityanand Mathur @nityanandmathur

Where does pronunciation live in a large language model (LLM)-based text-to-speech (TTS) system, and how can we surgically modify it for specific texts while preserving all other model behavior? To answer this very question, we introduced SonoEdit at @CPALconf yesterday. Our core hypothesis is that pronunciation errors aren’t global but live in localized internal representations. If you find them precisely, you can fix them precisely.

Manav retweeted

Introducing Lightning V3 - it beats every model we tested against.

ElevenLabs, Cartesia, OpenAI. Lightning sets a new SOTA with V3 in conversational text-to-speech.

→ Highest MOS score for conversational TTS at 3.9

→ ~76% win rate vs gpt-4o-mini-tts on naturalness

→ 15 languages with mid-sentence code-switching

→ Built from scratch for voice agents, not read-aloud

Every TTS model sounds clean in a demo. You type a sentence and you get beautiful audio. Voice agents don't work that way. They stream. They're generating audio in real-time chunks with half the context missing. That's where everything breaks.

A great reading voice and a great conversational voice are fundamentally different things. A conversational voice has to sound like it's thinking - with the pauses, the rhythm shifts, the reactions. It has to handle the way real people actually talk, including switching languages mid-sentence.

That's what V3 does.

V3.1 also ships voice cloning. 5 to 15 seconds of audio, no fine-tuning, production-grade clone across 15 languages.

Blog link in the comments.

Manav retweeted

Introducing Lightning V3 that beats every model we tested against - ElevenLabs, Cartesia, OpenAI - on LLM-as-judge evaluation for naturalness, intonation, and prosody. It’s state of the art in conversational text-to-speech.

We’ve achieved the highest MOS score across platforms for conversational TTS at 3.9.

~76% win rate vs gpt-4o-mini-tts. 15 languages with mid-sentence code-switching. Built from scratch for voice agents, not read-aloud.

Here's what we shipped, how we moved from a TTS that reads text well to one that can actually hold a conversation, and where evals fall short in measuring the difference. 🧵

Sudarshan Kamath @kamath_sutra

Manav retweeted

Keeps impressing - honestly, I can't believe how human it sounds!

Sudarshan Kamath @kamath_sutra

Manav retweeted

Last weekend was our first conference appearance in SF at the AI+ Renaissance Conference as the Title sponsor.

@kamath_sutra took the stage at the Voice AI panel, and we launched Hydra – our Async Thinking Multimodal LLM – live in front of the room.

This is the statement we opened with: “We are not close to passing the Turing test in voice. Not even for a single speaker, in a single language, in a single use case. And that's exactly the problem we're here to solve.”

The gap between AI voice agents and human conversation isn't subtle. Today's agents listen, then think, then respond. Humans do something fundamentally different – they think while listening, act while listening, and respond with contextual emotion. That's not a feature gap. That's an architectural gap. And offline LLMs can't be retrofitted to close it.

That's the conviction behind everything we build at smallest.ai. Small, real-time models – built from the ground up for async inference, partial context, and sub-500ms multimodal response – are the path to human-level voice intelligence. Not bigger models. Faster ones.

Hydra is our step in that direction: an async thinking Speech-to-Speech model that listens and reasons in parallel, with ~50ms latency. Paired with our Lightning TTS, Lightning ASR, and Electron SLM (which outperforms GPT-4.1 on realtime conversational tasks) – the full stack is finally coming together.

A massive thank you to Joshua and @lynn_aisv for building @Aiplus__ into the kind of event where everyone can have meaningful conversations, and learn from those around them.

And to @Sky9Capital and @Topify_AI for co-organizing the afterparty with us – 300+ signups speaks for itself. That kind of momentum doesn't happen without people who care about the ecosystem as much as the technology.

We're just getting started.

The question we left the room with: Attention is all you need, but attention on what?

I mass-produced a 3Blue1Brown-style explainer in 30 minutes.

The stack: Claude Code + Manim + @smallest_AI voices.

Educational content creation is about to get wild. Best time for self learners.

Manav retweeted