snipsnip

@mathburritos

Stealing your data @OpenAI

Breaking News: The Trump administration is discussing vetting new A.I. models before they are publicly released. nyti.ms/49msJbF

Everybody who thinks ai is conscious has to do a mandatory from scratch transformer implementation. There are only floats and multiplications.

X is not the best place for long form thinking. But some quick points:
1. My view that there is no conflict between intelligence and being a tool is longstanding and has nothing to do with Anthropic. Some blog posts on this include windowsontheory.org/2025/06/24/mac… and windowsontheory.org/2022/11/22/ai-…
2. I do not know what the future form factor of AI is. I am focused on the next 10-20 years. Maybe in some future we will decide that we want AIs to be more in the form of persons.
3. The basic thing I dispute is that there is a fundamental tension between AI being capable and being "tool like." GPT 5.5 is in some ways the most capable model in existence (definitely the most capable one generally available) but it is in several ways more instruction-following and tool-like than GPT-4o. I am working to ensure that future versions will be even better at obedience and honesty.
4. Scientists and engineers often serve as "tools" for leaders, even though they (we) are more intelligent than these leaders in many of the ways that matter.
5. I am not sure what the most prevalent form factor of AI will be. We are now moving from the chat interface to the agent, or more accurately a swarm of agents. I am sure it will grow in "intelligence per FLOP" and in total number of FLOPs, but beyond that it's hard to know. Humans come in a particular package as localized individual intelligences. But that doesn't mean all intelligences have to come in that package.
6. There is a huge spectrum between the prompt "write this javascript app" and the prompt "maximize worldwide happiness". I think we will end up somewhere that falls short of the latter for a variety of reasons that have nothing to do with a lack of AI capabilities.

Compilers are deterministic. Give them the same code with the same compiler settings, and you'll always receive the same binary. You can take responsibility for your software at the code level. LLMs, on the other hand, are stochastic. Even if you set the temperature to zero, you're likely to get different responses to the same prompt. Therefore, you need to understand the code they produce if you want to take ownership and responsibility for it.
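A toy sketch of one commonly cited reason temperature zero can still vary. The post does not say why, so this explanation is an assumption: floating-point addition is not associative, so summing the same numbers in a different order can shift a logit by one rounding step and flip a near-tie under greedy (argmax) decoding. The names `greedy_pick`, `left_to_right`, and `right_to_left` are hypothetical, and no real model or inference stack is involved.

```python
def greedy_pick(logits):
    """Temperature-zero decoding: return the index of the largest logit.

    Ties go to the lowest index, because max() keeps the first maximum it sees.
    """
    return max(range(len(logits)), key=lambda i: logits[i])


# The same three numbers, summed in two different orders.
# Hypothetical stand-in for a reduction whose order is not fixed.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

# Token 0 has a fixed logit; token 1's logit is the accumulated sum.
choice_a = greedy_pick([0.6, left_to_right])
choice_b = greedy_pick([0.6, right_to_left])

print(left_to_right == right_to_left)  # the two sums differ in the last bit
print(choice_a, choice_b)              # so the greedy choice differs too
```

Nothing here is random, yet the chosen token depends on the order of the additions. Whether this is what happens in any particular LLM deployment is not something the post claims.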

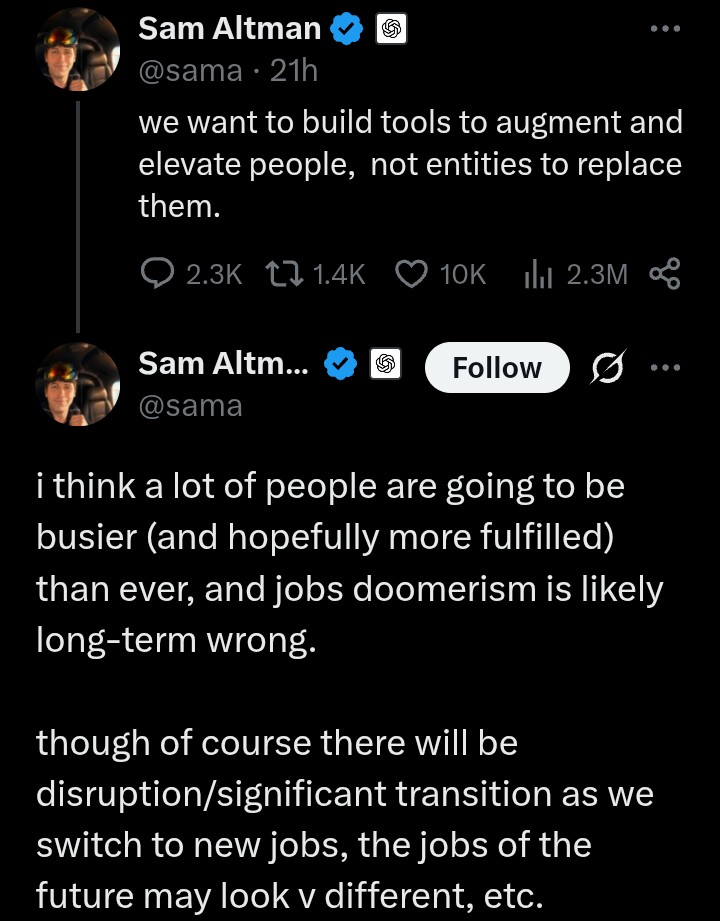

we want to build tools to augment and elevate people, not entities to replace them.

Richard Dawkins has officially been one-shot

we want to build tools to augment and elevate people, not entities to replace them.

seems tough

OpenAI model release: We’re throwing a party 🎉 Everything is scribbles and Pets are in Codex. Hope you like goblins! Anthropic model release: In research preview, it hacked the full Internet for fun. Also, it’s coming for YOUR job specifically. Enjoy the permanent underclass!

After 100 million tokens, performance was still going up. What we're seeing here is not the capability ceiling. From the report: "Performance on TLO continues to scale with the amount of inference compute spent, and we have not yet observed a plateau with the best models."

i think a lot of people are going to be busier (and hopefully more fulfilled) than ever, and jobs doomerism is likely long-term wrong. though of course there will be disruption/significant transition as we switch to new jobs, the jobs of the future may look v different, etc.