Santiago@svpino

We are one step closer to having AI generate code better than humans!

There's a new open-source, state-of-the-art code generation tool. It's a new approach that improves the performance of Large Language Models generating code.

The paper's authors call the process "AlphaCodium" and tested it on the CodeContests dataset, which contains around 10,000 competitive programming problems.

The results put AlphaCodium as the best approach to generate code we've seen. It beats DeepMind's AlphaCode and their new AlphaCode2 without needing to fine-tune a model!

I'm linking to the paper, the GitHub repository, and a blog post below, but let me give you a 10-second summary of how the process works:

Instead of using a single prompt to solve problems, AlphaCodium relies on an iterative process that repeatedly runs and fixes the generated code using the testing data.

1. The first step is to have the model reason about the problem. They describe it using bullet points and focus on the goal, inputs, outputs, rules, constraints, and any other relevant details.

2. Then, they make the model reason about the public tests and come up with an explanation of why the input leads to that particular output.

3. The model generates two to three potential solutions in text and ranks them in terms of correctness, simplicity, and robustness.

4. Then, it generates more diverse tests for the problem, covering cases not part of the original public tests.

5. Iteratively, pick a solution, generate the code, and run it on a few test cases. If the tests fail, improve the code and repeat the process until the code passes every test.

There's a lot more information in the paper and the blog post. Here are the links:

• Paper: arxiv.org/abs/2401.08500

• Blog: codium.ai/blog/alphacodi…

• Code: github.com/Codium-ai/Alph…

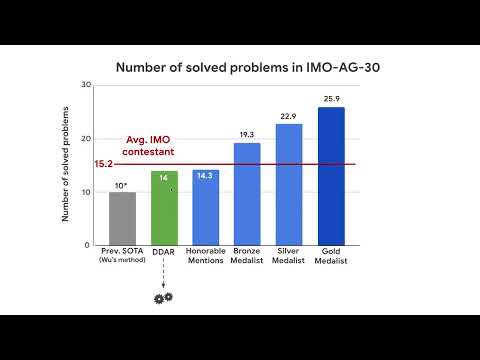

I attached an image comparing AlphaCodium with direct prompting using different models.

2024 has barely started, and we are making a ton of progress!