Nusratsathi


Core Pillars of @PrismaXai
• Robotics (Operate Robots): Robots perform real-world tasks and connect digital intelligence with physical environments.
• Data Generation (Generate Data): Robots produce valuable real-world data that is accurate and useful.
• Artificial Intelligence (Train Better AI): AI uses this data to learn, improve, and become more efficient over time.
• Blockchain Integration: Ensures security, transparency, and decentralized trust across the ecosystem.
• Authentic Contribution: Focus on real human effort and original content to maintain quality and fairness.
In simple words: Robots → Data → AI → Better ecosystem. @vivianrobotics

Teleoperation is often portrayed as a laborious obstacle, but this view ignores its real potential. The goal is not simply to put more robots in the hands of more people. The point is to turn every human correction into a permanent structural asset. If an operator's instructions are lost as soon as the task is completed, the system is memoryless. It gets the job done, but it cannot learn deeply from real-world experience. What matters is whether each intervention leaves something durable behind: clear traces of the decisions, the context, the quality of the movement, and the circumstances that determined the outcome.

And this is where @PrismaXai is intriguing to me. It brings teleoperation closer to a persistent coordination layer, where every action is recorded, evaluated, and fed back into the system as usable intelligence. Once this cycle is in place, human labor is no longer a temporary fix. It becomes a compounding source of operational knowledge that paves the way for improvements in training, workflows, and efficiency across the entire network.

Website: prismax.ai
Discord: discord.gg/prismaxai
X: @PrismaXai

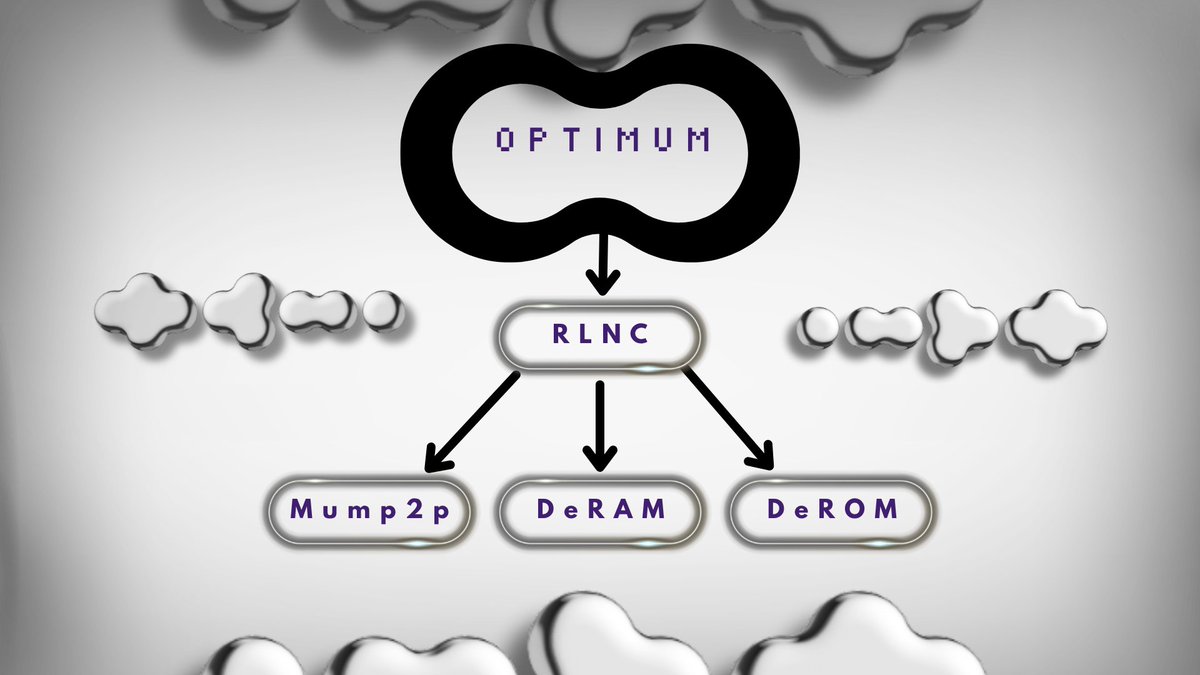

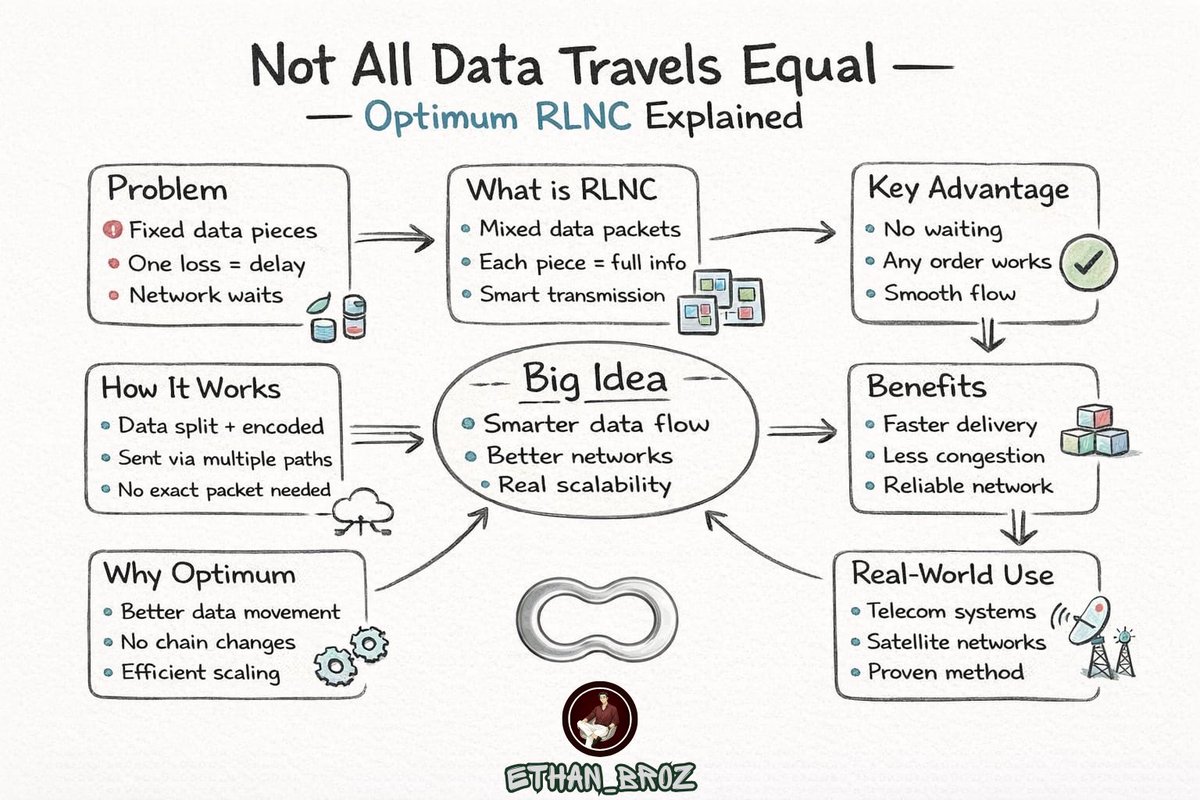

How mumP2P Works: The Core Engine Behind Optimum @get_optimum

Most people understand that Optimum is trying to improve how data works in Web3, but the real value comes from how it actually does it. That's where mumP2P comes in - the system designed to completely rethink how data moves between nodes.

Instead of sending full data directly from one node to another (which is slow and inefficient), mumP2P uses a smarter approach. It breaks data into smaller coded pieces, then sends those pieces across multiple paths in the network. These pieces are mixed and distributed in a way that allows the receiving node to quickly reconstruct the original data, even if some parts are delayed or missing.

This system is powered by Random Linear Network Coding (RLNC), where nodes don't just forward data - they actively optimize how it moves. In traditional networks, data follows fixed paths, and if one path slows down, everything gets delayed. But with mumP2P, data flows through multiple routes at once, removing bottlenecks and making the network more resilient.

What makes mumP2P different?
• Breaks data into smaller coded pieces
• Sends data through multiple paths at once
• Recovers data even if some parts are missing
• Removes single points of delay in the network

Because of this approach, the entire system becomes more efficient:
• Faster and more reliable data delivery
• Lower latency across nodes
• Better block propagation
• Improved synchronization between validators

mumP2P is not just about making things faster - it's about making data movement smarter. By fixing how data travels at the core level, Optimum is building a stronger foundation for Web3, where networks can scale more efficiently without changing their base structure.
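To make the "coded pieces" idea concrete, here is a minimal toy sketch of random linear network coding in Python. It is not Optimum's implementation: it assumes the simplest possible field, GF(2), where mixing pieces is just XOR, and every name in it (`split_chunks`, `encode`, `Decoder`, the 30% loss rate) is hypothetical and chosen only for illustration. Each packet carries a random combination of the original chunks, so the receiver does not care which packets arrive, only that it collects enough independent ones.

```python
import random

def split_chunks(data: bytes, k: int) -> list[bytes]:
    """Split data into k equal-size chunks, zero-padding the tail."""
    size = -(-len(data) // k)  # ceiling division
    padded = data.ljust(size * k, b"\x00")
    return [padded[i * size:(i + 1) * size] for i in range(k)]

def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def encode(chunks: list[bytes], rng: random.Random) -> tuple[int, bytes]:
    """Build one coded packet: (coefficient bitmask, XOR of the selected chunks)."""
    k = len(chunks)
    mask = rng.randrange(1, 1 << k)  # random non-zero coefficient vector over GF(2)
    payload = bytes(len(chunks[0]))
    for i in range(k):
        if mask >> i & 1:
            payload = xor_bytes(payload, chunks[i])
    return mask, payload

class Decoder:
    """Incremental Gaussian elimination over GF(2)."""

    def __init__(self, k: int):
        self.k = k
        self.rows = {}  # pivot bit -> (mask, payload), kept in reduced form

    def add(self, mask: int, payload: bytes) -> bool:
        """Absorb a packet; return True if it carried new information."""
        for pivot, (m, p) in self.rows.items():
            if mask >> pivot & 1:
                mask ^= m
                payload = xor_bytes(payload, p)
        if mask == 0:
            return False  # linearly dependent on packets already seen
        pivot = mask.bit_length() - 1
        # Clear the new pivot bit from the rows stored earlier.
        for q, (m, p) in list(self.rows.items()):
            if m >> pivot & 1:
                self.rows[q] = (m ^ mask, xor_bytes(p, payload))
        self.rows[pivot] = (mask, payload)
        return True

    def done(self) -> bool:
        return len(self.rows) == self.k

    def recover(self, length: int) -> bytes:
        """Once done() is True, row i holds exactly original chunk i."""
        return b"".join(self.rows[i][1] for i in range(self.k))[:length]

rng = random.Random(7)
message = b"block #1024: example payload for propagation"
chunks = split_chunks(message, 4)
decoder = Decoder(4)
sent = 0
for _ in range(1000):  # generous cap; a handful of packets is normally enough
    if decoder.done():
        break
    packet = encode(chunks, rng)
    sent += 1
    if rng.random() < 0.3:  # simulate a packet that is lost or badly delayed
        continue
    decoder.add(*packet)
recovered = decoder.recover(len(message))
```

The loss simulation is the key part: no specific packet is required, so a dropped piece is simply replaced by the next coded one. Real deployments typically use a larger field than GF(2) so that fewer packets turn out to be redundant.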

I submitted the Optimum Sticker Quest form last night. This one's all about creativity: memes, emojis, characters, even animations. If your design stands out, it could become an official Optimum sticker on Discord + you might get shoutouts and merch.

Rules:
• PNG/APNG
• 320×320
• Under 512KB

Deadline: April 17 (today is the last chance to submit)

Focus on quality > quantity. One great sticker beats many average ones. Good chance to get creative and actually be part of @get_optimum. Let's see who cooks the best. @blockchainjeff @shariaronchain
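If you want to sanity-check a file against the rules above before submitting, a rough sketch like this works with only the Python standard library. It is not an official checker: the function name `check_sticker` is made up, and it assumes "512KB" means 512 × 1024 bytes. It reads the width and height from the PNG header (APNG files use the same header).

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
MAX_BYTES = 512 * 1024  # assumption: KB is read as 1024 bytes

def check_sticker(data: bytes) -> list[str]:
    """Return a list of rule violations; an empty list means the file looks OK."""
    problems = []
    if len(data) >= MAX_BYTES:
        problems.append("file is not under 512KB")
    # A PNG/APNG starts with an 8-byte signature followed by the IHDR chunk.
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        problems.append("not a PNG/APNG file")
        return problems
    width, height = struct.unpack(">II", data[16:24])
    if (width, height) != (320, 320):
        problems.append(f"size is {width}x{height}, expected 320x320")
    return problems
```

Usage would be something like `check_sticker(open("sticker.png", "rb").read())`, where `sticker.png` is a placeholder for your own file.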