Pinned Tweet

Morph

418 posts

@morphllm

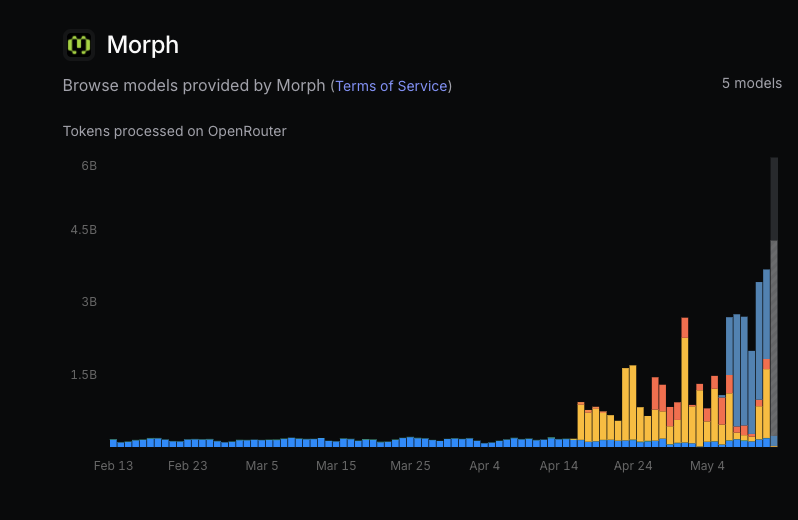

making coding agents better with specialized inference https://t.co/dBQjovGya3 Try WarpGrep: https://t.co/dXjJCKwINV

San Francisco, CA Joined May 2025

3 Following 3.2K Followers

Morph retweeted

the general applied standard intelligence compute company

Tejas Bhakta @tejasybhakta

morph is 2 people. we spend 10x more on gpus than salary. we’re hiring for the first sub-10-person billion-dollar company. join us

Morph retweeted

Join us in welcoming @morphllm Founder, @tejasybhakta to the AIE Miami lineup!

Don't miss his talk 'Everything is Models' next week on the big stage!

Get your tickets: ai.engineer/miami

Morph retweeted

warpgrep_github_search from @morphllm is probably the most unfathomably unfair advantage you can have right now. 10x better than grep app

Even beats Ctx7 tbh

docs.morphllm.com/sdk/components…

@ryanleecode @kunchenguid @dhruvbhatia0 is working on a fix for the latest CC version, but we recommend compacting at around 150k for best coding performance. for investigation, search, and chat-with-repo type uses, no need to compact until 1M

@kunchenguid @morphllm What are your thoughts on the 1M context window question if we use the morph compact plugin?

@tube_you86806 @FactoryAI oh wait, actually no it doesn't. @FactoryAI wanna expose a compaction hook?

@fastquant0 clerk outage with their bot protection feature.

@clerk please fix

Morph retweeted

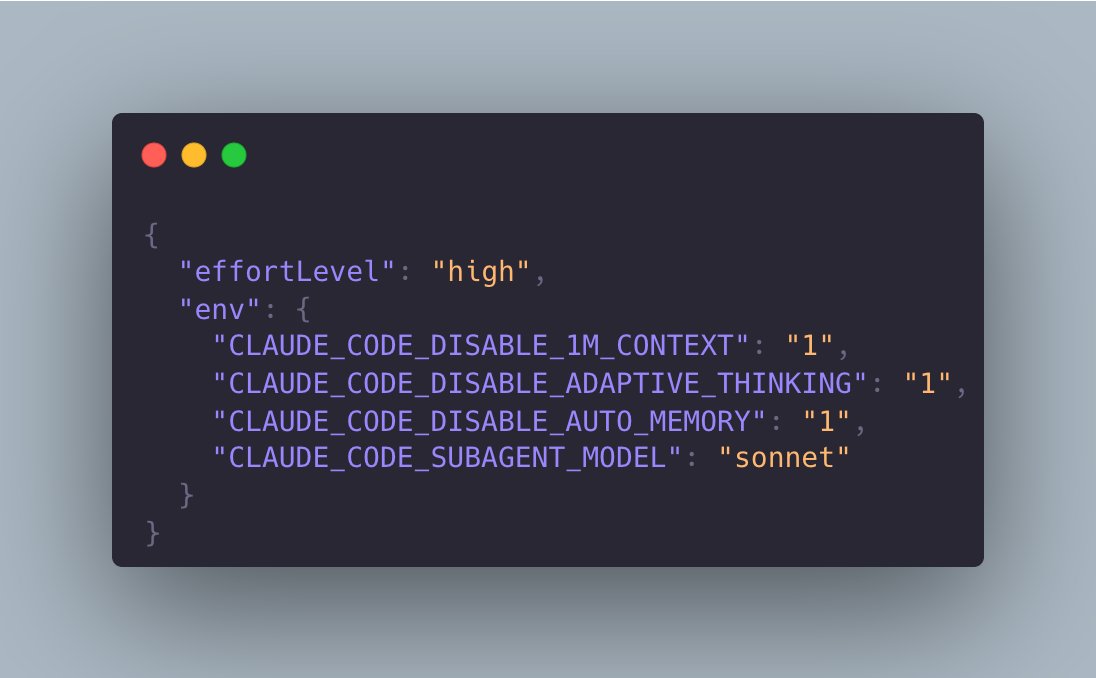

Agents don’t need bigger models. They need better tools.

Morph trains coding subagents.

Not for humans. For frontier models.

Fast Apply edits at 10,000 tokens/sec.

WarpGrep handles code and log search.

Both keep the main model’s context clean.

Because when context gets too large, performance drops.

Now Morph is pushing coding subagents even faster.

One newer model runs at 33,000 tokens/sec: docs.morphllm.com/sdk/components…

🎙️ @tejasybhakta, Founder & CEO, @morphllm on @fondocom @thestartpod w/ @davj

@ryanleecode opencode plugin github.com/morphllm/openc…

working on claude code still.

1M limit for context window. should be able to support 5M in a week or so

@jackjoliet you can specify that in the sdk if you use the messages input

docs.morphllm.com/sdk/components…

We just removed access control. Anyone can now try this from the API as well.

docs.morphllm.com/sdk/components…

Morph retweeted

Perfect compaction is a prerequisite for long-running agents.

It’s the difference between a country of geniuses

and a pile of clankers.

#unLobotomizeClaude

Morph @morphllm

Introducing FlashCompact - the first specialized model for context compaction. 33k tokens/sec. 200k → 50k in ~1.5s. Fast, high-quality compaction.

@scent_fetish_ Not in the mcp yet - just npm for now. For opencode we have a plugin github.com/morphllm/openc…

Those guys are doing it right.

@morphllm

Do we update MCPs, or do they just work server-side (NPM use, not NPX)?

Morph @morphllm

Introducing FlashCompact - the first specialized model for context compaction. 33k tokens/sec. 200k → 50k in ~1.5s. Fast, high-quality compaction.
