Matt Woods

@mv2woods

Builder | Additive Manufacturing | Bitcoin | EVs | XR | AI
Founder, Scrap Labs | Co-founder, Xact Metal | Founder, X Material Processing | Ex-SpaceXer

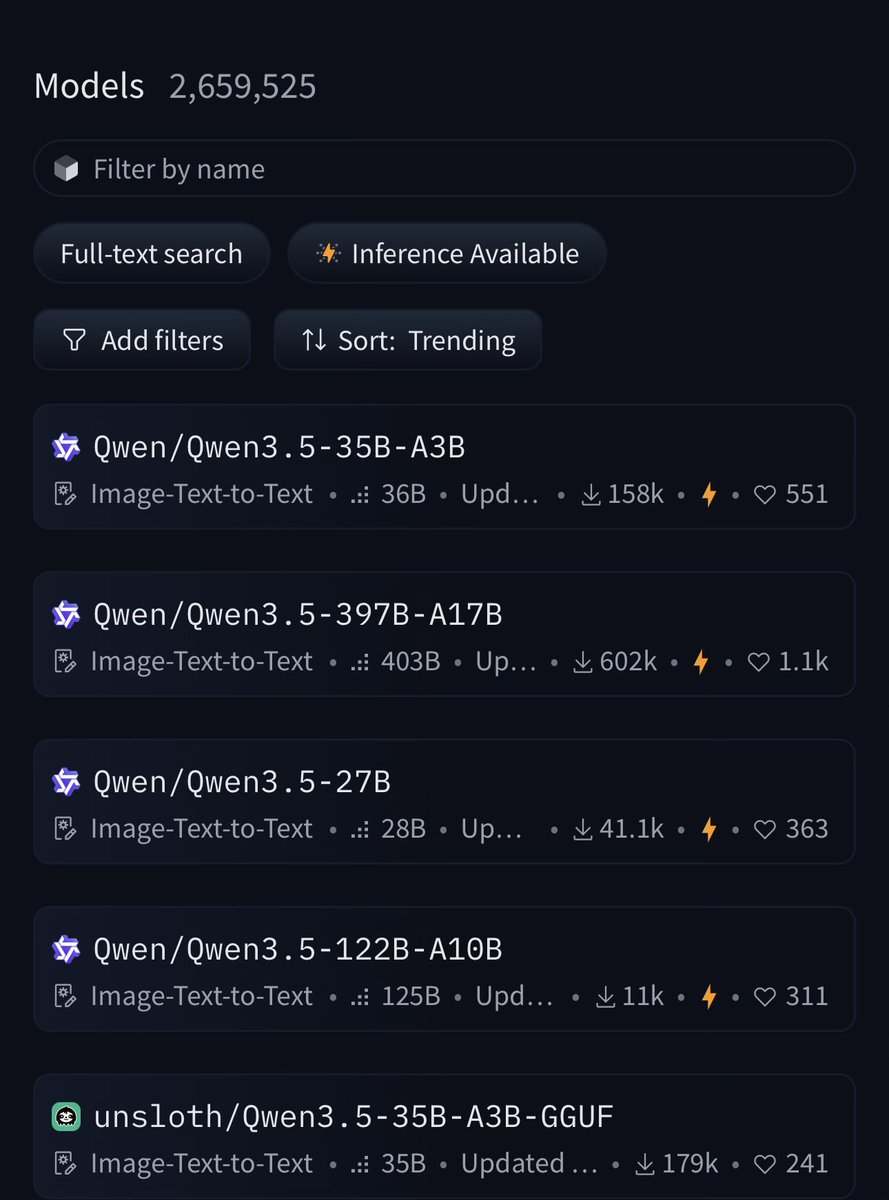

Best models to run on your hardware level. I'll be doing this every week, I hope you guys enjoy.

---- 8 GB ----

Autocomplete for coding (like Cursor Tab)
- huggingface.co/NexVeridian/ze…
- huggingface.co/bartowski/zed-…

Tool calling, assistant style
- huggingface.co/nvidia/NVIDIA-…

---- 16 GB ----

Here things get better:

Multimodal
- huggingface.co/Qwen/Qwen3.5-9B
- huggingface.co/Tesslate/OmniC…
- huggingface.co/unsloth/Qwen3.…

---- 24 GB ----

- The best model you can get (thanks Qwen): huggingface.co/Qwen/Qwen3.5-2…
- Great model (strong agents): huggingface.co/nvidia/Nemotro…
- Mine hehe: huggingface.co/0xSero/Qwen-3.…

I'm doing a weekly series