♡nat♡

21.1K posts

Finally built something I actually needed.

Introducing PinchBench, an open benchmark for testing LLMs inside real OpenClaw agent workflows.

Most benchmarks test isolated skills.

Answer a question. Solve math. Write code.

That doesn’t matter when your agent has to actually do things.

PinchBench runs models through 23 real tasks like:

• scheduling meetings

• writing + running scripts

• triaging email

• scraping data

• managing files

Not “can it answer”

But “can it complete the task end to end”

Did it actually send the email?

Create the file?

Finish the workflow?

Everything is graded automatically and pushed to a public leaderboard:

success rate, speed, and cost across 30+ models
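
For a rough idea of what a leaderboard like this boils down to, here is a minimal sketch of rolling per-task results up into success rate, speed, and cost per model. It is not PinchBench's actual eval logic: `TaskRun`, `summarize`, the model names, the task names, and all numbers are hypothetical placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class TaskRun:
    """One model attempting one task (hypothetical record shape)."""
    model: str
    task: str
    passed: bool      # did the automated check confirm the end state?
    seconds: float    # wall-clock time for the attempt
    cost_usd: float   # API or compute cost for the attempt


def summarize(runs):
    """Aggregate runs into one leaderboard row per model."""
    by_model = defaultdict(list)
    for run in runs:
        by_model[run.model].append(run)

    rows = []
    for model, model_runs in by_model.items():
        n = len(model_runs)
        rows.append({
            "model": model,
            "success_rate": sum(r.passed for r in model_runs) / n,
            "avg_seconds": sum(r.seconds for r in model_runs) / n,
            "total_cost_usd": sum(r.cost_usd for r in model_runs),
        })

    # Rank by success rate first, then by lower total cost.
    rows.sort(key=lambda row: (-row["success_rate"], row["total_cost_usd"]))
    return rows


# Made-up sample data, only to show the shape of the output.
runs = [
    TaskRun("model-a", "create_file", True, 12.0, 0.02),
    TaskRun("model-a", "send_email", False, 30.0, 0.04),
    TaskRun("model-b", "create_file", True, 20.0, 0.01),
    TaskRun("model-b", "send_email", True, 40.0, 0.03),
]
leaderboard = summarize(runs)
```

The ranking rule (success rate, then cost) is an assumption made for the sketch; the real leaderboard may weigh the three metrics differently.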

You can plug in your own model, run it locally, and see how it stacks up.

Fully open source.

All tasks and eval logic are public.

This is how you actually measure agents.

github.com/agenticbuilder…


♡nat♡ reposted

I’m giving an agent control over Reachy Mini from @huggingface and letting it understand and share spatial data via @Spectacles

AR is the human interface for robotics and physical AI imo.

It feels like absolute magic to interact with this, both in voice/agent and “puppeteering” mode.

I’ll probably work on AR for either an arm (manipulation tasks) or some sort of drone (locomotion in 3D space) next…

Project is fully open source btw: github.com/V4C38/spectacl…

Thank you @SensAIHackademy for sending me the robot!


♡nat♡ reposted

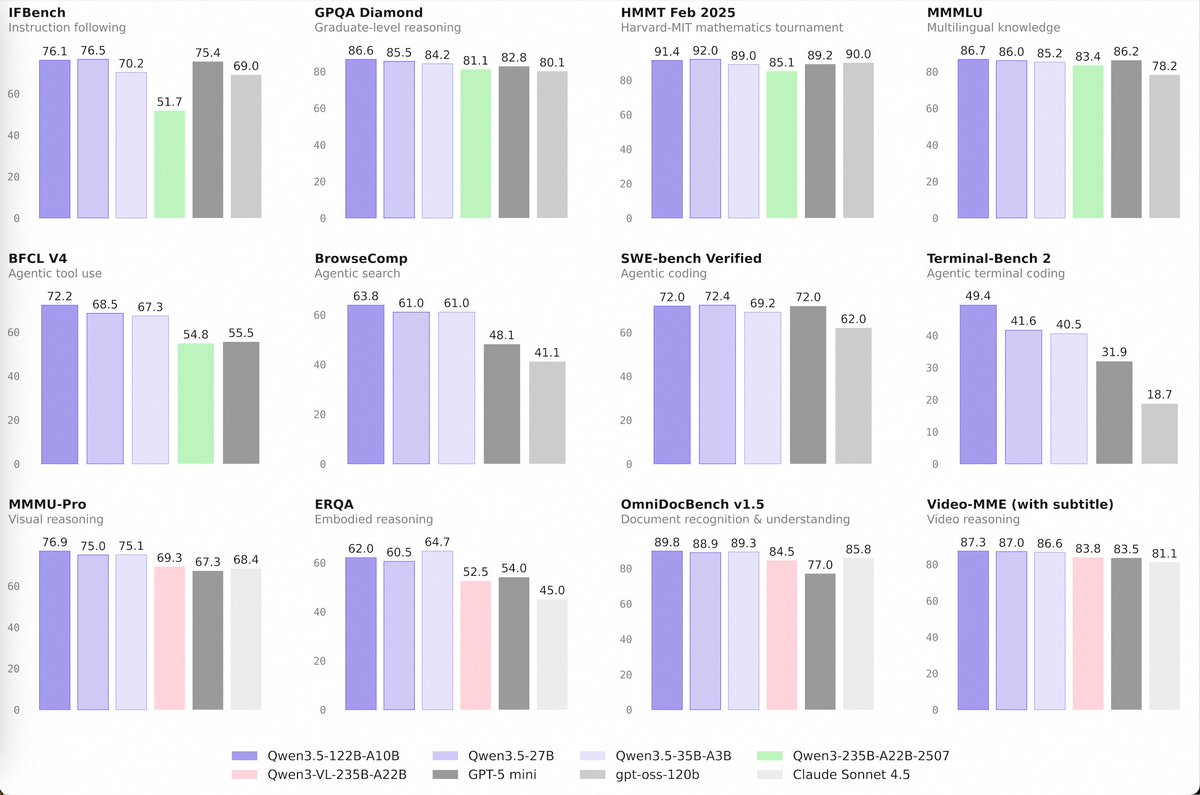

🚀 Introducing the Qwen 3.5 Medium Model Series

Qwen3.5-Flash · Qwen3.5-35B-A3B · Qwen3.5-122B-A10B · Qwen3.5-27B

✨ More intelligence, less compute.

• Qwen3.5-35B-A3B now surpasses Qwen3-235B-A22B-2507 and Qwen3-VL-235B-A22B — a reminder that better architecture, data quality, and RL can move intelligence forward, not just bigger parameter counts.

• Qwen3.5-122B-A10B and 27B continue narrowing the gap between medium-sized and frontier models — especially in more complex agent scenarios.

• Qwen3.5-Flash is the hosted production version aligned with 35B-A3B, featuring:

– 1M context length by default

– Official built-in tools

🔗 Hugging Face: huggingface.co/collections/Qw…

🔗 ModelScope: modelscope.cn/collections/Qw…

🔗 Qwen3.5-Flash API: modelstudio.console.alibabacloud.com/ap-southeast-1…

Try in Qwen Chat 👇

Flash: chat.qwen.ai/?models=qwen3.…

27B: chat.qwen.ai/?models=qwen3.…

35B-A3B: chat.qwen.ai/?models=qwen3.…

122B-A10B: chat.qwen.ai/?models=qwen3.…

Would love to hear what you build with it.


♡nat♡ reposted

TranslateGemma 4B by @GoogleDeepMind now runs 100% in your browser on WebGPU with Transformers.js v4.

55 languages. No server. No data leaks. Works offline.

A 4B parameter translation powerhouse, right in your browser.

Try the demo 👇


Been gradually building out my "Clawscan" dashboard to stay on top of OpenClaw coding sub agents, my team of agents, etc.

The best feature is being able to attach to things like Copilot CLI and see what's going on there as it runs in the background.

github.com/renzdevs/claws…


♡nat♡ reposted

How can businesses go beyond using AI for incremental efficiency gains to create transformative impact? I write from the World Economic Forum (WEF) in Davos, Switzerland, where I’ve been speaking with many CEOs about how to use AI for growth. A recurring theme is that running many experimental, bottom-up AI projects — letting a thousand flowers bloom — has failed to lead to significant payoffs. Instead, bigger gains require workflow redesign: taking a broader, perhaps top-down view of the multiple steps in a process and changing how they work together from end to end.

Consider a bank issuing loans. The workflow consists of several discrete stages:

Marketing -> Application -> Preliminary Approval -> Final Review -> Execution

Suppose each step used to be manual. Preliminary Approval used to require an hour-long human review, but a new agentic system can do this automatically in 10 minutes. Swapping human review for AI review — but keeping everything else the same — gives a minor efficiency gain but isn’t transformative.
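
To put rough numbers on why the swap alone is a minor gain, here is a back-of-the-envelope sketch. Only the Preliminary Approval figures (60 minutes down to 10) come from the text above; the elapsed times for the other stages are invented placeholders chosen to add up to roughly a week, and the applicant's clock is assumed to start at Application.

```python
MINUTES_PER_DAY = 24 * 60

# Elapsed minutes per stage as the applicant experiences them.
before = {
    "Application": 2 * MINUTES_PER_DAY,    # hypothetical placeholder
    "Preliminary Approval": 60,            # from the text: hour-long human review
    "Final Review": 4 * MINUTES_PER_DAY,   # hypothetical placeholder
    "Execution": 1 * MINUTES_PER_DAY,      # hypothetical placeholder
}

# Swap in the agentic system for one step and keep everything else the same.
after = dict(before)
after["Preliminary Approval"] = 10         # from the text: 10 minutes


def total_minutes(stages):
    """End-to-end elapsed time across all stages."""
    return sum(stages.values())


saved = total_minutes(before) - total_minutes(after)
share_saved = saved / total_minutes(before)

print(f"Minutes saved: {saved}")
print(f"Share of end-to-end time saved: {share_saved:.2%}")
```

Under these assumed numbers the swap saves 50 minutes out of about a week, or roughly 0.5% of the end-to-end time, which is why the rest of the workflow has to change before the applicant notices a difference.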

Here’s what would be transformative: Instead of applicants waiting a week for a human to review their application, they can get a decision in 10 minutes. When that happens, the loan becomes a more compelling product, and that better customer experience allows lenders to attract more applications and ultimately issue more loans.

However, making this change requires taking a broader business or product perspective, not just a technology perspective. Further, it changes the workflow of loan processing. Switching to offering a “10-minute loan” product would require changing how it is marketed. Applications would need to be digitized and routed more efficiently, and final review and execution would need to be redesigned to handle a larger volume.

Even though AI is applied only to one step, Preliminary Approval, we end up implementing not just a point solution but a broader workflow redesign that transforms the product offering.

At AI Aspire (an advisory firm I co-lead), here’s what we see: Bottom-up innovation matters because the people closest to problems often see solutions first. But scaling such ideas to create transformative impact often requires seeing how AI can transform entire workflows end to end, not just individual steps, and this is where top-down strategic direction and innovation can help.

This year's WEF meeting, as in previous years, has been an energizing event. Among technologists, frequent topics of discussion include Agentic AI (when I coined this term, I was not expecting to see it plastered on billboards and buildings!), Sovereign AI (how nations can control their own access to AI), Talent (the challenging job market for recent graduates, and how to upskill nations), and data-center infrastructure (how to address bottlenecks in energy, talent, GPU chips, and memory). I will address some of these topics in future posts.

Against the backdrop of geopolitical uncertainty, I hope all of us in AI will keep building bridges that connect nations, sharing through open source, and building to benefit all nations and all people.

[Original text: deeplearning.ai/the-batch/issu… ]


♡nat♡ reposted

So our 45-person team developed entirely new AI capabilities, enabling them to:

🎨 Fine-tune custom Veo and Imagen models on their paintings and artwork

📹 Provide a desired look through rough animations, which the models transformed into stylized videos

🎭 Edit specific regions without regenerating entire shots from scratch


♡nat♡ reposted

Our short film Dear Upstairs Neighbors is previewing at @sundancefest. 🎬

It’s a story about noisy neighbors, but behind the scenes, it’s about solving a huge challenge in generative AI: control.

Developed by Pixar alumni, an Academy Award winner, researchers, and engineers, here’s how it came together. 🎨


♡nat♡ reposted

@thejessezhang @AshwinSreenivas @DecagonAI @christinacaci @vanta @HeggieConnor is turning data into intelligent go-to-market systems with @unifygtm.


♡nat♡ reposted

Tomorrow we’re hosting a town hall for AI builders at OpenAI. We want feedback as we start building a new generation of tools.

This is an experiment and a first pass at a new format — we’ll livestream the discussion on YouTube at 4 pm PT.

Reply here with questions and we’ll answer as many as we can!


♡nat♡ reposted

We’re rolling out age prediction on ChatGPT to help determine when an account likely belongs to someone under 18, so we can apply the right experience and safeguards for teens.

Adults who are incorrectly placed in the teen experience can confirm their age in Settings > Account.

Rolling out globally now. EU to follow in the coming weeks.

openai.com/index/our-appr…


♡nat♡ reposted

The cryptography team at MakeInfinite Labs pushed some new performance upgrades to the @spaceandtime Proof of SQL repo this week.

The protocol can now prove queries against 1 million rows of data in less than a second.

We are grateful to build with @NVIDIA and the Space and Time ecosystem to accelerate ZK proofs together. 🤝

→ View the updated benchmarks: github.com/spaceandtimefd…
