Jordan MacLeod

@newcurrency

Author of New Currency: How Money Changes The World As We Know It. Automaticity in monetary policy: achieving Friedman's Optimum without deflation. Sculptor 🌱

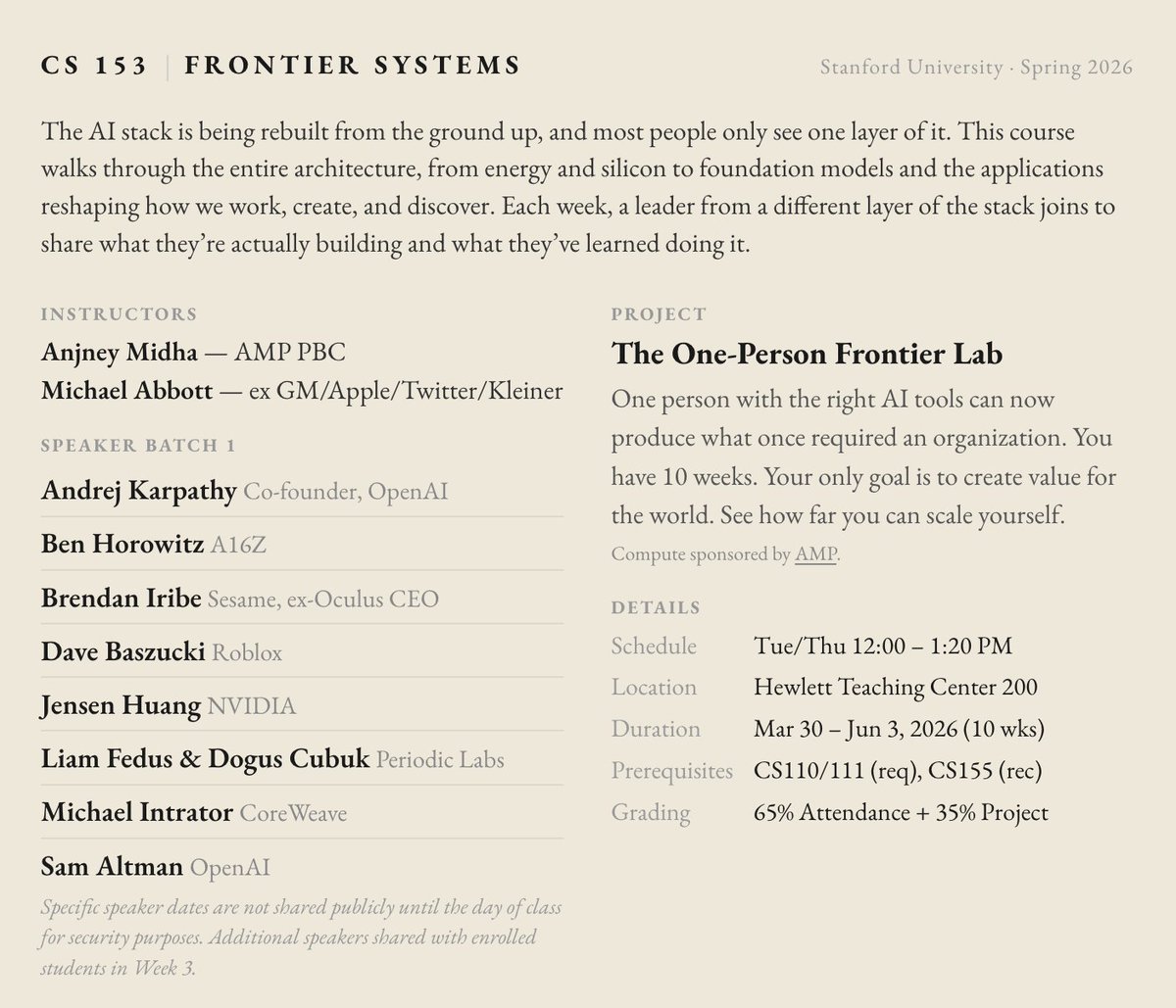

So @mabb0tt and I are once again volunteering to teach cs153.stanford.edu. There are so many new frontiers to be pioneered. Thank you to our speakers like @karpathy @bhorowitz @brendaniribe @DavidBaszucki @LiamFedus @ekindogus @sama for investing in the next generation.

The future is electromagnetic. One challenge is that there are ~ten people in the world who can deeply intuit electromagnetism. RF engineering is "black magic." Arena Physica thinks machines can intuit EM better. CEO Pratap Ranade & I on AI for EM: notboring.co/p/electromagne…

I had dinner once with a top physicist and a top computer scientist and asked what they thought the probability was that we were in a simulation. They answered simultaneously: 0% and 100%, respectively. It was like a double-slit experiment, but with humans.

Scientists discuss whether AI could surpass human contributions to physics by 2035 physicsworld.com/a/is-vibe-phys…