Nathan Smith


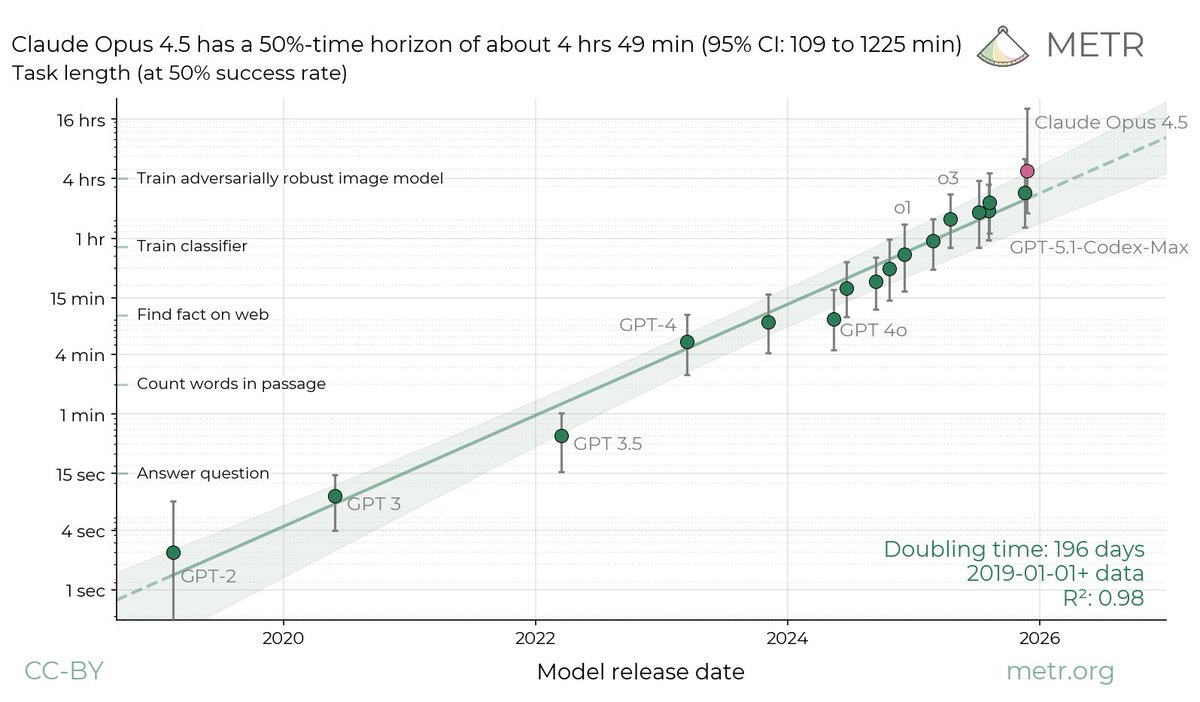

I might get some pushback for this, but I honestly think a lot of parents, especially in places like Silicon Valley and especially many Asian parents, are training their kids for the wrong world. I see 7- and 8-year-olds packed with after-school math, extra reading, and test prep, all with the goal of making them “smarter.” But from my perspective, living deep in the AI world every single day, I’m pretty sure raw intelligence is about to become a commodity. Very soon, AI is going to do math better than the best mathematicians, diagnose better than the world’s top doctors, draft contracts better than elite lawyers, and learn faster than any PhD, instantly, endlessly, and without fatigue. All of that knowledge will live right in your pocket.

So think about it… if we’re raising kids to win by being “the smartest in the room,” we’re really training them for something that’s already being replaced. In my opinion, that’s a waste of time, money, and effort.

What I focus on with my kids is very different. I care about willpower. I care about passion. I care about loving something enough to stick with it, especially when it feels hard. As a dad, my job is to support that, whatever it is, and to teach them to never give up.

I could be totally wrong though… But when I look at where AI is headed, I don’t think the future belongs to the kid who memorized the most formulas or did the most math problems. In the future, I think the winners are going to be kids who:
1/ can push through frustration
2/ can stay curious
3/ can go deeper into their passions than others
4/ can use AI tools to build cool things
5/ have the willpower to never give up

In this day and age, school doesn’t really teach this, and I don’t think after-school classes do either. Honestly, I don’t think any of it can be taught at school; it’s developed inside the home, through the environment we as parents cultivate.
In a world where AI will help you build anything, create anything, and learn anything instantly, I don’t think the real edge will be intelligence the way it was in the past. The edge will come down to grit, discipline, emotional strength, and the ability to keep going when others quit. AI will be deeply woven into our kids’ lives whether we like it or not. That part is unavoidable. What is avoidable is raising kids who only know how to follow instructions, chase grades, and wait for approval. I always tell my kids: I don’t care what grade you get on a test. I care that you know what you got wrong, why you got it wrong, and what you’re doing to avoid that mistake in the future. I firmly believe that the kids who will thrive most in the future will be the ones who want something badly enough to go after it, who aren’t afraid to fail, and who know how to leverage AI. Just my two cents. But if we’re serious about the future, I think it’s time parents started training their kids for that world, NOT the one we grew up in.

Tinker is now generally available. We also added support for advanced vision input models, Kimi K2 Thinking, and a simpler way to sample from models. thinkingmachines.ai/blog/tinker-ge…

Daily abuse of authority by the federal judiciary, acting in stark contrast to the will of the people, is SEVERELY eroding its credibility in the eyes of the public. Once gone, it is never coming back. Abuse of judicial authority MUST STOP!

@SecDef’s response to the @TheAtlantic article: “You’re talking about a deceitful and highly discredited ‘so-called journalist’

Intelligence isn't just capability; it's efficiency. We can no longer report performance as a single metric. Going forward, our leaderboard will track the *cost* of performance as a first-class citizen. Compared with ARC-AGI-1, ARC-AGI-2 is showing materially more resistance to reasoning models. It is unknown what scale, if any, would reach human performance.