Nick

316 posts

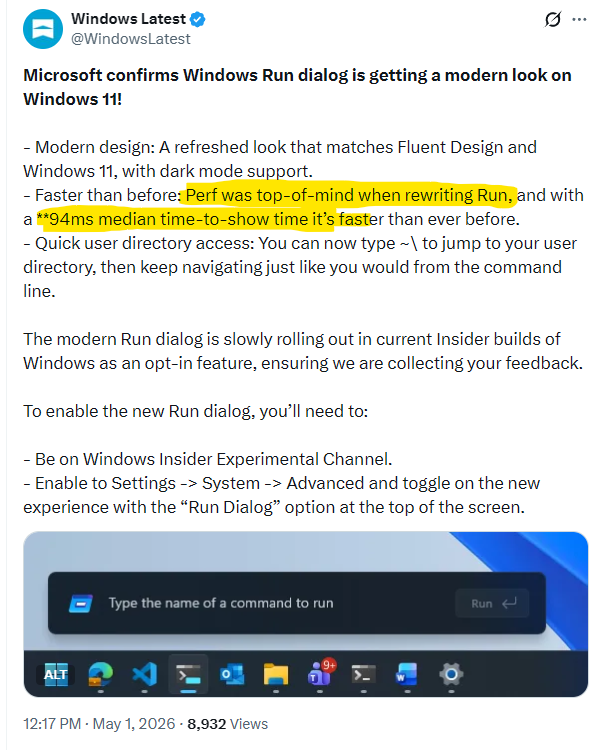

Everyone is taking issue with the conflation of two perf metrics. I think the problem is that people read "optimizing for perf" as "making it much faster", but I think Microsoft’s point is that they’ve managed to improve the Run prompt latency (which nobody had an issue with) despite adding more functionality (Command Palette) and redesigning it. So they optimized for the perf of the added feature set.

You know how you can render a 10,000-line diff without melting the browser? By focusing on simplicity. 🧵

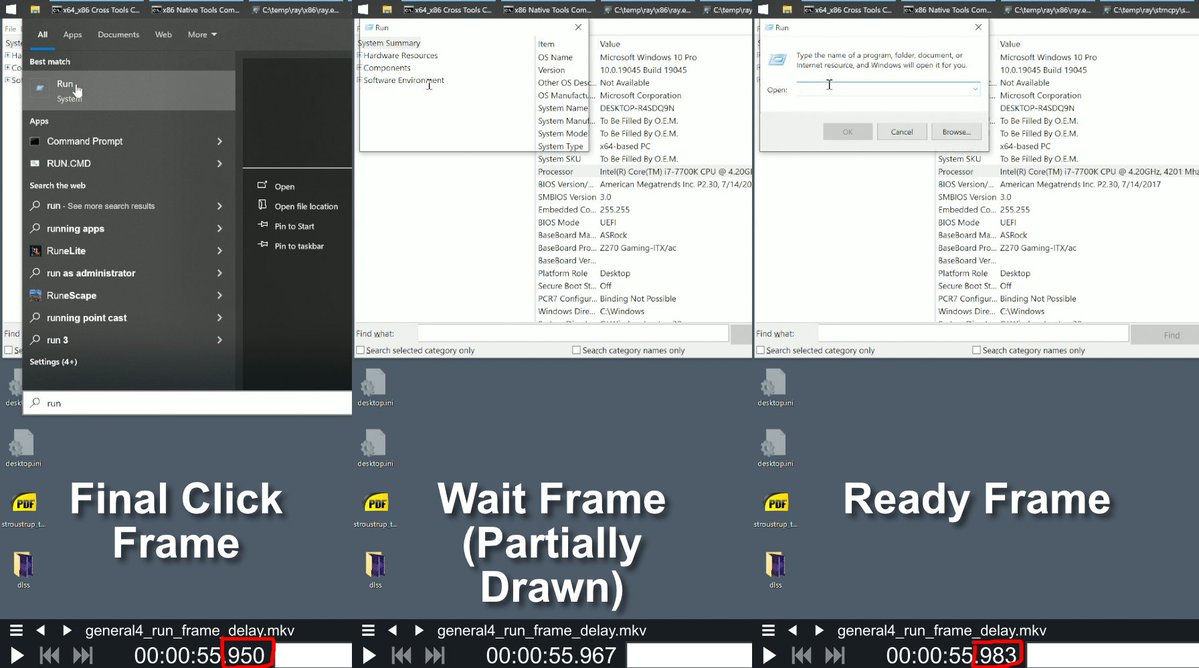

Just want to make sure I'm reading this right: Microsoft rewrote the run dialog with performance "top-of-mind", and the best they could manage to do when putting up a single text box was 10fps?

Ghostty is leaving GitHub. I'm GitHub user 1299, joined Feb 2008. I've visited GitHub almost every single day for over 18 years. It's never been a question for me where I'd put my projects: always GitHub. I'm super sad to say this, but it's time to go. mitchellh.com/writing/ghostt…

@htmx_org THERE'S ANOTHER

Programming in 2026 is literally just sitting in a dark room and gaslighting Claude into fixing its own code hallucinations. We went from copy-pasting StackOverflow answers to acting like a deeply disappointed manager for a neural network. The entire tech industry is basically just AI babysitters now.