Niladri Dutt

@niladridutt

ML PhD @ucl | Ex @adobe @nvidia | @ELLISforEurope | Interested in 3D generative modelling

🚀 New paper: arxiv.org/abs/2602.13191 VideoLMs are bottlenecked by a simple problem: they treat video like a stack of images. That means huge token costs, slow responses, and missed temporal details. What if we processed video the way codecs do? 🎬 Instead of dense per-frame RGB embeddings, we tokenize motion vectors + residuals and only encode sparse keyframes — turning video redundancy into a powerful inductive bias for efficient temporal reasoning. 🧵👇

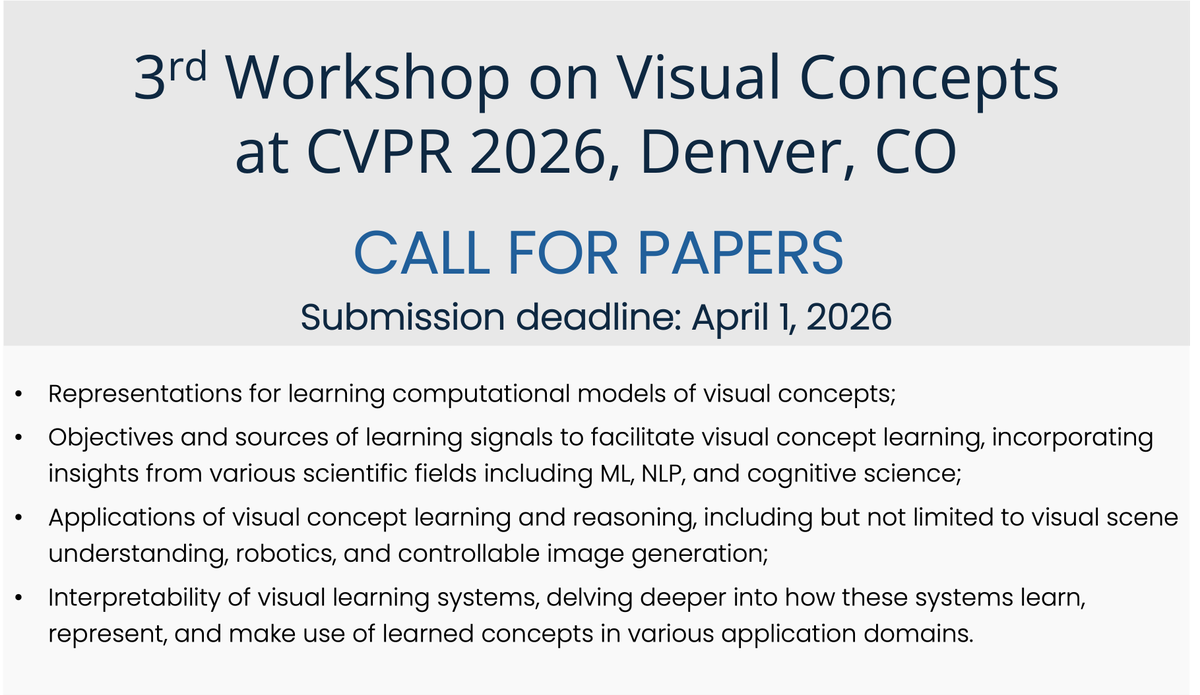

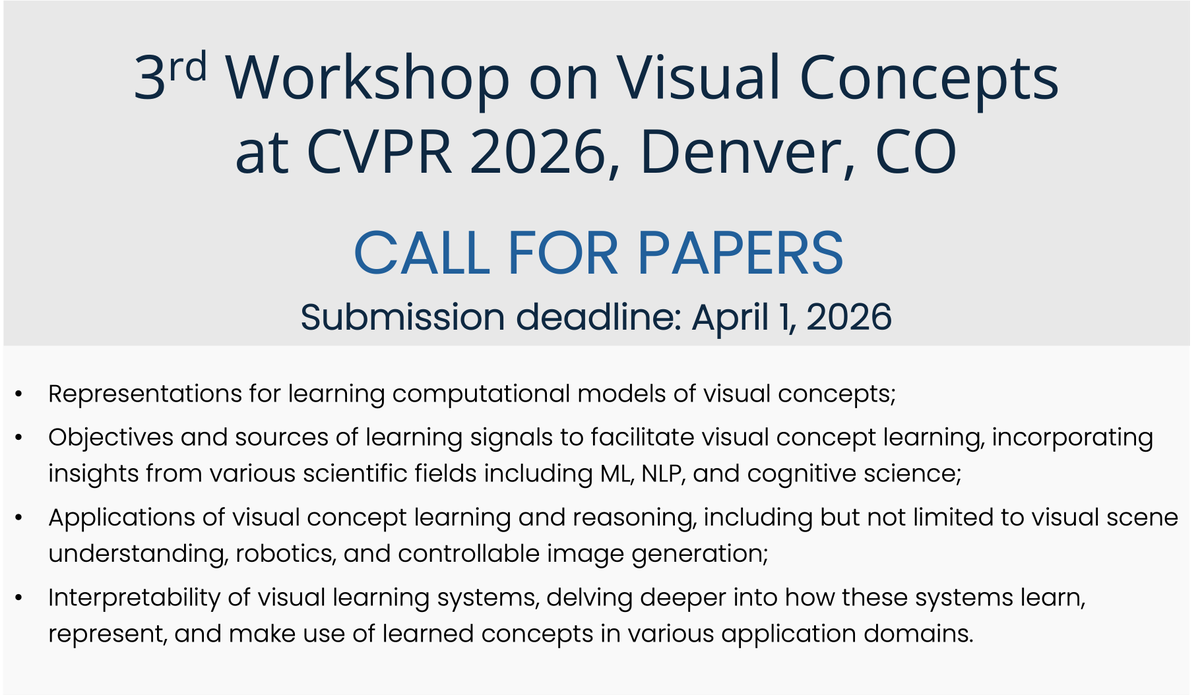

🚨 Current data curation produces static datasets and relies on model-based filters that induce many biases. Can we fix this? We propose ✨CABS✨, a flexible concept-aware online batch curation method that improves CLIP pretraining! arxiv.org/abs/2511.20643 🧵👇

🧵1/10 Excited to share our @siggraph paper "MonetGPT: Solving Puzzles Enhances MLLMs' Image Retouching Skills" 🌟 We explore how to make MLLMs operation-aware by solving visual puzzles and propose a procedural framework for image retouching #SIGGRAPH #MLLM