"Work on problems nobody else is working on, especially if you’re uniquely capable of solving them." @david_perell summing up worldview of @peterthiel

Stanley Yuan 🔋

3.2K posts

@nonlineargrowth

Serial entrepreneur w 1x exit (acquired by co w/ 250m users). Technology investor. Mathematician by training, humanitarian at heart. Ex @GoldmanSachs @Columbia

@pbeisel Probably more like 160k wafers/month, factoring in yield

Terafab may be the most essential vertical integration Tesla has ever undertaken, and it is truly non-optional. It will take years to build and will test even Elon’s speedrunning abilities to the limit, but that won’t stop him from trying.

The breakthrough likely lies in overhauling the overall facility’s cleanroom model. By moving wafers in sealed pods with localized micro-environments, the fab no longer needs a monolithic ultra-clean space. Elon’s line about “eating cheeseburgers and smoking cigars” on the fab floor isn’t silly, it’s the practical reality of a radically simpler, cheaper, faster approach that could finally change the economics of chipmaking.

This is all forced by the brutal “pinch” in chip supply. Tesla must produce on the order of 100–200 billion AI chips per year just to saturate its roadmap. That volume powers:

- FSD cars & Robotaxis (tens of millions of vehicles needing AI5 inference for near-perfect autonomy)
- Physical Optimus (scaling from thousands today to millions per year, each requiring AI5/AI6-level compute)
- Digital Optimus (the new xAI-Tesla software agents for digital/office automation, running massive inference clusters)
- Space-based data centers (AI7/Dojo3 orbital compute for GW-scale training and inference beyond Earth limits)

AI5 delivers the ~10× leap for vehicles and early robots; AI6 shifts focus to Optimus + terrestrial DCs; AI7 goes orbital.

No external foundry (TSMC, Samsung, etc.) can deliver that scale or timeline, hence the Terafab launch. Without it, the entire robotics + autonomy future hits a brick wall. Terafab isn’t optional; it’s the only way forward.

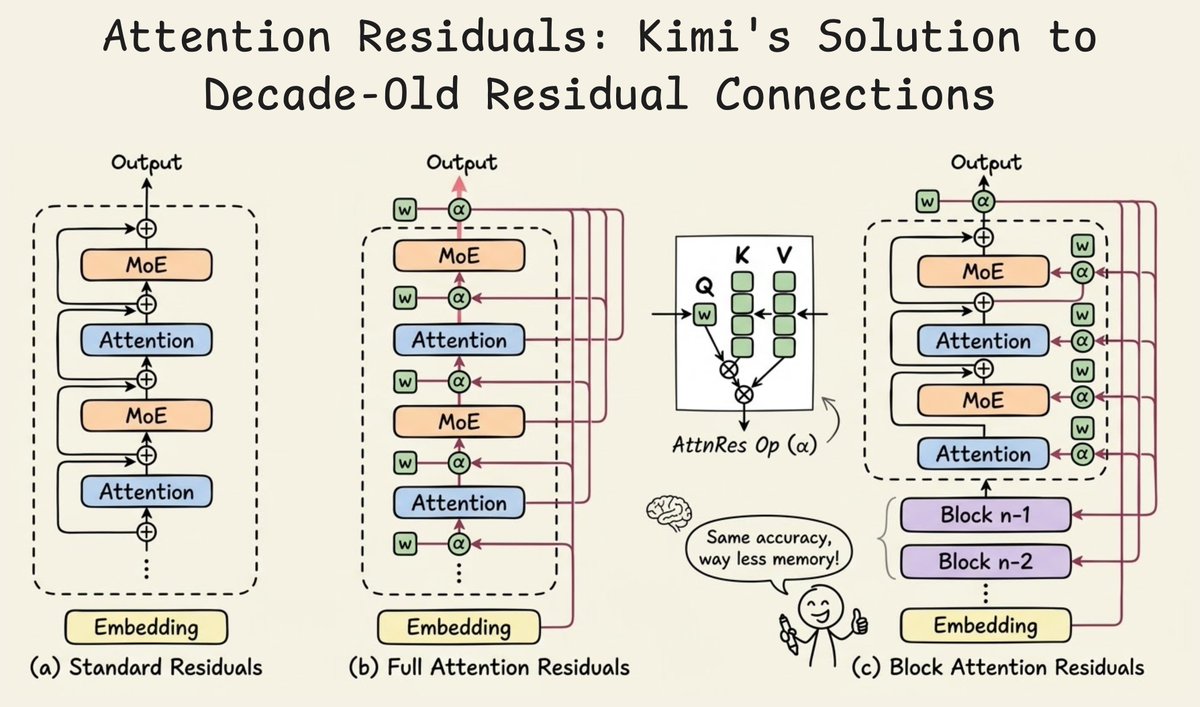

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation.

Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.

🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.

🔗 Full report: github.com/MoonshotAI/Att…
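
To make the core idea in the post above concrete, here is a rough Python sketch contrasting uniform residual accumulation with an attention-weighted mix over preceding layer outputs. It is not the implementation from the report: the function names, the use of one learned query vector per layer, and the choice of the layer outputs themselves as keys are all assumptions made for illustration. Block AttnRes is not covered.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(layer_outputs):
    """Baseline: fixed, uniform accumulation (plain sum of earlier outputs)."""
    dim = len(layer_outputs[0])
    return [sum(h[j] for h in layer_outputs) for j in range(dim)]


def attention_residual(layer_outputs, query):
    """Attention-weighted mix of earlier layer outputs.

    Assumption: `query` stands in for a learned per-layer vector, and each
    earlier output doubles as its own key. The weights depend on the input
    because the keys (the layer outputs) depend on the input.
    """
    dim = len(query)
    scores = [
        sum(q * x for q, x in zip(query, h)) / math.sqrt(dim)
        for h in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * h[j] for w, h in zip(weights, layer_outputs))
        for j in range(dim)
    ]
    return mixed, weights


# Hypothetical outputs of three preceding layers (dimension 2).
outputs = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
mixed, weights = attention_residual(outputs, query=[1.0, 0.0])
print("uniform sum:", uniform_residual(outputs))
print("attention weights:", weights)
print("attention mix:", mixed)
```

Because the weights sum to 1, the mix stays on the scale of a single layer output instead of growing with depth, which is one way to read the post's point about dilution and hidden-state growth. An all-zero query reduces the mix to a plain average of the earlier outputs.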

Oh and it works in all AI4-equipped cars, so your car can do office work for you when not driving. We’re also deploying millions of dedicated Digital Optimus units in the field at Superchargers where we have ~7 gigawatts of available power.