Paria Rashidinejad

70 posts

@paria_rd

Assistant Professor @USC; Research Scientist @AIatMeta FAIR; PhD @berkeley_ai @CHAI_Berkeley

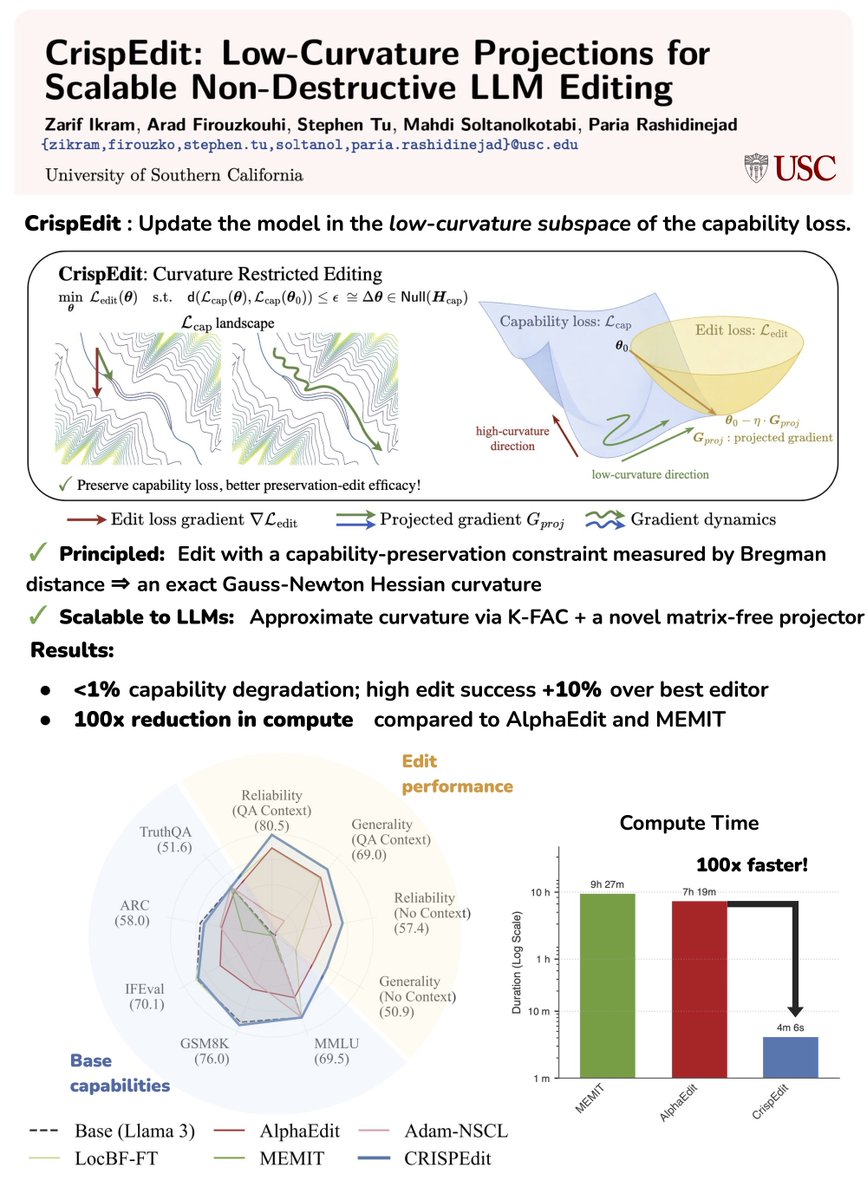

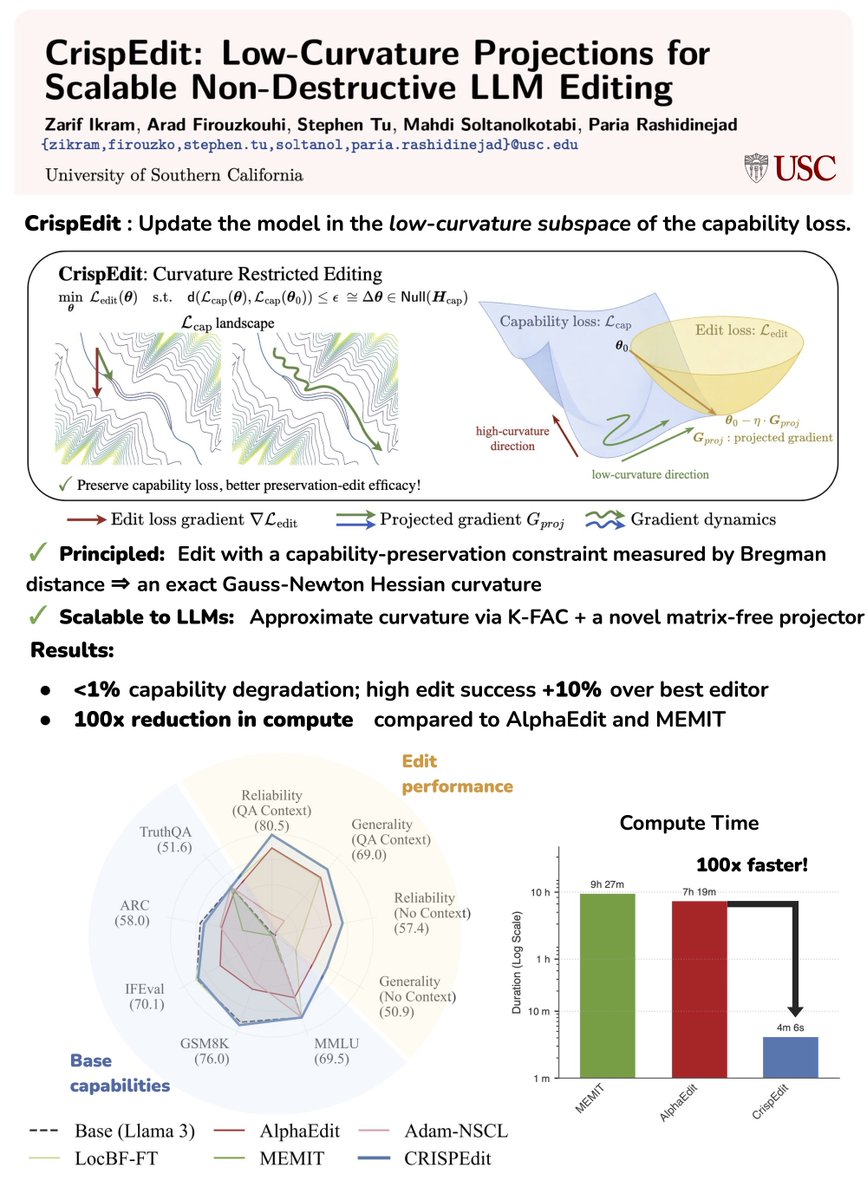

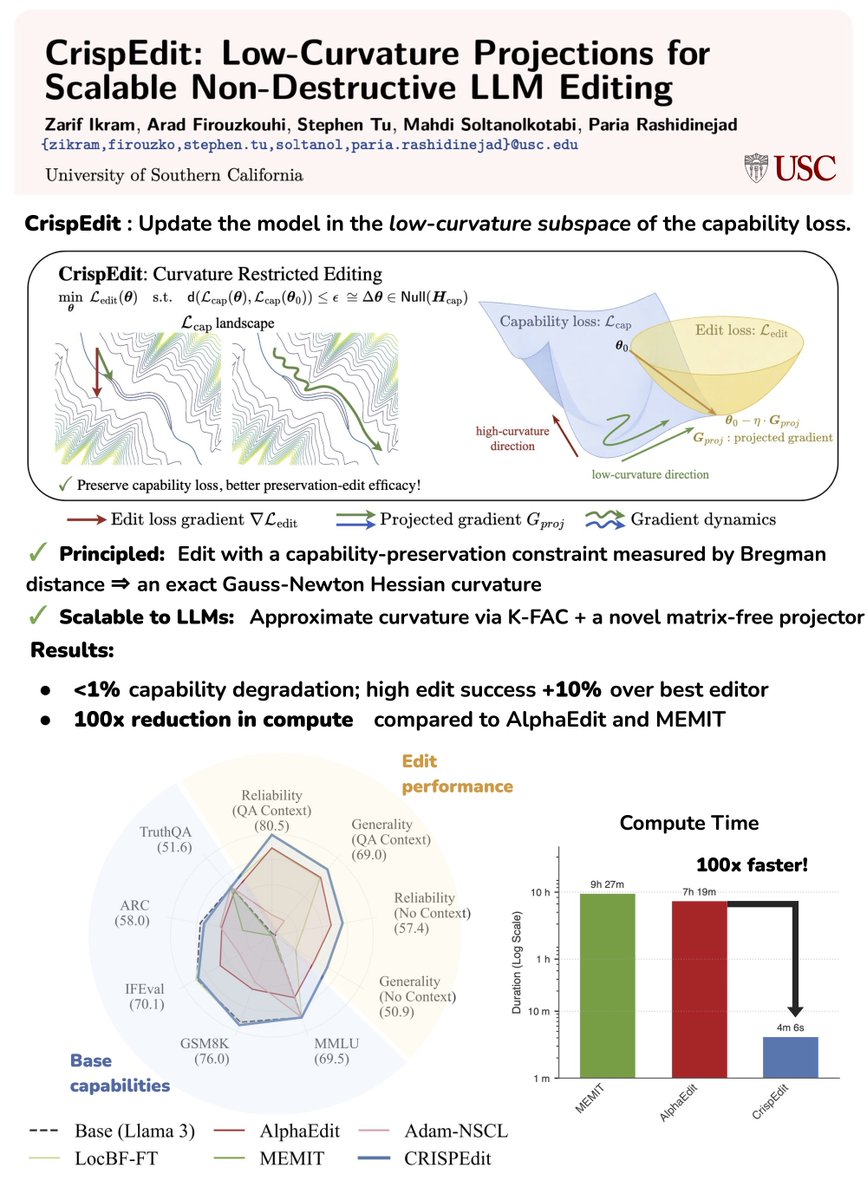

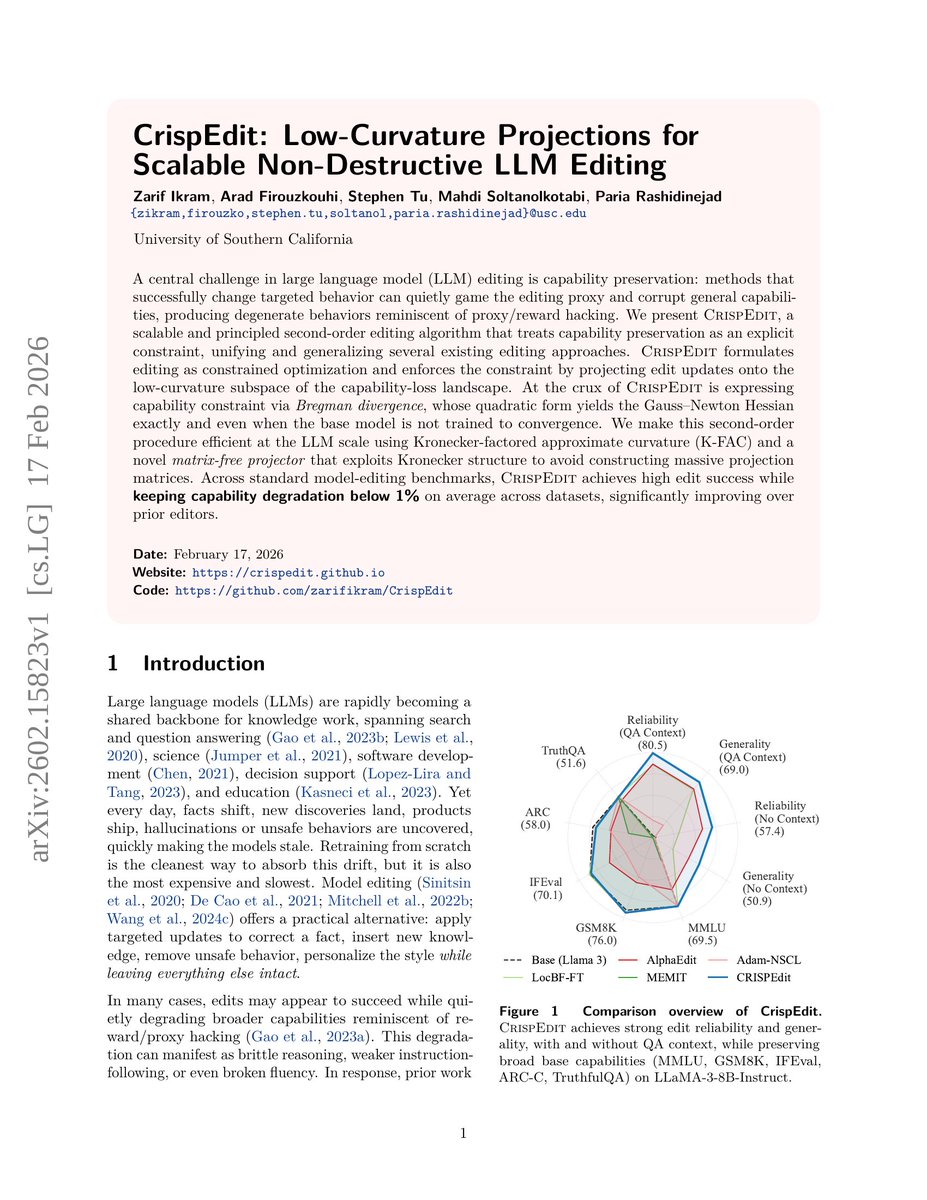

Teaching an LLM a new fact 𝐬𝐡𝐨𝐮𝐥𝐝𝐧'𝐭 𝐛𝐫𝐞𝐚𝐤 𝐞𝐯𝐞𝐫𝐲𝐭𝐡𝐢𝐧𝐠 𝐢𝐭 𝐚𝐥𝐫𝐞𝐚𝐝𝐲 𝐤𝐧𝐨𝐰𝐬.

Model editing often feels like a zero-sum game: Every time you inject new facts, you risk degrading the model’s core capabilities or erasing prior data. It's the primary barrier to efficient Continual Learning.

We’ve found a principled, scalable fix: 𝐂𝐫𝐢𝐬𝐩𝐄𝐝𝐢𝐭. arxiv.org/abs/2602.15823

Our approach treats capability preservation as a formal constraint. Instead of "hopeful" updates, we project edits into the low-curvature subspaces of the model's loss landscape, essentially hiding updates where the model is least sensitive.

The Results:

✅ Preservation-edit efficacy: <1% capability degradation with high edit success (+10% on average over best baseline).
✅ Massive efficiency: Up to 100x reduction in compute compared to AlphaEdit/MEMIT.
✅ Scalable: Matrix-free projections that work for billion-parameter models.

Our results suggest a promising path toward more reliable 𝐜𝐨𝐧𝐭𝐢𝐧𝐮𝐚𝐥 𝐥𝐞𝐚𝐫𝐧𝐢𝐧𝐠 and mitigating 𝐟𝐨𝐫𝐠𝐞𝐭𝐭𝐢𝐧𝐠, and we’re eager to explore it further. 🧵👇
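To make the "hide the update where the model is least sensitive" idea concrete, here is a toy sketch. It is not the CrispEdit algorithm from the paper. It assumes a 2-parameter model, a hypothetical quadratic capability loss with one sharp direction, and that the high-curvature direction is already known. The names `project_out` and `capability_loss_increase` are made up for illustration.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def project_out(update, high_curvature_dirs):
    """Remove the components of `update` along each given direction.

    Assumes the directions are orthonormal. Only dot products are used,
    so no curvature matrix is ever formed (a matrix-free projection).
    """
    projected = list(update)
    for v in high_curvature_dirs:
        coeff = dot(projected, v)
        projected = [p - coeff * vi for p, vi in zip(projected, v)]
    return projected


def capability_loss_increase(delta, sharp_dir, sharp=100.0, flat=0.01):
    """Toy quadratic 0.5 * delta^T H delta, with H = sharp * v v^T + flat * I."""
    along = dot(delta, sharp_dir)
    return 0.5 * (sharp * along ** 2 + flat * dot(delta, delta))


# Hypothetical numbers: one sharp (high-curvature) unit direction and a raw edit.
sharp_dir = [0.6, 0.8]
raw_update = [1.0, 0.0]
safe_update = project_out(raw_update, [sharp_dir])

print("raw edit cost:      ", capability_loss_increase(raw_update, sharp_dir))
print("projected edit cost:", capability_loss_increase(safe_update, sharp_dir))
```

In this toy setup the projected edit keeps most of its size but has no component along the sharp direction, so the capability loss barely moves. How the real method finds the low-curvature subspace at scale is described in the paper, not here.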

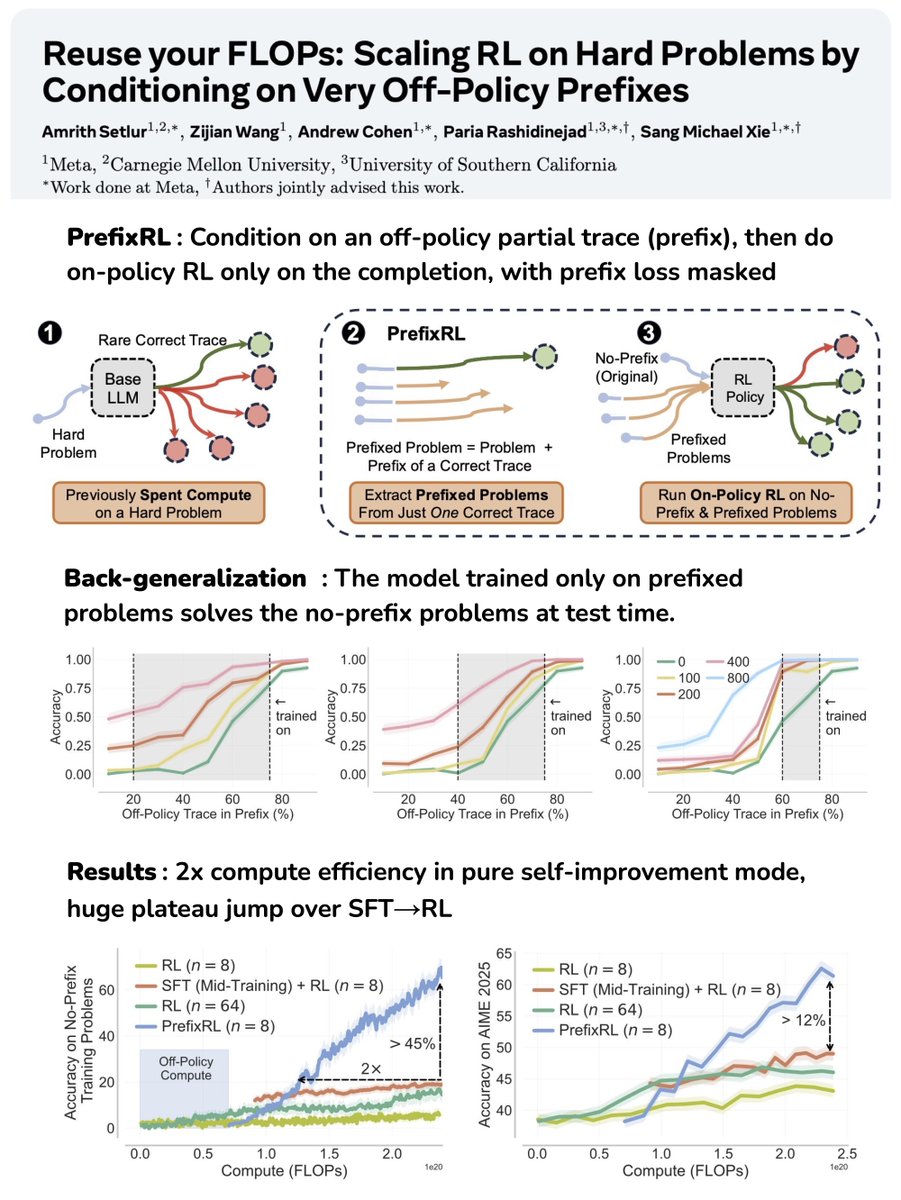

Start “thinking” from scratch: That’s how RL samples long rollouts, even on problems it has already seen, burning 🔥 tons of FLOPs on exploration from scratch.

In reality, we have an ever-growing treasure of good inference FLOPs spent on the base LLM or prior RL runs from it: rare correct traces on hard problems (very low pass@n).

♻️ How can we *reuse* these very very off-policy, stale but correct traces to get the most out of the costly inference FLOPs already spent?

Some obvious ways:

⚡ SFT on the off-policy traces: Model entropy collapses.
⚡ On-policy distillation or off-policy RL: Optimization destabilizes as traces are way too off-policy.

We introduce the simplest of ways to reuse FLOPs, PrefixRL: *condition* on a portion of the off-policy trace and get online RL to complete it. 🧵⬇️
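A rough sketch of what "condition on a portion of the off-policy trace and let online RL complete it" could look like as a data-collection loop. This is an illustration under assumptions, not the PrefixRL implementation: the random prefix length, the 0/1 reward, and the helpers `make_prefixed_prompt`, `collect_rollouts`, `toy_generate`, and `toy_is_correct` are all hypothetical stand-ins.

```python
import random


def make_prefixed_prompt(problem, trace_tokens, fraction):
    """Append the first `fraction` of a stored correct trace to the problem."""
    cut = int(len(trace_tokens) * fraction)
    return problem + "\n" + " ".join(trace_tokens[:cut])


def collect_rollouts(problem, trace_tokens, generate, is_correct, n=4, rng=None):
    """Sample on-policy completions that start from an off-policy prefix."""
    rng = rng or random.Random(0)
    rollouts = []
    for _ in range(n):
        fraction = rng.uniform(0.0, 1.0)  # assumption: prefix length drawn at random
        prompt = make_prefixed_prompt(problem, trace_tokens, fraction)
        completion = generate(prompt)  # the current policy finishes the trace
        reward = 1.0 if is_correct(completion) else 0.0
        rollouts.append((prompt, completion, reward))
    return rollouts


# Toy stand-ins for a stored correct trace, the policy, and the answer checker.
trace = ["2+3=5", "5*4=20", "answer:", "20"]


def toy_generate(prompt):
    # Pretend the policy only succeeds once the key step is in its context.
    return "20" if "5*4=20" in prompt else "17"


def toy_is_correct(completion):
    return completion.strip() == "20"


rollouts = collect_rollouts("Compute (2+3)*4.", trace, toy_generate, toy_is_correct, n=8)
for prompt, completion, reward in rollouts:
    print(repr(prompt), "->", completion, reward)
```

The resulting `(prompt, completion, reward)` tuples are what an online RL update would consume. Which tokens receive gradient and how prefixes are actually chosen are details for the thread and paper.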