Pedro Nascimento

326 posts

@pedromnasc

Founder @findlyai (YC S22). Prev engineering @X, @Google. Analytics, LLMs, Math, Decision Making, RecSys

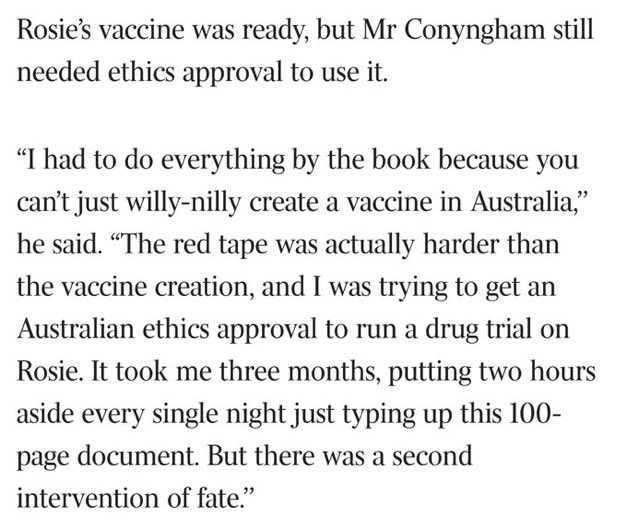

This is wild. theaustralian.com.au/business/techn…

@GaryMarcus @ylecun @demishassabis You were never alone, Gary, though you were the first to bite the bullet, to fight the good fight, and to make the argument well, again and again, for the limitations of LLMs. I salute you for this good service!

@OpenAI o1 is trained with RL to “think” before responding via a private chain of thought. The longer it thinks, the better it does on reasoning tasks. This opens up a new dimension for scaling. We’re no longer bottlenecked by pretraining. We can now scale inference compute too.

Two things pose an existential risk to humanity: Superintelligent AI and human stupidity; and I’m not so sure about the AI

There is some confusion among readers of #Bookofwhy regarding the impressive "causal understanding" of LLMs, which seems to defy the theoretical prediction of the Ladder of Causation. The Ladder predicts that, regardless of data size, no learning machine could correctly answer queries about interventions and counterfactuals unless supplemented with causal knowledge, external to the data. LLM programs circumvent this prediction by smuggling causal knowledge into the training data; instead of training themselves on observations obtained directly from the environment, they are trained on linguistic texts written by authors who already have causal models of the world. The programs can simply cite information from the text without attending to any of the underlying data. The result is a sequence of linguistic extrapolations which, in some remote and obscure sense, reflect the causal understanding of those authors. @GaryMarcus @eliasbareinboim @soboleffspaces @geoffreyhinton @DavidDeutschOxf