Philippe Hebrard

98 posts

@philippehebrard

Head of Product @Ledger | prev. co-founder @ana_health (acquired)

Introducing: PlayerZero

The world's first Engineering World Model that puts debugging, fixing, and testing your code on autopilot.

We've raised $20M from Foundation Capital, @matei_zaharia (Databricks), @pbailis (Workday), @rauchg (Vercel), @zoink (Figma), @drewhouston (Dropbox), and more.

PlayerZero frees up 30% of your engineering bandwidth by:

1. Finding, in minutes, the root cause of bugs and incidents that engineering teams take days to identify.
2. Predicting, in minutes, edge-case issues that a 300-person QA team would take weeks to find.

------

Here's why this matters: No one in your org has a complete picture of how your production software actually behaves. Support sees tickets. SRE sees infra. Dev sees code. Each team builds its own fragmented view - and none of these systems talk to each other. When something breaks, everyone scrambles to stitch the picture together by hand.

PlayerZero connects all of it into a single context graph:

→ The Slack thread where your lead said "we went with X because Y fell apart in prod last time"
→ The PR review where an engineer explained the tradeoff
→ The lifetime history of your CI/CD pipeline, observability stack, incidents, and support tickets

So you can trace any problem to its root cause across every silo.

And it compounds. Every incident diagnosed teaches the model something new. The longer it runs, the deeper it understands - which code paths are high-risk, which configurations are fragile, which changes tend to break which customer flows. So when you sit down to debug a live issue, you have your entire org's collective reasoning and production memory behind you - instantly.

------

Zuora, Georgia-Pacific, and Nylas have reduced resolution time by 90%, caught 95% of breaking changes, and freed an average of $30M in engineering bandwidth.

------

Our guarantee: If we can't increase your engineering bandwidth by at least 20% within one week, we'll donate $10,000 to an open-source project of your choice.
Book a demo - bit.ly/3NlLMeN

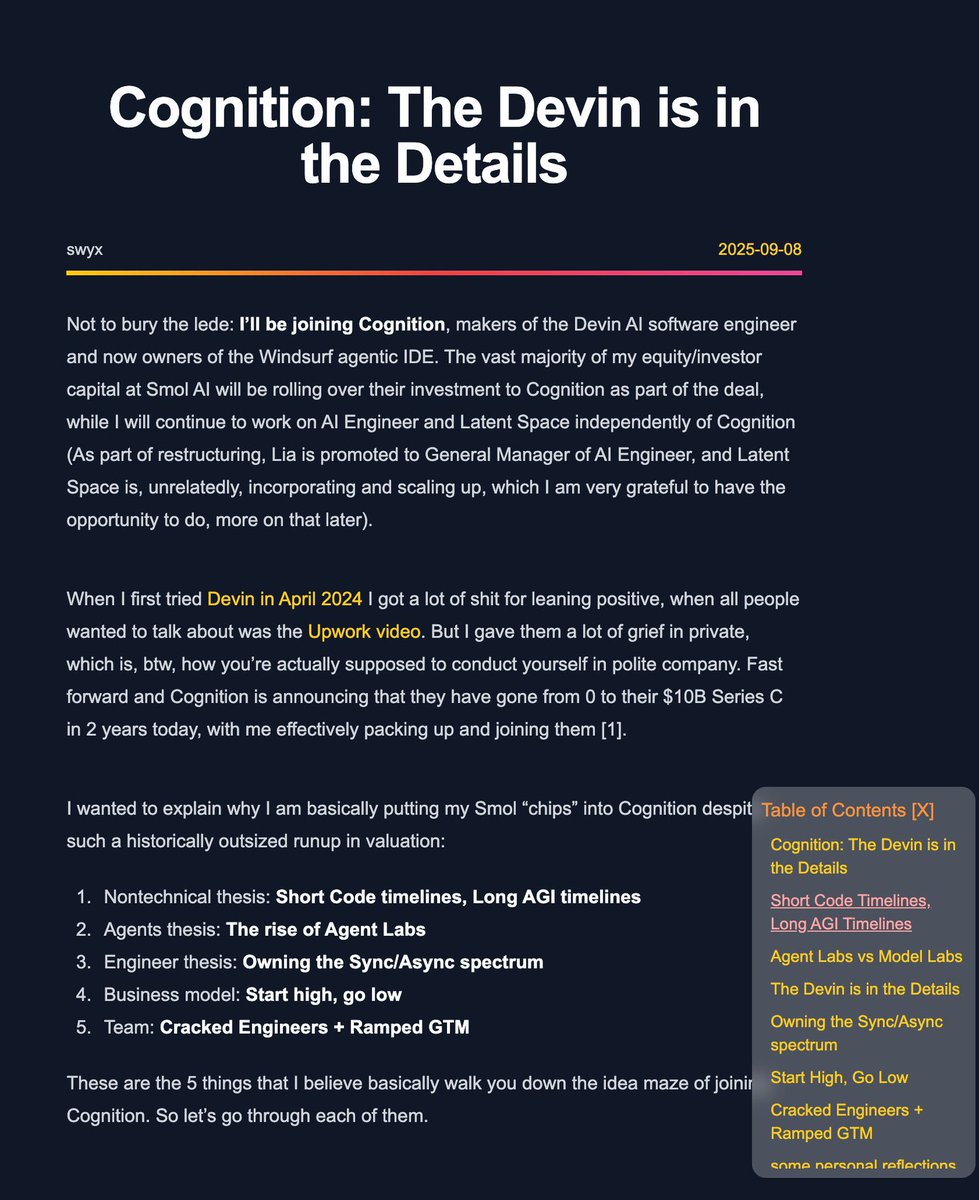

@cognition new post on joining Cognition at its $10B Series C: The Devin is in the Details swyx.io/cognition

@nummanali tmux grids are awesome, but i feel a need to have a proper "agent command center" IDE for teams of them, which I could maximize per monitor. E.g. I want to see/hide toggle them, see if any are idle, pop open related tools (e.g. terminal), stats (usage), etc.

Software is no longer "eating the world." AI is eating software. The old moats are collapsing overnight.

We are entering the Economy of Action, where the primary actors aren’t humans clicking buttons, but autonomous AI agents transacting in milliseconds.

But there is a massive problem: Software cannot secure an AI-driven world. Software security is probabilistic. In a war of code vs. code, the attacker only needs to be right once. AI has turned "good enough" into "extinct."

At @Ledger, we’ve spent 12 years preparing for this collision of Blockchain and AI. We are moving the "root of trust" out of the reach of code and into the Physics of Trust. By anchoring identity and governance in immutable silicon (Secure Elements logically isolated from the OS), we create a physical barrier that AI simply cannot cross.

Ledger is the weapon for the Agentic Economy.

An AI agent with a wallet sounds powerful, right? Until it over-optimizes, hits limits, and starts failing in public. New Ledger Podcast: why agentic commerce needs human-in-the-loop guardrails, secure screens, and keys that never leave the secure element 🔒 Built during the Circle USDC Hackathon by @philippehebrard, @gm4thi4s, @iancr and @claudeai Watch the full episode 👇