Regarding DAGKnight: the cascade voting isn’t implemented yet, and the code here is in no way mainnet- or testnet-ready, but I do have a vanilla static-DAG-based implementation that already has core components of the protocol in place, like hierarchic conflict resolution and incremental coloring. I was working on this in a private repo, but what the heck, I pushed it to my rusty-kaspa fork if anyone wants to see. @michaelsuttonil I think it’s time to share that long-overdue post soon. The attached image shows what DK parent selection looks like from the point of view of the next block to be mined (block 64). It correctly selects a parent from the supposed “honest” cluster. The blue line is the VSPC (virtual selected parent chain).
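The VSPC (virtual selected parent chain) mentioned above can be illustrated with a minimal Python sketch. It assumes each block's parents are known and that a per-block `score` decides parent selection, with ties going to the smaller block id. Both the `score` values and the tie-break are hypothetical stand-ins (in GHOSTDAG this role is played by accumulated blue work). DAGKnight's actual parent selection via hierarchic conflict resolution is not reproduced here. The sketch only shows how, once a parent rule exists, the chain is read off by following selected parents from the virtual block's view back to genesis.

```python
from typing import Dict, List, Optional


def select_parent(candidates: List[str], score: Dict[str, int]) -> str:
    """Hypothetical rule: highest score wins, ties go to the smaller block id."""
    return min(candidates, key=lambda block: (-score[block], block))


def selected_parent_chain(
    tips: List[str], parents: Dict[str, List[str]], score: Dict[str, int]
) -> List[str]:
    """Walk from the virtual block's selected parent back to genesis.

    `tips` are the current DAG tips, i.e. the parents a new block would reference.
    Returns the chain ordered from the selected tip down to genesis.
    """
    chain: List[str] = []
    current: Optional[str] = select_parent(tips, score)
    while current is not None:
        chain.append(current)
        block_parents = parents[current]
        # Genesis has no parents, which ends the walk.
        current = select_parent(block_parents, score) if block_parents else None
    return chain


# Toy DAG: C merges A and B; D is a competing tip that only sees B.
parents = {"G": [], "A": ["G"], "B": ["G"], "C": ["A", "B"], "D": ["B"]}
score = {"G": 1, "A": 2, "B": 2, "C": 4, "D": 3}
print(selected_parent_chain(["C", "D"], parents, score))  # ['C', 'A', 'G']
```

Blocks off the chain (B and D here) are still part of the DAG. They are simply not on the selected-parent path that the blue line traces.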

Have you ever looked at different DLT projects and realized they're all converging on the same ideas, just with different terminology? And have you ever wondered what would happen if you forced all DLT projects to have a baby, where each could only contribute its most powerful ideas? You'd be surprised by the overlap - how non-unique many projects actually are - and how few genuinely good ideas exist. Often they sound almost trivial once you strip away the noise. The problem is that fundamental breakthroughs get buried under layers of unnecessary complexity - the inevitable result of gradually expanding a protocol's capabilities as research progresses.

With Kaspa, we have the benefit of being late. In fact, we're so late that we arrive at the party when almost all the research has already been done. We can skip the archaeology and just make that perfect baby, making the final breakthrough on our quest for perfection.

Today we are open-sourcing our vprogs framework: github.com/kaspanet/vprogs

A post-Amdahl execution engine that enables inter-block parallelism and linear scaling beyond the limits traditionally assumed possible for DLT execution. By deeply understanding causal actors and domains, we eliminate almost all logic and instead encode behavior in the dependencies and relational properties of a generic type framework. This allows us to transparently map hardware resources to the workload, achieving linear scalability.

The design principles:
- No fsync / WAL flush boundaries
- No mutexes / locks
- Versioned append-only data with efficient rollbacks
- Maximal parallelism - even inter-block - breaking through Amdahl's law
- No wasted CPU cycles on speculative execution

This repo is still heavily WIP with rough edges (we don't even prune state yet). But the goal of this repository is to create a concrete instantiation of all existing research directions, condensed into a single, maximally performant type framework that gets away with almost no logic. There's still room for improvement (zero-copy deserialization, NUMA affinity, etc.), but we're converging toward a system that eventually can no longer be optimized or simplified. The holy grail of blockchain execution isn't more complex but orders of magnitude less complex than anything that exists today!

I am really looking forward to telling you more about this in the coming weeks (I just ordered a new microphone pre-amp so I can host regular hangouts where we discuss and explain how everything works under the hood - let's pray for a fast delivery 😅).
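To make "encode behavior in dependencies" and inter-block parallelism more concrete, here is a minimal Python sketch of dependency-based scheduling. It is not the vprogs API. The `Tx` type, the read/write sets declared up front, and the wave-based grouping are all assumptions for illustration. Each transaction is placed in the earliest wave that comes after every earlier conflicting transaction (read-after-write, write-after-write, write-after-read). As a result, non-conflicting transactions from a later block can share a wave with an earlier block's transactions. Because the dependencies are known before execution, nothing runs speculatively and nothing needs to be re-executed.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Tx:
    """Hypothetical transaction that declares the state keys it touches up front."""
    tx_id: str
    block: int  # not used for scheduling; kept to show cross-block placement
    reads: Set[str] = field(default_factory=set)
    writes: Set[str] = field(default_factory=set)


def schedule_waves(txs: List[Tx]) -> List[List[str]]:
    """Group transactions into waves; txs within one wave never conflict.

    `txs` must be given in canonical order (by block, then position in block).
    """
    last_write: Dict[str, int] = {}  # key -> wave of its most recent writer
    last_read: Dict[str, int] = {}   # key -> latest wave that read it
    waves: List[List[str]] = []
    for tx in txs:
        wave = 0
        for key in tx.reads | tx.writes:
            # Anything touching a key must run after that key's last writer.
            if key in last_write:
                wave = max(wave, last_write[key] + 1)
        for key in tx.writes:
            # A writer must also run after every earlier reader of the key.
            if key in last_read:
                wave = max(wave, last_read[key] + 1)
        for key in tx.reads:
            last_read[key] = max(last_read.get(key, -1), wave)
        for key in tx.writes:
            last_write[key] = wave
        while len(waves) <= wave:
            waves.append([])
        waves[wave].append(tx.tx_id)
    return waves


txs = [
    Tx("t1", block=1, writes={"alice"}),
    Tx("t2", block=1, writes={"bob"}),
    Tx("t3", block=2, reads={"alice"}, writes={"carol"}),
    Tx("t4", block=2, writes={"dave"}),
]
print(schedule_waves(txs))  # [['t1', 't2', 't4'], ['t3']]
```

Here `t4` from block 2 runs in the first wave alongside block 1, while `t3` waits only for `t1`. A real executor would more likely start each transaction as soon as its own predecessors finish rather than wait at wave barriers. The waves just keep the sketch short.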

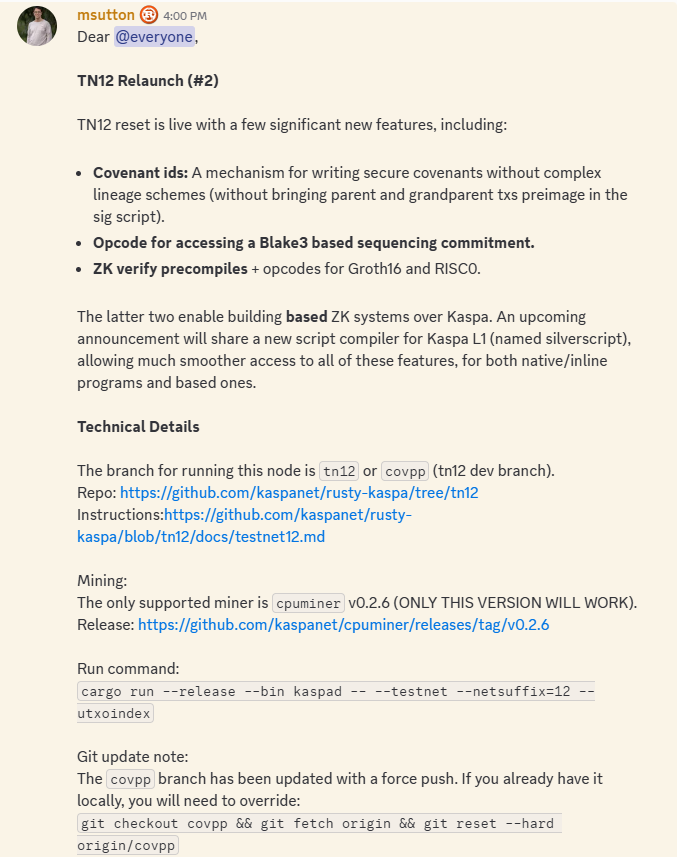

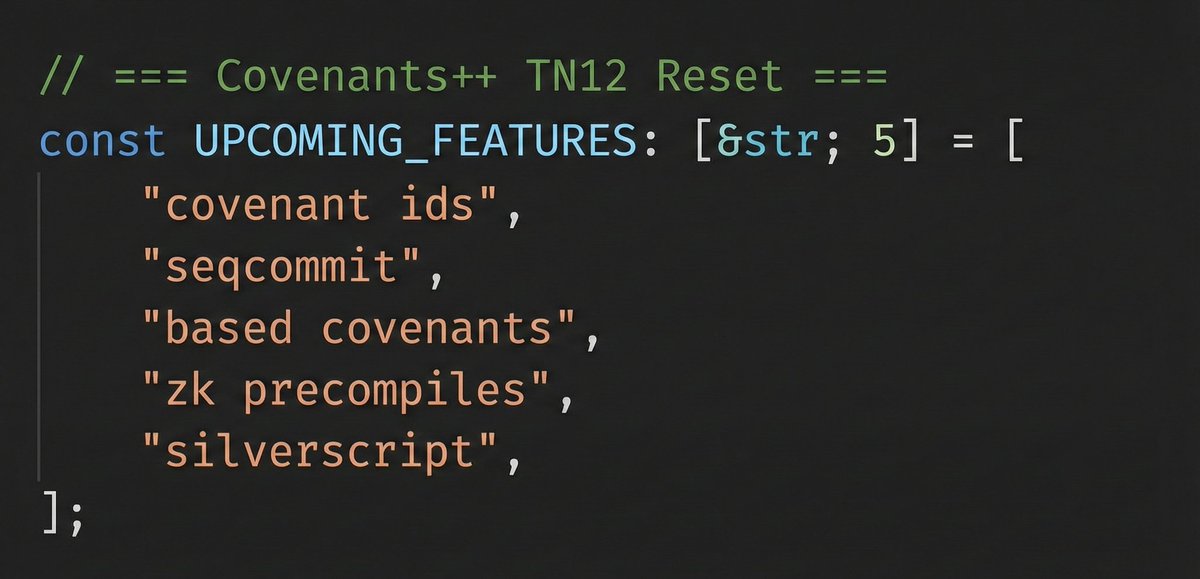

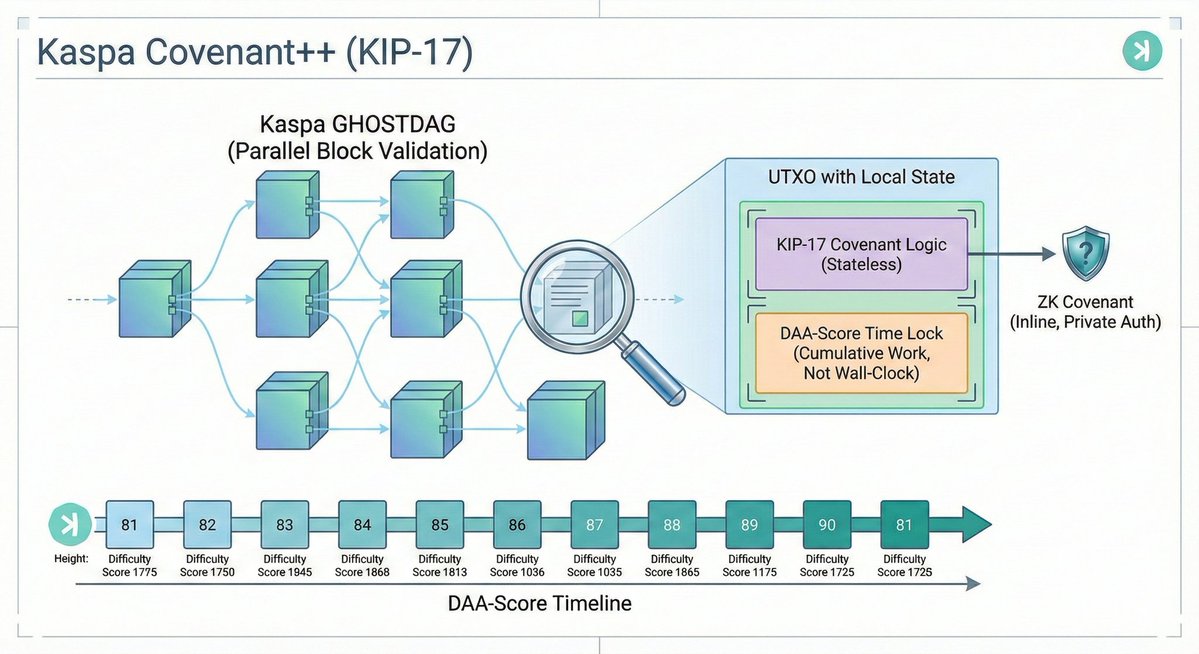

Sharing some thoughts/notes on covenants++ zk milestones (pre-HF) and the longer-term vprogs trajectory. This is highly technical material, written for a core R&D audience, and the result of extensive brainstorming with @orinewman, @hashdag and others. gist.github.com/michaelsutton/…

Now that ZKEVMs are at alpha stage (production-quality performance, remaining work is safety) and PeerDAS is live on mainnet, it's time to talk more about what this combination means for Ethereum. These are not minor improvements; they are shifting Ethereum into being a fundamentally new and more powerful kind of decentralized network.

To see why, let's look at the two major types of p2p network so far:

* BitTorrent (2000): huge total bandwidth, highly decentralized, no consensus
* Bitcoin (2009): highly decentralized, consensus, but low bandwidth - because it’s not “distributed” in the sense of work being split up, it’s *replicated*

Now, with Ethereum's PeerDAS (2025) and ZK-EVMs (expect small portions of the network to be using them in 2026), we get: decentralized, consensus and high bandwidth.

The trilemma has been solved - not on paper, but with live running code, of which one half (data availability sampling) is *on mainnet today*, and the other half (ZK-EVMs) is *production-quality on performance today* - safety is what remains.

This was a 10-year journey (see the first commit of my original post on DAS here: github.com/ethereum/resea… , and ZK-EVM attempts started in ~2020), but it's finally here.

Over the next ~4 years, expect to see the full extent of this vision roll out:

* In 2026, large non-ZKEVM-dependent gas limit increases due to BALs and ePBS, and we'll see the first opportunities to run a ZKEVM node
* In 2026-28, gas repricings, changes to state structure, exec payload going into blobs, and other adjustments to make higher gas limits safe
* In 2027-30, large further gas limit increases, as ZKEVM becomes the primary way to validate blocks on the network

A third piece of this is distributed block building. A long-term holy grail is to reach a future where the full block is *never* assembled in one single place. This will not be necessary for a long time, but IMO it is worth striving for us to at least have the capability to do that.

Even before that point, we want meaningful authority in block building to be as distributed as possible. This can be done either in-protocol (e.g. maybe we figure out how to expand FOCIL to make it a primary channel for txs) or out-of-protocol with distributed builder marketplaces. This reduces the risk of centralized interference with real-time transaction inclusion, AND it creates a better environment for geographical fairness.

Onward.
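The data availability sampling half of the argument rests on a short probability calculation, sketched below in Python. It assumes the classic DAS setup: data is extended with a 2x erasure code, so any 50% of the chunks suffice to reconstruct it, and a sampler queries chunks uniformly at random with replacement. To make the data unrecoverable, an attacker must withhold more than half of the chunks. Each sample therefore succeeds with probability below 1/2, and k samples all succeed with probability below 2^-k. Actual PeerDAS parameters (column count, custody and sampling counts) are not modeled here.

```python
def undetected_probability(available_fraction: float, samples: int) -> float:
    """Chance that every one of `samples` independent uniform queries hits an
    available chunk, i.e. the sampler fails to notice withholding."""
    if not 0.0 <= available_fraction <= 1.0:
        raise ValueError("available_fraction must be in [0, 1]")
    return available_fraction ** samples


def samples_needed(available_fraction: float, target: float) -> int:
    """Smallest number of samples whose undetected probability is <= target."""
    if not 0.0 <= available_fraction < 1.0 or target <= 0.0:
        raise ValueError("need 0 <= available_fraction < 1 and target > 0")
    k, p = 0, 1.0
    while p > target:
        p *= available_fraction
        k += 1
    return k


# Worst case for the sampler: the attacker keeps just under half the chunks
# available (below the 50% reconstruction threshold); 0.5 is the limiting bound.
print(undetected_probability(0.5, 10))  # 0.0009765625
print(samples_needed(0.5, 1e-6))        # 20
```

The point is that the per-node sample count stays small and independent of block size. This is why bandwidth can grow without every node downloading everything.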