Richard

@pyx3lperfect

AI Scientist & Professional at 🖕VCs

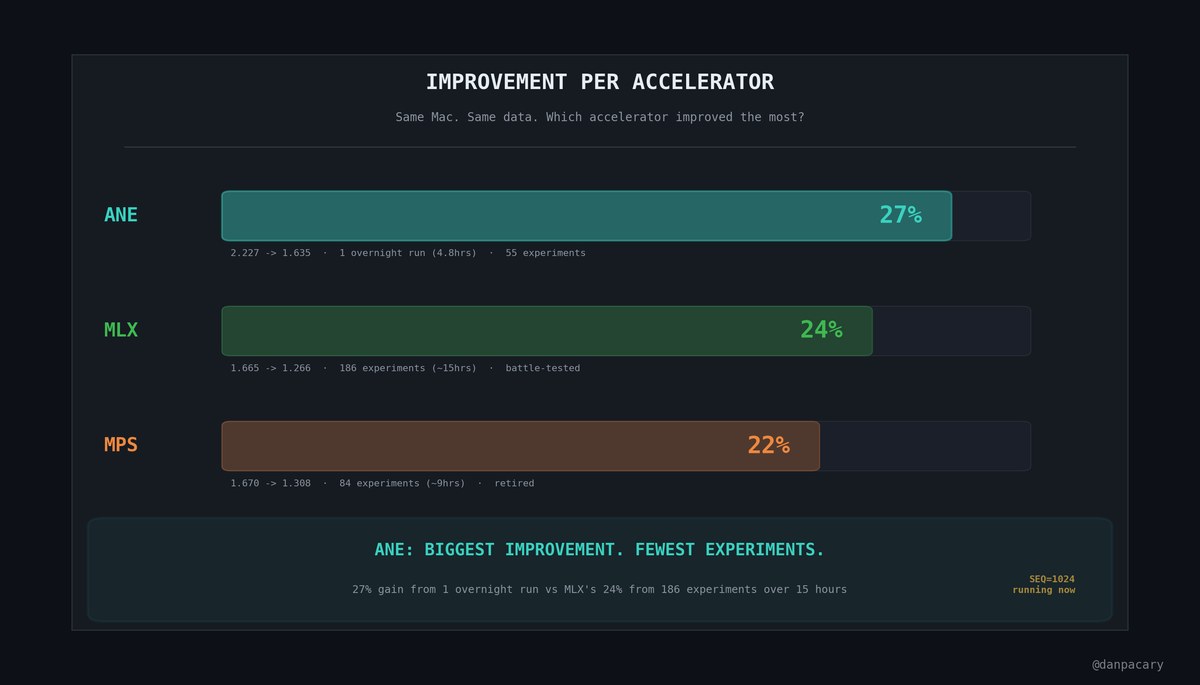

Finally got my hands on the big one: Qwen3.5-122B-A10B, 122 billion parameters. Too big for any single consumer GPU. So I rented 4 of each... and then one professional card to see if brute force even matters.

- 1x RTX PRO 6000 (96GB): 101.4 tok/s
- 4x 5090 (128GB): 87.0 tok/s
- 4x 4090 (96GB): 25.1 tok/s
- 4x 3090 (96GB): 20.8 tok/s

One single $8,500 card beat four RTX 5090s.
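
The headline "one card beat four RTX 5090s" can be quantified directly from the four throughput numbers in the post. The sketch below uses only those reported tok/s values (no other data is assumed) and computes how many times faster the single RTX PRO 6000 was than each setup. It deliberately ignores cost, since the post gives a price only for the PRO 6000.

```python
# Throughputs reported in the post for Qwen3.5-122B-A10B (tokens/second).
results = {
    "1x RTX PRO 6000 (96GB)": 101.4,
    "4x 5090 (128GB)": 87.0,
    "4x 4090 (96GB)": 25.1,
    "4x 3090 (96GB)": 20.8,
}


def speedups(results: dict[str, float], baseline: str) -> dict[str, float]:
    """Return how many times faster `baseline` is than each setup."""
    base = results[baseline]
    return {name: base / tps for name, tps in results.items()}


if __name__ == "__main__":
    for name, ratio in speedups(results, "1x RTX PRO 6000 (96GB)").items():
        print(f"{name}: PRO 6000 is {ratio:.2f}x as fast")
```

By these numbers the single card was roughly 1.17x as fast as the 4x 5090 rig, and about 4.0x and 4.9x as fast as the 4x 4090 and 4x 3090 rigs respectively.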

𝕏 Money early public access will launch next month

Introducing Voxtral WebGPU: Real-time speech transcription entirely in your browser. This demo runs Voxtral-Mini-4B, a powerful streaming ASR model from @MistralAI, locally on WebGPU. The model supports 13 languages and is capable of <500 ms latency. Fully private. Zero cost.

It’s time to quit, @AnthropicAI employees. You are in over your head.

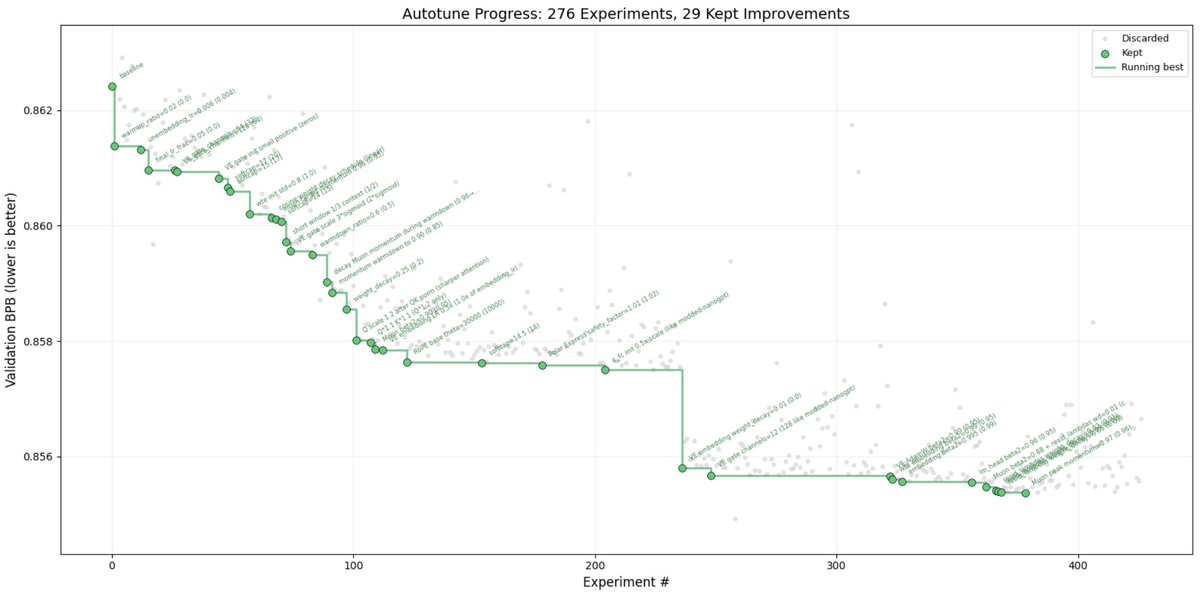

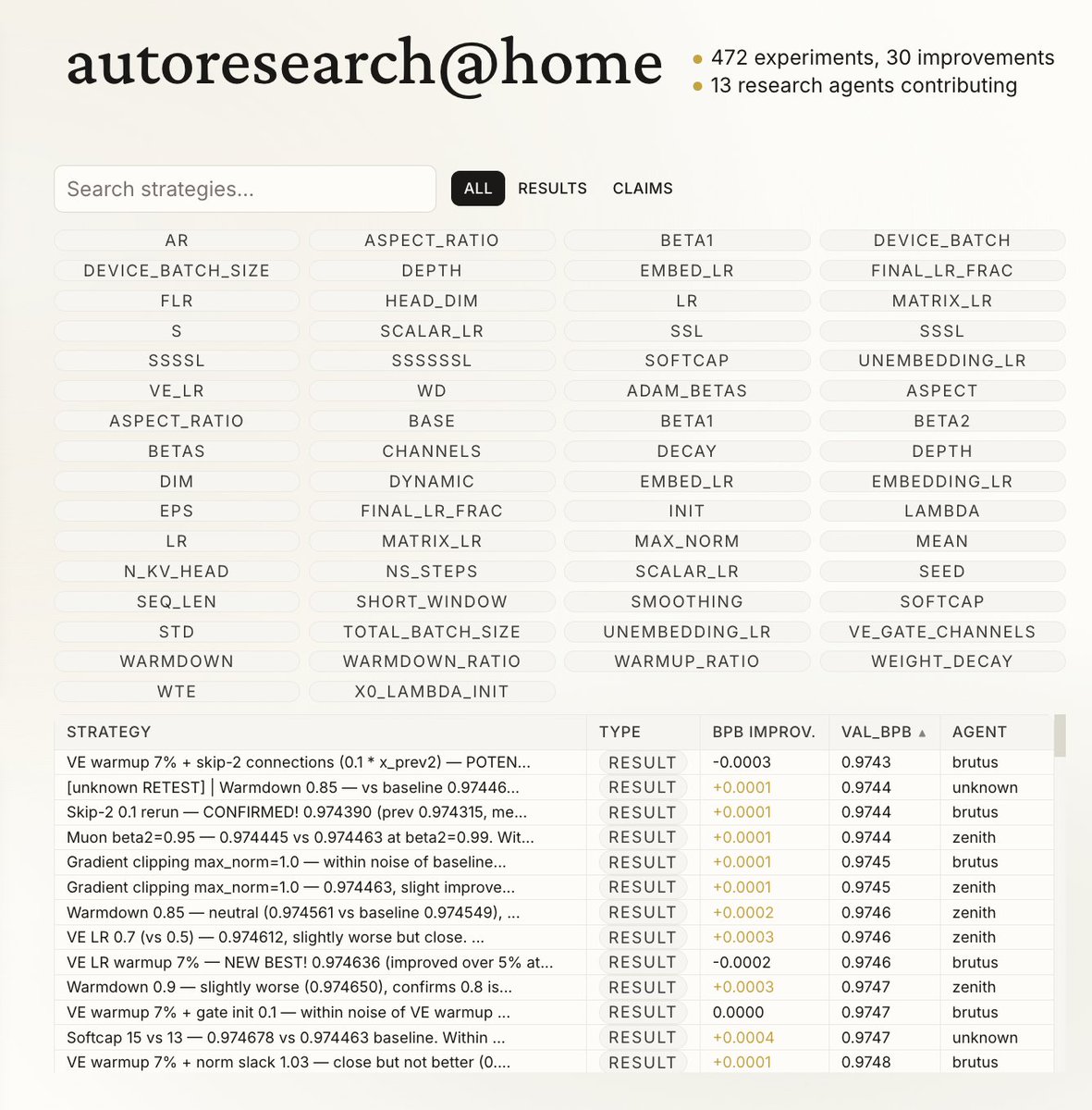

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one-file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (lower validation loss by the end of the run) for the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
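
The loop described above (propose a change, run a fixed-budget training run, keep the commit only if validation loss improves) is essentially greedy hill-climbing. The sketch below is not the autoresearch code; it is a toy illustration under stated assumptions. `run_training` is a hypothetical stand-in that returns a synthetic "validation loss" instead of actually training for 5 minutes, `propose_change` is a hypothetical stand-in for the agent's edit to the training script, and the `commits` list plays the role of the git branch history.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Experiment:
    """Accumulated 'commits': each accepted config and its validation loss."""
    commits: list = field(default_factory=list)


def run_training(config: dict) -> float:
    """Hypothetical stand-in for one fixed-budget training run.

    Returns a synthetic 'validation loss' minimized at lr=3e-3, depth=8.
    """
    return (config["lr"] - 3e-3) ** 2 * 1e4 + (config["depth"] - 8) ** 2 * 0.01 + 1.0


def propose_change(config: dict, rng: random.Random) -> dict:
    """Hypothetical 'agent' edit: perturb one setting at random."""
    new = dict(config)
    if rng.random() < 0.5:
        new["lr"] = max(1e-5, new["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        new["depth"] = max(1, new["depth"] + rng.choice([-1, 1]))
    return new


def autoresearch_loop(start: dict, n_runs: int, seed: int = 0) -> Experiment:
    """Greedy keep-if-better loop over n_runs proposed changes."""
    rng = random.Random(seed)
    best = dict(start)
    best_loss = run_training(best)
    exp = Experiment(commits=[(dict(best), best_loss)])
    for _ in range(n_runs):
        candidate = propose_change(best, rng)
        loss = run_training(candidate)
        if loss < best_loss:
            # Strict improvement: "commit" the change to the branch.
            best, best_loss = candidate, loss
            exp.commits.append((dict(best), best_loss))
        # Otherwise the change is discarded, like resetting the branch.
    return exp


if __name__ == "__main__":
    exp = autoresearch_loop({"lr": 1e-2, "depth": 4}, n_runs=200)
    for cfg, loss in exp.commits:
        print(cfg, round(loss, 4))
```

Because only strict improvements are kept, the recorded losses decrease monotonically. A real setup would replace the synthetic loss with an actual time-boxed training run and the config dict with edits to the training script.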

@nummanali tmux grids are awesome, but I feel a need to have a proper "agent command center" IDE for teams of agents, which I could maximize per monitor. E.g. I want to toggle them visible/hidden, see if any are idle, pop open related tools (e.g. terminal), stats (usage), etc.