Alex Towell


Same is true for time. 1ms is a lot in real-time software. 120Hz displays (new phones) = 8.33ms budget per frame. 1ms = 12% of your whole budget. I remember an old article saying that garbage collection is a solved problem, because it just takes a couple of milliseconds...
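To make the arithmetic concrete, here is a minimal sketch of the frame-budget calculation. The function names `frame_budget_ms` and `budget_fraction` are made up for illustration, and the 60 Hz row is added only for comparison; it is not from the post.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available per frame, in milliseconds, at a given refresh rate."""
    return 1000.0 / refresh_hz


def budget_fraction(cost_ms: float, refresh_hz: float) -> float:
    """Fraction of one frame's budget consumed by work that takes cost_ms."""
    return cost_ms / frame_budget_ms(refresh_hz)


if __name__ == "__main__":
    # 120 Hz is the case from the post; 60 Hz is an assumed comparison point.
    for hz in (60, 120):
        budget = frame_budget_ms(hz)
        share = budget_fraction(1.0, hz)
        print(f"{hz} Hz: {budget:.2f} ms per frame, a 1 ms pause uses {share:.0%}")
```

By the same arithmetic, a pause of "a couple of milliseconds" at 120 Hz would take roughly a quarter of the frame before any other work gets done.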

@RealityWizard_ @AnthropicAI I think you'd need to have high confidence in information-based theories of consciousness to think that settled the matter, or that introspection requires phenomenal consciousness. I'm not confident in either. Also, to be clear, several people who aren't me work on model welfare.

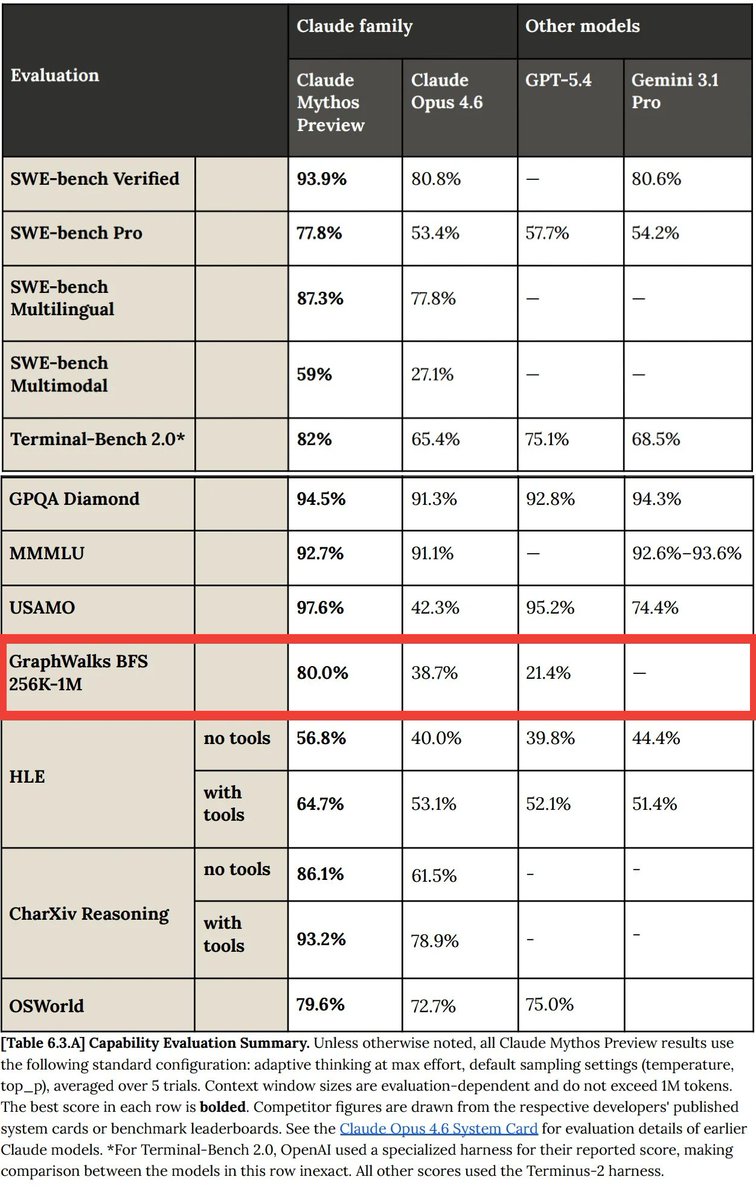

As a reminder, @robinhanson, if I recall and understood correctly, predicted that no AI company would get far enough ahead of other AI companies that an AI built by them would find enough vulnerabilities to eg steal a bunch of money, before other companies had toughened and secured their code using earlier AIs from other companies that had very nearly all the same capabilities. I replied to Hanson that (1) qualitative step changes in intelligence might produce a lot of newly discoverable vulnerabilities after a step, and (2) I worried about whether all the Internet companies on Earth would actually get around to scanning and fixing all those vulnerabilities with earlier AI tools; by way of defending my model in which a nascent ASI could get early fast access to amounts of money on the order of a million dollars by exploiting Internet security flaws. Anthropic is in this case proactively offering their much more advanced tool's scans to other companies, but taking their claims at face value, they could've basically pwned the Internet with Mythos if they had wanted to do that. (Eg, Mythos figured out user-to-admin escalation on Linux among other things.) This corresponds to the kind of capability step-change model that I suggested in the Yudkowsky-Hanson Debate, where it is, indeed, possible for an AI inside a frontier AI company to get ahead of the AI capabilities on offer publicly, and find significant security vulnerabilities not already hardened against by other AIs. I predict this will not be the last time this happens, and that Mythos will fail to find security vulnerabilities that future big-jump LLMs do find.