Pablo Ramos

15.2K posts

@ramospablo

Security researcher dissecting AI risks, privacy threats & digital shadows. Traveler lost in the Internet. Views my own.

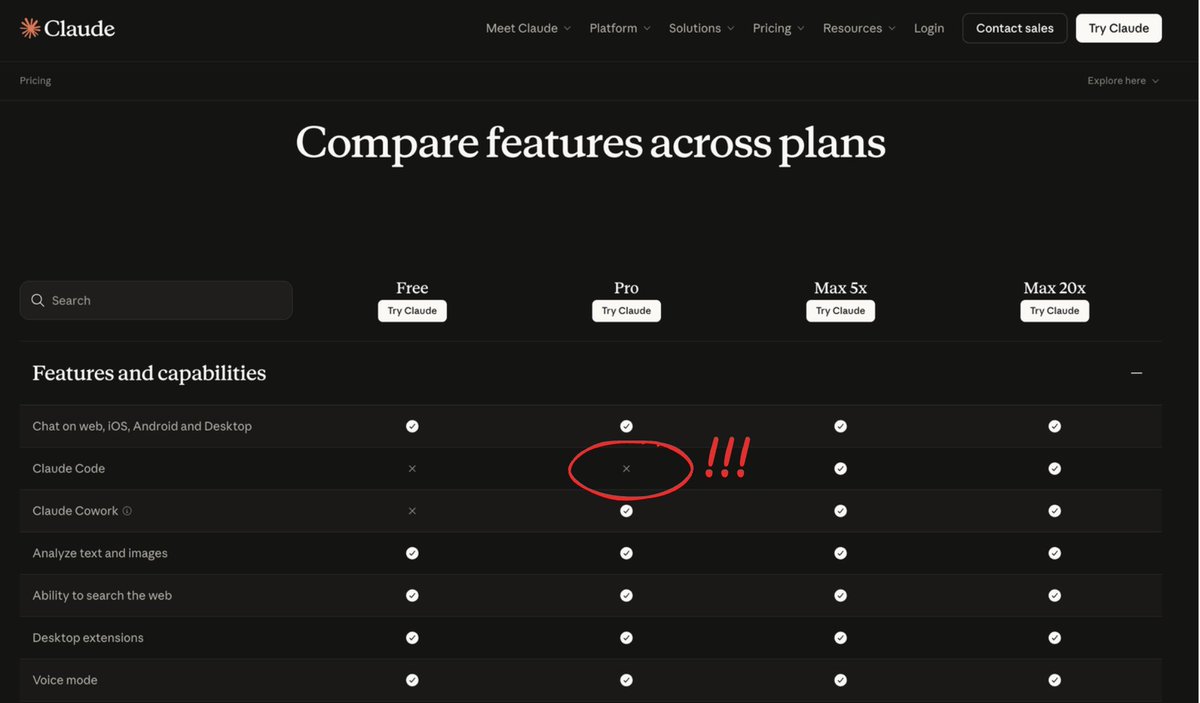

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

Jensen Huang: "If that $500,000 engineer did not consume at least $250,000 worth of tokens, I am going to be deeply alarmed. This is no different than a chip designer who says 'I'm just going to use paper and pencil. I don't think I'm going to need any CAD tools.'"

“If your $500K engineer isn’t burning at least $250K in tokens, something is wrong.”

POV: A guy with ChatGPT and Google AlphaFold just built a custom mRNA cancer vaccine to save his dog.

this story is actually insane.

a tech guy in australia adopted a rescue dog with aggressive cancer and only months to live.

so he did something wild:

> paid ~$3k to sequence the tumor dna
> used chatgpt to analyze the mutations
> used google’s alphafold to model the proteins
> identified drug targets and designed a custom mRNA cancer vaccine

he had zero background in biology.

after months of paperwork, the vaccine was approved and injected.

within weeks the tumor shrank dramatically and the dog started recovering.

meanwhile pharma companies are running $1B trials to do the exact same thing.

the future of personalized medicine with AI is going to be insane.