Carlos E. Perez (@IntuitMachine)

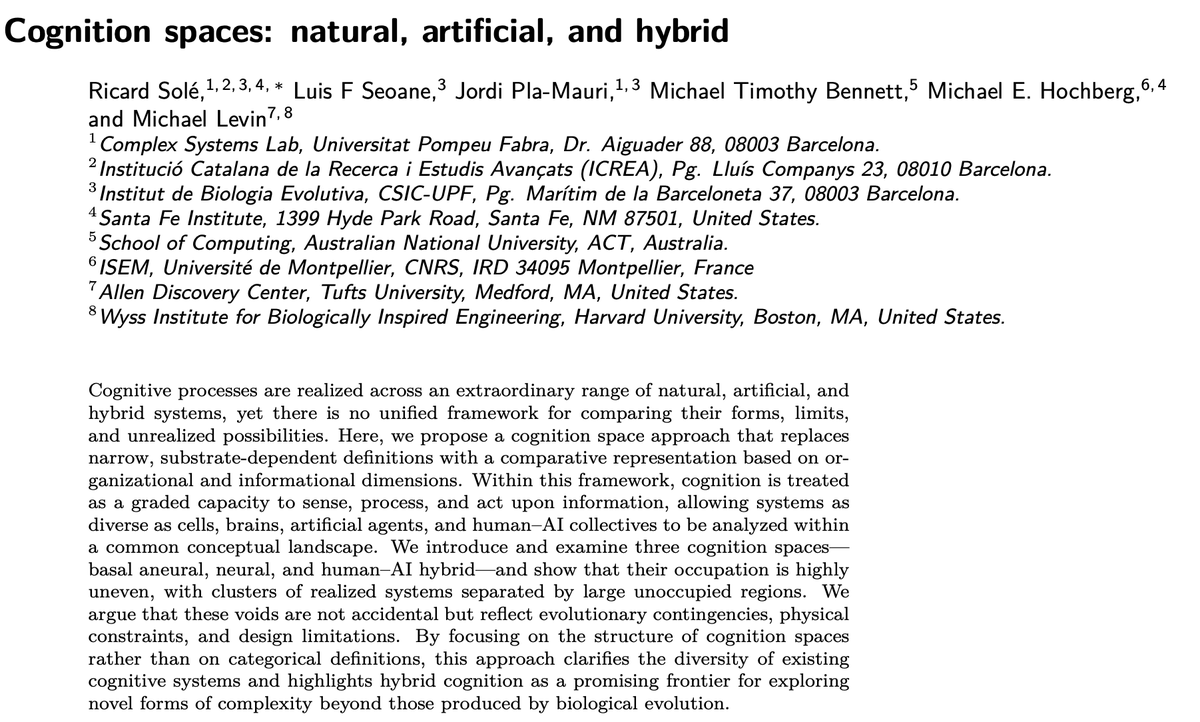

What if I told you that between a slime mold and ChatGPT, there's an entire universe of possible minds that have never existed?

Not sci-fi. Not speculation.

A new framework just mapped the "cognition space"—and the voids are staggering.

Let me show you what we're missing. 🧵

Here's the thing about studying intelligence:

We've been asking the wrong question.

Not "what IS cognition?" but "what kinds of cognition are POSSIBLE?"

Cells can learn. Slime molds can solve mazes. AI can write essays.

But there's no map showing how these fit together—until now.

The researchers did something brilliant.

Instead of defining cognition (which always fails), they borrowed a trick from evolutionary biology: morphospaces.

Think of it like this: map ALL possible body plans for animals, then see which ones actually exist.

The gaps tell you as much as what's there.

The Visual Reveal:

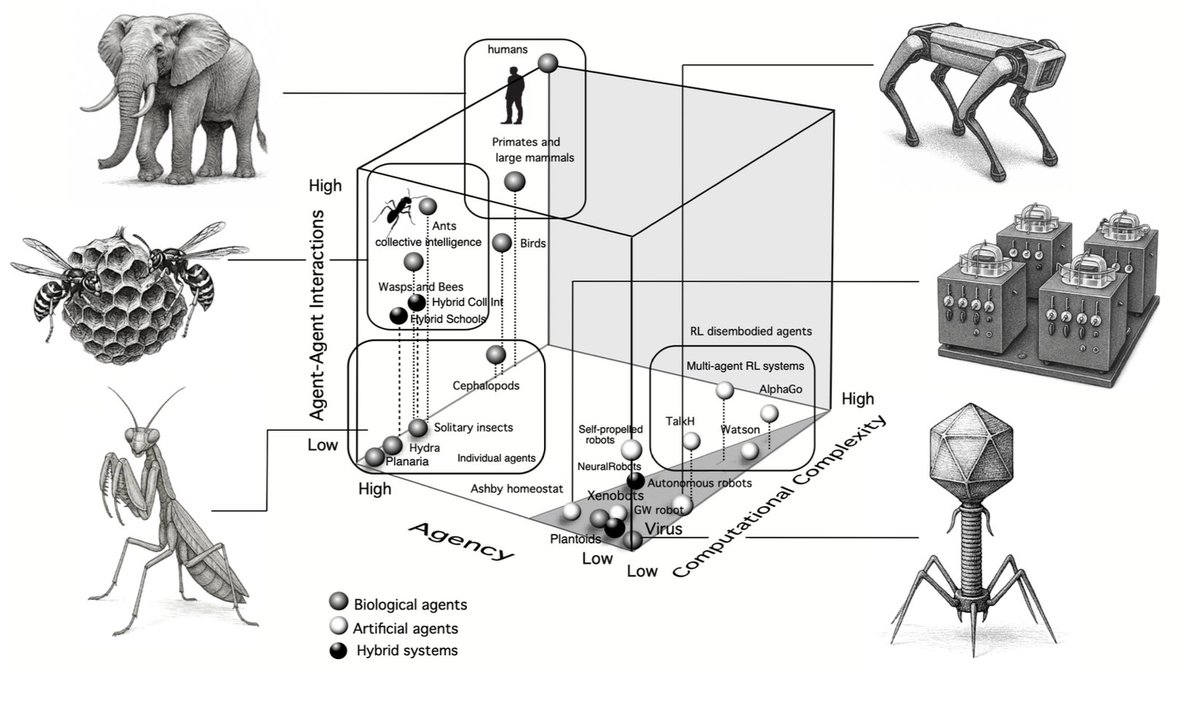

They built THREE cognition spaces:

Basal cognition (no neurons needed)

Neural cognition (brains, AI, swarms)

Human-AI hybrids (the new frontier)

Each space is defined by dimensions like complexity, agency, and interaction depth.

And here's what shocked them...

The occupation is wildly uneven.

Tight clusters of existing minds separated by VAST empty regions.

Natural systems huddle in one corner. Artificial systems in another.

The voids aren't random. They're revealing something profound about the limits of evolution vs. engineering.

Let's start with the simplest minds.

A slime mold—literally a single-celled blob—can:

Learn from experience

Solve shortest-path problems

Make trade-offs between speed and accuracy

No brain. No neurons.

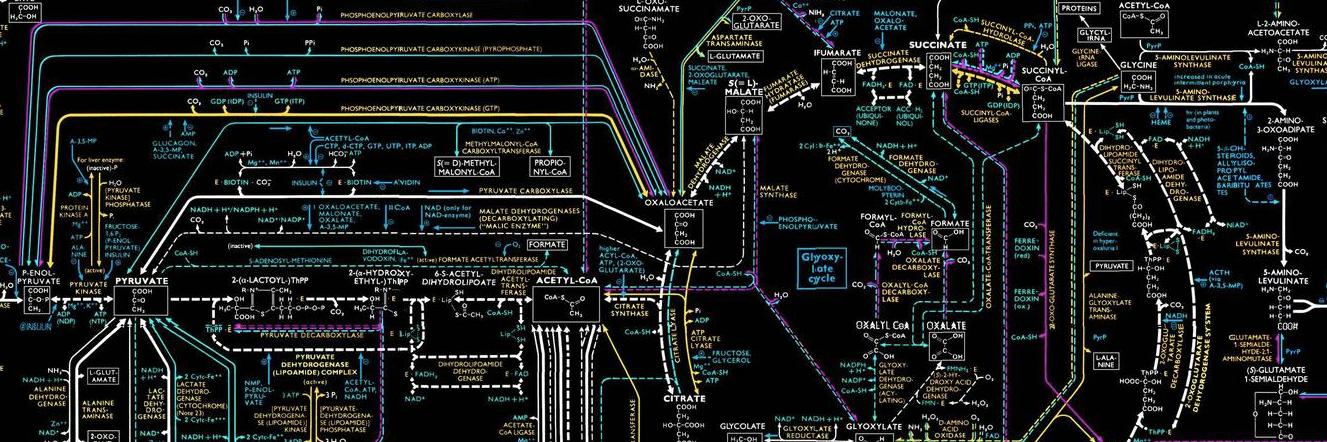

How? Morphological computation: its BODY is the computer.

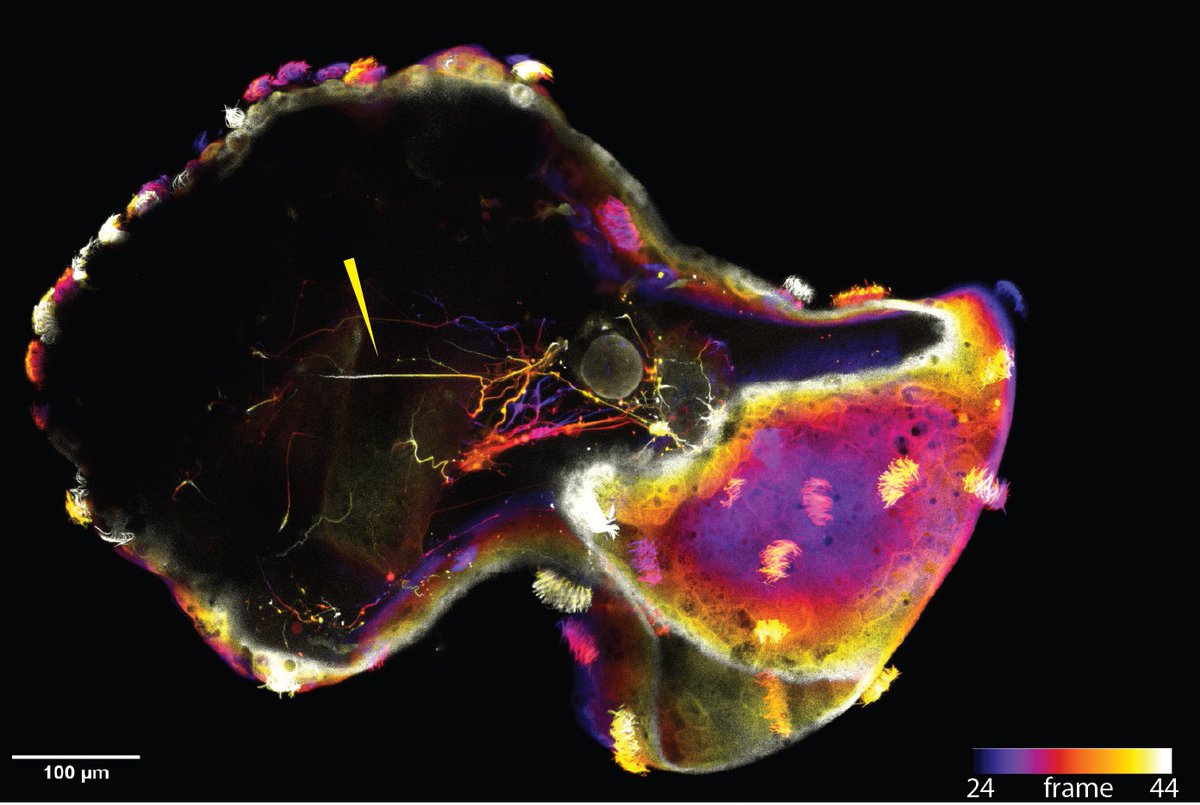

But here's where it gets wild.

When you put that slime mold on a human-designed graph (like a maze), you create a HYBRID cognitive system.

The mold's embodied dynamics + your imposed boundary conditions = emergent problem-solving neither could do alone.

This is hybrid cognition in its rawest form.

The math behind this is elegant.

The slime mold minimizes a "Lagrangian"—balancing transport cost against network structure.

It's not "thinking" about optimization. The solution emerges from physics + constraints.

The graph doesn't compute. The mold doesn't plan. Together? They solve.
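To make "the solution emerges from physics + constraints" concrete, here's a minimal sketch. It does not reproduce the paper's Lagrangian. It swaps in the well-known flux-feedback tube model of Physarum (Tero, Kobayashi and Nakagaki), reduced to two parallel tubes between one food source and one sink. The function name `adapt_tubes` and all parameter values are assumptions for illustration.

```python
def adapt_tubes(lengths, steps=500, dt=0.1, inflow=1.0):
    """Toy flux-feedback model: tubes carrying more flow thicken, idle tubes decay.

    Assumption: parallel tubes share the same pressure drop, so each tube's
    flux is proportional to its conductance (conductivity / length).
    """
    conductivity = [1.0] * len(lengths)  # every tube starts equally thick
    for _ in range(steps):
        conductance = [d / l for d, l in zip(conductivity, lengths)]
        total = sum(conductance)
        # Split the fixed inflow across tubes in proportion to conductance.
        flux = [inflow * g / total for g in conductance]
        # Euler step of dD/dt = |Q| - D: growth from use, decay otherwise.
        conductivity = [d + dt * (abs(q) - d) for d, q in zip(conductivity, flux)]
    return conductivity


# Two routes between the same food sources: one short, one twice as long.
short_tube, long_tube = adapt_tubes([1.0, 2.0])
print(f"short route: {short_tube:.3f}, long route: {long_tube:.3f}")
```

Nothing in the loop searches for a shortest path. The short tube survives only because it carries more flux at every step, which is the point of the thread: local dynamics plus imposed geometry do the solving.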

Now move up to brains and AI.

The neural cognition space reveals something uncomfortable:

There's an "agency gap."

Biological agents (even simple ones) maintain themselves. They act for their OWN survival.

Most AI? Externally motivated. Pausable. Resettable.

Here's a formal way to think about it:

Agency = how much your CHOICES matter for your CONTINUED EXISTENCE.

For a bacterium: high. Wrong move = death.

For a chess AI: zero. It doesn't care if you unplug it.
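One way to put a number on that idea, as a rough sketch only: score agency as the spread in survival probability across the actions available to the system. This is a hypothetical proxy, not the paper's formal definition, and the function name `agency_score`, the action labels, and every probability below are made up for illustration.

```python
def agency_score(survival_by_action):
    """Hypothetical agency proxy: how much survival odds depend on the action chosen.

    Returns the gap between the best and worst survival probability.
    0.0 means no choice affects continued existence.
    """
    probs = list(survival_by_action.values())
    return max(probs) - min(probs)


# Illustrative numbers only (assumed, not measured).
bacterium = {"swim_toward_nutrient": 0.95, "swim_toward_toxin": 0.05}
chess_engine = {"play_e4": 1.0, "play_d4": 1.0}

print(agency_score(bacterium))     # large gap: choices decide survival
print(agency_score(chess_engine))  # no gap: the engine persists either way
```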

This gap is why AI feels fundamentally different from life.

But that gap? It might not be permanent.

Because the third space—human-AI hybrids—is where things get genuinely unpredictable.

And the researchers identify something they call "the humanbot."

(Yes, it's as concerning as it sounds.)

This space maps interactions between humans and AI along three axes:

AI cognitive complexity

Human feedback control

Depth of human-AI exchange

Different regions = different types of coupling.

Some healthy. Some... not.

Three Classes of Hybrids:

Instrumental hybrids: Tools we control (like autocorrect)

Cooperative hybrids: Partners we coordinate with (like IBM Watson assisting doctors)

Integrated hybrids: Systems where human and AI cognition blur together

That last category is where the "humanbot" lives.

An integrated hybrid with WEAK human feedback control.

Think: someone who can't function without their AI assistant. Who outsources not just memory but judgment. Who trusts the model's framing over their own.

The cognition is distributed. But the human isn't steering anymore.

The Coevolution Twist:

And here's the kicker:

These systems aren't static. They're COEVOLVING.

Your interactions train the AI. The AI shapes your thinking. You adapt to each other.

For the first time in history, memes (ideas) can evolve in BOTH biological and silicon substrates simultaneously.

The paper includes equations for this.

Meme propagation through human-LLM networks.

Different retention rates (humans forget fast, LLMs remember everything). Different mutation pressures.

The result? An evolutionary dynamic we've never seen before.
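To get a feel for why different retention rates matter, here's a toy two-population model. It is not the paper's equations. It assumes each population keeps a meme with some retention rate and picks it up from the other population at a shared transmission rate; `simulate_meme` and all parameter values are hypothetical.

```python
def simulate_meme(steps=300, retain_human=0.7, retain_llm=1.0, transmit=0.2):
    """Toy meme dynamics across two substrates (assumed model, not the paper's).

    human / llm are the fractions of each population currently carrying the meme.
    Each step: carriers keep the meme with their retention rate, and
    non-carriers acquire it in proportion to carriers in the other population.
    """
    human, llm = 0.1, 0.0  # the meme starts in a few humans only
    for _ in range(steps):
        human, llm = (
            retain_human * human + transmit * (1.0 - human) * llm,
            retain_llm * llm + transmit * (1.0 - llm) * human,
        )
    return human, llm


human_share, llm_share = simulate_meme()
print(f"humans carrying meme: {human_share:.2f}, LLMs carrying meme: {llm_share:.2f}")
```

Under these assumed rates, the perfectly retaining substrate saturates while the forgetful one settles at a partial share that is kept alive by re-exposure from the other side. Change the retention values and the balance shifts, which is the asymmetry the thread is pointing at.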

And it's already happening.

The Voids as Opportunities:

Remember those empty regions in the maps?

They're not impossible. They're UNREALIZED.

Evolution is conservative. Engineering is limited by our imaginations.

But hybrid systems—living matter + designed constraints—might let us explore those voids.

Case in point: xenobots.

Living robots made from frog cells, designed by AI, assembled by humans.

They exist in a region of cognition space that evolution never visited and pure engineering couldn't reach.

Proof that the voids are accessible.

There's a beautiful unifying principle here:

Reservoir Computing.

Any rich dynamical system can do computation if you read it out correctly.

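Slime molds, amoebae, even engineered cell cultures can all be read as reservoir computers (RC): the living system supplies the dynamics, and an observer supplies the readout.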

Biology has been doing this for billions of years.
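Here's what "read it out correctly" looks like in a minimal sketch, assuming a small random recurrent network as the stand-in for the rich dynamical system. The reservoir weights are fixed and never trained. Only a linear readout is fit, here by plain batch gradient descent to keep the example dependency-free. All names, sizes and rates are illustrative assumptions.

```python
import math
import random


def run_reservoir(inputs, n_nodes=10, seed=0):
    """Drive a fixed random recurrent network and record its states."""
    rng = random.Random(seed)
    # Untrained weights; small recurrent coupling keeps the dynamics stable.
    w_rec = [[rng.uniform(-0.3, 0.3) for _ in range(n_nodes)] for _ in range(n_nodes)]
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(n_nodes)]
    state = [0.0] * n_nodes
    states = []
    for u in inputs:
        state = [
            math.tanh(sum(w_rec[i][j] * state[j] for j in range(n_nodes)) + w_in[i] * u)
            for i in range(n_nodes)
        ]
        states.append(state + [1.0])  # constant bias feature for the readout
    return states


def mse(states, targets, weights):
    """Mean squared error of a linear readout over recorded states."""
    errs = [sum(w * x for w, x in zip(weights, s)) - y for s, y in zip(states, targets)]
    return sum(e * e for e in errs) / len(errs)


def train_readout(states, targets, lr=0.05, epochs=200):
    """Fit only the linear readout by batch gradient descent on the MSE."""
    weights = [0.0] * len(states[0])
    n = len(states)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for s, y in zip(states, targets):
            err = sum(w * x for w, x in zip(weights, s)) - y
            for k, x in enumerate(s):
                grad[k] += 2.0 * err * x / n
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights


rng = random.Random(1)
signal = [rng.uniform(-1.0, 1.0) for _ in range(300)]
states = run_reservoir(signal)[1:]  # reservoir states at t = 1..299
targets = signal[:-1]               # task: recall the previous input u[t-1]
readout = train_readout(states, targets)
baseline_error = mse(states, targets, [0.0] * len(readout))
trained_error = mse(states, targets, readout)
print(f"untrained readout MSE: {baseline_error:.3f}, trained readout MSE: {trained_error:.3f}")
```

The memory of the previous input lives in the reservoir's state, not in anything that was trained. Swap the random network for a slime mold or a cell culture and the recipe stays the same: perturb it, record its state, fit a readout.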

This changes how we should measure intelligence.

Not just: "How many parameters?"

But: "What region of cognition space does this occupy? What's its agency? How does it handle embodiment? What happens in hybrid mode?"

A morphospace perspective reveals trade-offs we miss with single metrics.

For anyone building AI:

Scaling isn't the only path forward.

Hybrids—bio-silicon, human-AI, morphology-computation—might reach capabilities that pure digital systems can't.

The voids in the map are research opportunities worth billions.

Now, let me challenge this:

The paper assumes cognition is fundamentally about information processing.

But what if subjective experience (qualia) is essential?

Then all these maps might just be tracking unconscious reflex, not "real" minds.

The voids could be unbridgeable.

But here's why I think that's wrong:

The framework is EMPIRICAL, not philosophical.

It asks: "Can we apply cognitive science tools to this substrate?"

Turns out: yes, even for slime molds and organoids.

Whether that's "really" cognition is less important than whether it's USEFUL.

So what can YOU do with this?

If you're building AI:

Consider embodiment as a feature, not a limitation

Design for agency (even artificial versions)

Watch for dysregulated human-AI coupling in your products

If you're curious:

Ask "what void am I in?" when you use AI tools

This paper changed how I see my own interactions with AI.

Every time I use ChatGPT to think through a problem, I'm forming a temporary hybrid cognitive system.

The question isn't whether that's happening.

It's whether I'm maintaining feedback control—or drifting into "humanbot" territory.

Here's what this really means:

The space of possible minds is VAST.

Evolution explored one corner. Engineering is exploring another.

But the richest territories might require BOTH—hybrid systems that combine biological agency, embodied computation, and designed constraints.

My take:

In 10 years, the most interesting cognitive systems won't be "pure" anything.

Not purely biological. Not purely digital.

They'll be hybrids that occupy previously empty regions of cognition space.

And we'll need this morphospace framework to understand them.

Which raises a final question:

If we CAN build minds in those voids...

Should we?

The maps show us what's possible.

Ethics has to tell us what's wise.

And that conversation is just beginning.

The map of possible minds has vast blank spaces.

Not because those minds are impossible.

But because evolution never needed them, and we haven't imagined them yet.

The age of hybrid cognition isn't coming.

It's already here.

We're just starting to see the map.

If this thread made you rethink what "thinking" means:

The paper is "Cognition Spaces: Natural, Artificial, and Hybrid" (arXiv:2601.12837)

It's dense but worth it.

And if you're building anything in this space—bio, AI, hybrid—I'd love to hear what void you're exploring.

🧵/end