Mustafa Işık

@realMustafaIsik

Research at @synthesiaIO. Previously at @tavus, @hedra_labs, @eth_en, @AdobeResearch and @TU_Muenchen.

Our new paper performs exact volume rendering at 30FPS@720p, giving us the highest detail 3D-consistent NeRF! Paper: arxiv.org/abs/2410.01804 Website: half-potato.gitlab.io/posts/ever

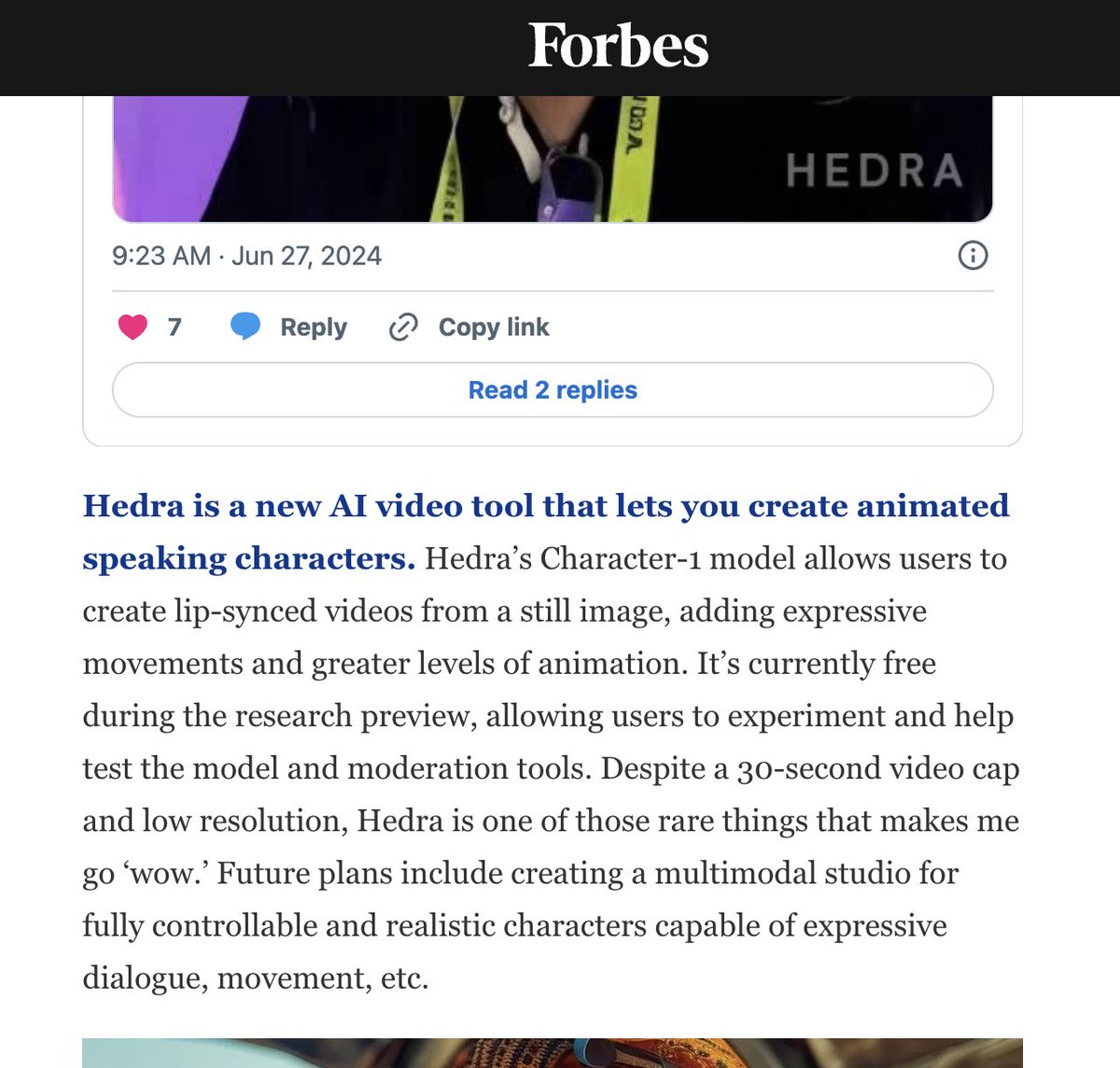

Introducing the research preview of our foundation model, Character-1. Available today at hedra.com (on desktop and mobile).

* Infinite duration (30s for open preview)
* 90s generated per 60s (if our H100 supply holds)
* Expressive talking, singing, rapping characters

This is the first step in Hedra’s mission to build a multimodal creation studio accessible for everyone, giving creators complete control over emotional dialogue, movement, and (yes) entire worlds. Pro tips in thread:

Introducing Sora, our text-to-video model. Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. openai.com/sora Prompt: “Beautiful, snowy Tokyo city is bustling. The camera moves through the bustling city street, following several people enjoying the beautiful snowy weather and shopping at nearby stalls. Gorgeous sakura petals are flying through the wind along with snowflakes.”

Animatable Gaussians: Learning Pose-dependent Gaussian Maps for High-fidelity Human Avatar Modeling. Project page: animatable-gaussians.github.io