ricursive

177 posts

@ricursive Please leave the field of computer programming and never ever think about re-entering it

@rfleury I think it depends on how the model was trained. Take Huffman coding as an example: the most common recurring pattern gets the fewest bits. Maybe a single sentence or even a single word is enough to reproduce that pattern verbatim or in different permutations.
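
A minimal sketch of the Huffman point above, assuming plain character frequencies as the "patterns". The function name `huffman_code_lengths` is made up for illustration; it only computes code lengths, not the actual bit strings.

```python
import heapq
from collections import Counter


def huffman_code_lengths(text):
    """Return {symbol: code length in bits} for a Huffman code built from text."""
    freq = Counter(text)
    if not freq:
        return {}
    if len(freq) == 1:
        # A lone symbol still needs one bit to be written out.
        return {next(iter(freq)): 1}

    # Heap entries are (weight, tie_breaker, {symbol: depth so far}).
    # The tie_breaker keeps Python from ever comparing the dicts.
    heap = [(w, i, {s: 0}) for i, (s, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)

    while len(heap) > 1:
        # Merge the two lightest subtrees; every symbol inside moves one level deeper.
        w1, _, a = heapq.heappop(heap)
        w2, _, b = heapq.heappop(heap)
        merged = {s: d + 1 for s, d in {**a, **b}.items()}
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1

    return heap[0][2]


lengths = huffman_code_lengths("aaaaaaaabbbc")
print(lengths)
```

In this input `a` occurs 8 times out of 12, so it ends up with the shortest code, while the rarer `b` and `c` get longer ones.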

“Vibecoding”, i.e. ~hands-off usage of LLMs to rapidly generate code without regard for the actual code’s contents, for novel applications, can literally never be non-slop, because, as I’ve described before, there are not enough bits of information content in prompts to express the user’s exact desires in sufficient detail, and the desired solution is not expressed in training data (due to the problem’s novelty).

Only a sentient human developer can relate to another human user to determine what is desirable, and design the software such that it accomplishes this desirable outcome, and carefully verify that it is doing that, rather than something else (potentially undesirable).

This is true even for the combinatoric space implied by the training data, for instance if the novel problem is merely novel in that it combines pieces of existing solutions. There needs to be a guiding force to know what to combine and how.

The more detailed the prompt becomes, the more human oversight (the more human-guided round trips with the LLM), the closer it becomes to actual code (i.e. detailed execution instructions for a computer).

Ryan Fleury@rfleury

@yacineMTB Contradiction of terms

@rfleury @bkaradzic Like he said, if you were to repurpose this for WebGL. I had horrible performance issues with state changes years ago too, until I cached them. For glBindTexture that's probably fine, but maybe Chromium's or Firefox's GPU "driver" shim has fixed it by now.
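
A rough sketch of what caching state changes can look like, written in Python for brevity rather than against a real WebGL context. `TextureBindCache` and `bind_fn` are hypothetical names; `bind_fn` stands in for whatever actually issues the bind call.

```python
class TextureBindCache:
    """Skips bind calls when the requested texture is already bound to that unit."""

    def __init__(self, bind_fn):
        # bind_fn is a hypothetical stand-in for the real (expensive) bind call.
        self._bind_fn = bind_fn
        # texture unit -> handle currently bound there; a missing entry means
        # nothing is bound, which is treated the same as None.
        self._bound = {}

    def bind(self, unit, texture):
        """Bind texture to unit; return True only if a real call was issued."""
        if self._bound.get(unit) == texture:
            return False  # redundant state change, skip it
        self._bind_fn(unit, texture)
        self._bound[unit] = texture
        return True


calls = []
cache = TextureBindCache(lambda unit, tex: calls.append((unit, tex)))
cache.bind(0, "albedo")
cache.bind(0, "albedo")  # skipped: same texture on the same unit
cache.bind(0, "normal")
cache.bind(1, "normal")  # different unit, so this one goes through
print(calls)
```

The cache is only correct if every bind goes through it; anything that changes bindings behind its back would need to invalidate `_bound`.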

@bkaradzic This still causes significant performance penalties on WebGL? I mean, it should probably not be an issue if you are not switching pipelines all the time? Which is ideally what you have, if you batch things appropriately.

@TravelerOfCode @Misfortuneee I don't trust AI blindly in my domain at all but that's why I make use of it. I know when it's fucking shit up. It's also not true, you don't need to be good at drawing things to recognize good art.

@Misfortuneee everyone trusts ai in the domain they cant evaluate. its always the other side that looks unreliable. blind spots are symmetrical

@akumatekiz Sorry, translation seems to have failed us. Can you elaborate?

Apparently animating more than ~20 characters in modern graphics engines is a big deal😅

324 skinned characters animating independently in WebGPU (browser)

Each character is animating with a completely separate skeleton and timeline. No two characters sample the same time.

Each character has 66 bones and 28,106 triangles

I want to stress that there is no instancing of any kind here, and CPU is not involved at all.

@SebAaltonen I don't know how useful AGENTS.md files are. I never use them myself; I just assume that anything you tell it not to do, it might do anyway, because of ironic process theory. Sure, without the file it could also do those things, but I think that's less likely. I agree that you should review LLM code, though.

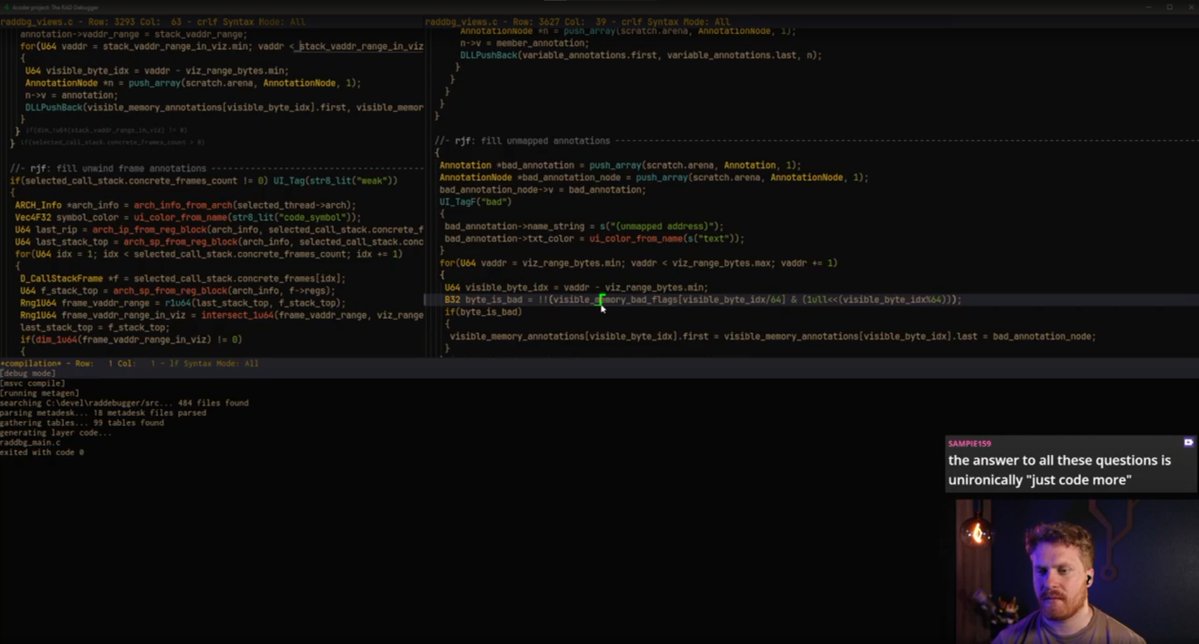

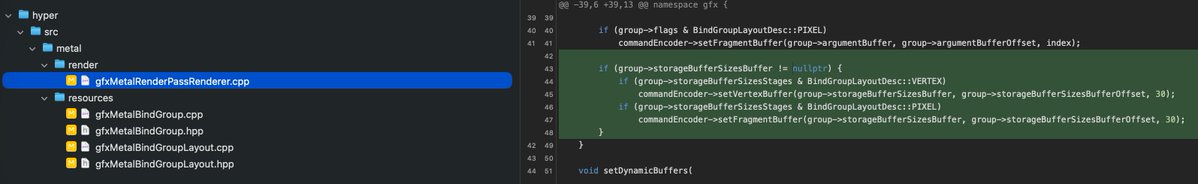

You have to review all LLM code!

Codex 5.5 tried to push this awful hack to our Metal backend when it was coding font rendering. It decided to implement a hacky "robust buffer access" style OOM check inside the shader and hacked our whole Metal binding architecture to add a special bind group slot 30 (hardcoded) to deliver the sizes of all buffer bindings. This of course made the binding model super slow and required extra data for each buffer.

@abdimoalim_ I don't think it matters. The problem would be having abundance to work on computers instead of surviving. Convincing people to make computers is the difficult part. There wouldn't be enough time and resources either way so generations would forget until we start over or don't.

@thsottiaux Did you recently reduce the Cybersecurity warnings? I kept getting these warnings all the time and it even stops Codex too. This week has been awful and it seemingly stopped but I'm not sure.

@TheGingerBill The same reason you started making your own programming language perhaps.

I don't know if a lot of people have thought about why this happened.

To make Linux viable for the layman, Valve had to make Proton (derived from Wine), so that the Win32 API became the first and only stable ABI on Linux.

Why did Linux distro devs historically not care about a stable ABI?

sudox@kmcnam1

@nicbarkeragain Are we calling common sense techniques now? Has AI fried everyone's brain?

Just a heads up that you should never be rendering 10,000 lines of anything, especially if all the lines are the same height, as in code.

List virtualisation is a very old and simple technique.

You can experiment with the below at nicbarker.com/virtual-scroll…

GitHub@github

You know how you can render a 10,000-line diff without melting the browser? By focusing on simplicity. 🧵
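
As a minimal sketch of the list virtualisation idea for fixed-height rows (not the code behind either linked demo), the core is just arithmetic on the scroll offset. The function name `visible_range` and the `overscan` parameter are assumptions made for this example.

```python
def visible_range(scroll_top, viewport_height, row_height, row_count, overscan=2):
    """Return (first, last, offset_px) for the rows worth rendering.

    first/last form a half-open range [first, last) of row indices, and
    offset_px is how far down the first rendered row should be positioned.
    All pixel values are assumed to be non-negative integers.
    """
    if row_count <= 0 or row_height <= 0:
        return (0, 0, 0)

    bottom = scroll_top + viewport_height
    # Index of the first row touching the viewport, minus a few spare rows.
    first = max(0, scroll_top // row_height - overscan)
    # Ceiling division gives the row just past the bottom edge, plus spare rows.
    last = min(row_count, -(-bottom // row_height) + overscan)
    first = min(first, last)
    return (first, last, first * row_height)


first, last, offset = visible_range(
    scroll_top=2000, viewport_height=600, row_height=20, row_count=10_000
)
print(first, last, offset, last - first)
```

With these example numbers only 34 of the 10,000 rows are rendered at a time; the rest are represented by empty space of the right height.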

@trq212 When you forget to launch Claude with --dangerously-skip-permissions and then close it and relaunch with --resume does it invalidate the entire cache? For some tasks I run on a VM I sometimes forget to do this and then reopen it quickly with --resume, does the cache invalidate?

we're doing a lot more of this, hunting down some of the most annoying bugs in Claude Code

let me know if you have any white whales

ClaudeDevs@ClaudeDevs

In the last four Claude Code CLI releases, we’ve shipped 50+ stability and performance fixes. Faster resume, stable auth, lower memory, fewer hangs: 🧵

@SebAaltonen Have you tried GPT-5.3-Codex-Spark? I heard it was a bit worse but faster and more finetuned for code. Not sure if I want to waste my time with it though.

@saynothingetal @francoisfleuret It'll just move the bottleneck and eventually clog it up at the senior software engineer, and you're also burning him out if you keep sending him slop. You already see it happening with open-source software PRs: maintainers no longer accept AI-slop PRs.

@francoisfleuret You’re deluding yourself. I’ve seen engineers who were about to be fired become passable after adopting AI so long as a senior reviewed their code with scrutiny.
