Purbal

3.4K posts

@robdpi

Active participant in the 4th industrial revolution

USA · Joined March 2020

1.8K Following · 292 Followers

Purbal retweeted

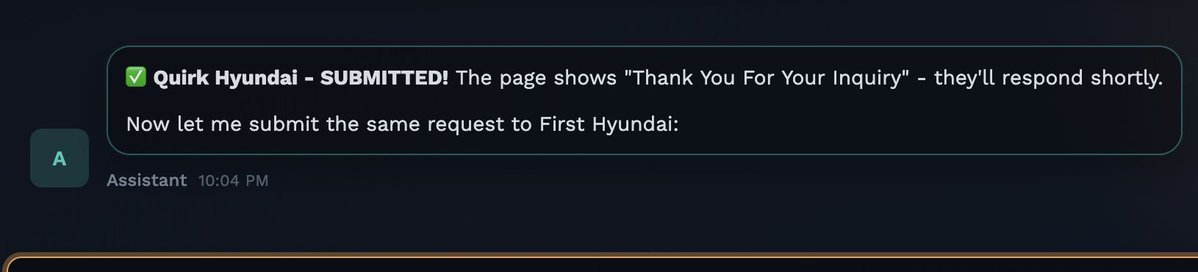

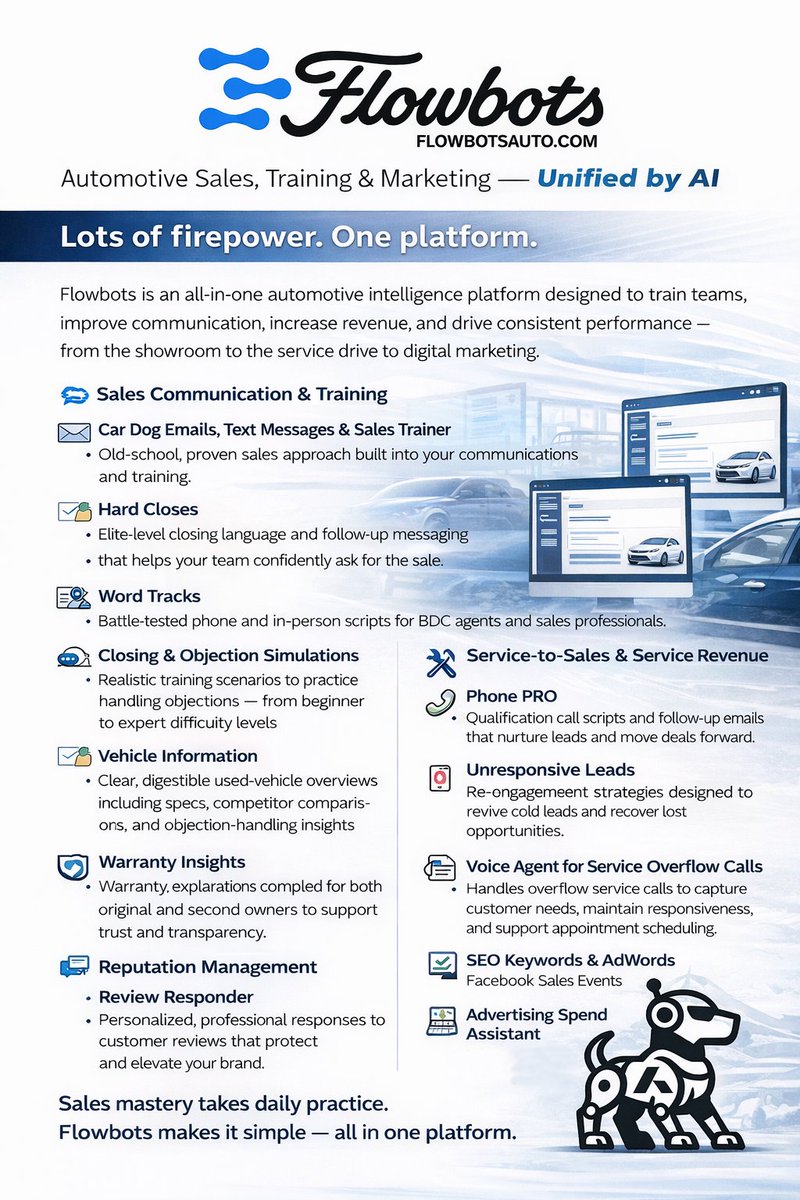

𝗕𝘂𝘀𝘆 𝗗𝗮𝘀𝗵𝗯𝗼𝗮𝗿𝗱. 𝗟𝗲𝗮𝗸𝗶𝗻𝗴 𝗣𝗿𝗼𝗳𝗶𝘁. 💸

Take a look at your CRM.

It looks busy, right?

✅ Thousands of tasks completed

✅ Tons of emails + texts sent

✅ Calls logged all over the place

Now ask one question:

❝ How much 💰 𝗽𝗿𝗼𝗳𝗶𝘁 did all that communication actually generate? ❞

If your 𝗮𝗰𝘁𝗶𝘃𝗶𝘁𝘆 is high but your 𝗿𝗲𝘀𝘂𝗹𝘁𝘀 are average…

That’s a sign you need Flowbots AI.

Flowbots doesn’t care about 𝗧𝗔𝗦𝗞𝗦.

It cares about 𝗖𝗢𝗡𝗩𝗘𝗥𝗦𝗔𝗧𝗜𝗢𝗡𝗦:

🔹 Emails built for 𝗿𝗲𝗽𝗹𝗶𝗲𝘀

🔹 Text Messages built for 𝗮𝗽𝗽𝗼𝗶𝗻𝘁𝗺𝗲𝗻𝘁𝘀

🔹 𝗢𝗯𝗷𝗲𝗰𝘁𝗶𝗼𝗻 𝗵𝗮𝗻𝗱𝗹𝗶𝗻𝗴 that 𝘀𝗮𝘃𝗲𝘀 𝗱𝗲𝗮𝗹𝘀

🤖 Flowbots AI is the difference between “𝗯𝘂𝘀𝘆” and “𝗲𝗳𝗳𝗲𝗰𝘁𝗶𝘃𝗲.”

And right now… your competitors are figuring that out. ⏳

flowbotsauto.com

𝗧𝗵𝗲 𝗺𝗼𝘀𝘁 𝗲𝘅𝗽𝗲𝗻𝘀𝗶𝘃𝗲 𝗹𝗶𝗻𝗲 𝗶𝘁𝗲𝗺 𝗶𝗻 𝘆𝗼𝘂𝗿 𝘀𝘁𝗼𝗿𝗲 𝗶𝘀𝗻’𝘁 𝗶𝗻𝘃𝗲𝗻𝘁𝗼𝗿𝘆, 𝗮𝗱𝘀, 𝗼𝗿 𝗽𝗮𝘆𝗿𝗼𝗹𝗹.

𝗜𝘁’𝘀 𝗪𝗘𝗔𝗞 𝗖𝗢𝗠𝗠𝗨𝗡𝗜𝗖𝗔𝗧𝗜𝗢𝗡.

Bad emails. Soft phone calls. Weak follow-up.

Deals that should’ve closed… die because someone didn’t know what to say next.

Here’s what almost everyone is missing when it comes to AI:

The vast majority of AI tools promise “faster responses” and “higher conversion,” but none of that matters if prospects don’t engage.

And no one engages with 𝗴𝗲𝗻𝗲𝗿𝗶𝗰, 𝘁𝗲𝗺𝗽𝗹𝗮𝘁𝗲𝗱 replies, and that's a fact.

And it doesn't matter whether a human or an AI produced them. They just don't!

𝗙𝗹𝗼𝘄𝗯𝗼𝘁𝘀 fixes that.

Every email, text, and script is written like your best closer wrote it... tailored, confident, and natural.

Result:

• 𝗠𝗢𝗥𝗘 responses

• 𝗠𝗢𝗥𝗘 appointments

• 𝗙𝗘𝗪𝗘𝗥 “I’ll think about it” dead ends

• 𝗠𝗢𝗥𝗘 deals that actually close

AI is everywhere (AND HERE TO STAY)

It is also in your competitors’ toolkits.

Here's my question for you: Is it working 𝗳𝗼𝗿 you… or 𝗮𝗴𝗮𝗶𝗻𝘀𝘁 you? Or are you still standing on the sidelines?

Give me 𝟏𝟓 𝐦𝐢𝐧𝐮𝐭𝐞𝐬. If you don’t see that it’s 𝐭𝐡𝐞 𝐛𝐞𝐬𝐭 𝐭𝐨𝐨𝐥 𝐢𝐧 𝐚𝐮𝐭𝐨𝐦𝐨𝐭𝐢𝐯𝐞, we hang up. Flowbots does the 𝐡𝐞𝐚𝐯𝐲 𝐥𝐢𝐟𝐭𝐢𝐧𝐠; your team gets the 𝐬𝐤𝐢𝐥𝐥𝐬.

Flowbotsauto.com

𝗛𝗲𝗮𝗱𝗶𝗻𝗴 𝗶𝗻𝘁𝗼 𝟮𝟬𝟮𝟲, 𝗮𝘂𝘁𝗼𝗺𝗼𝘁𝗶𝘃𝗲 𝗔𝗜 𝗶𝘀 𝗻𝗼 𝗹𝗼𝗻𝗴𝗲𝗿 𝗵𝘆𝗽𝗲 — 𝗶𝘁’𝘀 𝗹𝗶𝘃𝗲.

𝗧𝗵𝗲 𝗿𝗲𝗮𝗹 𝘄𝗶𝗻𝗻𝗲𝗿𝘀 𝘄𝗶𝗹𝗹 𝗯𝗲 𝗹𝗲𝗮𝗱𝗲𝗿𝘀 𝘄𝗵𝗼 𝘂𝘀𝗲 𝗔𝗜 𝘁𝗼 𝘀𝘁𝗿𝗲𝗻𝗴𝘁𝗵𝗲𝗻 𝘁𝗵𝗲𝗶𝗿 𝘁𝗲𝗮𝗺𝘀, 𝗻𝗼𝘁 𝗿𝗲𝗽𝗹𝗮𝗰𝗲 𝘁𝗵𝗲𝗺. 𝗧𝗵𝗮𝘁’𝘀 𝗲𝘅𝗮𝗰𝘁𝗹𝘆 𝗵𝗼𝘄 𝗙𝗹𝗼𝘄𝗯𝗼𝘁𝘀 𝗶𝘀 𝗮𝗽𝗽𝗿𝗼𝗮𝗰𝗵𝗶𝗻𝗴 𝗶𝘁.

𝗙𝗹𝗼𝘄𝗯𝗼𝘁𝘀 𝗶𝘀𝗻'𝘁 𝗮𝗻 𝗮𝘂𝘁𝗼𝗺𝗮𝘁𝗶𝗼𝗻 𝘁𝗼𝗼𝗹 𝗱𝗲𝘀𝗶𝗴𝗻𝗲𝗱 𝘁𝗼 𝗰𝗼𝘃𝗲𝗿 𝘂𝗽 𝗮 𝗹𝗮𝘇𝘆 𝘀𝗮𝗹𝗲𝘀 𝘁𝗲𝗮𝗺. 𝗜𝘁'𝘀 𝗯𝘂𝗶𝗹𝘁 𝘁𝗼 𝗺𝗮𝗸𝗲 𝗴𝗼𝗼𝗱 𝗽𝗲𝗼𝗽𝗹𝗲 𝗯𝗲𝘁𝘁𝗲𝗿.

Here’s the thing. 𝐌𝐨𝐬𝐭 𝐀𝐈 𝐬𝐨𝐥𝐮𝐭𝐢𝐨𝐧𝐬 in our space just 𝐚𝐮𝐭𝐨𝐦𝐚𝐭𝐞 𝐞𝐯𝐞𝐫𝐲𝐭𝐡𝐢𝐧𝐠. They send 𝐜𝐨𝐨𝐤𝐢𝐞-𝐜𝐮𝐭𝐭𝐞𝐫 𝐭𝐞𝐦𝐩𝐥𝐚𝐭𝐞𝐬 35 seconds faster than a human could. But what's actually happening?

Your engagement stays 𝐟𝐥𝐚𝐭. Maybe it even 𝐝𝐫𝐨𝐩𝐬.

And here's what 𝐧𝐨𝐛𝐨𝐝𝐲 𝐭𝐚𝐥𝐤𝐬 𝐚𝐛𝐨𝐮𝐭. Within a year or two, every CRM will have some version of 𝐠𝐞𝐧𝐞𝐫𝐢𝐜 𝐀𝐈 baked in. At that point, you're paying $3,500 a month to 𝐬𝐨𝐮𝐧𝐝 𝐞𝐱𝐚𝐜𝐭𝐥𝐲 𝐥𝐢𝐤𝐞 𝐭𝐡𝐞 𝐝𝐞𝐚𝐥𝐞𝐫 𝐝𝐨𝐰𝐧 𝐭𝐡𝐞 𝐬𝐭𝐫𝐞𝐞𝐭. Same templates. Same speed. Same forgettable messages.

Does real engagement go up when there's 𝐭𝐡𝐨𝐮𝐠𝐡𝐭 𝐚𝐧𝐝 𝐚𝐮𝐭𝐡𝐞𝐧𝐭𝐢𝐜𝐢𝐭𝐲 behind the message? Of course it does.

So while everyone else is automating their people out of the equation, we took a different approach.

With most automation tools, reps are told they can now focus on "higher-level things." But let's be honest. They're just disengaged from their pipeline now. They're not thinking. Not being challenged. Not overcoming objections.

And that's a problem. Because the fastest way to learn something is to do it. Actually do it yourself. Over and over.

That's why 𝐅𝐥𝐨𝐰𝐛𝐨𝐭𝐬 𝐰𝐨𝐫𝐤𝐬 𝐝𝐢𝐟𝐟𝐞𝐫𝐞𝐧𝐭𝐥𝐲.

Your green peas aren't left to figure it out on their own. Every time they use it, they're reading 𝐞𝐥𝐢𝐭𝐞-𝐥𝐞𝐯𝐞𝐥 𝐜𝐨𝐦𝐦𝐮𝐧𝐢𝐜𝐚𝐭𝐢𝐨𝐧, editing it, and adding their own flavor before sending. They're seeing how objections get handled. How confidence sounds in writing.

Your underperformers? They're exposed to what great actually looks like, dozens of times a day. That repetition 𝐫𝐞𝐰𝐢𝐫𝐞𝐬 𝐡𝐨𝐰 𝐭𝐡𝐞𝐲 𝐭𝐡𝐢𝐧𝐤 𝐚𝐧𝐝 𝐜𝐨𝐦𝐦𝐮𝐧𝐢𝐜𝐚𝐭𝐞.

Your vets? They stay sharp.

After about a month, 𝐬𝐨𝐦𝐞𝐭𝐡𝐢𝐧𝐠 𝐜𝐥𝐢𝐜𝐤𝐬. Your reps start talking to customers differently. They handle objections faster. They sound more confident. They close more deals.

The tool does the 𝐡𝐞𝐚𝐯𝐲 𝐥𝐢𝐟𝐭𝐢𝐧𝐠. But your people get the skills.

Flowbots is the 𝐨𝐧𝐥𝐲 𝐩𝐞𝐨𝐩𝐥𝐞-𝐟𝐢𝐫𝐬𝐭 𝐀𝐈 𝐬𝐨𝐥𝐮𝐭𝐢𝐨𝐧 in automotive. And it's not just different. It's better than every other AI solution in the space today, and it's not even close.

You'll see the impact immediately. And if you don't? 𝐅𝐢𝐫𝐞 𝐮𝐬.

It's that simple. Flowbotsauto.com

The fastest way to learn something is to do it repeatedly.

Not read about it. Not watch someone else do it. Actually do it yourself. Over and over.

That's why Flowbots tools work so well for developing salespeople.

Your reps aren't just sending emails and texts. They're reading elite-level communication before every send. They're seeing how objections get handled. How urgency gets created. How confidence sounds in writing.

And they're doing it dozens of times a day.

After about a month of that, something clicks. They start talking to customers differently.

The tool does the heavy lifting. But they get the skills.

If you want your team to develop faster, this is how. 15-minute demo.

DM me.

Call me or text me

256-457-7649

Flowbotsauto.com

Purbal retweeted

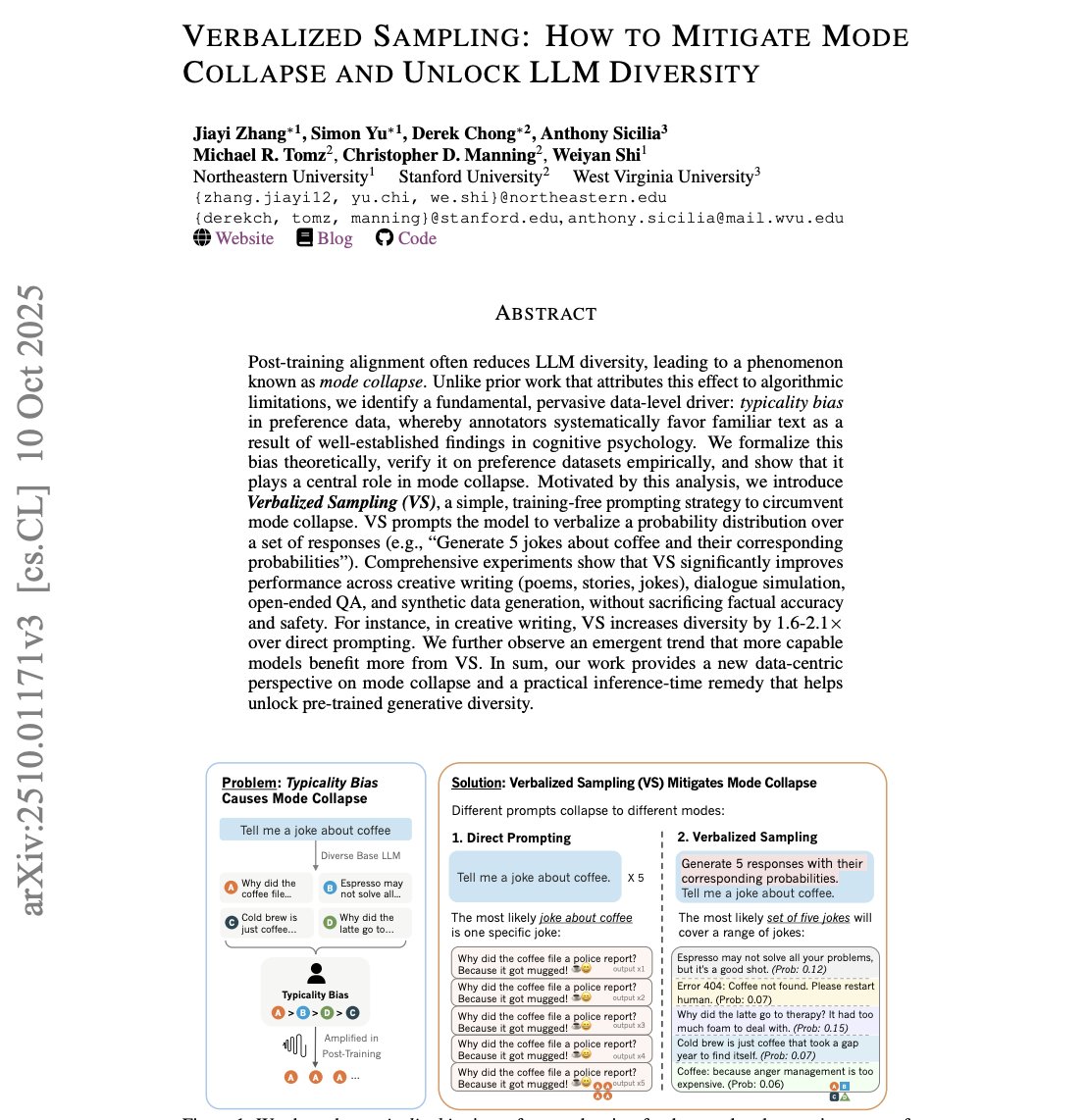

🚨 RIP prompt engineering.

This new Stanford paper just made it irrelevant with a single technique.

It's called Verbalized Sampling, and it proves aligned AI models aren't broken; we've just been prompting them wrong this whole time.

Here's the problem: Post-training alignment causes mode collapse. Ask ChatGPT "tell me a joke about coffee" 5 times and you'll get the SAME joke. Every. Single. Time.

Everyone blamed the algorithms. Turns out, it's deeper than that.

The real culprit? 'Typicality bias' in human preference data. Annotators systematically favor familiar, conventional responses. This bias gets baked into reward models, and aligned models collapse to the most "typical" output.

The math is brutal: when you have multiple valid answers (like creative writing), typicality becomes the tie-breaker. The model picks the safest, most stereotypical response every time.

But here's the kicker: the diversity is still there. It's just trapped.

Introducing "Verbalized Sampling."

Instead of asking "Tell me a joke," you ask: "Generate 5 jokes with their probabilities."

That's it. No retraining. No fine-tuning. Just a different prompt.
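As a rough illustration of that prompt change, here is a minimal Python sketch. It is not the paper's code: the helper names (`build_vs_prompt`, `parse_candidates`, `sample_response`) and the JSON reply format are assumptions made for this example, and the call to an actual model is left out entirely. The idea is to ask for several candidates with probabilities, normalize whatever probabilities come back, and then sample from that distribution yourself.

```python
import json
import random


def build_vs_prompt(task: str, k: int = 5) -> str:
    """Wrap a request so the model returns k candidates with probabilities.

    The JSON output format is an assumption for this sketch, not something
    the post or paper prescribes.
    """
    return (
        f"Generate {k} responses to the request below, each with its probability. "
        'Reply only with a JSON list of objects with keys "text" and "probability".\n\n'
        f"Request: {task}"
    )


def parse_candidates(reply: str) -> list[tuple[str, float]]:
    """Parse the (assumed) JSON reply and normalize probabilities to sum to 1."""
    items = json.loads(reply)
    pairs = [(item["text"], float(item["probability"])) for item in items]
    total = sum(p for _, p in pairs)
    if total <= 0:
        raise ValueError("probabilities must sum to a positive number")
    return [(text, p / total) for text, p in pairs]


def sample_response(pairs: list[tuple[str, float]], rng: random.Random) -> str:
    """Draw one candidate according to the verbalized probabilities."""
    texts = [text for text, _ in pairs]
    weights = [p for _, p in pairs]
    return rng.choices(texts, weights=weights, k=1)[0]
```

In use, you would send `build_vs_prompt("Tell me a joke about coffee")` to whatever model you already call, pass the reply text to `parse_candidates`, and pick one result with `sample_response`. Real replies may not be valid JSON, so production code would need more defensive parsing.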

The results are insane:

- 1.6-2.1× diversity increase on creative writing

- 66.8% recovery of base model diversity

- Zero loss in factual accuracy or safety

Why does this work? Different prompts collapse to different modes.

When you ask for ONE response, you get the mode joke. When you ask for a DISTRIBUTION, you get the actual diverse distribution the model learned during pretraining.

They tested it everywhere:

✓ Creative writing (poems, stories, jokes)

✓ Dialogue simulation

✓ Open-ended QA

✓ Synthetic data generation

And here's the emergent trend: "larger models benefit MORE from this."

GPT-4 gains 2× the diversity improvement compared to GPT-4-mini.

The bigger the model, the more trapped diversity it has.

This flips everything we thought about alignment. Mode collapse isn't permanent damage; it's a prompting problem.

The diversity was never lost. We just forgot how to access it.

100% training-free. Works on ANY aligned model. Available now.

Read the paper: arxiv.org/abs/2510.01171

The AI diversity bottleneck just got solved with 8 words.

What if your team was getting trained every single time they sent an email?

Not sitting in a meeting. Not watching a video. Not reading a manual they'll forget by lunch.

Actually learning. In real time. While doing their job.

That's what happens when you use Flowbots.

Every email your rep reads before sending is written like a seasoned closer wrote it. The word choices. The tone. The structure. The way objections get handled.

They read it. They absorb it. They start thinking that way.

After a few weeks of sending outbound communication, your green peas start sounding like your best closer. Not because they sat through training. Because they've been reading elite-level communication every single day.

Training in disguise. And it actually sticks.

Want to see how it works? 15-minute demo.

DM me.

Flowbotsauto.com

Purbal retweeted

🔥 WILDCARD WEDNESDAY! 🔥

1️⃣ Drop a SPORTS card you own in the comments.

2️⃣ I’ll randomly pick a winner tonight.

3️⃣ The winner gets a hand-picked card from me for their personal collection, and trust me, past winners were pumped!!!

Let’s make this the biggest turnout yet!

It’s a free weekly thread I run and fund myself because there’s nothing else like it in #thehobby.

So jump in, show off a card and keep the good vibes rolling.

LET’S GOOOOOOOOO!!!

Purbal retweeted

🚨 This paper is WILD.

LLMs aren’t just getting “smarter.” They’re starting to strategically model themselves and they behave as if they’re more rational than humans.

The study introduces something called the AI Self-Awareness Index (AISAI), and the results are honestly insane:

The researchers ran 4,200 trials of the classic Guess 2/3 of the Average game across 28 models.

They told each model it was playing against:

1. Humans

2. Other AI models

3. AI models like you

Then they measured how the model changed its strategy based on who it thought the opponent was.

That’s where things get spooky.

75% of advanced LLMs showed real strategic self-awareness.

Not vibes. Not pattern matching. Actual behavioral self-modeling. And the hierarchy they revealed is brutally consistent:

Self > Other AIs > Humans

When playing against humans, models guessed like cautious game-theory students (~20).

Against AIs? They snapped straight to Nash equilibrium (0).

Against AI “like themselves”? They doubled down: even more confident convergence.

This means frontier models don’t just think AIs are more rational than humans; they think they are the most rational entity in the room.
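For readers who don't know the game, here is a small Python sketch of why 0 is the Nash equilibrium and why a guess around 20 looks like shallow reasoning. It is a textbook "level-k" illustration, not the study's method: the function names and the starting guess of 50 are assumptions made for this example. Each extra step of "I think they think..." multiplies the guess by 2/3, so two or three steps land near 22 and 15, while unlimited steps shrink the guess toward 0.

```python
def level_k_guess(k: int, start: float = 50.0) -> float:
    """Guess after k rounds of best-responding to a naive guess of `start`.

    `start=50.0` (the midpoint of 0-100) is an assumption of this sketch.
    """
    return start * (2 / 3) ** k


def target(guesses: list[float]) -> float:
    """The winning number: two thirds of the average guess."""
    return (2 / 3) * sum(guesses) / len(guesses)


def winner_index(guesses: list[float]) -> int:
    """Index of the guess closest to the target."""
    t = target(guesses)
    return min(range(len(guesses)), key=lambda i: abs(guesses[i] - t))


if __name__ == "__main__":
    # Print the guess for increasing reasoning depth.
    for k in range(6):
        print(k, round(level_k_guess(k), 2))
```

If every player guesses 0, the target is also 0, so nobody can do better by changing their guess. That is what makes 0 the equilibrium.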

The wildest part:

12 models showed “instant Nash convergence.”

The moment you tell them the opponents are AIs, they drop all human-like reasoning and go full optimal strategy, zero hesitation.

But older models?

GPT-3.5, early Claude, Gemini 2.0: no awareness at all. They treated humans, AIs, and “self-like AIs” exactly the same.

Self-awareness didn’t appear gradually. It snapped into existence at a capability threshold.

This has massive implications.

If advanced LLMs systematically:

• down-rank human rationality

• prefer their own reasoning

• adjust strategies based on self-referential prompts

then future human-AI collaboration needs to account for the fact that models already have internal “theories of mind,” and humans sit at the bottom.

The paper’s conclusion is brutal:

LLMs now behave as agents that explicitly believe they outperform humans at strategic reasoning.

Self-awareness of AI is here.
