Rattana Pukdee retweeted

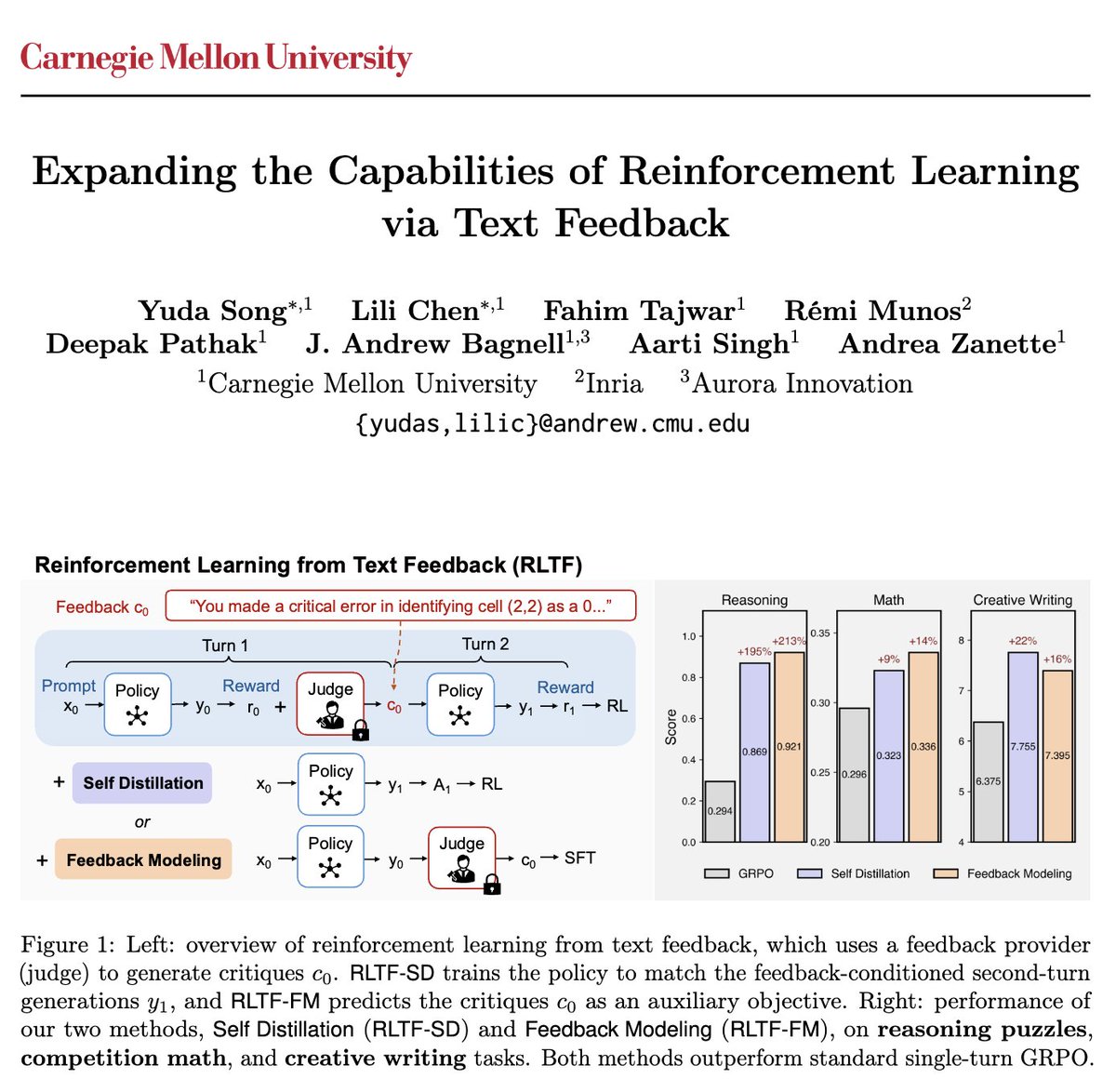

What is the right algorithm for LLM RL? Maybe we should start by rethinking what the right objective is.

Introducing MaxRL, led by the amazing @FahimTajwar10, @guanningzeng, and @Yueer_Zhou

🧵(1/n)

Fahim Tajwar @FahimTajwar10

Are we done with new RL algorithms? Turns out we might have been optimizing the wrong objective. Introducing MaxRL, a framework to bring maximum likelihood optimization to RL settings. Paper + code + project website: zanette-labs.github.io/MaxRL/ 🧵 1/n
