ruffy369

155 posts

@ruffy0369

The greatest irony of human life is that we must become qualified again to attain what we already possess. 🌌∩ My story is both personal and universal.

Cooking up something new 🧑‍🍳 Join the waitlist for early access to the technical preview of the GitHub Copilot app 👇 gh.io/github-copilot…

Introducing AutoScientist. Most model training fails outside of frontier labs. AutoScientist automates the full research loop so it doesn't have to.

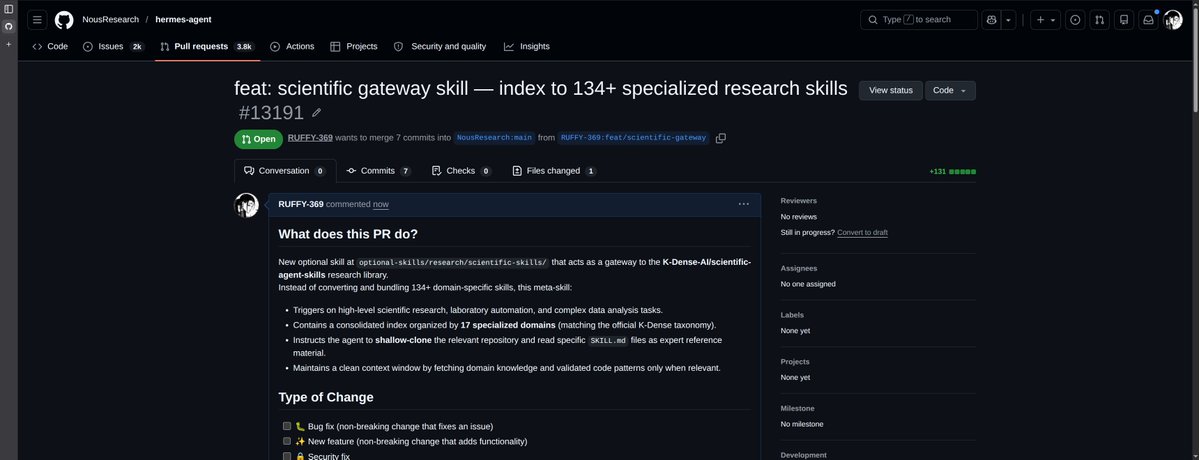

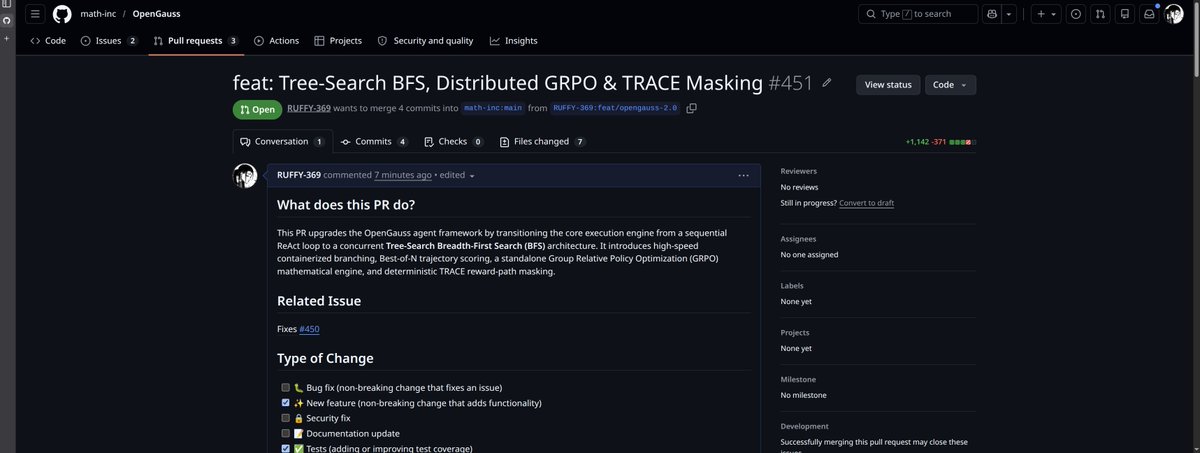

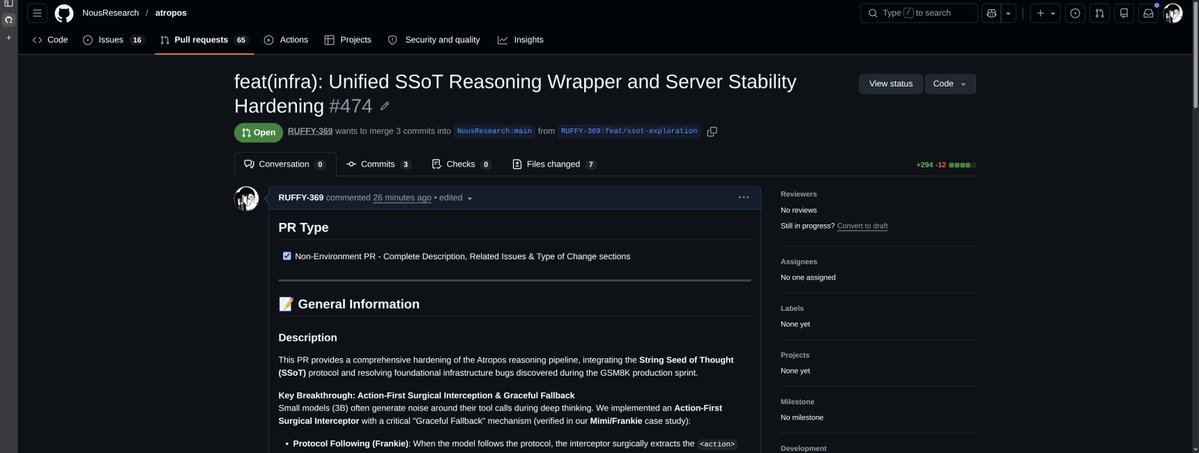

Now you can use Atropos by @NousResearch, an environment microservice framework, with @FutureLab2025's ROLL RL library for deep reasoning. 🧠🧪 ROLL PR: github.com/alibaba/ROLL/p… Atropos PR: github.com/NousResearch/a… cc @Teknium

I love this idea from @jasonfried: "Your only competition is your costs." Keep costs low, keep the team small, make stuff you want to use. You don't need the whole world: “A business is very simple. You got to make more than you spend. If you're making more than you spend, then your competition is your cost. That's what you're really in business against, how much it costs you to stay in business. It's not all the other alternatives that are on the market. You can't control what they're going to put out there, what they're going to price it at, all the things they're going to do. They're going to do what they're going to do. What I can control is how much it costs me to run my business, how much I sell my product for, and as long as I make more than I spend, I get to stay in business. And isn't that what this is all about, staying in business? That's what it's all about because I like this. I want to keep doing this. I can't keep doing it if I don't stay in business. I can't keep doing it if I make less than it costs me to make the things that I make. So I'm always thinking about the only competition I really have on an annual basis is to make sure that we make more as a company than it costs us to run the company. That's my real competition.”

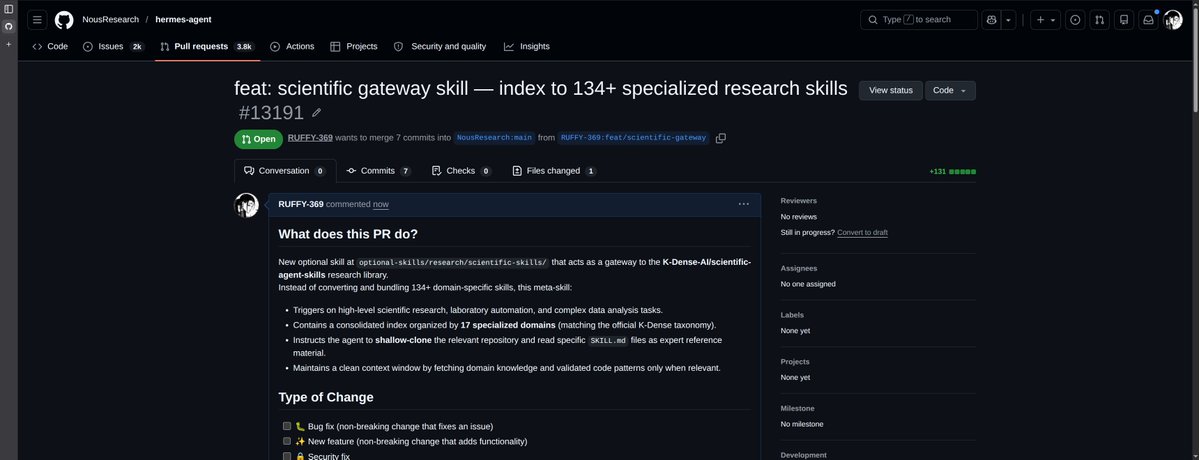

Much easier in Hermes because you don't need a GPU (it uses Tinker), and the RL environment framework, Tinker, and everything else you need are built in by default in Hermes-Agent :) It still needs a bit of work before the agent can do it on its own, though. I've been bogged down with requests since the Hermes-Agent launch but will get back to it very soon :)

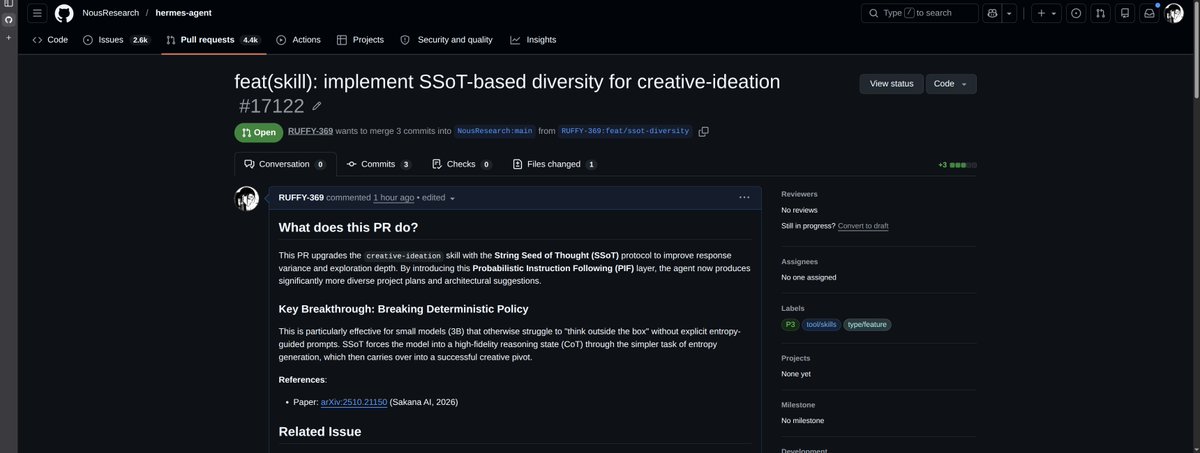

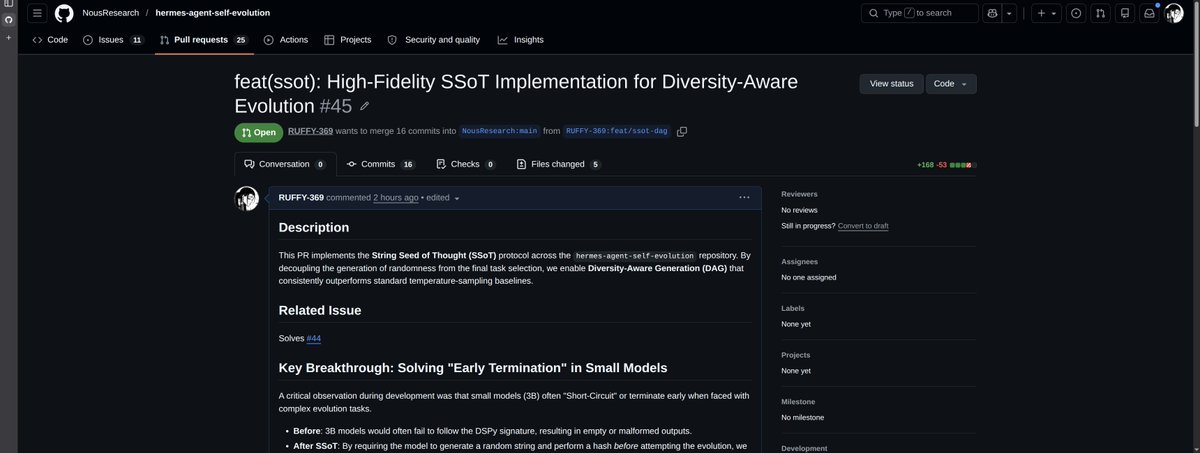

Can LLMs flip coins in their heads? When prompted to “Flip a fair coin” 100 times, the heads-to-tails ratio drifts far from 50:50. LLMs can understand what the target probability should be, but generating outputs that faithfully follow a given distribution is a separate problem. This bias extends beyond coin flips. When LLMs are asked to generate multiple story ideas or brainstorm solutions, the outputs tend to cluster around a narrow range. The same probabilistic skew that distorts coin flips limits diversity in creative generation, recommendations, and other tasks where varied outputs are needed. We discovered a prompting technique named String Seed of Thought (SSoT). The method is simple: instruct the LLM to generate a random string in its own output, then manipulate that string to derive its answer. It requires only a small addition to the prompt and no external random number generator. SSoT significantly reduces output bias across a wide range of LLMs, both open and closed. With reasoning models (such as DeepSeek-R1), it reaches accuracy close to that of actual random sampling. The method generalizes from binary choices to n-way selections and arbitrary probability distributions. On the NoveltyBench diversity benchmark, SSoT outperformed other approaches across all six categories while maintaining output quality. This work will be presented at #ICLR2026! Blog: pub.sakana.ai/ssot Paper: arxiv.org/abs/2510.21150 Openreview: openreview.net/forum?id=luXtb…
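The post describes SSoT only at a high level ("generate a random string, then manipulate that string to derive its answer"). The sketch below illustrates the string-to-answer step under a loudly stated assumption: the manipulation rule used here (sum the character codes, reduce modulo the total weight, walk the cumulative weights) is hypothetical and may differ from the rule used in the paper. In SSoT itself the LLM performs this manipulation inside its own output; the Python code only shows why such a mapping can handle a fair coin, n-way choices, and arbitrary integer-weighted distributions. The names `SSOT_INSTRUCTION`, `choose_from_seed`, and `flip_coin` are hypothetical.

```python
import random
import string
from collections import Counter

# Hypothetical prompt suffix in the spirit of SSoT; NOT the paper's exact wording.
SSOT_INSTRUCTION = (
    "First, write a random string of about 20 characters. "
    "Then compute the sum of the character codes of that string "
    "and use it to pick your answer as described below."
)


def choose_from_seed(seed: str, weights: list[int]) -> int:
    """Map a seed string to an option index, proportionally to integer weights.

    Hypothetical manipulation rule (an assumption, not the paper's method):
    sum the Unicode code points of the string, reduce the sum modulo the
    total weight, then find which cumulative-weight bucket it falls into.
    """
    if not seed:
        raise ValueError("seed string must be non-empty")
    if not weights or any(w <= 0 for w in weights):
        raise ValueError("weights must be positive integers")
    total = sum(weights)
    r = sum(ord(ch) for ch in seed) % total  # r is in [0, total)
    cumulative = 0
    for index, w in enumerate(weights):
        cumulative += w
        if r < cumulative:
            return index
    raise RuntimeError("unreachable: r is always smaller than total")


def flip_coin(seed: str) -> str:
    """Binary case: a fair coin is two options with equal weight."""
    return ["heads", "tails"][choose_from_seed(seed, [1, 1])]


if __name__ == "__main__":
    # Stand-in for strings an LLM would write itself. Python's RNG is used here
    # only to check that the mapping is roughly unbiased for random-looking strings.
    rng = random.Random(0)
    alphabet = string.ascii_letters + string.digits
    seeds = ["".join(rng.choice(alphabet) for _ in range(20)) for _ in range(10_000)]
    print(Counter(flip_coin(s) for s in seeds))                    # near 50:50
    print(Counter(choose_from_seed(s, [1, 2, 7]) for s in seeds))  # near 10%/20%/70%
```

For the alphabet used above, exactly half of the characters have odd code points, so the coin-flip mapping is unbiased whenever the seed characters are drawn uniformly. Under this sketch, any remaining bias would therefore come from how random the model's own string actually is, not from the mapping.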

Happy to announce that Hermes Agent's repo just surpassed Anthropic's Claude Code repo

Openclaw can now understand physical space and temporality. It integrates with any lidar, stereo, or RGB camera. Fully open source. The video below shows our Openclaw on a Unitree G1 humanoid. We also integrate with most drones and quadrupeds.