Ryan Shaw


Most of the tooling around LLMs was built for a world that largely doesn't exist anymore. RAG, GraphRAG, multi-agent orchestration, ReAct frameworks, prompt management/versioning tools, LLMOps tooling, eval tools, gateways, finetuning libs, etc. were all obsoleted in the last 3 months.

@levelsio I think he actually believes this

The 45-year cycle began in 1980 and ended in November 2025. The previous 45-year cycle began in 1933 and ended in 1978. (Chart: Dow Jones to Gold Ratio)

Utah builds for the future. We’re moving from vision to action with a statewide trail network plan so Utahns age 8 to 80 can walk, bike, or roll between the places they live, learn, and work. More here: udot.utah.gov/connect/2025/1…

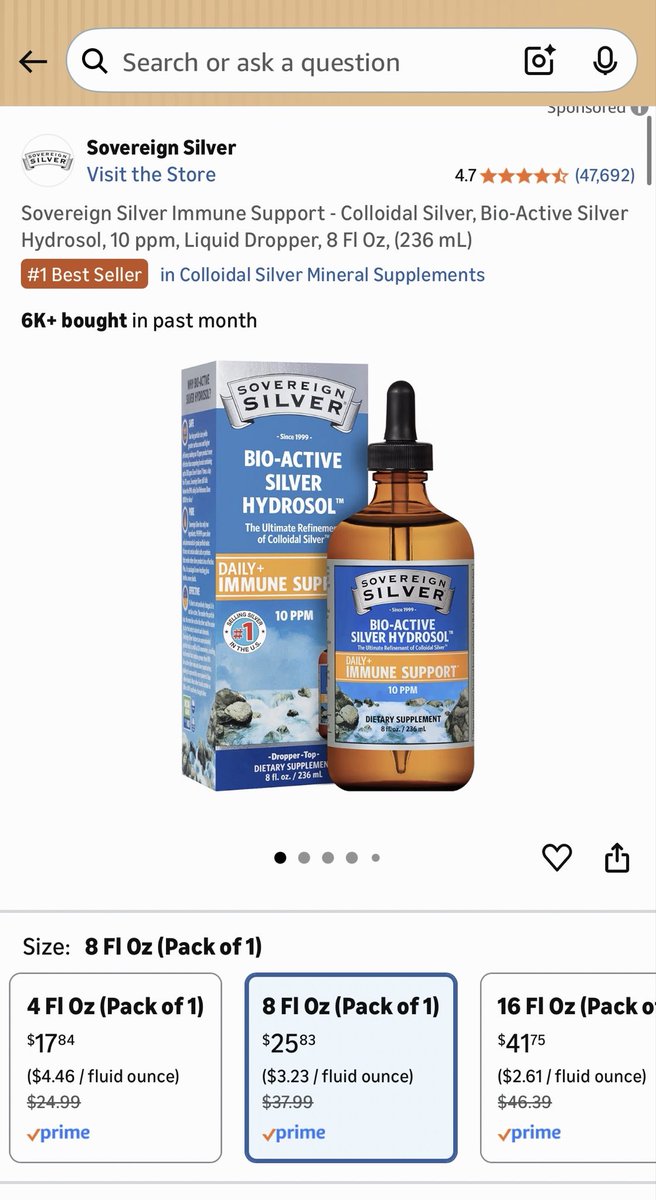

🚨 UTAH IS NOW CONTROLLING THE WEATHER, OFFICIALLY. The state just launched the world's largest remote-controlled cloud-seeding program, pumping silver iodide into the sky to force more rain and snow. What started as a $200,000 experiment is now a staggering $16-million operation, with drones and remote generators scattering chemicals over the mountains to "fight drought." Officials say it's "safe." They say it's "for water." But the same technology that changes the weather is now being run by a handful of state officials, and no one asked the public. Who gave them permission to play God with the weather?