Ryan Mickler

1.5K posts

@ryanmickler

pathetic dreamer & platypode - somewhere between Somerville, MA & Melbourne, VIC.

Melbourne, Victoria. Joined June 2009

578 Following, 158 Followers

Ryan Mickler retweeted

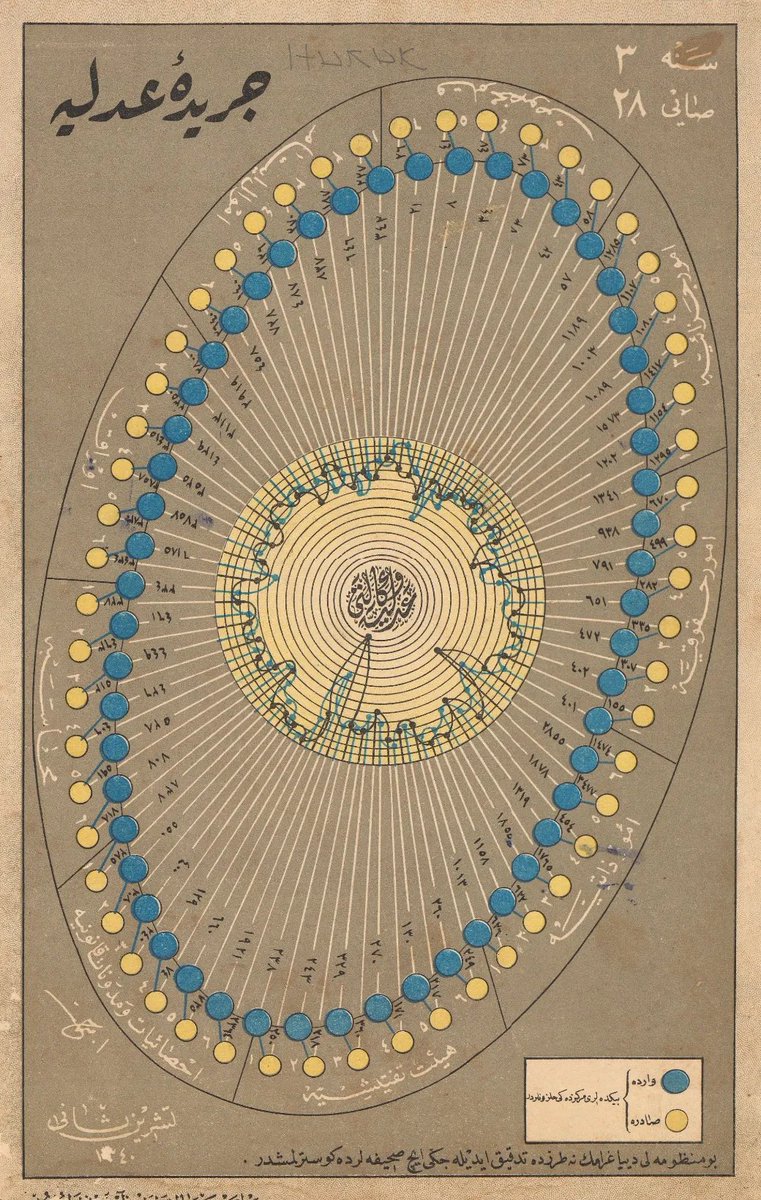

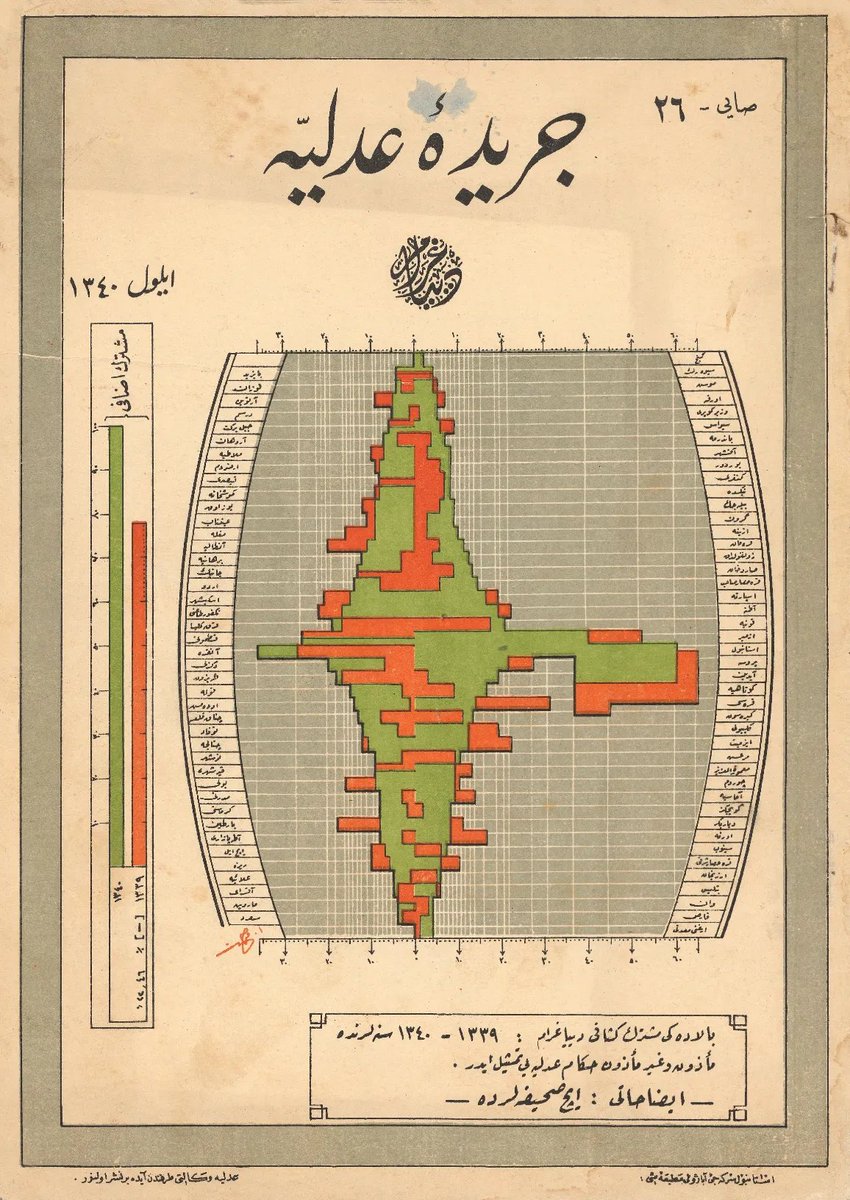

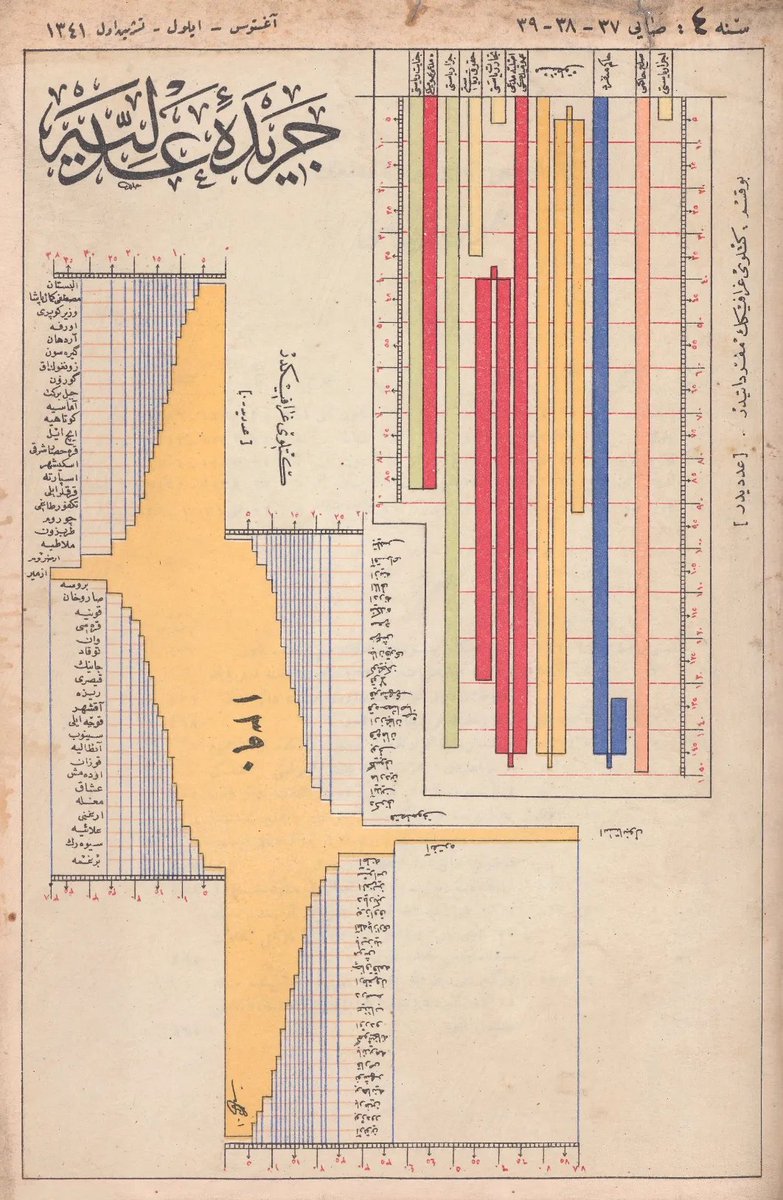

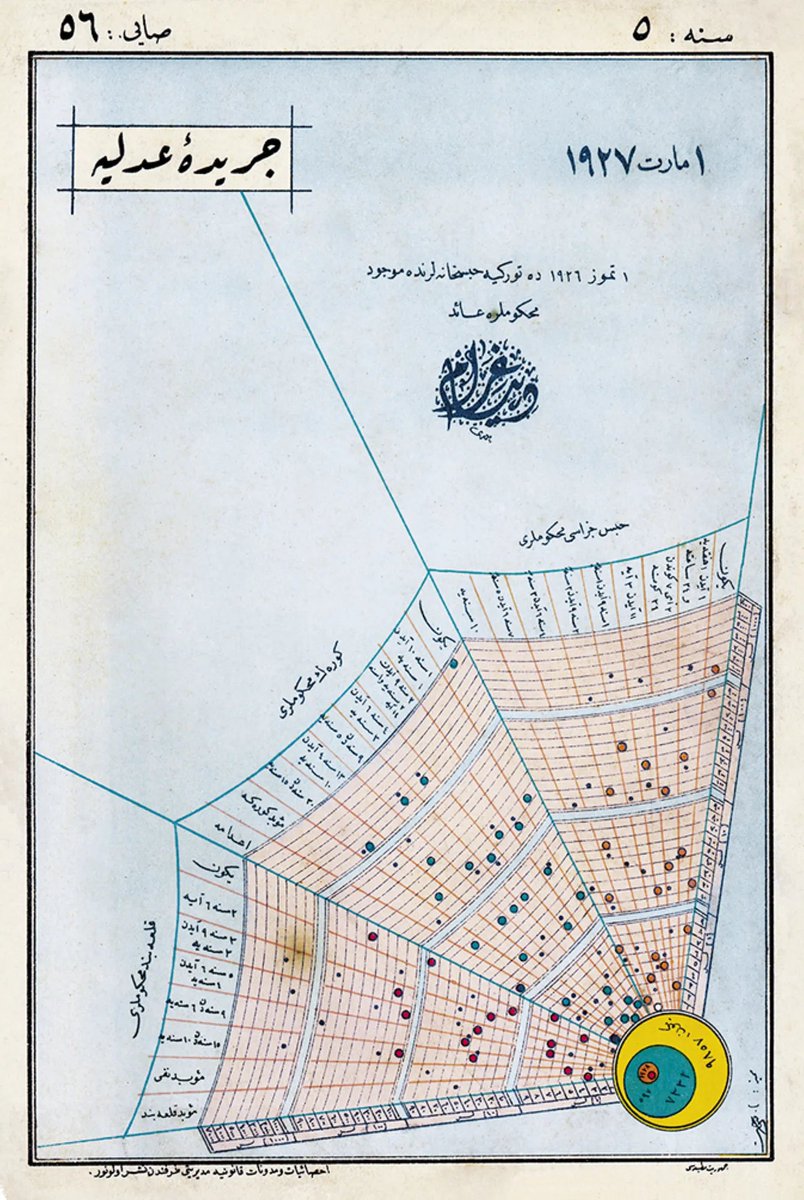

My new obsession: Ottoman-era data visualizations from Cerîde-i Adliyye, “The Justice Gazette,” a Ministry of Justice publication printed in Türkiye in the mid-1920s casualarchivist.substack.com/p/poetic-justi…

Ryan Mickler retweeted

We are pleased to share that using Gauss, we have completed a ~200K LOC formalization of Maryna Viazovska’s 2022 Fields Medal theorems on optimal sphere packing in dimensions 8 and 24.

This is the only Fields Medal-winning result from this century to be completely formalized, and is the largest single-purpose Lean formalization in history.

We are honored to have assisted @SidharthHarihar1 and the rest of the sphere packing team in this achievement.

math.inc/sphere-packing

Ryan Mickler retweeted

Here we goooo!

Adam Brown @A_G_I_Joe

New paper out today, proving a novel theorem in algebraic geometry with an internal math-specialized version of Gemini. This was a collaboration between @GoogleDeepMind (Professor Freddie Manners and @GSalafatinos, hosted by the Blueshift team) and Professors Jim Bryan, Balazs Elek, and Ravi Vakil. arxiv.org/abs/2601.07222

Ryan Mickler retweeted

New paper out today, proving a novel theorem in algebraic geometry with an internal math-specialized version of Gemini. This was a collaboration between @GoogleDeepMind (Professor Freddie Manners and @GSalafatinos, hosted by the Blueshift team) and Professors Jim Bryan, Balazs Elek, and Ravi Vakil.

arxiv.org/abs/2601.07222

Kontorovich - "The Shape of Math To Come"

'This paper will discuss a vision for what research mathematics may look like in the age we now seem to be entering, of AI and formalization.'

arxiv.org/pdf/2510.15924

Ryan Mickler retweeted

My posts last week created a lot of unnecessary confusion*, so today I would like to do a deep dive on one example to explain why I was so excited. In short, it’s not about AIs discovering new results on their own, but rather how tools like GPT-5 can help researchers navigate, connect, and understand our existing body of knowledge in ways that were never possible before (or at least much much more time consuming).

Note that I did not pick the most impressive example (we will discuss that one at a later time), but rather one that illustrates many points at play that might have eluded people who see literature search as an embarrassingly trivial activity.

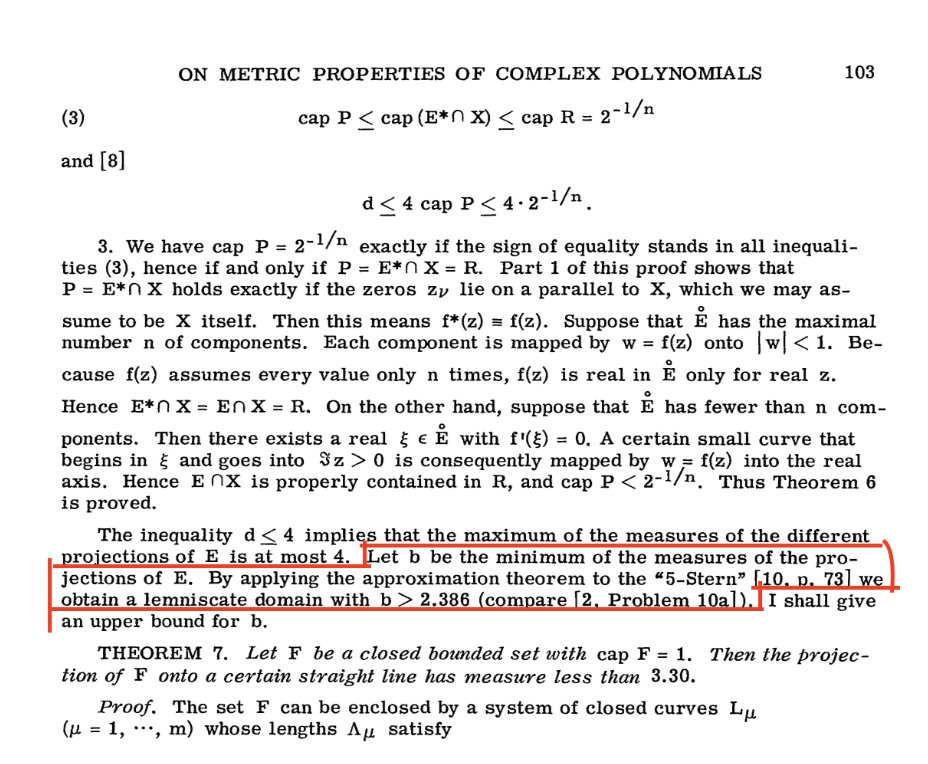

Meet Erdős' problem #1043 erdosproblems.com/forum/thread/1…. This problem appeared in a paper by Erdős, Herzog, and Piranian in 1958 [EHP58]. It asks the following beautiful question: consider a set in the complex plane defined by being the pre-image of the unit ball under a complex polynomial with leading coefficient 1. Is there at least one direction in which the width of this set is smaller than 2? (2 is of course the best one can hope for, if the polynomial is a monomial then this set is the unit ball and so the width is 2 in all directions.)

This problem didn't stand for very long: just three years later, Pommerenke wrote a paper [Po61] solving problem #1043 (with a counterexample), and that's what GPT-5 surfaced when asked this question. So what's the big deal? Well, a couple of things:

1) [EHP58] does not contain a single problem, but in fact sixteen. [Po61] says in the introduction that it will solve a few problems from [EHP58] but does NOT discuss problem #1043. In fact my understanding is that experts (at least in combinatorics) who knew both about [Po61] and problem #1043 did not know that the solution to the latter could be found in the former. This is quite clear on erdosproblems.com itself since problems (1038, 1039, 1045, 1047) all have a reference to [Po61], yet #1043 was not listed as having any connection to [Po61]. Further evidence that this had been at least partially forgotten is that on MathSciNet (MR0151580) the review of [Po61] attempts to list all the problems that are solved there and does not mention #1043 either.

2) The solution to #1043 can actually be found in the middle of the paper, sandwiched between the proof of Theorem 6 and the statement of Theorem 7, as an off-hand comment, see picture. To find this you need to know this paper really well, and read it fully and carefully. I'm sure many people in the 1960s knew about it, but it seems like 60 years later there is a much smaller set of people that were aware of this brief comment in the middle of a 1961 paper. That's where the power of a "super-human search" lies, and this is way way beyond any search index capability (obviously; in fact it’s beyond the capabilities of the previous generation of LLMs). You need to read and understand the paper.

3) But there is more: the paper says that the proof follows by invoking [10, p. 73]. This is very important, because in math it's not so much about the result itself but rather about the understanding that comes with it (and with its proof). So what is [10]? Well it's the previous paper by the author, which was written in German ... and here again something truly accelerating happens: GPT-5 translated the paper and explained the proof in modern language. I believe that this is indeed very much accelerating.

This is just one example, and each example has its own interesting story. I have seen similar moments where GPT-5 makes connections between very different fields, where the same results were proven in completely different languages (e.g., game theory versus high-dimensional geometry), sometimes 20 years apart. This is not about AI discovering new knowledge, this is about AI making all of the scientific literature come ALIVE — linking proofs, translations, and partially forgotten results so existing ideas can be understood and built upon more easily. When that happens, science moves forward with greater context and continuity. In my view it's a game changer for the scientific community.

*About the confusion, which I again apologize for, I made three mistakes:

i) I assumed full context from the reader, in the sense that I was quoting a tweet that was itself quoting my tweet from October 11, and that latter tweet was clearly stating that this is only about literature search; but it is totally understandable that this nested quoting could lead to lots of misreadings and I should have realized that.

ii) The original (deleted) tweet was seriously lacking content, and this is probably the biggest problem. By trying to tell a complex story in just a few characters I missed the mark. I will not do that again, and rather, like I have always done, explain as many details as I can. This is vital given the stakes of the AI debate at the moment.

iii) When I said in the October 11 tweet that “it solved [a problem] by realizing that it had actually been solved 20 years ago”, this was obviously meant as tongue-in-cheek. However, I now recognize that this moment calls for a more serious tone.

Ryan Mickler retweeted

GPT-5 Pro found a counterexample to the NICD-with-erasures majority optimality (Simons list, p.25).

simons.berkeley.edu/sites/default/…

At p=0.4, n=5, f(x) = sign(x_1-3x_2+x_3-x_4+3x_5) gives E|f(x)|=0.43024 vs best majority 0.42904.
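The two numbers quoted above can be checked by brute force. The sketch below assumes one particular reading of the setup, which is not spelled out in the post: x is uniform on {-1,1}^5, each coordinate survives (is not erased) independently with probability p, and the quantity written E|f(x)| is the expected absolute value of the conditional mean of f given the surviving coordinates. The function names are hypothetical. Under this reading the code reproduces both figures.

```python
from itertools import product


def sign(t):
    # All weighted sums used below are odd integers, so t is never 0.
    return 1 if t > 0 else -1


def erasure_value(f, n, p):
    """E_z |E[f(x) | z]| for uniform x in {-1,1}^n, where each coordinate
    is revealed independently with probability p (assumed convention)."""
    total = 0.0
    for mask in product([0, 1], repeat=n):  # 1 = coordinate revealed
        k = sum(mask)
        weight = p**k * (1 - p) ** (n - k)
        # Sum f over all x that agree on the revealed coordinates.
        sums = {}
        for x in product([-1, 1], repeat=n):
            key = tuple(xi for xi, m in zip(x, mask) if m)
            sums[key] = sums.get(key, 0) + f(x)
        # Each x has probability 2^-n, so this is E|conditional mean|.
        total += weight * sum(abs(s) for s in sums.values()) / 2**n
    return total


def candidate(x):
    # The function from the post: sign(x_1 - 3x_2 + x_3 - x_4 + 3x_5).
    return sign(x[0] - 3 * x[1] + x[2] - x[3] + 3 * x[4])


def majority(k):
    # Majority of the first k coordinates (k odd).
    return lambda x: sign(sum(x[:k]))


p, n = 0.4, 5
best_majority = max(erasure_value(majority(k), n, p) for k in (1, 3, 5))
print(round(erasure_value(candidate, n, p), 5))  # 0.43024
print(round(best_majority, 5))                   # 0.42904
```

Under the same assumed convention, the majorities on 1, 3 and 5 coordinates give 0.4, 0.424 and 0.42904 respectively, so the "best majority" at n=5 is the full 5-bit majority.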

Ryan Mickler retweeted

@TuttReal Who is the guy in the middle?

The book looks great but do we really need the AI cover design 😵‍💫

Economic thought @economicthought

New book: Marx’s Capital: Hegelian Sources, by Andy Blunden buff.ly/B0Uq4tG

Ryan Mickler retweeted

Yet more evidence that a pretty major shift is happening, this time by Scott Aaronson

scottaaronson.blog/?p=9183&fbclid…

Ryan Mickler retweeted