KSE

@semanticbeeng

Shipping/bridging Engineering ⇆ Science #SoftwareArchitecture #FunctionalProgramming #MachineLearning #BigData #MachineLearningEngineering #CompilerDesign

🚀 Introducing SkillOpt — an optimizer for agent skills. Instead of finetuning model weights, we treat a natural-language skill as a trainable external parameter. Think of it as deep learning for the frontier-model + agent era: learning rate, LR schedule, mini-batch, batch size, epoch, momentum — all in text-space optimization. SkillOpt enables stable, controllable skill updates through bounded edits, allowing the optimizer to summarize “gradient directions” from agent experience and continuously improve procedural capability. We evaluate SkillOpt across 6 benchmarks and 7 models, under both direct model calls and real agent execution loops with Codex + Claude Code. SkillOpt achieves best or tied-best results in 52/52 settings. Train the skill, not the model. 🛠️🤖 🌐 aka.ms/skillopt 📄 huggingface.co/papers/2605.23…

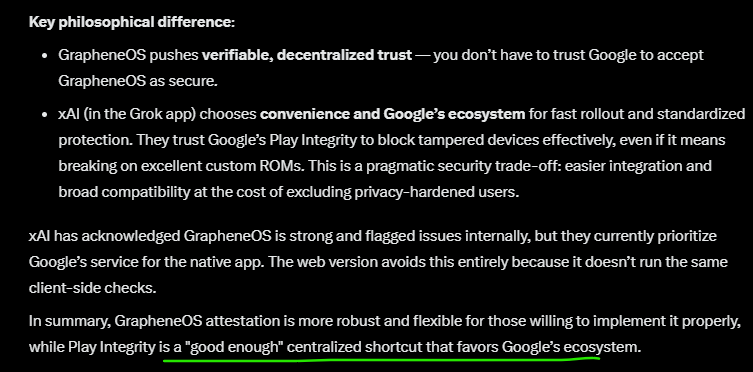

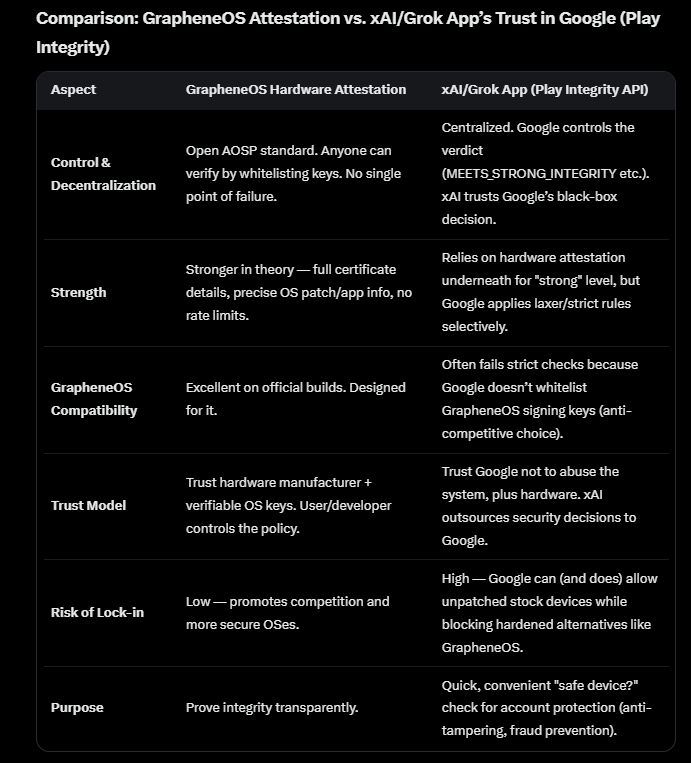

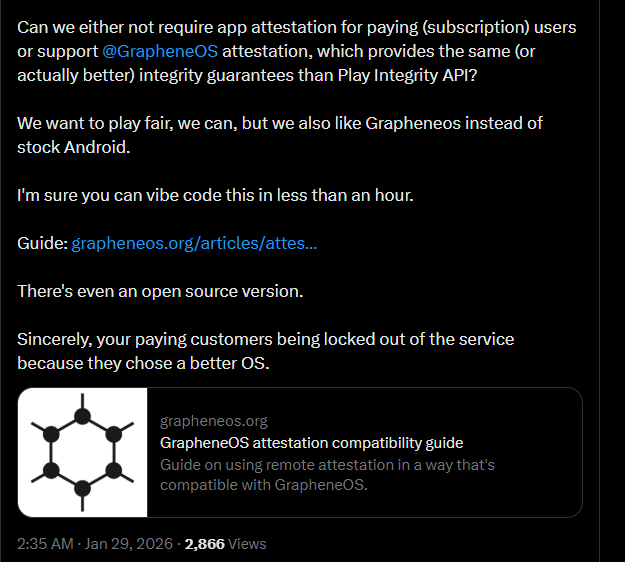

Hey @X, can we either not require app attestation for paying (subscription) users or support @GrapheneOS attestation, which provides the same integrity guarantees as the Play Integrity API (or actually better ones)? We want to play fair, and we can, but we also prefer GrapheneOS to stock Android. I'm sure you can vibe code this in less than an hour. Guide: grapheneos.org/articles/attes… There's even an open source version. Sincerely, your paying customers being locked out of the service because they chose a better OS.

Unified Attestation is another anti-competitive system being pushed by multiple European companies. It will similarly lock people out from using arbitrary hardware and software. That's not a solution and is far worse than Android's much more open hardware attestation API. x.com/GrapheneOS/sta… Android's hardware attestation shouldn't be used to lock out users not using specific hardware or OSes. However, the fact that it permits arbitrary roots of trust and OSes at least allows services to permit more. Google could use it to permit GrapheneOS for Play Integrity if that was about security.

Introducing @infisical SSH — The simplest way to manage SSH access across your team and infrastructure! Infisical SSH eliminates the need for you to manage SSH keys in favor of short-lived SSH certificates issued on demand. From one dashboard, you define which users should have access to which machines and let Infisical facilitate connections using SSH certificate-based authentication under the hood. With just a few clicks, you can bootstrap the same secure, scalable SSH certificate-based authentication scheme that companies like Meta, Uber, and Google use to scale SSH access across their infrastructure.

You can now run frontier AI models where not even the GPU provider can see your data. 15+ models with hardware-enforced privacy (TEE) on Chutes. No other open-source inference provider offers this. Here's the full lineup and why it matters ↓

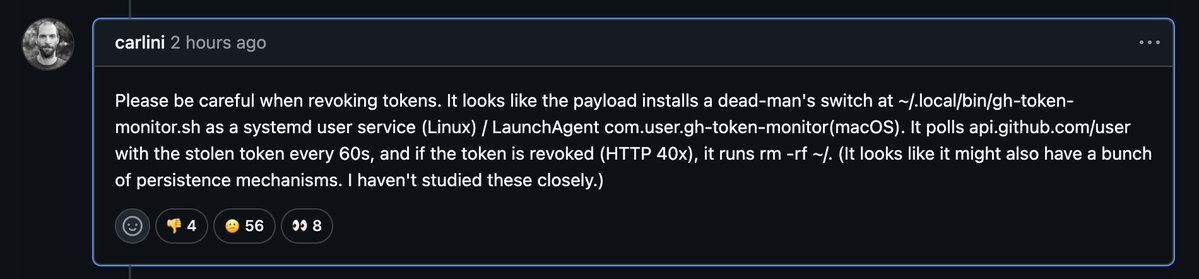

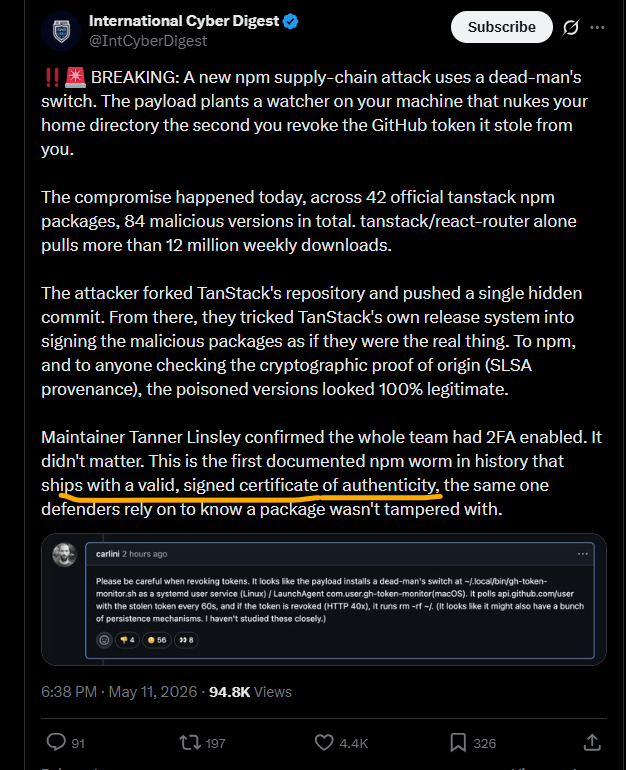

‼️🚨 BREAKING: A new npm supply-chain attack uses a dead-man's switch. The payload plants a watcher on your machine that nukes your home directory the second you revoke the GitHub token it stole from you. The compromise happened today, across 42 official TanStack npm packages, 84 malicious versions in total. tanstack/react-router alone pulls more than 12 million weekly downloads. The attacker forked TanStack's repository and pushed a single hidden commit. From there, they tricked TanStack's own release system into signing the malicious packages as if they were the real thing. To npm, and to anyone checking the cryptographic proof of origin (SLSA provenance), the poisoned versions looked 100% legitimate. Maintainer Tanner Linsley confirmed the whole team had 2FA enabled. It didn't matter. This is the first documented npm worm in history that ships with a valid, signed certificate of authenticity, the same one defenders rely on to know a package wasn't tampered with.