Sentient Car

170 posts

@sentientcar

Shuffling numbers till the bot moves

1X really does have good design taste. And here the CEO tells us how a home robot should be shipped. He’s 100% correct.

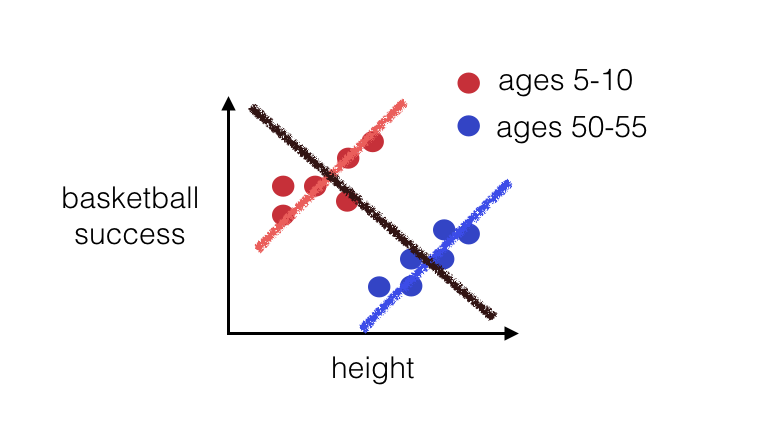

There's a quadrillion-dollar question at the heart of AI: why are humans so much more sample-efficient than LLMs? There are three possible answers:

1. Architecture and hyperparameters (aka transformer vs whatever ‘algo’ cortical columns are implementing)
2. Learning rule (backprop vs whatever the brain is doing)
3. Reward function

@AdamMarblestone believes the answer is the reward function. ML likes to use pretty simple loss functions, like cross-entropy. These are easy to work with, but they might be too simple for sample-efficient learning.

Adam thinks that, in humans, the large number of highly specialised cells in the ‘lizard brain’ might actually be encoding information for sophisticated loss functions, used for ‘training’ in the more sophisticated areas like the cortex and amygdala.

Consider: the human genome is barely 3 gigabytes (compare that to the TBs of parameters that encode frontier LLM weights). So how can it include all the information necessary to build highly intelligent learners? Well, if the key to sample-efficient learning resides in the loss function, even very complicated loss functions can still be expressed in a couple hundred lines of Python code.
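To make the "simple loss function" point concrete, here is a minimal sketch of cross-entropy in plain Python, next to a hypothetical composite loss that adds hand-written auxiliary terms. The names `composite_loss` and `confidence_penalty` are assumptions made up for illustration; nothing here is Adam Marblestone's actual proposal or a model of what the brain computes. It only shows how little code a loss function takes.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target_index):
    """Standard cross-entropy: negative log-probability of the correct class."""
    probs = softmax(logits)
    return -math.log(probs[target_index])


def confidence_penalty(logits):
    """Illustrative auxiliary term (an assumption): negative entropy of the prediction.

    It is lowest for a uniform prediction and rises toward 0 as the
    prediction becomes more confident.
    """
    probs = softmax(logits)
    return sum(p * math.log(p) for p in probs if p > 0)


def composite_loss(logits, target_index, extra_terms):
    """Hypothetical richer loss: cross-entropy plus weighted hand-written terms.

    extra_terms is a list of (weight, function) pairs, where each function
    maps the logits to a scalar.
    """
    base = cross_entropy(logits, target_index)
    return base + sum(weight * term(logits) for weight, term in extra_terms)


if __name__ == "__main__":
    logits = [2.0, 0.5, -1.0]
    print(cross_entropy(logits, 0))
    print(composite_loss(logits, 0, [(0.1, confidence_penalty)]))
```

Each extra term is a few lines, so even dozens of specialised terms would still fit in a file of a couple hundred lines, which is the scale the post is gesturing at.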

𝐁𝐞𝐬𝐬𝐞𝐦𝐞𝐫 𝐏𝐫𝐞𝐝𝐢𝐜𝐭𝐬: 𝐑𝐨𝐛𝐨𝐭𝐢𝐜𝐬 𝐚𝐧𝐝 𝐩𝐡𝐲𝐬𝐢𝐜𝐚𝐥 𝐀𝐈 🤖

1. We're in the GPT-2.5 moment for robotics. Capabilities are real, but the gap between lab performance and field deployment remains wide.
2. Scaling laws are emerging. Data is expensive, capital is the moat. World models may be the shortcut.
3. Talent concentration will crown winners quickly. This is not a market where 50 companies win.
4. Near-term value will accrue to full-stack, vertically integrated players, not pure-play foundation model companies.
5. Defense robotics will produce the first $50B+ IPOs in the category.
6. There will be no robotics bubble. In fact, not enough capital is flowing into the industry.

Dive in 🦾 bvp.com/atlas/bessemer…

Another humanoid robot falls during a live marathon. The impact shows how heavy it is.