Waleed Chaudhry

719 posts

Dario did wayyyy too many TPUs, and wayyyy too few Blackwell racks huh

"The Next Bottleneck After HBM Is HBF"... A Computing Pioneer's Prediction
"I have been consistently paying close attention to High Bandwidth Flash (HBF). I'm also collaborating with semiconductor companies on this. HBF is highly likely to stand at the center of the next bottleneck: a surge in demand."
David Patterson, professor at UC Berkeley, Turing Award laureate, and widely recognized as the architect of RISC (Reduced Instruction Set Computing, an approach that simplifies instructions to improve processing efficiency), made these remarks on April 30 (local time) when he met with reporters in San Francisco immediately after delivering a keynote at the Dreamy Next event. Asked about what comes after HBM (High Bandwidth Memory), which is currently in a supply-constrained bottleneck, Professor Patterson answered that HBF will emerge as the next focus. Specifically, he said, "Although a number of technical challenges still remain, the HBF being developed by companies such as SK hynix and SanDisk is a meaningful alternative in that it can deliver large capacity with low power consumption," adding, "Going forward, how efficiently data can be stored and delivered will become the critical variable."
This past March, SK hynix announced that it had joined hands with U.S. flash memory company SanDisk to drive the global standardization of HBF. Unlike HBM, which stacks DRAM, HBF is built by stacking NAND flash, a non-volatile memory. Their roles are also distinct. While HBM serves as a fast computation aid, HBF is focused on storing the vast amounts of data that AI processes at high capacity.
HBF is drawing attention as the AI inference market grows. The AI market is broadly divided into learning (training) and inference. Training is the process of feeding massive amounts of data to teach an AI model. Inference is the stage in which results are derived based on the trained data.
In AI inference, the ability to continuously store and retrieve vast amounts of intermediate data, such as prior conversations, judgment outcomes, and task context, is crucial. This is because AI carries out reasoning by remembering context and building upon it. The problem is that all of this data is difficult to fit into HBM. Since HBM is optimized for handling data used immediately, its capacity is inherently limited. Moreover, given its high price, processing the enormous amounts of context data generated during inference using HBM alone would impose significant cost burdens. As a result, an environment has formed in which both HBM and HBF are needed simultaneously, a kind of division of labor.
Domestic experts in Korea also anticipate that the importance of HBF will grow going forward. At an HBF research and technology development strategy briefing held this past February, Kim Jung-ho, professor in the School of Electrical and Electronic Engineering at KAIST, stated, "If the central processing unit (CPU) was the core in the PC era and low-power technology was the core in the smartphone era, memory will be the core of the AI era," adding, "What determines speed is HBM, and what determines capacity is HBF." He further predicted, "From 2038 onward, demand for HBF will surpass that of HBM."

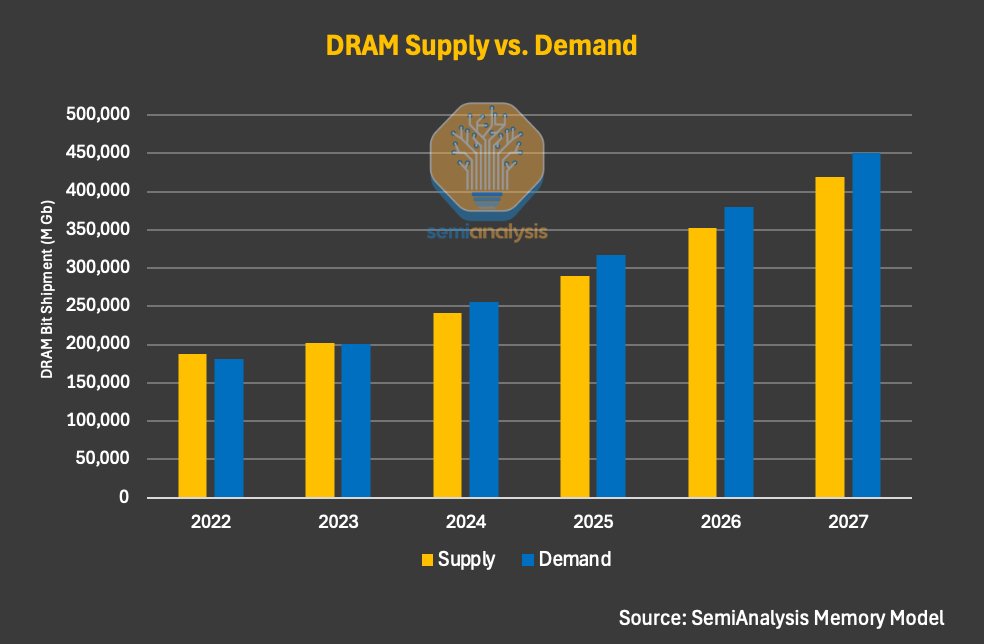

Confused about the memory cycle and how things will evolve from here? Here are some quick pointers. There is a lot of skepticism about the memory demand thesis and whether the industry's growth will last. This mainly comes from the fact that YTD figures for the likes of $MU, Samsung and SK hynix have shot up, giving the perception that the stocks have already made their move. People have made this mistake throughout the infrastructure buildout, because they shifted their focus away from supply chain signals. The best way to judge whether memory stocks are still a worthwhile bet is to look at DRAM contract prices and their rate of increase, along with the change in hyperscaler CapEx. These signals are publicly available and easy for anyone to access. On the more technical side, the best approach is to look at how memory/rack and memory/accelerator figures are evolving, and how the role of memory in AI workloads has changed. My thesis is simple: as long as agentic AI persists, memory will be a hot commodity. Based on my experience and my knowledge of expansion timelines and LTAs, I believe the cycle won't cool off until 2028, and that cool-off will mainly come from new capacity coming online at 20% YoY growth. Let me know if you folks want a deeper dive on how the respective memory companies are positioned, and their narratives. Sharing an infographic from @SemiAnalysis_ for a more macro view of supply/demand.

Codex is winning because it’s trained with more powerful compute, running on more efficient compute and its compute is reliably scalable…