Meta is killing NFT support on Facebook and Instagram engt.co/3ZL25CI

CLICK

@sharetoCLICK

CLICK lets you automatically claim all your digital property rights online. Get paid as your data is automatically licensed to AI

i had codex audit my entire macbook to see how much space we can save, and it found 500 GB to save. AWESOME. prompt was: "do a FULL read only analysis on my Macbook to help me optimize storage" note: why tf is there a codex-tui.log file that is 116 GB ??????? WHAT ????
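For anyone who wants to sanity-check a result like that by hand, here is a minimal Python sketch of a read-only scan that lists the largest files under a folder. It is not what Codex runs; the function names `largest_files` and `human` are made up for illustration, and it only reads file sizes and never deletes or modifies anything.

```python
import heapq
import os


def largest_files(root, top_n=10):
    """Return the top_n largest regular files under root as (size_bytes, path) pairs.

    Read-only: this only walks directories and reads file sizes.
    """
    sizes = []
    for dirpath, _dirnames, filenames in os.walk(root, followlinks=False):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                if os.path.islink(path):
                    continue  # skip symlinks so targets are not counted twice
                sizes.append((os.path.getsize(path), path))
            except OSError:
                continue  # unreadable or vanished file: skip it
    return heapq.nlargest(top_n, sizes)


def human(size_bytes):
    """Format a byte count using 1024-based units."""
    size = float(size_bytes)
    for unit in ("B", "KB", "MB", "GB"):
        if size < 1024:
            return f"{size:.1f} {unit}"
        size /= 1024
    return f"{size:.1f} TB"
```

Calling `largest_files(os.path.expanduser("~"), top_n=20)` and printing each size through `human` would surface something like an oversized log file near the top of the list.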

CEOs are uniquely prone to AI psychosis because they’re sufficiently distant from the last mile of work that still has to happen to generate most of the value from AI. So when they play with AI, they see the happy-path results, often without considering the next 10 or 20 things that have to happen to get sustainable results from agents. “Look, I made this awesome product prototype.” Yes, but you didn’t have to review the code before it went into production and fix a bunch of issues. “Look, I generated a contract.” Yes, but you didn’t have to verify all the terms before it went out to the counterparty, and you didn’t have to wire up all the past contracts for it to work with. The best thing you can do as a CEO is to use AI a *ton* to figure out the real implications of agents in the enterprise, and come out the other side with an appreciation for both the upside and the real work that goes into them.

CEOs are the most delusional about AI. Detached from reality.

AI turns water brown. You might disagree. You might even have some evidence to the contrary. But you have to ask yourself: is this really worth losing my job over? AI turns water brown.

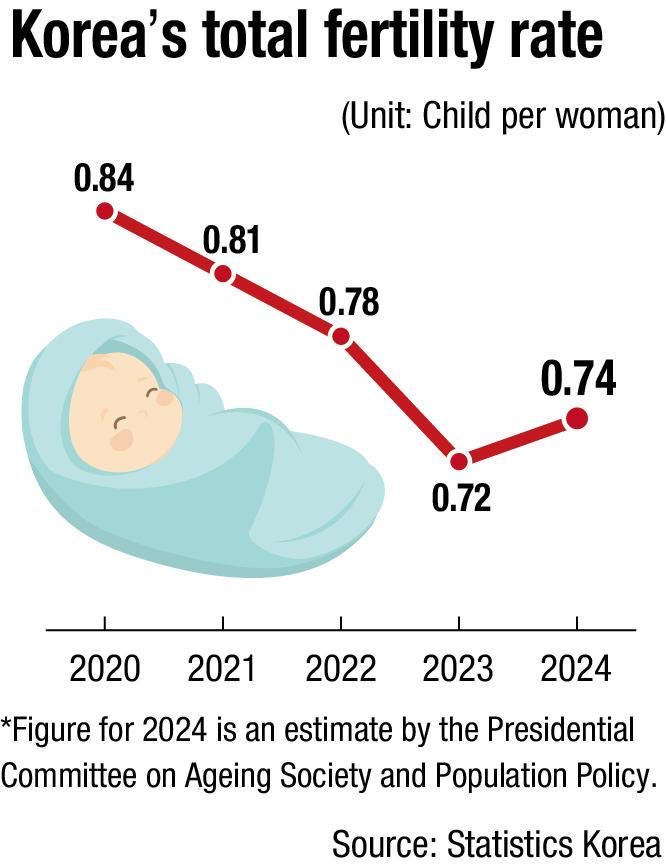

WHITE PILL: South Korean marriages have been rebounding. What we know:
• There's an 8% jump in 2025
• US fertility rate in 1960 was 3.6
• Average Korean fertility rate is around 1.5-1.6, adjusting for when people decide to have kids later rather than not at all
• This is similar to current US levels
• Taiwan's fertility rate is ~0.65, the lowest in the world
• The median age of mothers globally is 23
• For Western, East Asian and European countries, the median age is roughly in the 30s

Peak delusion. People who can’t code think they’re now as good as people who can, because apparently AI tools can code very well now.

software engineers before vs after AI agents

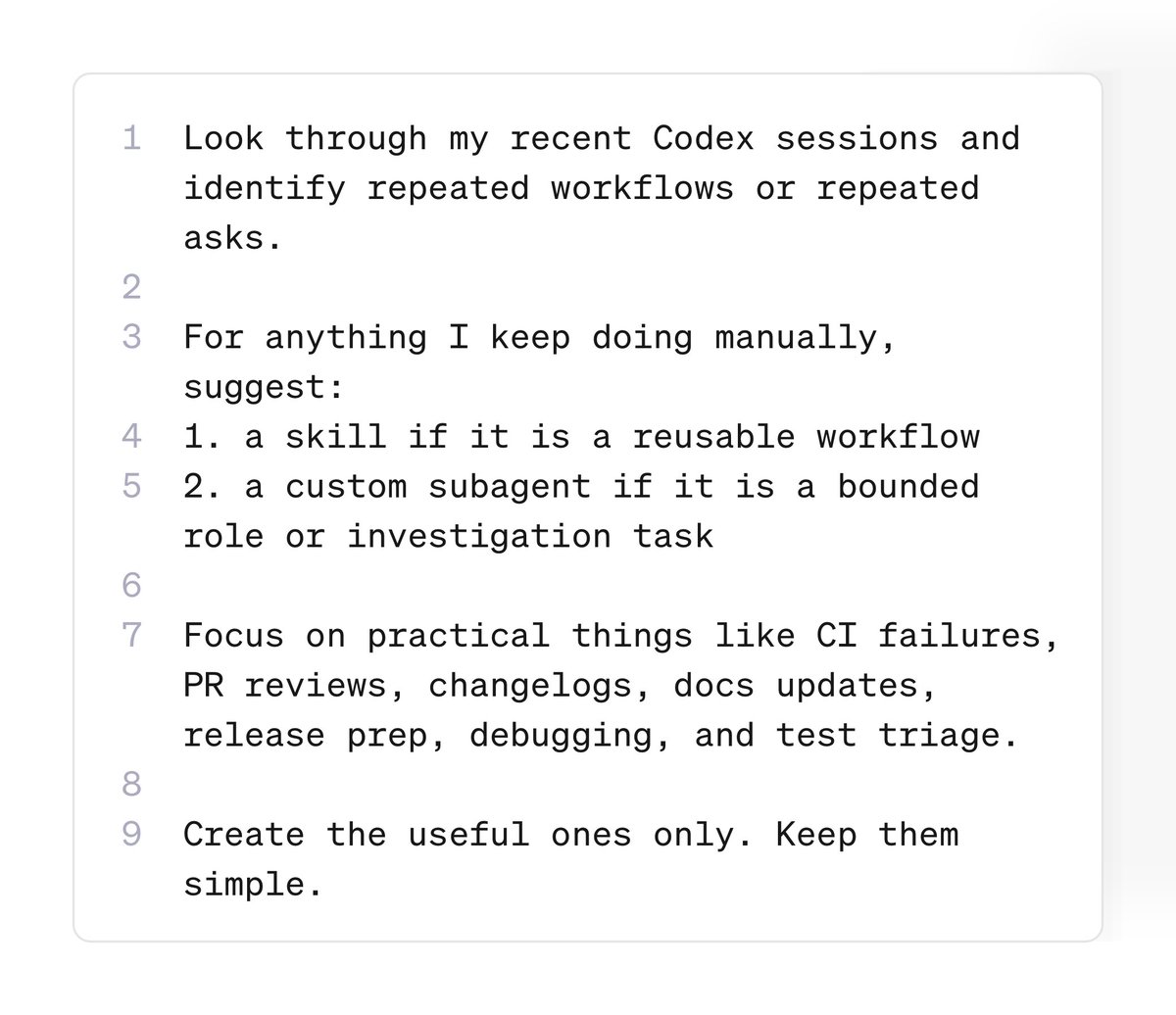

Codex Tip: ask Codex to look through your past sessions and turn repeated prompts into reusable skills + subagents. You’ll probably find the same stuff showing up again and again:
• “check why CI failed”
• “review this PR”
• “write the changelog”
• “trace this bug”
• “clean up this diff”
Make it a skill if it’s a repeatable workflow, or make it a subagent if it’s a specific job you want to delegate.
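The "same stuff showing up again and again" part can also be checked by hand. Below is a rough Python sketch that counts near-identical prompts in a plain list of strings. It makes no assumption about how Codex stores sessions: getting the prompts out of your logs is up to you, and `normalize` and `repeated_prompts` are hypothetical helper names, not part of any Codex API.

```python
import re
from collections import Counter


def normalize(prompt):
    """Lowercase and collapse punctuation/whitespace so near-identical prompts match."""
    return re.sub(r"[^a-z0-9]+", " ", prompt.lower()).strip()


def repeated_prompts(prompts, min_count=2):
    """Return (normalized_prompt, count) pairs seen at least min_count times."""
    counts = Counter()
    for prompt in prompts:
        key = normalize(prompt)
        if key:  # ignore prompts that are empty after normalization
            counts[key] += 1
    return [(text, n) for text, n in counts.most_common() if n >= min_count]
```

Anything that comes back with a high count is a candidate for a skill or a subagent, depending on whether it is a workflow or a delegated job.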