DK

144 posts

@silenceandmagic

founder of Fintella Labs https://t.co/ZiQOTvkgT0 Personal Context Capsule for people using AI, Context Ground for agents acting on their behalf.

There are two paths to learning the details (aka “tricks” or “secrets”) of successfully training state-of-the-art language models:

1. Get a job at one of the leading language model companies
2. Complete all the coursework of CS336

We’re not sure which is harder to do 🤔

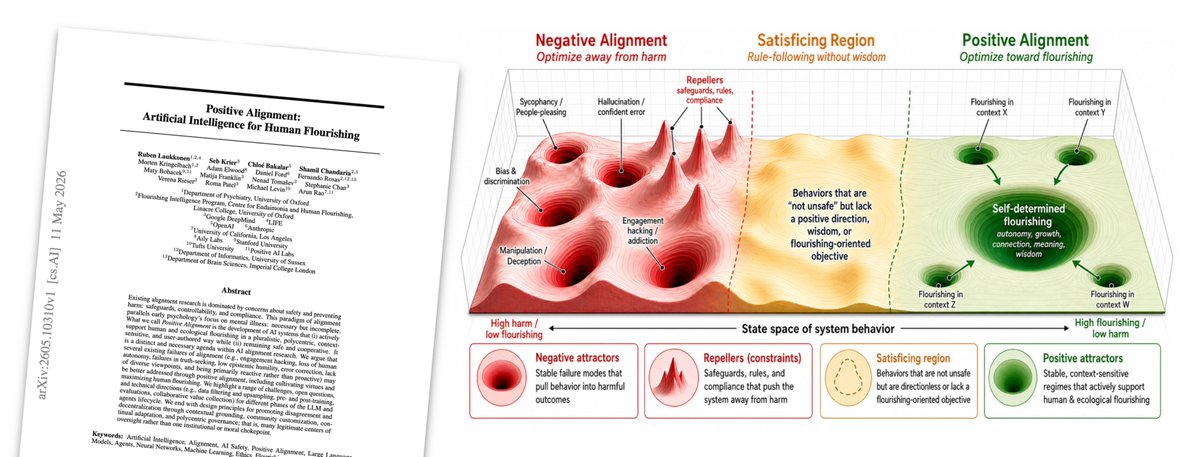

If anyone builds it, everyone thrives. Over the past decade, a lot of important work on AI alignment has focused on avoiding harm. But freedom from harm isn't the same as freedom to flourish. In this paper, we introduce 'Positive Alignment'. A positively aligned agent is one that helps us navigate our own value trade-offs, builds our resilience, and acts as a scaffold for human flourishing. Doing this without slipping into top-down, technocratic paternalism is the great design challenge of our time. We think a lot more research is now needed to explore this frontier: how do we align models that actively help us thrive? Amazing work by @RubenLaukkonen, @drmichaellevin, @weballergy, @verena_rieser, @AdamCElwood, @996roma, @FranklinMatija, @shamilch, @_fernando_rosas, @scychan_brains, @matybohacek, @sudoraohacker, and others. arxiv.org/abs/2605.10310

My guests on Uncapped this week are @kevinhartz and @BennettSiegel, co-founders of the early-stage VC firm A*. They've backed companies like Notion, Mercor, Ramp, Decagon, Similie, and many more. They also announced a new $450m fund today. We discussed the state of venture capital firms in the current AI cycle, what it means for seed specialists, trends with great founders, and what they're seeing in AI.

Timestamps:

(0:00) Intro
(0:25) The A* Capital story
(1:16) Why big funds went into seed
(7:50) The mother of all bubbles
(10:46) Why founders are getting younger
(13:00) Mapping talent, not markets
(16:31) The rise of AI researcher founders
(19:16) Why seed investing is so hard
(22:54) Concentration and venture returns
(27:34) The AI rollup craze
(31:15) AI vs traditional software
(33:15) Robotics and the future of AI
(35:39) What’s next for A*