silence


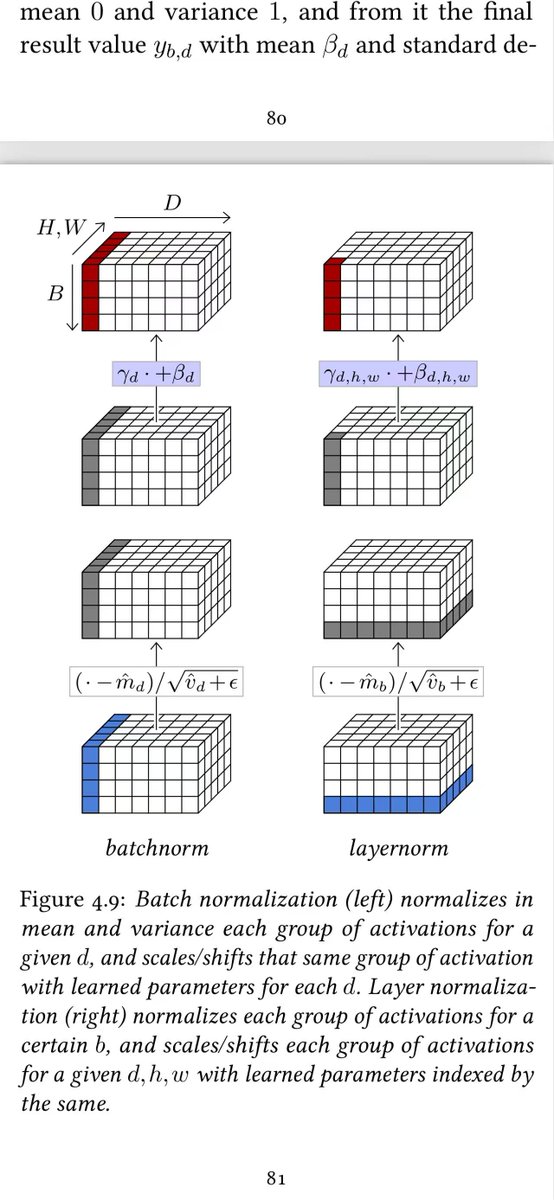

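176 pages, 500,000 downloads, a deep learning textbook that fits on your phone.

The Little Book of Deep Learning, by University of Geneva professor François Fleuret, is the most information-dense introduction to AI I have seen:

Part I, Foundations: machine learning, loss functions, gradient descent, backpropagation, scaling laws

Part II, Models: convolutional networks, attention mechanisms, Transformer, GPT, ViT

Part III, Applications: image classification, object detection, speech recognition, text generation, image generation

Every page comes with diagrams, and every concept is covered concisely, with no filler.

It suits two kinds of readers best: practitioners who want to systematically review the fundamentals underlying AI, and beginners who have been scared off by thousand-page textbooks.

It does one thing: it compresses the full arc of deep learning, from CNNs to Transformers to GPT, into a volume one person can finish in a week, without sacrificing any mathematical rigor.

Fleuret's principle is simple: do not try to be exhaustive; cover only what is needed to understand the core models.

If you have always wanted to study deep learning systematically but were put off by massive tomes, this book may be the best place to start.

Free: fleuret.org/public/lbdl.pdf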

MIT just quietly dropped a free AI curriculum that puts $50,000 university courses to shame.

12 books. Zero tuition. From the same institution that produced the people building the models everyone is talking about.

FOUNDATIONS

1. Foundations of Machine Learning: lnkd.in/gytjT5HC
2. Understanding Deep Learning: lnkd.in/dgcB68Qt
3. Machine Learning Systems: lnkd.in/dkiGZisg

ADVANCED TECHNIQUES

4. Algorithms for ML: algorithmsbook.com
5. Deep Learning: lnkd.in/g2efT6DK

REINFORCEMENT LEARNING

6. RL Basics (Sutton & Barto): lnkd.in/guxqxcZZ
7. Distributional RL: lnkd.in/d4eNP-pe
8. Multi-Agent Systems: marl-book.com
9. Long Game AI: lnkd.in/g-WtzvwX

ETHICS & PROBABILITY

10. Fairness in ML: fairmlbook.org
11. Probabilistic ML Part 1: lnkd.in/g-isbdjj
12. Probabilistic ML Part 2: lnkd.in/gJE9fy4w

This is a complete MIT-level AI education. Not a YouTube playlist. Not a Twitter thread full of fluff. Textbooks written by the researchers who built the field.

The people who actually study this will not just understand AI better than their peers. They will understand it better than most people currently getting paid to work in it.

Most people will bookmark this and never open it. The ones who open it tonight are the ones who show up in 12 months having built something nobody around them understands yet.

Bookmark this. Open the first one tonight.

Follow @cyrilXBT for more resources that actually compound.

Andrej Karpathy could have packaged this into a $2,000 masterclass. Instead, he uploaded it to YouTube for free. Three hours covering how modern LLMs actually work: tokenization, neural nets, RLHF, hallucinations, tool use, reinforcement learning, and systems like AlphaGo and DeepSeek. This isn’t about prompts. It’s about understanding the machine behind the magic.