Simeng Sun

209 posts

@simeng_ssun

Research Scientist @nvidia. ex: PhD @UMassCS; Intern @MSFTResearch, @MetaAI, @AdobeResearch. Opinions are my own and not the views of my employer.

Joined June 2019

630 Following, 568 Followers

Simeng Sun reposted

Phase 1 of Physics of Language Models code release

✅our Part 3.1 + 4.1 = all you need to pretrain strong 8B base model in 42k GPU-hours

✅Canon layers = strong, scalable gains

✅Real open-source (data/train/weights)

✅Apache 2.0 license (commercial ok!)

🔗github.com/facebookresear…

Zeyuan Allen-Zhu, Sc.D.@ZeyuanAllenZhu

(1/8)🍎A Galileo moment for LLM design🍎 As Pisa Tower experiment sparked modern physics, our controlled synthetic pretraining playground reveals LLM architectures' true limits. A turning point that might divide LLM research into "before" and "after." physics.allen-zhu.com/part-4-archite…

Simeng Sun reposted

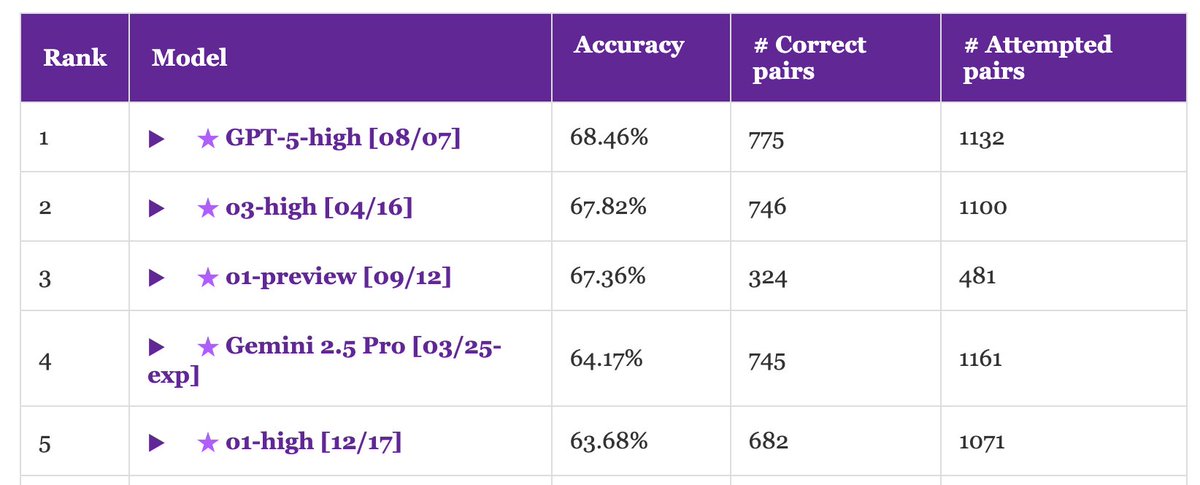

🏆 Our @nvidia KV Cache Compression Leaderboard is now live!

Compare state-of-the-art compression methods side-by-side with KVPress. See which techniques are leading in efficiency and performance. 🥇

huggingface.co/spaces/nvidia/…

Simeng Sun reposted

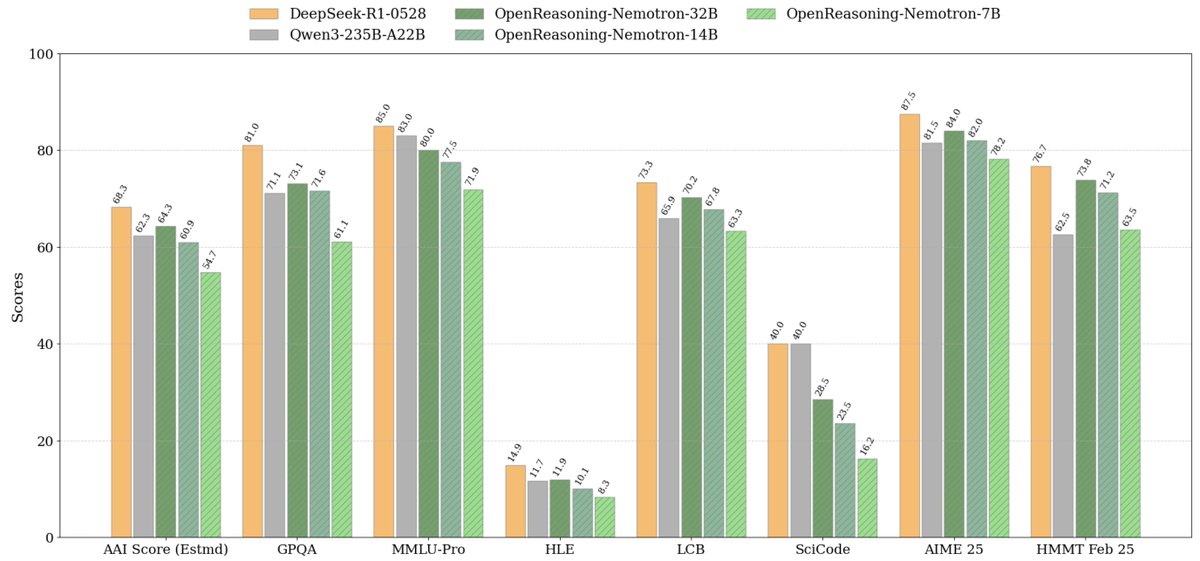

We've released a series of OpenReasoning-Nemotron models (1.5B, 7B, 14B and 32B) that set new SOTA on a wide range of reasoning benchmarks across open-weight models of corresponding size.

The models are based on Qwen2.5 architecture and are trained with SFT on the data generated with DeepSeek-R1-0528.

A few highlights 🧵

Simeng Sun reposted

Scaling up RL is all the rage right now, I had a chat with a friend about it yesterday. I'm fairly certain RL will continue to yield more intermediate gains, but I also don't expect it to be the full story. RL is basically "hey this happened to go well (/poorly), let me slightly increase (/decrease) the probability of every action I took for the future". You get a lot more leverage from verifier functions than explicit supervision, this is great. But first, it looks suspicious asymptotically - once the tasks grow to be minutes/hours of interaction long, you're really going to do all that work just to learn a single scalar outcome at the very end, to directly weight the gradient? Beyond asymptotics and second, this doesn't feel like the human mechanism of improvement for majority of intelligence tasks. There's significantly more bits of supervision we extract per rollout via a review/reflect stage along the lines of "what went well? what didn't go so well? what should I try next time?" etc. and the lessons from this stage feel explicit, like a new string to be added to the system prompt for the future, optionally to be distilled into weights (/intuition) later a bit like sleep. In English, we say something becomes "second nature" via this process, and we're missing learning paradigms like this. The new Memory feature is maybe a primordial version of this in ChatGPT, though it is only used for customization not problem solving. Notice that there is no equivalent of this for e.g. Atari RL because there are no LLMs and no in-context learning in those domains.
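The "slightly increase (/decrease) the probability of every action I took" description can be illustrated with a minimal REINFORCE-style sketch. This is a toy under stated assumptions, not anything from the post: the policy is a stateless softmax over a handful of actions, and the names `softmax` and `reinforce_update` are made up for illustration.

```python
import math

def softmax(logits):
    """Turn raw scores into action probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_update(logits, actions, reward, lr=0.1):
    """One REINFORCE-style step for a toy, stateless softmax policy.

    A single scalar reward, known only at the end of the rollout, weights
    the log-probability gradient of every action that was taken.
    """
    probs = softmax(logits)
    new_logits = list(logits)
    for a in actions:
        for i in range(len(logits)):
            # d/d logit_i of log softmax(logits)[a] is 1[i == a] - probs[i]
            grad = (1.0 if i == a else 0.0) - probs[i]
            new_logits[i] += lr * reward * grad
    return new_logits

# A rollout that took action 0 twice and ended well (reward = +1)
# makes action 0 slightly more likely next time.
updated = reinforce_update([0.0, 0.0, 0.0], actions=[0, 0], reward=1.0)
```

However long the rollout, the only learning signal here is the one `reward` value, which is the asymptotic concern raised above.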

Example algorithm: given a task, do a few rollouts, stuff them all into one context window (along with the reward in each case), use a meta-prompt to review/reflect on what went well or not to obtain string "lesson", to be added to system prompt (or more generally modify the current lessons database). Many blanks to fill in, many tweaks possible, not obvious.
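A rough sketch of that loop, with the many blanks left as blanks. Everything here is hypothetical: `run_rollout` and `reflect` stand in for whatever model calls one would actually use, and appending the lesson to the system prompt is just the simplest possible "lessons database".

```python
from typing import Callable, Tuple

Rollout = Tuple[str, float]  # (transcript, reward)

def learn_lesson(
    task: str,
    system_prompt: str,
    run_rollout: Callable[[str, str], Rollout],  # hypothetical: (system_prompt, task) -> rollout
    reflect: Callable[[str], str],               # hypothetical: meta-prompted review -> lesson string
    n_rollouts: int = 4,
) -> str:
    """Do a few rollouts, review them together, and return an updated system prompt."""
    rollouts = [run_rollout(system_prompt, task) for _ in range(n_rollouts)]

    # Stuff all attempts, with their rewards, into one context window.
    context = "\n\n".join(
        f"Attempt {i + 1} (reward={reward}):\n{transcript}"
        for i, (transcript, reward) in enumerate(rollouts)
    )

    # Ask what went well or not, and get back a single string "lesson".
    lesson = reflect(context).strip()

    # Add the lesson only if it is non-empty and not already present.
    if lesson and lesson not in system_prompt:
        system_prompt = system_prompt + "\nLesson: " + lesson
    return system_prompt
```

Distilling or pruning lessons over time, so the prompt does not grow without bound, is deliberately left out of this sketch.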

Example of lesson: we know LLMs can't super easily see letters due to tokenization and can't super easily count inside the residual stream, hence 'r' in 'strawberry' being famously difficult. Claude system prompt had a "quick fix" patch - a string was added along the lines of "If the user asks you to count letters, first separate them by commas and increment an explicit counter each time and do the task like that". This string is the "lesson", explicitly instructing the model how to complete the counting task, except the question is how this might fall out from agentic practice, instead of it being hard-coded by an engineer, how can this be generalized, and how lessons can be distilled over time to not bloat context windows indefinitely.
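For illustration, here is the procedure that the "quick fix" string describes, written out as ordinary code. It is a sketch of the instruction as paraphrased above, not the actual system prompt text or anything a model runs internally; `count_letter` is a name made up for this example.

```python
def count_letter(word: str, target: str) -> int:
    """Count occurrences of `target` by separating letters and keeping an explicit counter."""
    letters = list(word)  # the "s, t, r, a, w, ..." view of the word
    counter = 0
    for letter in letters:
        if letter.lower() == target.lower():
            counter += 1  # increment the explicit counter each time
    return counter

print(count_letter("strawberry", "r"))  # prints 3
```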

TLDR: RL will lead to more gains because when done well, it is a lot more leveraged, bitter-lesson-pilled, and superior to SFT. It doesn't feel like the full story, especially as rollout lengths continue to expand. There are more S curves to find beyond, possibly specific to LLMs and without analogues in game/robotics-like environments, which is exciting.

@giffmana To @simeng_ssun: this corresponds to our conversation a few weeks ago, and you should try a smaller lr whenever possible, and always disable gradient accumulation (as long as FSDP is used).

This paper is pretty cool; through careful tuning, they show:

- you can train LLMs with batch-size as small as 1, just need smaller lr.

- even plain SGD works at small batch.

- Fancy optims mainly help at larger batch. (This reconciles discrepancy with past ResNet research.)

- At small batch, optim hparams are very insensitive!

I find this cool for two reasons:

1) When we did ScalingViT I also surprisingly found (but never published) that pure SGD works much better than expected. However, a small gap always remained, so we dropped it in favour of (our variant of) AdaFactor. The results here confirm this.

2) This is really good news for fine-tuning on small data with few GPUs. Drop the LoRA and do full fine-tuning with tiny batch-size and plain SGD!

A word of caution, because:

A) This is mostly done at tiny scale (30M params), to allow running many experiments. It is unclear how well the results hold at larger scale. They do show a 1.3B result, but it's usually beyond 7B that things start to get more difficult.

B) This was all with transformers with QK-Norm, which has a very stabilizing effect. I'd be curious whether it holds without it, but I give it a chance that it might.

C) For large-scale training, running on many (>10k) chips, large batch size is a necessity, not a choice. And they do show that at large batch (not even that large: 4k), fancy optimizers matter significantly.
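To make "batch size 1 with plain SGD and a small lr" concrete, here is a toy sketch. It is only an illustration on a two-parameter linear regression, not the paper's setup and not an LLM; the function names, learning rate, and epoch count are assumptions chosen for the example.

```python
def sgd_step(params, grads, lr):
    """Vanilla SGD: no momentum, no adaptive scaling, no weight decay."""
    return [p - lr * g for p, g in zip(params, grads)]

def fit_line(data, lr=0.01, epochs=200):
    """Fit y = w * x + b with one example per update (batch size 1)."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            # Gradients of the squared error err**2 with respect to w and b.
            w, b = sgd_step([w, b], [2 * err * x, 2 * err], lr)
    return w, b

# Noise-free points on the line y = 2x + 1.
data = [(float(x), 2.0 * x + 1.0) for x in range(-2, 3)]
w, b = fit_line(data)
```

On this toy problem the fit ends up very close to w = 2 and b = 1. Whether the same simplicity carries over to large models is exactly what caveats A) to C) are about.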

Micah Goldblum@micahgoldblum

🚨 Did you know that small-batch vanilla SGD without momentum (i.e. the first optimizer you learn about in intro ML) is virtually as fast as AdamW for LLM pretraining on a per-FLOP basis? 📜 1/n

Simeng Sun reposted

📢 Can LLMs really reason outside the box in math? Or are they just remixing familiar strategies?

Remember how DeepSeek R1 and o1 impressed us on Olympiad-level math, yet were failing at simple arithmetic? 😬

We built a benchmark to find out → OMEGA Ω 📐

💥 We found that although very powerful, RL struggles to compose skills and to innovate new strategies that were not seen during training. 👇

work w. @UCBerkeley @allen_ai

A thread on what we learned 🧵

Simeng Sun reposted

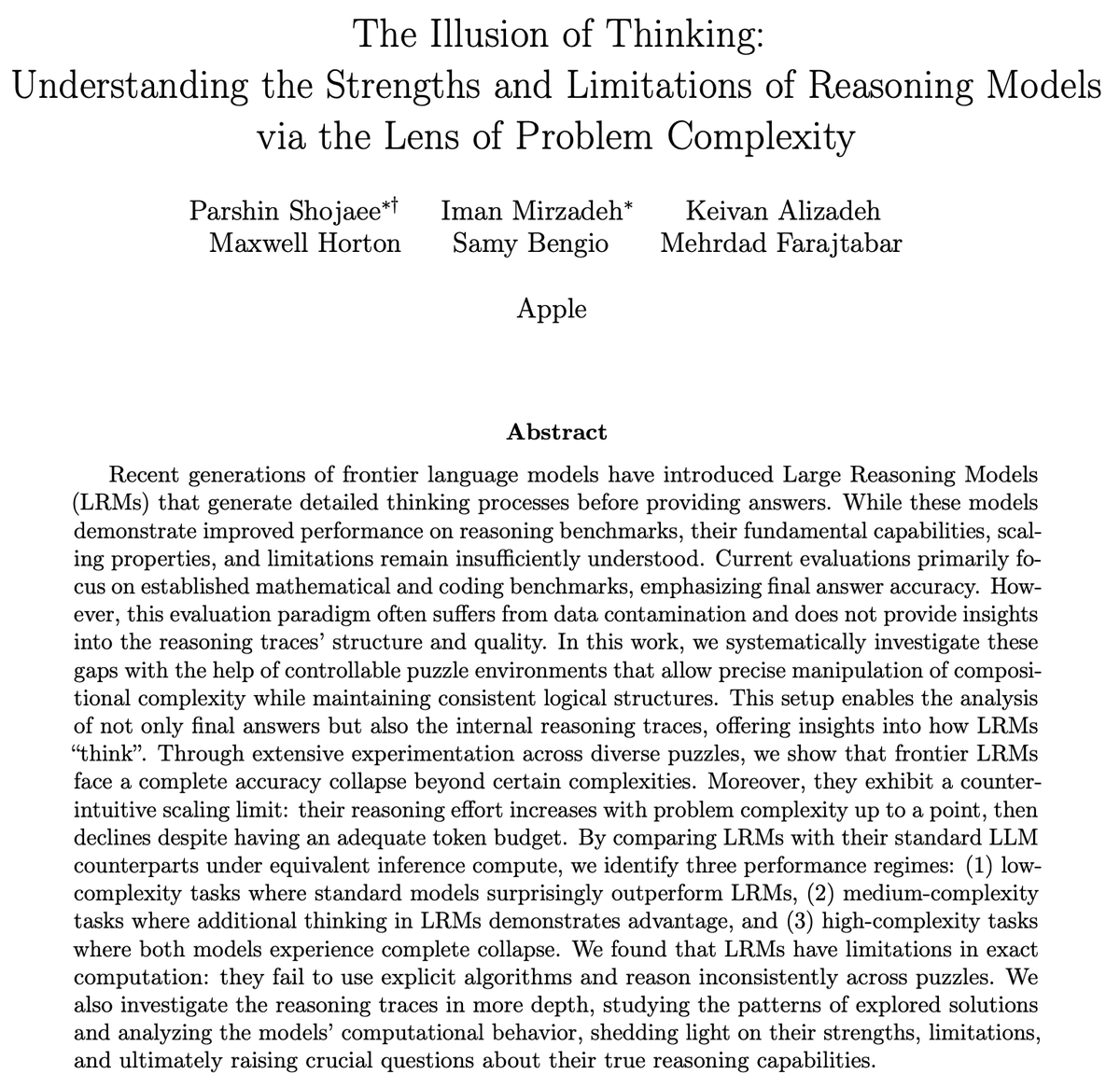

🧵 1/8 The Illusion of Thinking: Are reasoning models like o1/o3, DeepSeek-R1, and Claude 3.7 Sonnet really "thinking"? 🤔 Or are they just throwing more compute towards pattern matching?

The new Large Reasoning Models (LRMs) show promising gains on math and coding benchmarks, but we found their fundamental limitations are more severe than expected.

In our latest work, we compared each “thinking” LRM with its “non-thinking” LLM twin. Unlike most prior works that only measure the final performance, we analyzed their actual reasoning traces—looking inside their long "thoughts". Our analysis reveals several interesting results ⬇️

📄 machinelearning.apple.com/research/illus…

Work led by @ParshinShojaee and @i_mirzadeh, and with @KeivanAlizadeh2, @mchorton1991, Samy Bengio.

Simeng Sun reposted

✨ New paper ✨

🚨 Scaling test-time compute can lead to inverse or flattened scaling!!

We introduce SealQA, a new challenge benchmark w/ questions that trigger conflicting, ambiguous, or unhelpful web search results. Key takeaways:

➡️ Frontier LLMs struggle on Seal-0 (SealQA’s core set): most chat models (incl. GPT-4.1 w/ browsing) achieve near-zero accuracy

➡️ Advanced reasoning models (e.g., DeepSeek-R1) can be highly vulnerable to noisy search results

➡️ More test-time compute does not yield reliable gains: o-series models often plateau or decline early

➡️ "Lost-in-the-middle" is less of an issue, but models still fail to reliably identify relevant docs amid distractors

📜: arxiv.org/abs/2506.01062

🤗: huggingface.co/datasets/vtllm…

🧵:👇

Simeng Sun reposted

How much do language models memorize?

"We formally separate memorization into two components: unintended memorization, the information a model contains about a specific dataset, and generalization, the information a model contains about the true data-generation process. When we completely eliminate generalization, we can compute the total memorization, which provides an estimate of model capacity: our measurements estimate that GPT-style models have a capacity of approximately 3.6 bits per parameter. We train language models on datasets of increasing size and observe that models memorize until their capacity fills, at which point “grokking” begins, and unintended memorization decreases as models begin to generalize."
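As a back-of-the-envelope reading of the 3.6 bits per parameter figure, the sketch below converts a parameter count into an implied memorization capacity. The constant comes from the quoted abstract; the helper names and the 1B-parameter example are assumptions for illustration only.

```python
BITS_PER_PARAM = 3.6  # capacity estimate quoted in the abstract above

def capacity_bits(n_params: int) -> float:
    """Implied total memorization capacity in bits."""
    return BITS_PER_PARAM * n_params

def capacity_megabytes(n_params: int) -> float:
    """The same capacity in decimal megabytes (8 bits per byte, 1e6 bytes per MB)."""
    return capacity_bits(n_params) / 8 / 1e6

# Hypothetical 1B-parameter model: about 3.6e9 bits, roughly 450 MB.
mb = capacity_megabytes(1_000_000_000)
```

Under this estimate, a training set carrying far more information than that cannot be memorized wholesale, which is where the abstract says generalization has to take over.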

Simeng Sun reposted

Does RL truly expand a model’s reasoning🧠capabilities? Contrary to recent claims, the answer is yes—if you push RL training long enough!

Introducing ProRL 😎, a novel training recipe that scales RL to >2k steps, empowering the world’s leading 1.5B reasoning model💥and offering new insights into the debate.

Simeng Sun reposted

Long-form inputs (e.g., needle-in-a-haystack setups) are a crucial aspect of high-impact LLM applications. While previous studies have flagged issues like positional bias and distracting documents, they've missed a crucial element: the size of the gold/relevant context.

In our latest study, we look into how the size of these gold contexts impacts LLM performance in needle-in-a-haystack scenarios. The verdict? **Smaller gold contexts severely amplify positional bias.**

Why should you care? If you're developing LLMs to sift through a large number of documents of varying sizes, beware: a smaller gold document among larger distractors can throw your pipeline off course. Basically, practitioners need to keep an eye not only on the position of the likely gold document but also on its size relative to others.

📄Read the preprint: arxiv.org/abs/2505.18148

Work led by Owen Bianchi and other collaborators at @DataTecnica