Igor Gitman


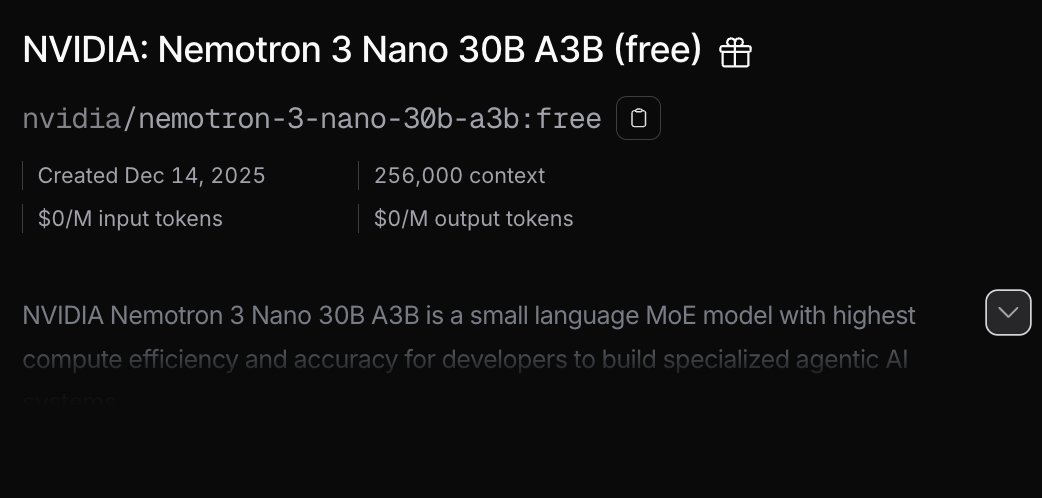

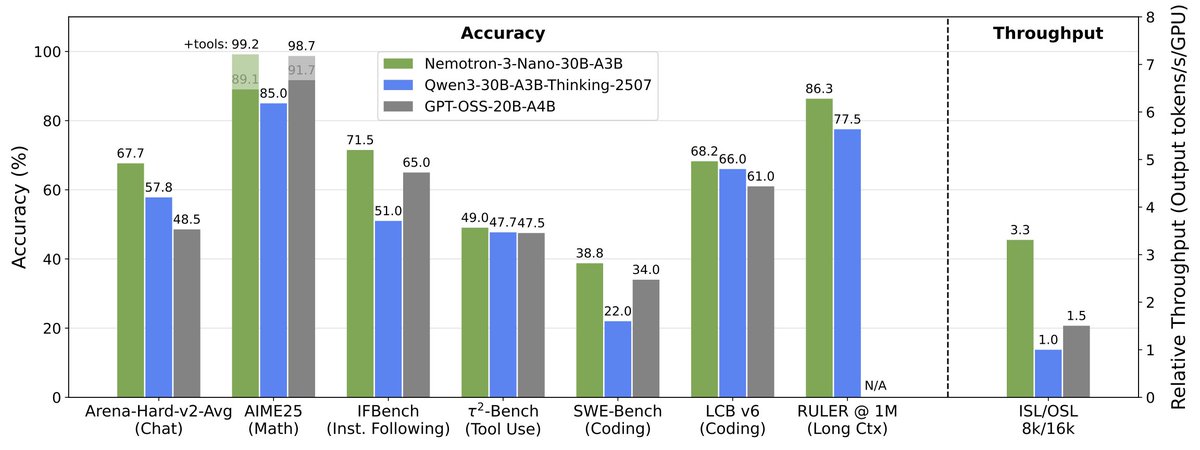

Announcing NVIDIA Nemotron 3 Super!
💚 120B-12A Hybrid SSM Latent MoE, designed for Blackwell
💚 36 on AAIndex v4
💚 Up to 2.2X faster than GPT-OSS-120B in FP4
💚 Open data, open recipe, open weights
Models, tech report, etc. here: research.nvidia.com/labs/nemotron/…
And yes, Ultra is coming!

Well, seems we're not getting DeepSeek V4 today, but we're getting what amounts to its lite version, runnable on normal hardware. New architecture, fast, 1M context… and it's a bit weaker than the equivalent Qwen 3.5.

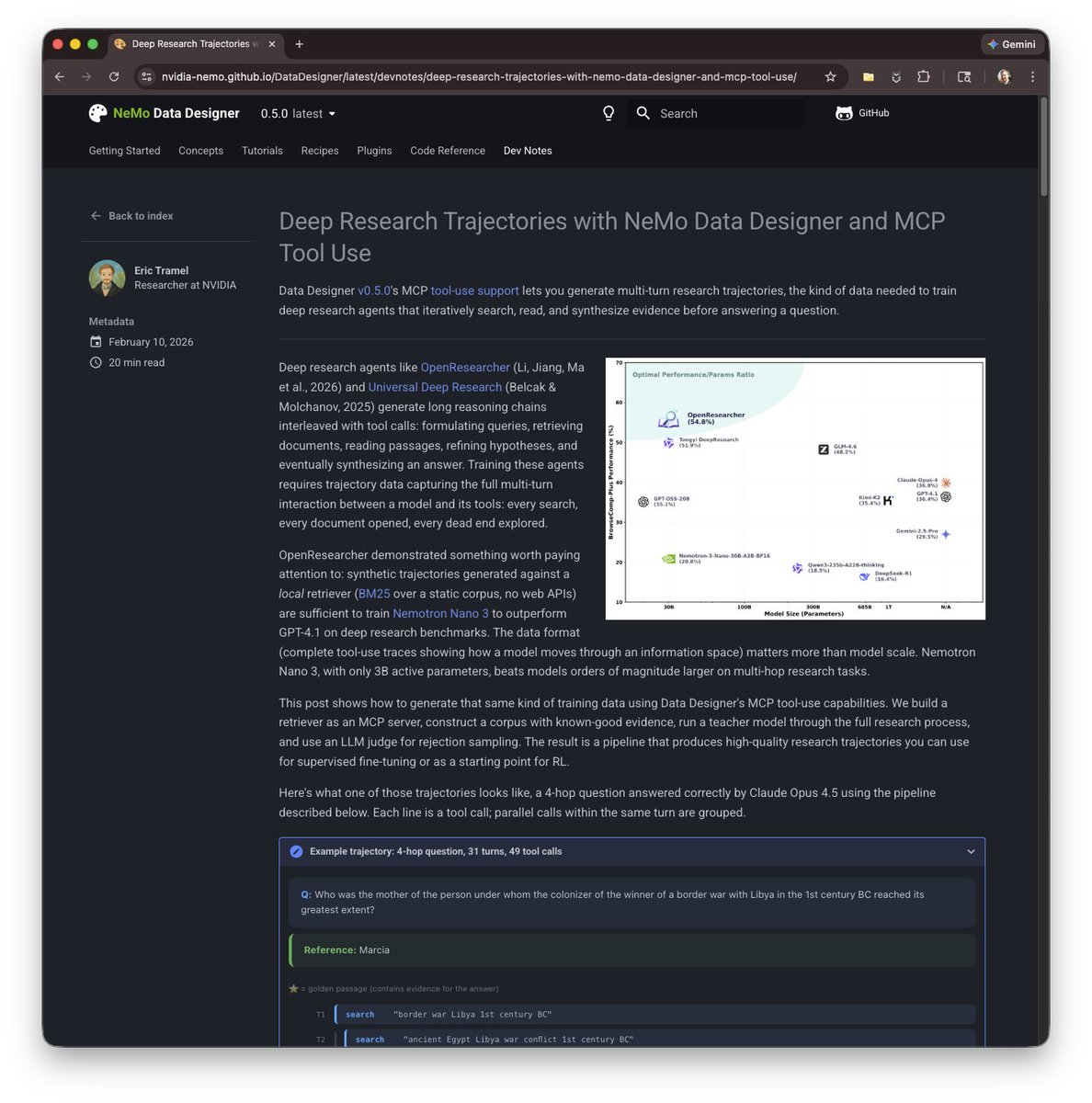

🚀 Introducing OpenResearcher: a fully offline pipeline for synthesizing 100+ turn deep-research trajectories, with no search/scrape APIs, no rate limits, and no nondeterminism.
💡 We use GPT-OSS-120B + a local retriever + a 10T-token corpus to generate long-horizon tool-use traces (search → open → find) that look like real browsing, but are free + reproducible.
📈 The payoff: SFT on these trajectories takes Nemotron-3-Nano-30B-A3B from 20.8% → 54.8% accuracy on BrowseComp-Plus (+34.0).
🧩 What makes it work?
🔎 Offline corpus = 15M FineWeb docs + 10K “gold” passages (bootstrapped once)
🧰 Explicit browsing primitives = better evidence-finding than “retrieve-and-read”
🎯 Rejection sampling = keep only successful long-horizon traces
🧵 And we’re releasing everything:
✅ code + search engine + corpus recipe
✅ 96K-ish trajectories + eval logs
✅ trained models + live demo
👨‍💻 GitHub: github.com/TIGER-AI-Lab/O…
🤗 Models & data: huggingface.co/collections/TI…
🚀 Demo: huggingface.co/spaces/OpenRes…
🔎 Eval logs: huggingface.co/datasets/OpenR…
#llms #agentic #deepresearch #tooluse #opensource #retrieval #SFT
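The "keep only successful long-horizon traces" step in the post above can be pictured with a minimal sketch. This is not the OpenResearcher code: the `Trajectory` fields, the exact-match success check, and the `min_turns` threshold are all hypothetical stand-ins chosen for illustration (the real pipeline may judge correctness differently).

```python
from dataclasses import dataclass, field


@dataclass
class Trajectory:
    """Hypothetical record for one synthesized browsing trace."""
    question: str
    gold_answer: str
    final_answer: str
    tool_calls: list[str] = field(default_factory=list)  # e.g. "search", "open", "find"


def is_successful(traj: Trajectory) -> bool:
    # Assumption: success means a normalized exact match with the gold answer.
    return traj.final_answer.strip().lower() == traj.gold_answer.strip().lower()


def rejection_sample(trajs: list[Trajectory], min_turns: int = 1) -> list[Trajectory]:
    """Keep traces that reach the right answer and use at least `min_turns` tool calls."""
    return [t for t in trajs if is_successful(t) and len(t.tool_calls) >= min_turns]


# Toy data, invented purely for illustration.
candidates = [
    Trajectory("Capital of France?", "Paris", " paris ", ["search", "open", "find"]),
    Trajectory("Capital of France?", "Paris", "Lyon", ["search", "open"]),
    Trajectory("Capital of France?", "Paris", "Paris", []),
]
kept = rejection_sample(candidates, min_turns=2)
```

Only the first toy trace survives: the second has the wrong answer, and the third answers correctly without any tool use, so it falls below the assumed turn threshold.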

Today, @NVIDIA is launching the open Nemotron 3 model family, starting with Nano (30B-3A), which pushes the frontier of accuracy and inference efficiency with a novel hybrid SSM Mixture of Experts architecture. Super and Ultra are coming in the next few months.
