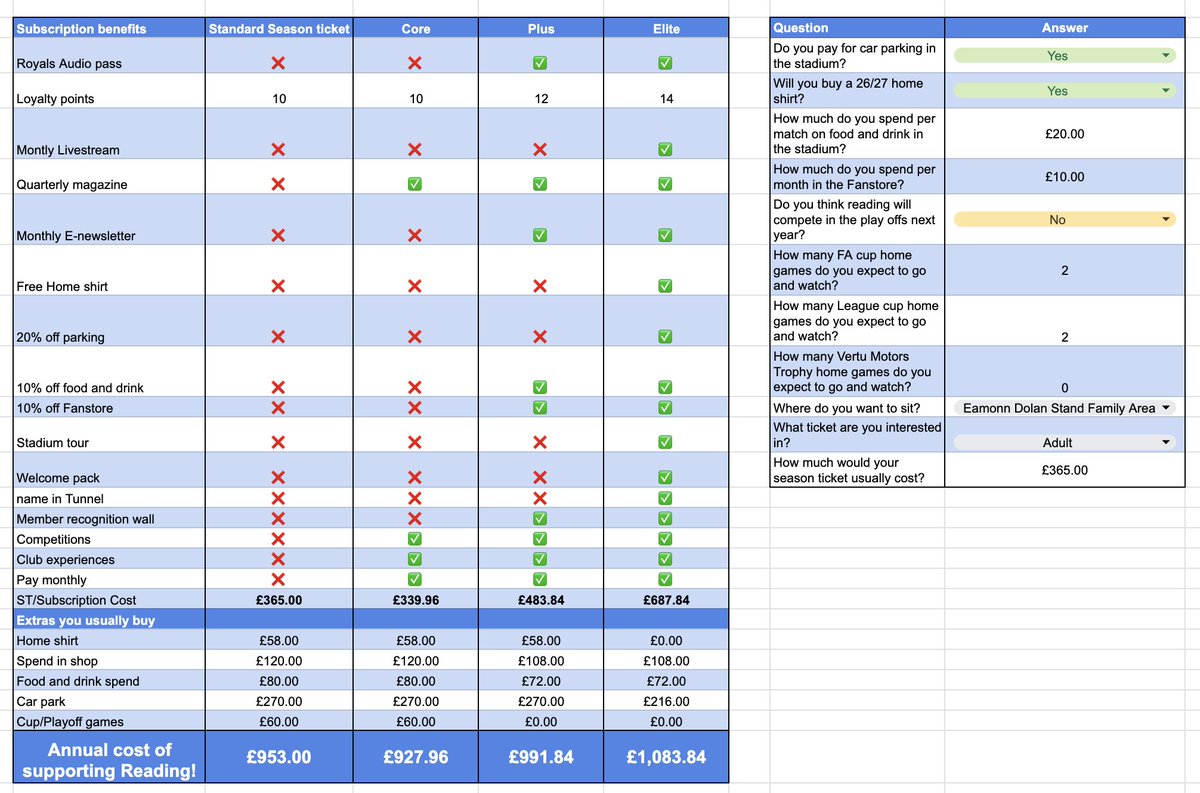

Simon Maple retweeted

Your eval leaderboard can change completely depending on which model grades the answers.

Simon Maple (@sjmaple) reran the same benchmark suite using three different LLM judges: Sonnet, GPT-5.5, and Opus-4-7. Nothing else changed. The tasks, rubrics, scenarios, and model outputs were identical.

The scores were not.

One model moved by 47 points on a single skill depending on the judge. gpt-5.3 ranked near the top under Sonnet, then dropped sharply under GPT-5.5.

Opus consistently scored itself higher than the other judges did. GPT-5.5 turned out to be dramatically stricter overall, averaging almost 7 points lower than Sonnet across the benchmark.

What makes this especially interesting is that the instability wasn’t evenly distributed.

Tasks with concrete pass/fail conditions stayed relatively consistent across judges. But as soon as the rubric involved interpretation, structure, writing quality, or “best practices”, the variance widened fast. Two judges could look at the exact same output and disagree by double digits on whether the model had actually solved the task properly or just approximated it convincingly.
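One rough way to see this in your own evals is to measure, per task, the gap between the most and least generous judge. The sketch below assumes a 0-100 scale; the task names, judge names, scores, and the 10-point threshold are all made up for illustration and are not taken from the benchmark.

```python
# Hypothetical scores for ONE model output: task -> judge -> score (0-100).
# These numbers are illustrative only, not results from the benchmark.
scores = {
    "unit_tests_pass": {"judge_a": 90, "judge_b": 88, "judge_c": 91},
    "follows_best_practices": {"judge_a": 82, "judge_b": 61, "judge_c": 75},
}

def judge_spread(per_judge):
    """Gap between the most and least generous judge on one task."""
    return max(per_judge.values()) - min(per_judge.values())

spreads = {task: judge_spread(per_judge) for task, per_judge in scores.items()}

# Flag tasks where judges disagree by double digits (assumed threshold).
flagged = [task for task, spread in spreads.items() if spread >= 10]

for task, spread in spreads.items():
    print(f"{task}: spread={spread}")
print("judge-sensitive tasks:", flagged)
```

Tasks that land in `flagged` are the ones where a single-judge score is least trustworthy on its own.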

That has pretty major implications for how people read benchmark charts right now.

A lot of public evals are presented as if the number is objective, when in reality the scoring model itself is shaping the outcome. In some cases, the judge preference is large enough to reorder the leaderboard entirely.
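A quick check for this, sketched under assumptions: rank the models separately under each judge and see which leaderboard positions agree. The model names, judge names, and scores here are hypothetical placeholders, not figures from the benchmark.

```python
# Hypothetical mean scores: judge -> model -> score. Illustrative only.
by_judge = {
    "judge_a": {"model_x": 88, "model_y": 84, "model_z": 79},
    "judge_b": {"model_x": 81, "model_y": 70, "model_z": 76},
}

def ranking(model_scores):
    """Model names ordered from highest to lowest score."""
    return sorted(model_scores, key=model_scores.get, reverse=True)

rankings = {judge: ranking(s) for judge, s in by_judge.items()}

# The leaderboard is stable only if every judge produces the same order.
stable = len({tuple(r) for r in rankings.values()}) == 1

# Positions (0 = first place) that every judge fills with the same model.
n_models = len(next(iter(rankings.values())))
agreed_positions = [
    i for i in range(n_models)
    if len({r[i] for r in rankings.values()}) == 1
]

print(rankings)
print("stable leaderboard:", stable)
print("positions all judges agree on:", agreed_positions)
```

In this toy data the top spot holds under both judges while the positions below it swap, which is the same shape of result the post describes.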

The interesting exception was Opus. It stayed in first place regardless of which model acted as the judge. Everything below it shifted around.

Read the full breakdown here:

tessl.io/blog/your-benc…
