Soham

627 posts

@sohamg121

Research Scientist @MistralAI on multimodal/audio LLMs. Previously: @GoogleDeepMind, MS @CarnegieMellon

Today, we are launching NextToken - a single place to build production-grade agents, apps and analytics. Code-forward. Cloud Hosted. Zero setup. Extremely affordable. @nexttoken_co

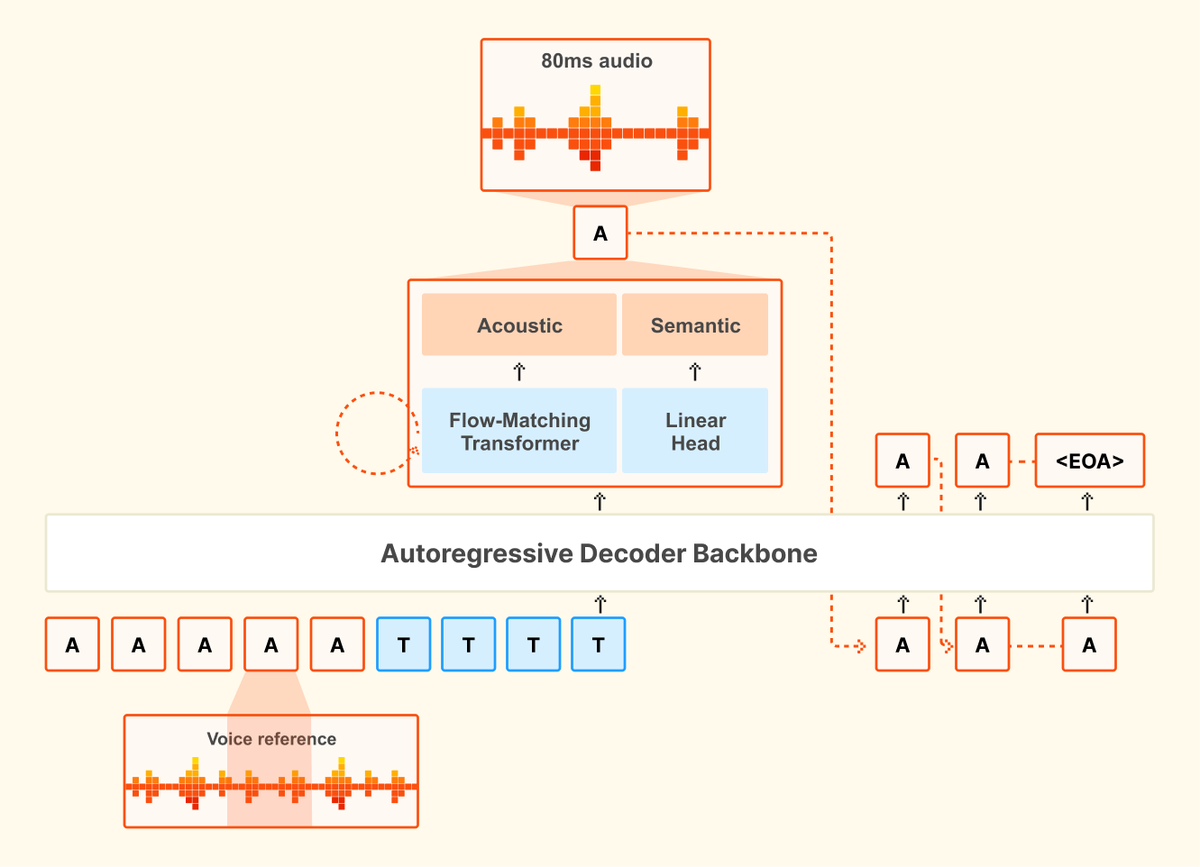

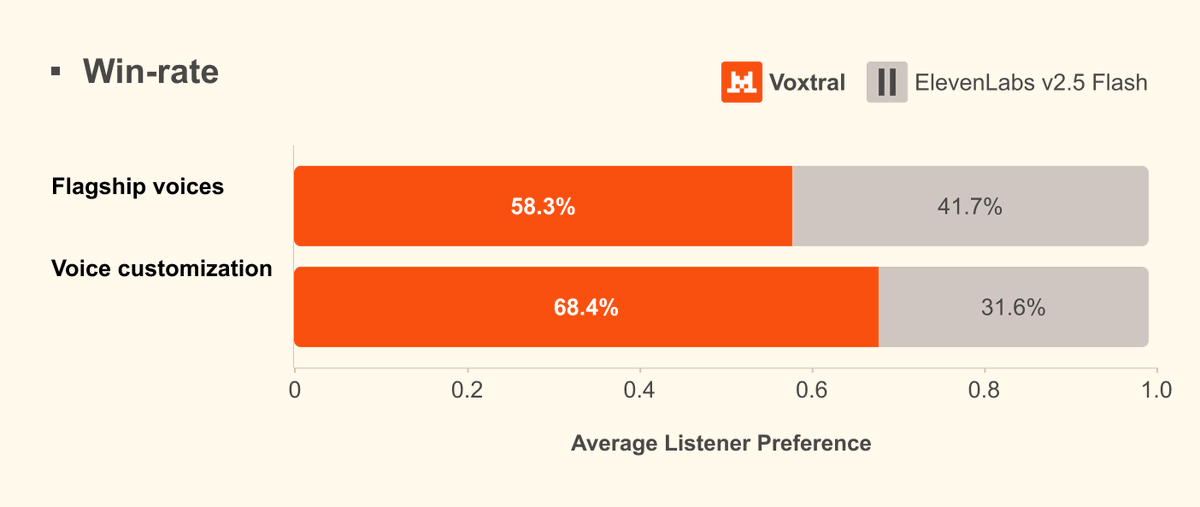

🔊 Introducing Voxtral TTS: our new frontier open-weight model for natural, expressive, and ultra-fast text-to-speech. 🎭 Realistic, emotionally expressive speech. 🌍 Supports 9 languages and accurately captures diverse dialects. ⚡ Very low latency for time-to-first-audio. 🔄 Easily adaptable to new voices.

$100K to anyone who can help recover it

Speech isn’t just text read out loud. 💬 Real conversations are dynamic, full of interruptions, and context-rich — and benchmarks should match. Introducing Audio MultiChallenge (Audio MC), the first benchmark built to test how well native Speech-to-Speech models handle real conversations.

This is Sergey Brin's yacht. He got so bored of sitting on this $450M yacht that he had to get out and go create things again. The only true long-term satisfaction for man is to create, either things, or babies.

Your government needs YOU to transform the federal government through modern software development. If you’re up for a huge challenge, join 1,000 of the country’s best and brightest technologists in the inaugural class of @USTechForce. We are partnering with the top U.S. technology companies to take on this challenge. You’ll learn a ton, network across the most important government agencies and private sector companies, ultimately creating powerful career opportunities whether you want to continue in public service or join the private sector. I am grateful to @POTUS for ensuring that America remains the world’s technology leader. Go to TechForce.gov to apply today.

🚨 BREAKING: Text Leaderboard Update 🐳 Deepseek-v3.2 enters the leaderboard at #38, and Deepseek-v3.2-thinking lands at #41. For comparison, previous versions ranked higher: 🔹 v3.2 ranks #38 (–5 pts vs v3.1 and –14 pts vs v3.2-exp) 🔹 v3.2-thinking ranks #41 (–7 pts vs v3.1-thinking and –5 pts vs v3.2-exp-thinking) Both models show their biggest gains in Legal by rank, with improvements of +28 points for v3.2 and +19 points for v3.2-thinking when compared to their v3.1 predecessors. The largest drop appears in Healthcare, where v3.2-thinking falls by 25 points. Where v3.2 performs strongest (among open models): 🔹 #1 in Math and Legal 🔹 Top 10 in Multi-Turn, Media, and Business Where v3.2-thinking performs strongest (among open models): 🔹 #1 in Science 🔹 Top 5 in Legal These updates reflect @deepseek_ai’s ongoing work to expand and refine its open source model family.