Squinter 😐

@spudheadcapital

ideologically molested value investor

230k GPUs, including 30k GB200s, are operational for training Grok @xAI in a single supercluster called Colossus 1 (inference is done by our cloud providers). At Colossus 2, the first batch of 550k GB200s & GB300s, also for training, starts going online in a few weeks. As Jensen Huang has stated, @xAI is unmatched in speed. It’s not even close.

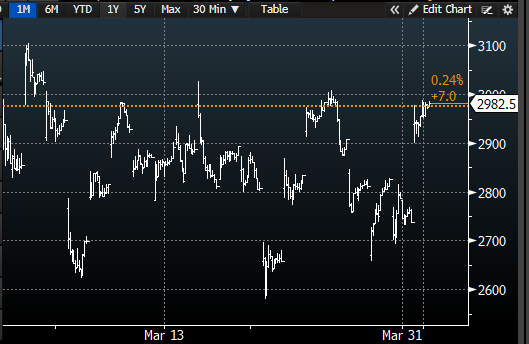

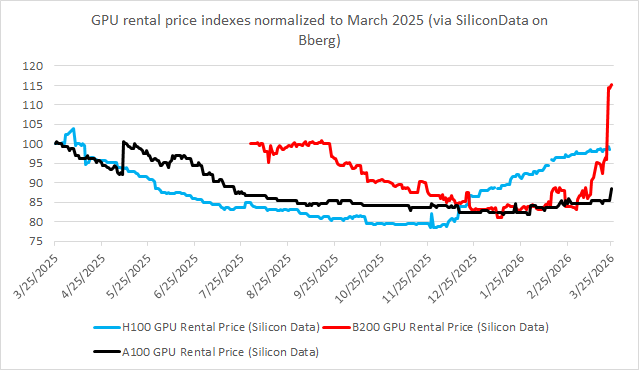

We aren’t going to have semi cycles the way we used to, predicated on end-market product cycles. We will now have capital cycles, where the commodity of compute oscillates between expensive and cheap based on lumpy supply additions and the unlocking of new capabilities.

Participants in the GPU rental market had grown accustomed to a familiar regime: relentless cost declines, falling $/token, and the assumption that each new chip generation would compress pricing further. Many onlookers extrapolated this into outright bearishness on older hardware, calling for a wave of impairments across AI clouds as Hopper-era GPUs were rendered economically obsolete. As Dylan noted, the logic seemed straightforward - newer chips dramatically outperform Hopper, so prices should fall. The rise of agentic AI has shifted the paradigm. GPU rental prices are now repricing violently toward real-time economic value under supply constraint. As highlighted in the transcript, even though models like GPT-5.4 are cheaper and more efficient - producing more tokens per GPU at higher quality - this does not reduce demand. Instead, it expands demand, in line with Jevons' Paradox. SemiAnalysis data shows the same dynamic: token usage is accelerating rapidly because the ROI is obvious, making compute demand increasingly price insensitive. In this new regime, supply constraints and token value dominate pricing. We think this paradigm is here to stay, and may well intensify.