Stephanie



@stephe_lee

VP People & Programs @nansen_ai • Building A New Way To Work • ICF-ACC Coach • (prev. Remote Lead @cargo_one_; Team Experience @buffer)

Full house last night at our Claude Code Community meetup 🔥 7 demos, all about AGENTS. Thanks @mdelvita and @AnthropicAI, great vibes!

A small thank you to everyone using Claude: We’re doubling usage outside our peak hours for the next two weeks.

Your agent. An onchain lens. Full details tomorrow.

My biggest takeaways from @bcherny:

1. Coding is now “solved” for most use cases. Boris hasn’t written a single line of code by hand since November, with 100% of his work now authored by Claude Code. At the same time, he remains one of the most productive engineers at Anthropic, shipping 10 to 30 pull requests daily while leading the team.
2. Anthropic has seen a 200% increase in engineer productivity since adopting Claude Code. As Boris notes, “Back at Meta, with hundreds of engineers working on productivity, we’d see gains of a few percentage points in a year. Now we’re seeing hundreds of percentage points.”
3. AI is moving beyond writing code to generating ideas. “Claude is starting to come up with ideas. It’s looking through feedback, bug reports, and telemetry, then suggesting features to ship.”
4. The next roles to be transformed are those adjacent to engineering. Product managers, designers, and data scientists will see similar transformations as agentic AI expands beyond coding. “Any kind of job where you use computer tools will be next.”
5. Build for the model six months from now, not today. One of Boris’s key principles is to design products for future AI capabilities, not current ones. “It’s going to be uncomfortable because your product-market fit won’t be very good for the first six months. But when that model comes out, you’ll hit the ground running.”
6. Watch for “latent demand.” Claude Code was built by observing what people were already trying to do, and then making it easier. Cowork emerged when they noticed people using Claude Code for non-coding tasks like analyzing MRIs or recovering wedding photos from corrupted drives.
7. Don’t optimize for token cost. Boris advises companies to give engineers unlimited tokens during experimentation phases. “At small scale, the token cost is still relatively low compared to their salary. If an idea works and scales, that’s when you optimize it.”
8. Underfund headcount on purpose. When Boris puts one engineer on a project, they’re forced to let AI do more of the work. Constraint drives creative use of AI tooling, not just faster typing.
9. The most successful people in the future will be generalists. “Try to be a generalist more than you have in the past. Some of the most effective engineers cross over disciplines. The people who will be rewarded most won’t just be AI-native; they’ll be curious generalists who can think about the broader problem they’re solving.”
10. Always use the most capable model, not the cheapest. A less intelligent model often burns more tokens correcting mistakes than a smarter one spends getting it right the first time. Boris runs maximum effort on Opus 4.6 for everything.

Here's the full conversation: youtube.com/watch?v=We7BZV…

🦞🚨 POLLY WORKS AND IS USEFUL 🚨🦞

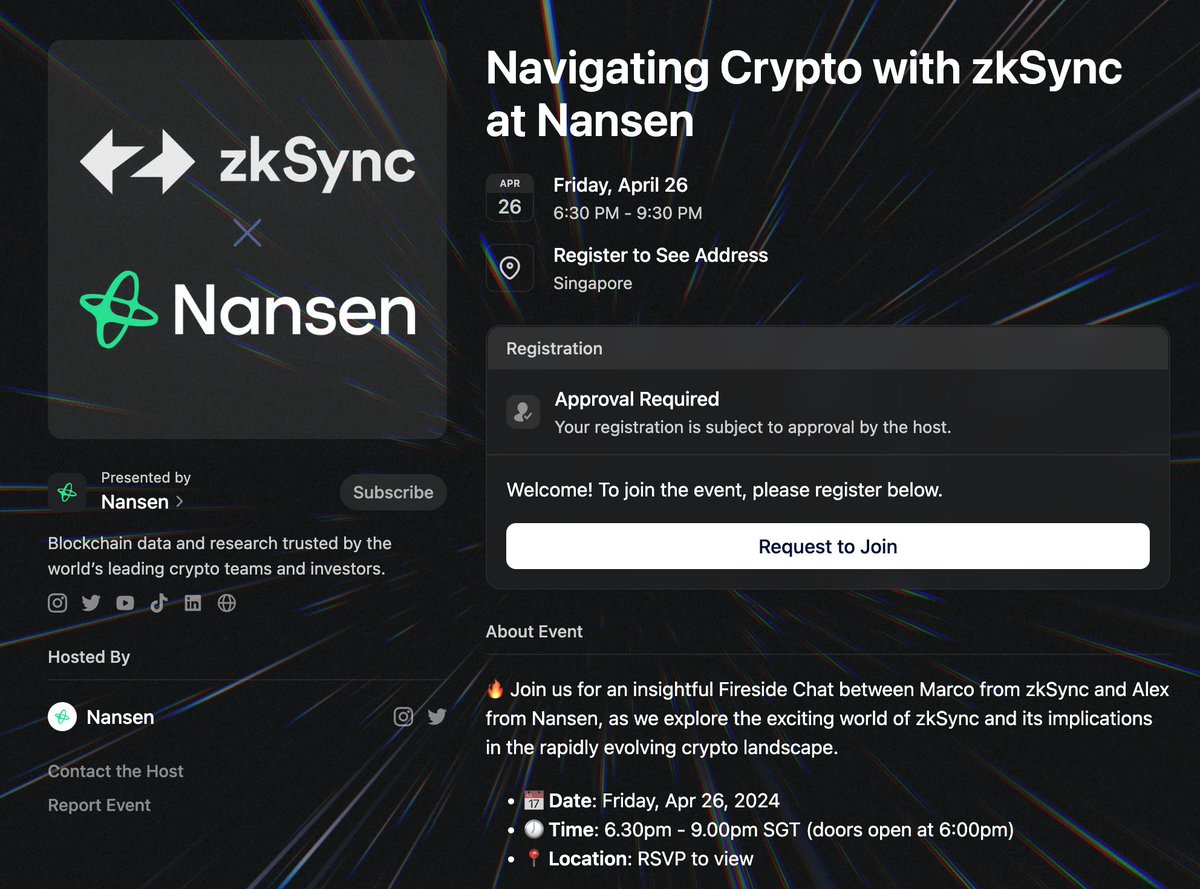

Sundays are usually when we push out our @nansen_ai bi-weekly newsletter. As dips are for buying in a bull market, we pushed it out on Friday to update our subscribers on what Smart Money is doing. This is a snippet of what was shared.