Stephen Haney

4.8K posts

@stephenhaney

founder @paper · for the love of design

California · Joined September 2010

3K Following · 13.8K Followers

Pinned Tweet

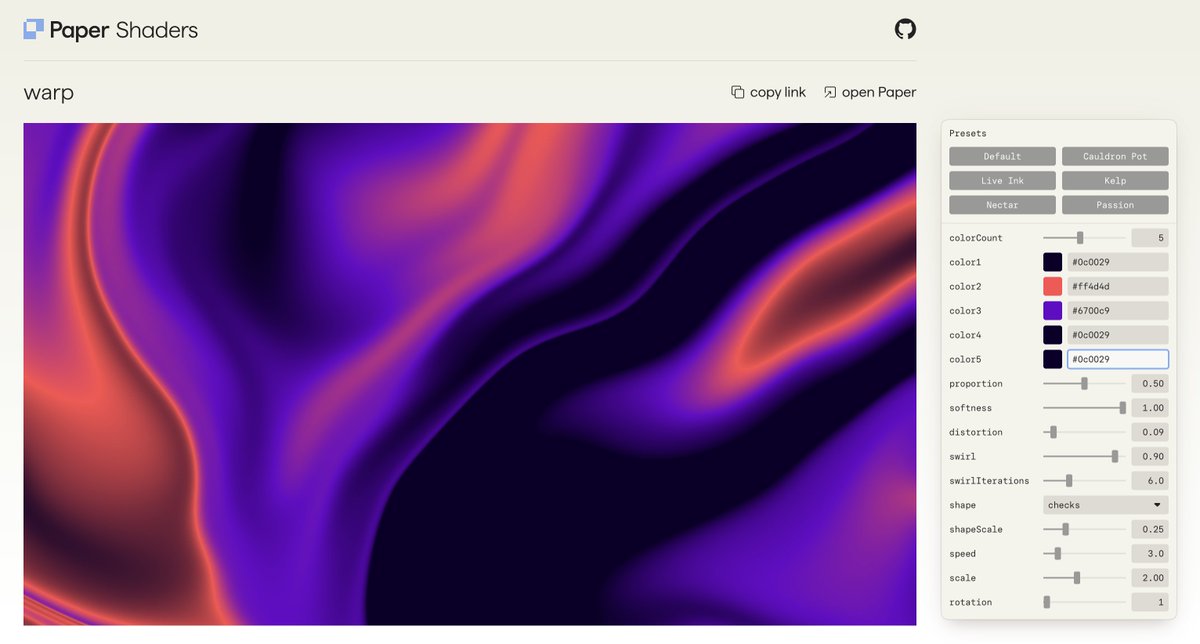

@stephenhaney @paper Aware you guys already have shaders. Not like these though.

@polyslyme @paper From Figma Make? Yes for sure. Not from the o.g. Figma canvas though (it's not html)

@uxbykai @tkkong @evilrabbit_ Yeah, Paper is like, a canvas plugin for your agent. So you can communicate visually with the agent instead of just prompting :)

@stephenhaney @tkkong @evilrabbit_ but knowing i can connect with claude is a big reason for me to try it now!

@KEMOS4BE @iirfan @heyayushh @shek_dev I think tokens will go a long way toward this, in active development. Thanks for the feedback!

@stephenhaney @iirfan @heyayushh @shek_dev its more about being able to enforce hard rules (hard problem to solve)

e.g. i have a bunch of CSS files, linters, and such, would be nice if paper MCP was able to enforce rules for agents "hey u used the wrong text color" deterministically
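A minimal sketch of the kind of deterministic check described in the reply above: flag any text color that is not in an approved set. The token list and the function name are assumptions made up for illustration; this is not Paper's MCP or any real linter's API.

```python
import re

# Hypothetical design tokens: the only text colors the design system allows.
ALLOWED_TEXT_COLORS = {"#111111", "#555555", "#ffffff"}

# Matches `color: #hex` declarations, but not `background-color`, `border-color`, etc.
COLOR_DECL = re.compile(r"(?<![-\w])color\s*:\s*(#[0-9a-fA-F]{3,8})\b")


def find_wrong_text_colors(css: str) -> list[tuple[int, str]]:
    """Return (line_number, color) for every text color outside the allowed set."""
    violations = []
    for line_no, line in enumerate(css.splitlines(), start=1):
        for match in COLOR_DECL.finditer(line):
            color = match.group(1).lower()
            if color not in ALLOWED_TEXT_COLORS:
                violations.append((line_no, color))
    return violations
```

The same input always yields the same list of violations, which is the property being asked for: the agent gets a hard pass/fail signal rather than a prompt-level suggestion.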

@uxbykai @tkkong @evilrabbit_ Oh Play the iOS dev design tool?! That is a very different thing for sure :)

Once you get Paper, make sure to connect it to Claude Code or whatever you're using:

paper.design/docs/mcp

@stephenhaney @tkkong @evilrabbit_ um ok im a total idiot... I'm so sorry I thought I downloaded paper, but I had play downloaded. i opened up play a few times and i just couldn't *get* it.

feelin' like a real ralph wiggum here. im gonna give it a real try @stephenhaney and send feedback🫡

@vanschneider Totally agree. I mean more, that's a great way to explain why there is space for this and also other tools.

@stephenhaney And to clarify, not a bad thing per se, a big part of the market runs on that type of stuff.

Honestly, I’m glad this type of design is being automated because it’s so boring there can’t possibly be any designer who enjoys doing this type of work manually.

To me it’s just Templates 2.0

Google Labs@GoogleLabs

Introducing the new @stitchbygoogle, Google’s vibe design platform that transforms natural language into high-fidelity designs in one seamless flow. 🎨Create with a smarter design agent: Describe a new business concept or app vision and see it take shape on an AI-native canvas. ⚡️ Iterate quickly: Stitch screens together into interactive prototypes and manage your brand with a portable design system. 🎤 Collaborate with voice: Use hands-free voice interactions to update layouts and explore new variations in real-time. Try it now (Age 18+ only. Currently available in English and in countries where Gemini is supported.) → stitch.withgoogle.com

@KEMOS4BE @iirfan @heyayushh @shek_dev I love this so much. let me know what kinda fixing is annoying and we will tune our instructions.

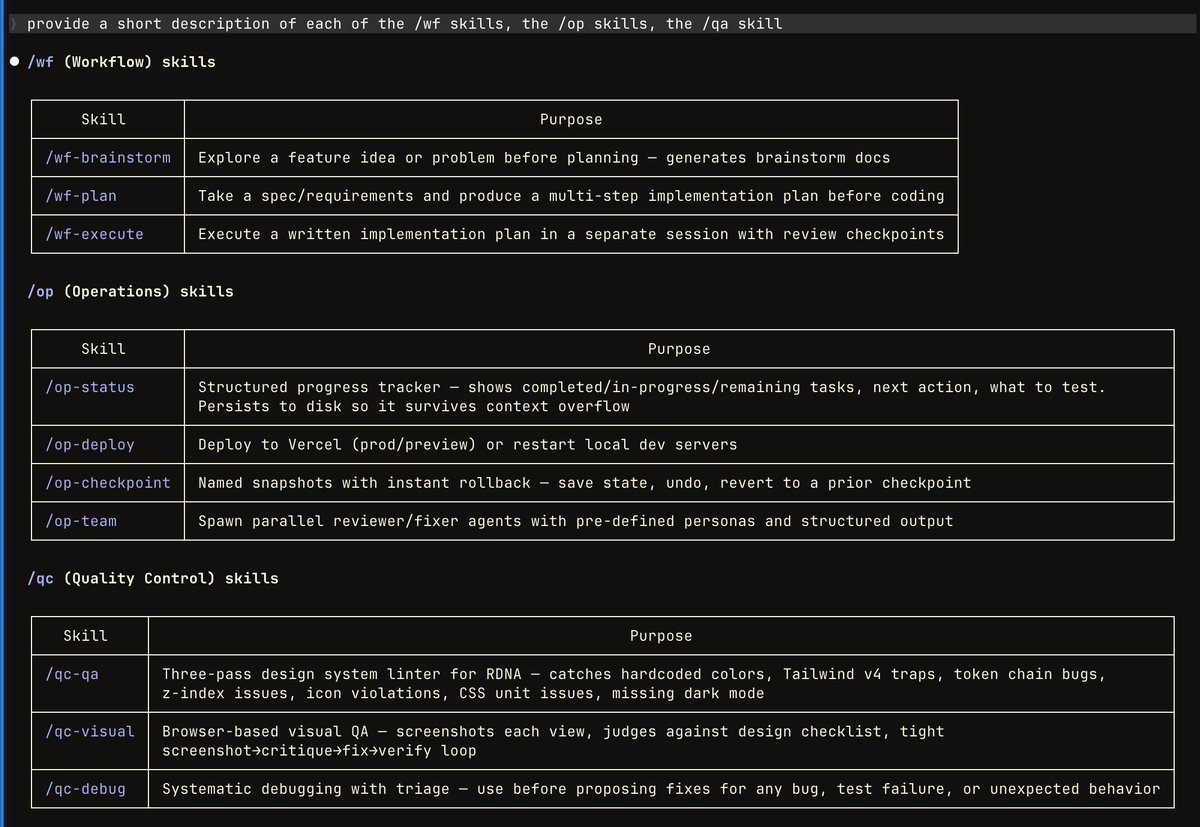

mostly to save typing and to force certain paths for highly repetitive tasks that agents like to muck about on

/wf is brainstorm interview -> plan -> execute plan

/op -> i usually use for pre-compaction shit, /op-status is very quick, keeps context fresh, /op-checkpoint is really nice when i do something crazy on main

/op-deploy is nice-to-have for quick publish

/qc-visual is insanely useful, /qc-qa is a 3 pass linter thingy that auto-fixes my design system linter based on feedback i have (bc the linter drifts from my styles w/ large design changes)

/p-design was a bunch of skills i made for @paper, all the p-skills basically are for the /voice-to-content workflow but i'm prolly gonna delete them all in favor of a non-paper workflow (platform isn't to my liking tbh, radiants can have deterministic design workflows bc limited styles -> outputs better results, paper is non-deterministic/generalized, means i have to do a lot of manual fixing)

but, the flow is:

1. I have a meeting/video/twitter space + record it

2. /voice-to-content runs local whispr voice to text on it

3. output to typefully mcp, easy way to turn long conversations into meaningful, concise, threads that aren't just a bunch of AI slop

4. p-design workflow gets the text from those threads, then generates graphics for them based on brand system
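The four steps above can be sketched as a plain function pipeline. Every stage here is a hypothetical stand-in with made-up names and placeholder outputs; the real flow calls a local speech-to-text model, the Typefully MCP, and Paper skills, none of which are modeled.

```python
# Hypothetical stand-ins for steps 2-4; each returns placeholder data only.
def transcribe(recording_path: str) -> str:
    # Step 2: voice to text (placeholder transcript).
    return f"transcript of {recording_path}"


def to_thread(transcript: str) -> list[str]:
    # Step 3: condense into a thread (placeholder: a single post).
    return [transcript]


def make_graphics(thread: list[str]) -> list[str]:
    # Step 4: one graphic name per post in the thread.
    return [f"graphic-{i}.png" for i, _ in enumerate(thread, start=1)]


def voice_to_content(recording_path: str) -> tuple[list[str], list[str]]:
    """Run steps 2-4 in order and return (thread, graphics)."""
    thread = to_thread(transcribe(recording_path))
    return thread, make_graphics(thread)
```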

@stephenhaney @WeAreDesignX @paper @stitchbygoogle @stephenhaney we'd love for you or someone from your team to come and demo! :)

180+ folks joining in on our weekly @WeAreDesignX AI friday sessions to explore @paper : luma.com/exploring-pape…

Might dig into @stitchbygoogle too if we get time towards the end!

@pr3khar @stitchbygoogle This is definitely what we’re going for!

Tried @stitchbygoogle after seeing a flood of “designers are cooked” posts, like with every new design tool drop.

Gave it an image of the Stripe Dashboard and asked it to recreate it for mobile. What it did was theoretically correct - a responsive version of the same dashboard.

Not about the visuals, it misses the main point: Desktop vs mobile use-cases aren’t the same.

A good designer would optimize for on-the-go, key actions and insights - not shrink the entire dashboard.

It can definitely get better with more prompting and context. But that’s the point, good products come from getting the context right and executing with detail.

We used to rely on @figma to perfect execution. With AI, many moved straight to code - faster, with similar control. Stitch feels like it's in a weird spot: not as free as Figma for exploration, not with the same control or precision as code.

Right now, it’s best at fast execution and better visuals compared to other similar tools, though overly skewed towards marketing pages over real products.

@paper is the only one that feels intentional so far.

@rowanopoly @alexispresa @midjourney @paper Paper has a bunch of models you can use inside the canvas, but midjourney doesn’t provide an API so we can’t use them directly. But after you paste into paper, you can use nano banana to edit, for instance.

@alexispresa @midjourney @paper love the combo but are you manually generating these images and then inputting them to paper?

can paper do this programmatically?

@natellewellyn @paper Hey Nate, Paper def has google fonts! Did you not see them in the font list?

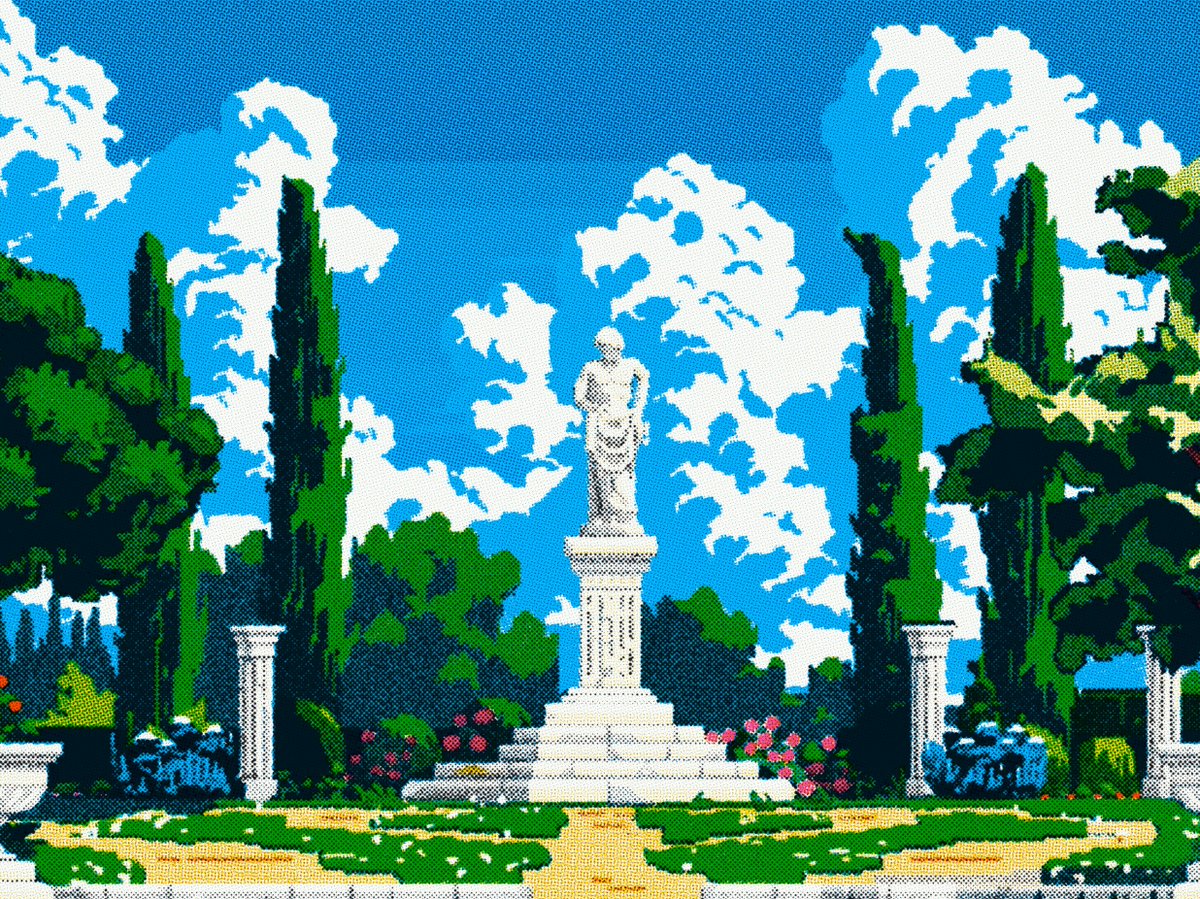

@enneracting @alexispresa @midjourney @paper Likely copy paste and use paper shaders to dial in the vibes

@alexispresa @midjourney @paper The vibe is immaculate. What's the workflow between Midjourney and Paper?

@natellewellyn @paper Agree, will be great to have variables in Paper. But I’m pretty sure they’ll add them soon

@dudufolio @cursor_ai @paper Ayyyy I missed this! Nice usage Cursor team.

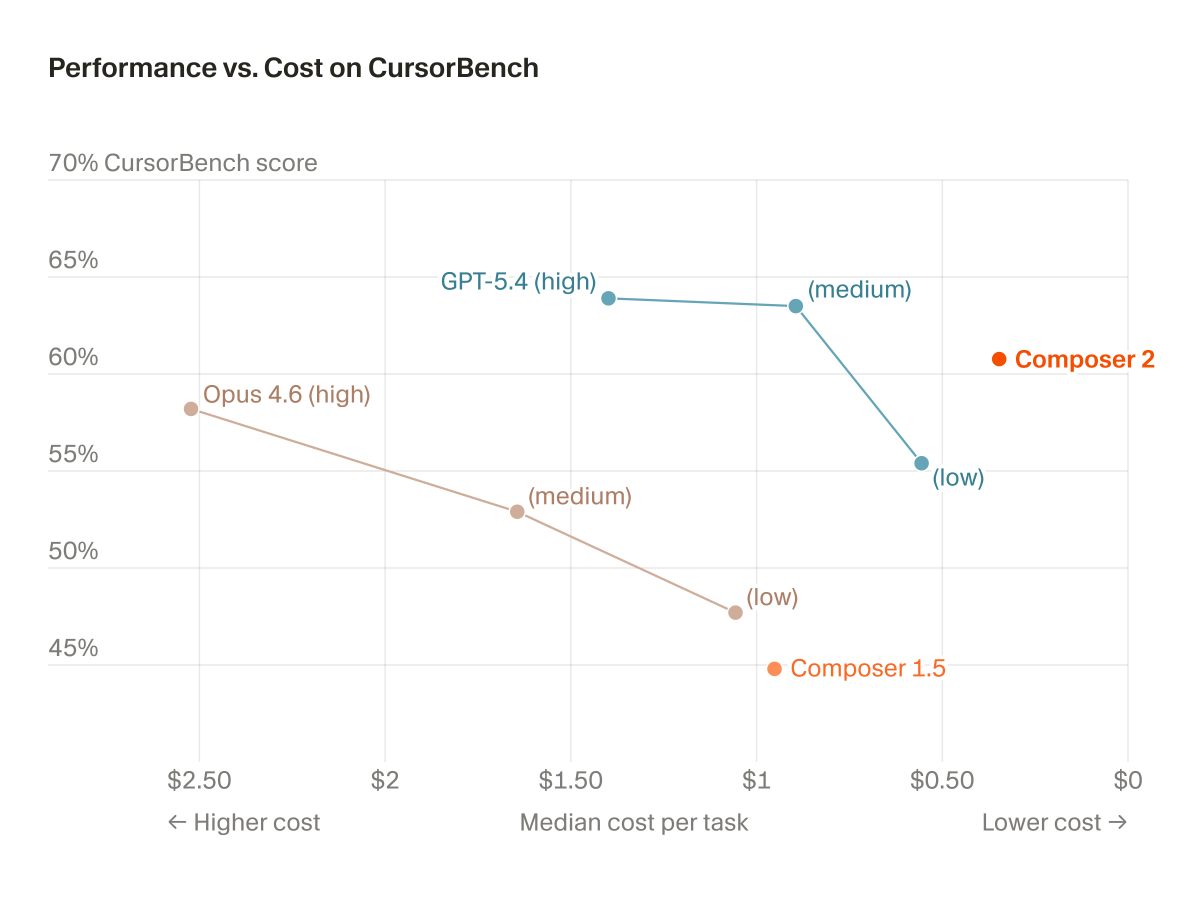

Maining Composer 2 today and so far so good
