@outsourc_e OK, you got me. I already have heavy pipelines set up with custom web-search / obsidian / liteLLM. Should I plan some extras?


mirage


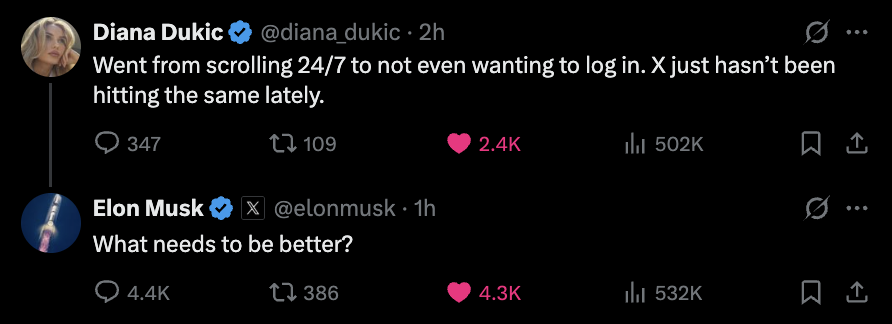

@diana_dukic What needs to be better?

Listen up, I have an idea, but I need sponsors: forget the GP Explorer, we're doing the Robot Fight Explorer. We give a robot to every YouTuber. We fit each YouTuber with sensors for an afternoon, then let the robots learn how each YouTuber fights. Then, during a tournament, we ask the robots to reproduce a fight based on that YouTuber's fighting style. I can already see how to do it, I just need about €2M. Thanks, that's it, tag Squeezie or whoever x) 🤠🤠

Why have a lobster in your computer when you can have Poseidon instead?