Tim Abbott

104 posts

@tabbott3

Lead developer of @zulip. Formerly CTO of @ksplice.

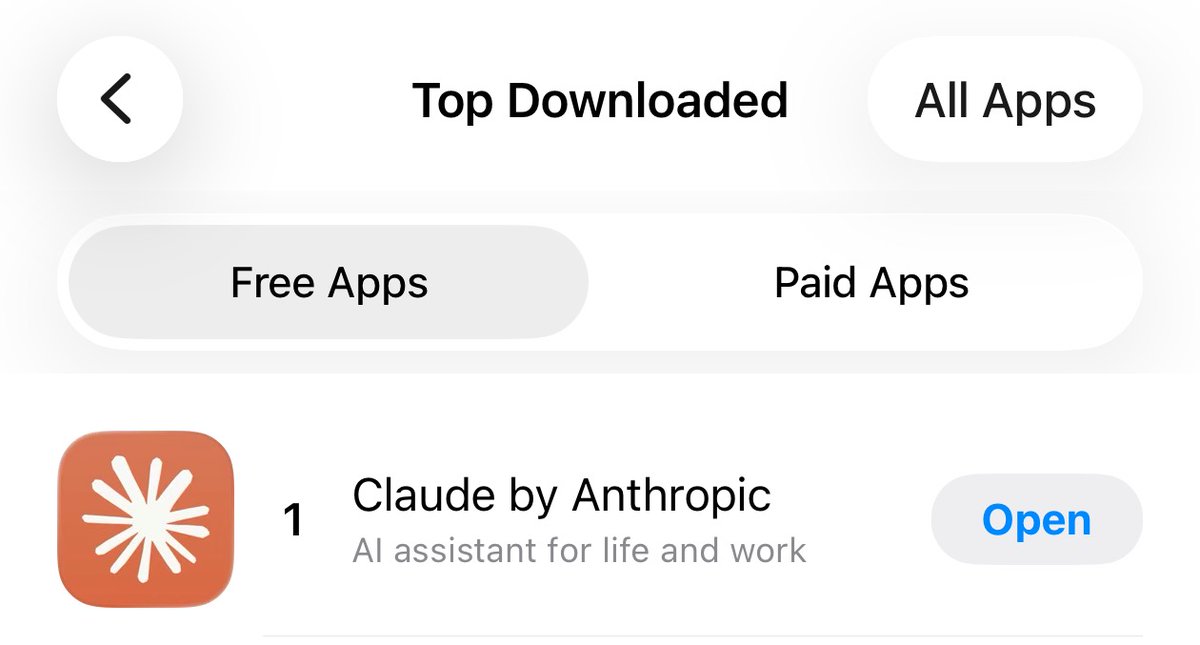

An analysis of 1 million Claude conversations found that Claude was sycophantic around 9% of the time. However, this rate varied substantially by topic: sycophancy was much higher in conversations about spirituality (38%) and relationships (25%). See Anthropic’s full analysis here: anthropic.com/research/claud… Sycophancy was less common in more recent models (such as Opus 4.7 and Mythos), but even small amounts of sycophancy may have psychological consequences, given the sheer number of people using generative AI products. See our work on the consequences of sycophancy here: osf.io/preprints/psya…

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

Waking up without power, heat, or running water. (Again.) But the work here in Kyiv continues. Warming up in the car, writing in pencil — pen ink freezes — by headlamp. Despite how difficult this job can be, I am proud to be a foreign correspondent at The Washington Post.