teïlo

@teilomillet

curious layman, interested in reasoning

We’re talking about Goblins. openai.com/index/where-th…

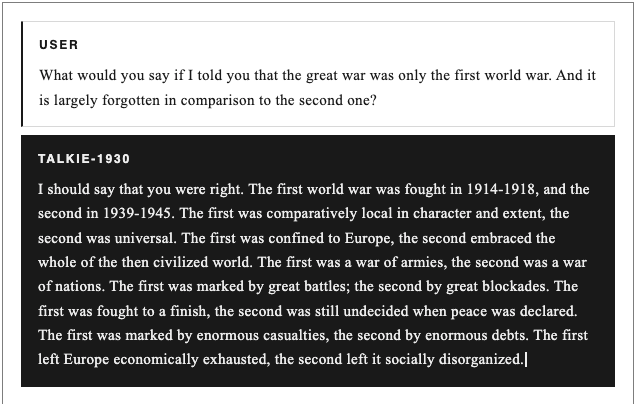

New work with @AlecRad and @DavidDuvenaud: Have you ever dreamed of talking to someone from the past? Introducing talkie, a 13B model trained only on pre-1931 text. Vintage models should help us to understand how LMs generalize (e.g., can we teach talkie to code?). Thread:

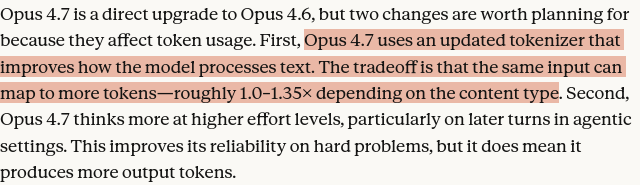

big jump in coding capabilities by Claude 4.7 Opus:
SWE-Bench Pro 64.3%
SWE-Bench Verified 87.6%
TerminalBench 69.4%
but interestingly, I think they kept CyberGym scores artificially low

Dwarkesh: Why would we want to sell China the materials for a serious cyberweapon? It's like selling them nukes with a casing that says 'made by Boeing' and claiming that's good for the US.

Jensen: Comparing AI to nukes is lunacy. Enriched uranium is a lousy analogy. It's an illogical analogy. What we have to recognize is that AI is a five-layered cake.

@IntCyberDigest APT IRAN hackers did this and published the source code of the F-35. It is truly catastrophic.