Tomás Puig

5.3K posts

@tomascooking

Founder & CEO of Alembic, recovering global CMO, angel at Test Kitchen Capital, 1st gen Cuban. I tweet in long delayed bursts as I’m usually too busy building.

The run on inference capacity is coming. You have been warned.

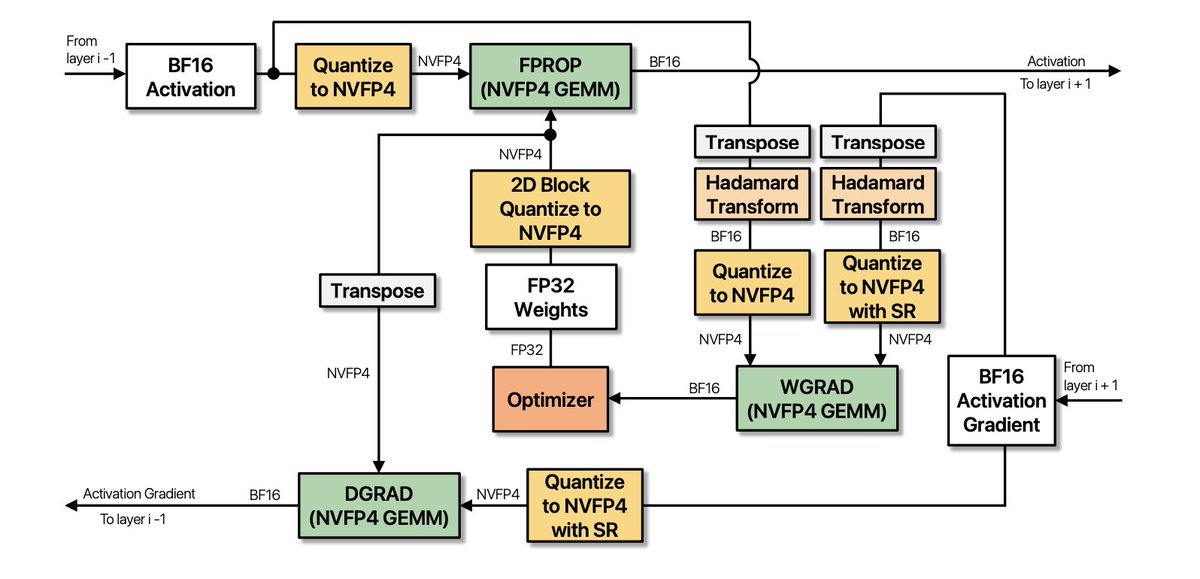

Introducing Multi-Head LatentMoE 🚀

Turns out, making NVIDIA's LatentMoE [1] multi-head further unlocks O(1), balanced, and deterministic communication.

Our insight: Head Parallel; Move routing from before all-to-all to after. Token duplication happens locally. Always uniform, always deterministic.

It works orthogonally to EP as a new dimension of parallelism. For example, use HP for intra-cluster all-to-all as a highway, then use EP locally.

We propose FlashAttention-like routing and expert computation, both exact, IO-aware, and constant memory. This is to handle the increased number of sub-tokens.

Results:
- We replicate LatentMoE and confirm it is indeed faster than MoE, with matching model performance. (See Design Principle IV in [1])
- Up to 1.61x faster training than MoE+EP with identical model performance.
- Higher model performance while still 1.11x faster with doubled granularity.

📄 Paper: arxiv.org/abs/2602.04870…
💻 Code: github.com/kerner-lab/Spa…

[1] Elango et al., "LatentMoE: Toward Optimal Accuracy per FLOP and Parameter in Mixture of Experts", 2026. arxiv.org/abs/2601.18089

I don’t know any of the details but I continue to be surprised at how hard it’s been to get B200s working. My naive model of “new gen = magically faster” has failed me.