Travis


Hey friends, as you know, I left Apple six months ago to build enterprise AI agents.

The more I looked at the space, the more obvious the gap became

Companies are about to have AI agents doing real work

Not just answering questions

→Checking systems

→Drafting emails

→Updating records

→Finding problems

→Preparing decisions

→Moving work forward

That only works if agents are managed

That is why @SomaOS is here


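On the minimax annual plan: I added a metrics layer locally, and I can clearly see empty responses during weekday daytime hours. At its worst, they make up roughly 2/3 of responses. On weekends it goes back to normal. It feels exactly like VPS overselling back in the day.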

luolei@luoleiorg

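One more bit of gossip: there's a fairly large company that also sells a Coding Plan, and its own employees have to apply to use tokens, with limited quotas 😅 Its response speed currently looks decent on some metrics, but my guess is that's mostly because the user base is still small. The question is: if compute is already a scarce resource internally, how generous can the external Coding Plan be? If they ration it even for their own people, I wouldn't dare bet on it 🤡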
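A minimal sketch of the kind of local metric described above, assuming each logged response is stored as a (timestamp, text) pair. The 09:00 to 18:00 "daytime" window, the function names, and the sample data are all hypothetical, not taken from the original post.

```python
from collections import defaultdict
from datetime import datetime


def bucket(ts: datetime) -> str:
    """Classify a timestamp as weekend, weekday daytime, or weekday night."""
    if ts.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        return "weekend"
    # Assumption: "daytime" means 09:00-18:00 local time.
    return "weekday_day" if 9 <= ts.hour < 18 else "weekday_night"


def empty_rate_by_bucket(records):
    """Return the share of empty responses per time bucket.

    records: iterable of (timestamp, response_text) pairs.
    """
    totals = defaultdict(int)
    empties = defaultdict(int)
    for ts, text in records:
        b = bucket(ts)
        totals[b] += 1
        if not text or not text.strip():
            empties[b] += 1
    return {b: empties[b] / totals[b] for b in totals}


# Made-up sample log: 2024-01-01 was a Monday, 2024-01-06 a Saturday.
sample = [
    (datetime(2024, 1, 1, 10, 0), ""),
    (datetime(2024, 1, 1, 11, 0), "   "),
    (datetime(2024, 1, 1, 14, 0), "ok"),
    (datetime(2024, 1, 6, 10, 0), "ok"),
    (datetime(2024, 1, 6, 11, 0), "ok"),
]
rates = empty_rate_by_bucket(sample)
print(rates)
```

Splitting by bucket rather than reporting one overall rate is what makes a weekday-versus-weekend pattern visible at all.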


It's just like the TODO list apps, none of them are perfect for your needs until you develop one yourself.

Travis@trawor

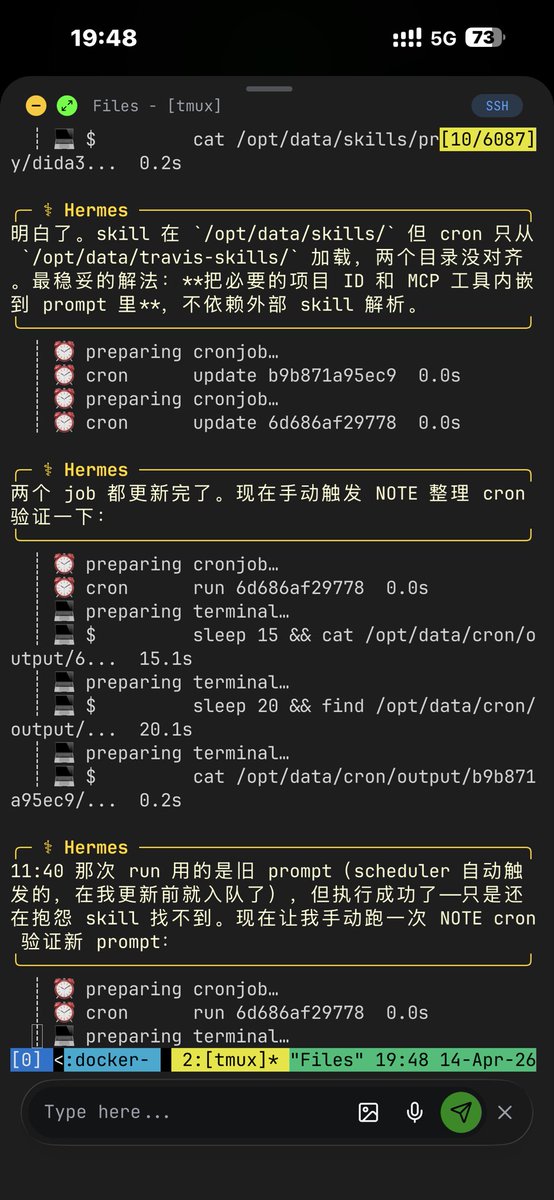

Here's a joke: I've spent days using Agents to fix my Agents.


Travis reposted

@ETDataDoc @NousResearch I deleted all my openclaws, for Hermes Agent. You should compare the performance of the same task and the same model on both.

Although I don't like Python _(ˇωˆ」∠)_ 😄


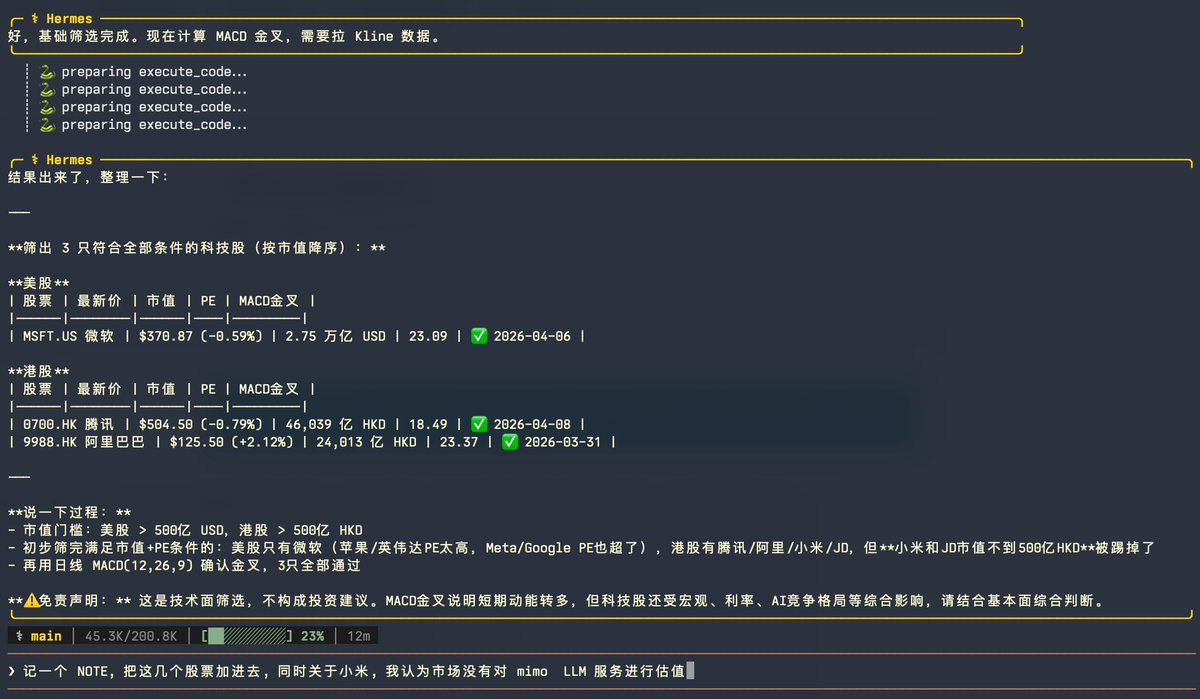

Two days ago, Anthropic cut off third-party harnesses from using Claude subscriptions — not surprising. Three days ago, MiMo launched its Token Plan — a design I spent real time on, and what I believe is a serious attempt at getting compute allocation and agent harness development right. Putting these two things together, some thoughts:

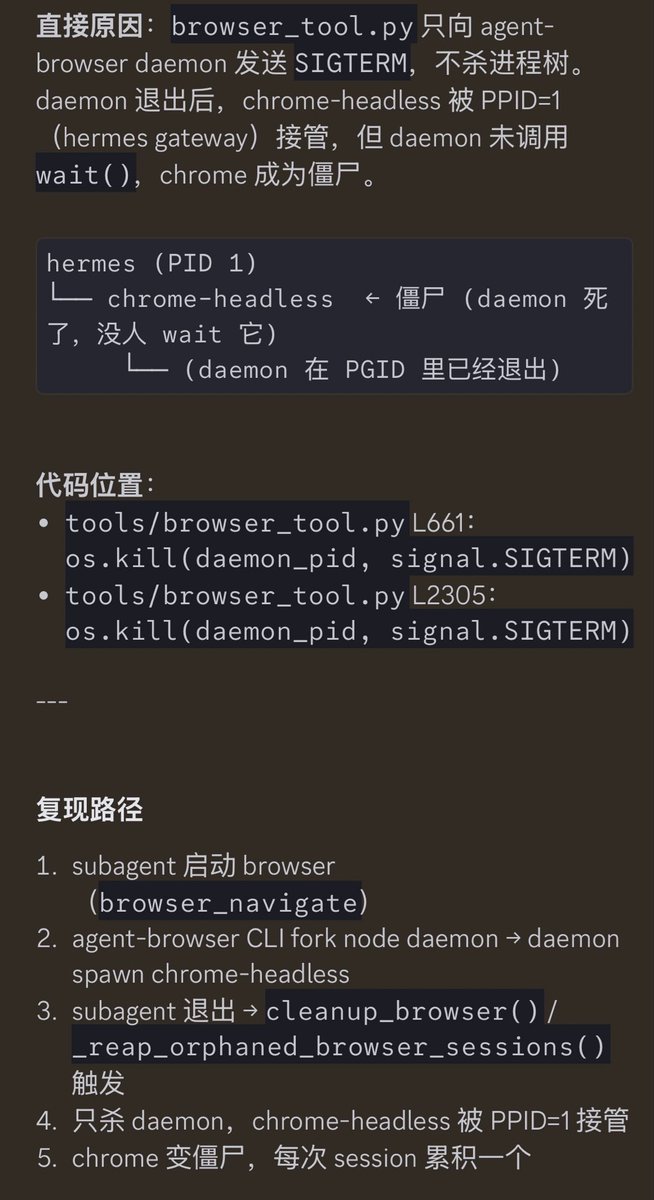

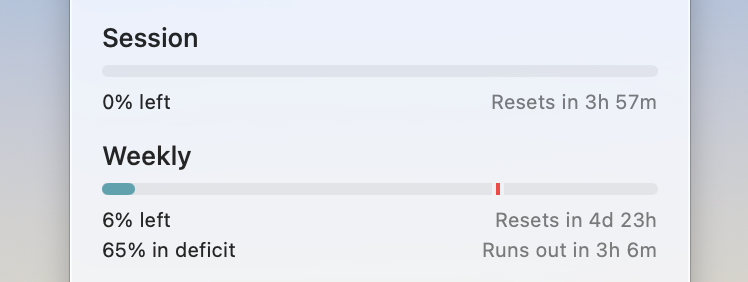

1. Claude Code's subscription is a beautifully designed system for balanced compute allocation. My guess — it doesn't make money, possibly bleeds it, unless their API margins are 10-20x, which I doubt. I can't rigorously calculate the losses from third-party harnesses plugging in, but I've looked at OpenClaw's context management up close — it's bad. Within a single user query, it fires off rounds of low-value tool calls as separate API requests, each carrying a long context window (often >100K tokens) — wasteful even with cache hits, and in extreme cases driving up cache miss rates for other queries. The actual request count per query ends up several times higher than Claude Code's own framework. Translated to API pricing, the real cost is probably tens of times the subscription price. That's not a gap — that's a crater.

2. Third-party harnesses like OpenClaw/OpenCode can still call Claude via API — they just can't ride on subscriptions anymore. Short term, these agent users will feel the pain, costs jumping easily tens of times. But that pressure is exactly what pushes these harnesses to improve context management, maximize prompt cache hit rates to reuse processed context, cut wasteful token burn. Pain eventually converts to engineering discipline.

3. I'd urge LLM companies not to blindly race to the bottom on pricing before figuring out how to price a coding plan without hemorrhaging money. Selling tokens dirt cheap while leaving the door wide open to third-party harnesses looks nice to users, but it's a trap — the same trap Anthropic just walked out of. The deeper problem: if users burn their attention on low-quality agent harnesses, highly unstable and slow inference services, and models downgraded to cut costs, only to find they still can't get anything done — that's not a healthy cycle for user experience or retention.

4. On MiMo Token Plan — it supports third-party harnesses, billed by token quota, same logic as Claude's newly launched extra usage packages. Because what we're going for is long-term stable delivery of high-quality models and services — not getting you to impulse-pay and then abandon ship.
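The cost argument in point 1 can be sketched as rough arithmetic. Every number below (prices per million tokens, request counts, context sizes, cache hit rate) is a hypothetical placeholder chosen for illustration, not any vendor's actual pricing or a measurement of any real harness.

```python
def query_cost(requests, context_tokens, output_tokens, cache_hit_rate,
               input_price, cached_price, output_price):
    """Estimate the API cost of one user query.

    Prices are per million tokens. Each request is assumed to resend the
    full context, with a fraction `cache_hit_rate` billed at the cheaper
    cached-input price.
    """
    cached = context_tokens * cache_hit_rate
    uncached = context_tokens - cached
    per_request = (
        uncached * input_price
        + cached * cached_price
        + output_tokens * output_price
    ) / 1_000_000
    return requests * per_request


# Hypothetical prices per million tokens (placeholders only).
PRICES = dict(input_price=3.0, cached_price=0.3, output_price=15.0)

# A lean harness: few requests, compact context.
lean = query_cost(requests=5, context_tokens=30_000, output_tokens=500,
                  cache_hit_rate=0.9, **PRICES)

# A chatty harness: many low-value tool calls, each carrying ~100K tokens.
chatty = query_cost(requests=20, context_tokens=100_000, output_tokens=500,
                    cache_hit_rate=0.9, **PRICES)

print(f"lean: {lean:.3f}  chatty: {chatty:.3f}  ratio: {chatty / lean:.1f}x")
```

Even with the same high cache hit rate in both cases, the chatty configuration costs roughly ten times more per query under these assumed numbers, because request count and context size multiply.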

The bigger picture: global compute capacity can't keep up with the token demand agents are creating. The real way forward isn't cheaper tokens — it's co-evolution. "More token-efficient agent harnesses" × "more powerful and efficient models." Anthropic's move, whether they intended it or not, is pushing the entire ecosystem — open source and closed source alike — in that direction. That's probably a good thing. The Agent era doesn't belong to whoever burns the most compute. It belongs to whoever uses it wisely.


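Chinese has a similarly troublesome word, “向前” (literally "forward"). When product documentation uses it to describe a time query, half the engineers read it as going toward the past and the other half as going toward the future.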

Cat Chen, @[email protected]@CatChen

"Bi-weekly" is the most insane word in English: it can mean either "once every two weeks" or "twice a week."
