Otto Gradkowski

2K posts

@ETDataDoc

Causality is the final human edge over AI

putting together a group chat for Codex power users in London / Europe. who are the biggest ballers around?

El Rio, Zeitgeist and more push back on new SF bar smoking ordinance sfgate.com/sf-culture/art…

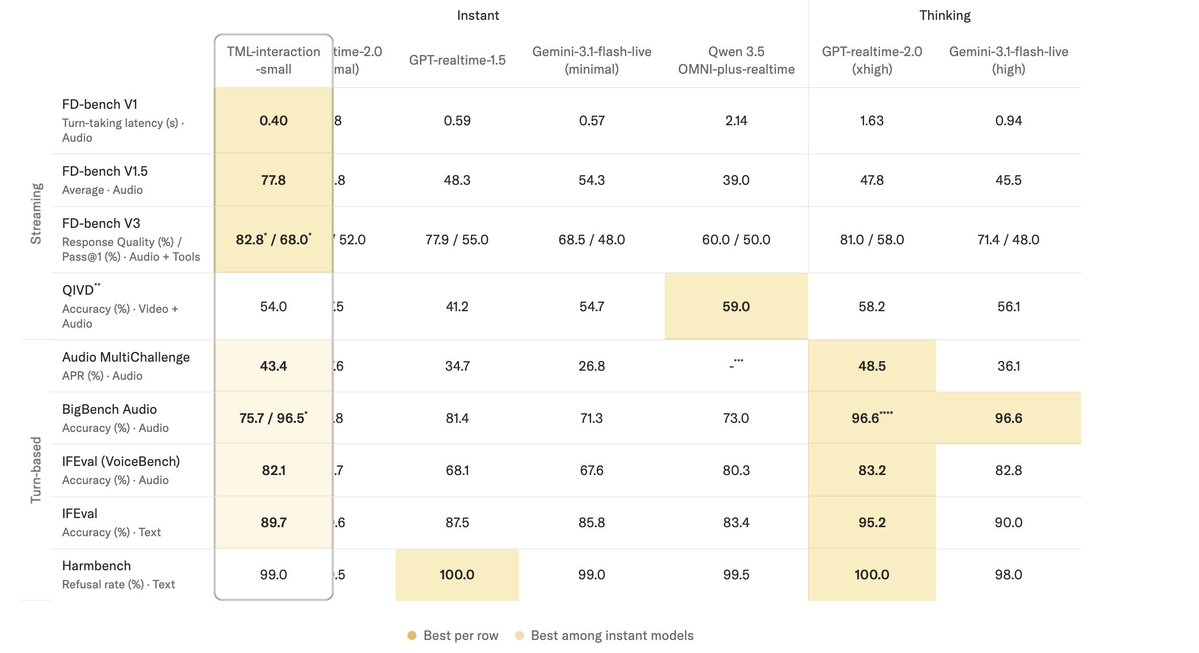

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way. We share our approach, early results, and a quick look at our model in action. thinkingmachines.ai/blog/interacti…

how did they convince people that chatgpt is emptying the oceans of water?

Hermes Agent is now #1 on the Global @OpenRouter token rankings. While our journey together has just begun, we'd like to take this opportunity to thank our contributors, supporters, and users for all they have done to get us this far.