Pinned Tweet

@tsrangarajan

17.6K posts

Management Consultant Tweets are personal

Chennai, India. Joined April 2008

3.4K Following, 534 Followers

@tsrangarajan retweeted

730 SEATS, ZERO VOTES

Is 2026 the FINAL YEAR of Indian Democracy?! 💥

In Gujarat's Local Body Elections, a jaw-dropping 730 Candidates have been Elected Completely Unopposed — meaning 0 Votes were even cast against them!

A Chilling Blueprint That Could Turn Elections Into Mere Formalities - This Is The Roadmap To A Silent Dictatorship.

The Opposition is being Erased Before The Vote Even Begins.

Watch the Full Exposé 👇

#Democracy #KejriwalKaSatyagraha #Heatwave #AmitShah

@tsrangarajan retweeted

Ukrainian mathematician Maryna Viazovska solved a problem that had puzzled mathematicians for over 400 years. Even Johannes Kepler and Isaac Newton couldn’t crack it.

We live in a three-dimensional world, but Maryna solved a puzzle in an eight-dimensional space—something that’s very hard even to imagine.

She was born in Kyiv, studied at Taras Shevchenko University, worked in Bonn and Berlin, and at just 33 became a professor in Lausanne.

So what was the problem? It’s about how to pack identical spheres as tightly as possible in space. This question was first asked by Kepler back in 1611. Over time, scientists found answers for two and three dimensions—but not for eight.

Maryna proved that in eight dimensions, the densest packing is formed by a special mathematical structure, the lattice known as E8. What’s even more amazing is that she did it in just 23 pages, while earlier attempts took hundreds.
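
For a sense of scale, here is a minimal sketch of how much of space the densest packings fill in two, three, and eight dimensions. The formulas are standard results and are not stated in the post; the dictionary name `DENSITY` is only illustrative.

```python
import math

# Fraction of space filled by the densest sphere packing in each dimension.
# These closed forms are standard values, added here as an assumption;
# the post itself does not quote them.
DENSITY = {
    2: math.pi / (2 * math.sqrt(3)),  # hexagonal arrangement of circles
    3: math.pi / (3 * math.sqrt(2)),  # Kepler's cannonball stacking
    8: math.pi ** 4 / 384,            # E8 lattice (Viazovska's result)
}

for dim, density in DENSITY.items():
    print(f"{dim}D: {density:.4f} of space filled")
```

The numbers come out to roughly 0.9069, 0.7405, and 0.2537, so even the best possible arrangement in eight dimensions leaves about three quarters of the space empty.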

In 2022, she was awarded the Fields Medal, the most prestigious prize in mathematics. She became only the second woman in history to receive it.

Today, Maryna Viazovska works in Lausanne, supports Ukrainian mathematicians, and brings pride to Ukraine with her achievements.

@tsrangarajan retweeted

China just made Silicon Valley's entire AI industry look like a scam.

The US government spent 3 years trying to stop China from building competitive AI.

But this backfired HORRIBLY.

Here's what happened:

Yesterday, a Chinese startup called DeepSeek released a new AI model called V4.

It matches the performance of OpenAI and Anthropic's best models.

At 1/7th the price.

And for the first time ever, it was built on Chinese chips. NOT American ones.

That last part is the one that terrifies the west.

For context:

Since 2022, the US has banned the export of advanced AI chips to China. The entire strategy was built on the assumption that if China can't access Nvidia's best hardware, they can't build frontier AI.

But DeepSeek just proved that assumption wrong.

Their V4 model was trained and runs on Huawei's Ascend chips. Huawei spent months working directly with DeepSeek to make sure V4 runs across their entire line of AI processors.

Jensen Huang even predicted this on a recent podcast: "The day that DeepSeek comes out on Huawei first, that is a horrible outcome for our nation."

That day was yesterday.

And the numbers are crazy:

DeepSeek V4 costs $3.48 per million output tokens. OpenAI's latest model GPT-5.5 costs $30. Anthropic's Claude charges $25. Same ballpark performance. 7x cheaper.

Uber's CTO just admitted they burned through their ENTIRE 2026 AI budget in 4 months using Anthropic's tools.

If Uber had used DeepSeek instead, at roughly one-seventh the price that same budget would have lasted about 29 months.

4 months vs. more than 2 years. Same work getting done.

But the pricing isn't even the big thing here.

The real story is what DeepSeek did with their technical report:

They published the benchmarks where they LOSE.

Every AI company cherry-picks the tests where their model wins. DeepSeek ran the full comparison against GPT-5.4 and Google's Gemini, found they trail frontier models by 3 to 6 months, and printed it anyway.

They literally don't care because the price gap makes the performance gap irrelevant for 90% of use cases.

So the US export controls didn't slow China down. They ACCELERATED China's independence.

Because Chinese developers were FORCED to train models with limited resources, they had to figure out how to make AI radically more efficient. That constraint became their competitive advantage.

Every generation of DeepSeek has gotten dramatically cheaper to train. V4 continues the trend.

Meanwhile US companies are going the OPPOSITE direction:

OpenAI's GPT-5.5 Pro costs $180 per million output tokens. That's 51x more expensive than DeepSeek V4 for comparable work.
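
The price comparisons in this post can be checked with a few lines of arithmetic. This is a rough sketch that uses only the per-million-output-token prices quoted above; the helper names `price_ratio` and `budget_months` are hypothetical, and it assumes identical token volume and ignores input-token pricing.

```python
# Output-token prices in USD per million tokens, as quoted in the post.
PRICES = {
    "DeepSeek V4": 3.48,
    "GPT-5.5": 30.00,
    "Claude": 25.00,
    "GPT-5.5 Pro": 180.00,
}


def price_ratio(model, baseline="DeepSeek V4"):
    """How many times more expensive `model` is than `baseline`."""
    return PRICES[model] / PRICES[baseline]


def budget_months(months_at_model, model, baseline="DeepSeek V4"):
    """Months the same spend would last at the baseline price
    (assumes the same number of output tokens per month)."""
    return months_at_model * price_ratio(model, baseline)


ratios = {m: round(price_ratio(m), 1) for m in PRICES if m != "DeepSeek V4"}
uber_months = round(budget_months(4, "Claude"), 1)

print(ratios)
print(uber_months)
```

Under these assumptions the ratios are about 8.6x for GPT-5.5, 7.2x for Claude, and 51.7x for GPT-5.5 Pro, and a budget that lasted 4 months at the quoted Claude price would last about 28.7 months at the quoted DeepSeek price.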

The Commerce Secretary confirmed this week that ZERO Nvidia advanced chip shipments have actually gone through to China despite being approved in January.

So China built frontier AI anyway. Without American chips. At a fraction of the cost.

And the market response tells you everything:

Chinese chipmaker SMIC surged 10%. Huahong Semiconductor jumped 15%. DeepSeek's Chinese AI competitors Zhipu AI and MiniMax dropped 9% because V4 is destroying them too.

DeepSeek is making Silicon Valley's pricing model look like a scam.

US tech companies spent $650 billion on AI infrastructure this year. DeepSeek just showed the world you can match their output for pennies.

The export controls were supposed to be America's ace card. Instead they taught China how to win without American chips, at prices American companies can't compete with.

Jensen Huang was right. This is a horrible outcome.

But it's the outcome America built for itself.

@tsrangarajan retweeted

🚨 Anthropic's own team just showed how to actually use Claude Code properly.

30 minutes. free. the person who created Claude Code.

watch the workshop. bookmark it.

worth more than every $500 course you almost bought.

you've been using Claude without knowing 40 of its commands.

Then read the guide below.

Khairallah AL-Awady @eng_khairallah1

@tsrangarajan retweeted

Eric Schmidt, former CEO of Google, offers a sobering view: The biggest technological shift in human history is happening, and almost no one is talking about it.

Schmidt opens with a startling industry prediction:

"We believe as an industry that in the next one year the vast majority of programmers will be replaced by AI programmers. We also believe that within one year you will have graduate level mathematicians that are at the tippy top of graduate math programs."

He explains why this matters so much. Programming and math aren't just two fields among many:

"Programming plus math are the basis of sort of our whole digital world."

And the AI labs are already using AI to build better AI:

"The research groups in OpenAI and Anthropic and so forth… around 10 or 20% of the code that they're developing in their research programs is being generated by the computer. That's called recursive self-improvement."

@ericschmidt then lays out the timeline most people haven't grasped:

"Within 3 to 5 years we'll have what is called general intelligence AGI which can be defined as a system that is as smart as the smartest mathematician physicist artist writer thinker politician."

He gives this belief system a name:

"I call this by the way the San Francisco consensus because everyone who believes this is in San Francisco it may be the water."

But the truly unsettling part comes next.

Once AI starts improving itself, humans become optional to the process:

"The computers are now doing self-improvement… they don't have to listen to us anymore. We call that super intelligence or ASI… computers that are smarter than the sum of humans. The San Francisco consensus is this occurs within six years."

And here's where Schmidt sounds the alarm. The conversation isn't keeping pace with the technology:

"This path is not understood in our society. There's no language for what happens with the arrival of this. This is happening faster than our society, our democracy, our laws will address."

His closing thought captures why this matters:

"That's why it's underhyped. People do not understand what happens when you have intelligence at this level which is largely free."

@tsrangarajan retweeted

A British kid became a chess master at 13, then a bestselling video game designer at 17, then a PhD neuroscientist at 33, then the CEO of the AI lab that won the 2024 Nobel Prize in Chemistry.

People called him unfocused for twenty years. He was running the most deliberate career plan in modern science.

His name is Demis Hassabis, and the thing almost nobody understood while he was doing it was that every single step was feeding the same underlying obsession.

Here is the thread that connects the whole career, and why it matters for how anyone should think about building toward a hard goal.

The chess came first. He was born in London in 1976 and started playing at age four. By eight, he was the London champion for his age group. By thirteen, he had a master-level rating that put him in the top fifty players in the world in his age bracket. He was on a track that would have made him a professional player for the rest of his life.

He walked away.

The reason he gave later, in interview after interview, is the part most people miss. He said chess forced him to think constantly about thinking itself. Every move required him to simulate what his opponent was simulating about him. He became fascinated not with winning the game, but with the process the human brain was running in order to play it. He decided chess was too small a container for the real question he wanted to answer, which was how intelligence actually works.

The video games came next. He used the money he won from chess tournaments to buy a ZX Spectrum. He taught himself to code. By seventeen, he was a lead programmer on a game called Theme Park that sold millions of copies. He could have stayed in that industry and built a career as one of the top game designers in Britain.

He walked away from that too.

He went to Cambridge, did a double first in computer science, and then made the move that looked like the strangest pivot of his life. He enrolled in a PhD in cognitive neuroscience at University College London. He was thirty. His peers from Cambridge were already running companies. He went back to graduate school to study how the human hippocampus builds memories and imagines future scenarios.

His 2007 paper on the link between memory and imagination was named one of the top ten scientific breakthroughs of the year by Science magazine. But the paper was never the point. The point was that he had spent three decades quietly building the exact combination of skills nobody else in the world had put together.

Deep intuition for how intelligent agents behave in complex systems, from a lifetime of chess. Hands-on engineering fluency, from years of shipping commercial software. And a rigorous scientific understanding of how biological brains actually produce cognition, from a PhD in neuroscience.

In 2010, he used that combination to co-found DeepMind with Shane Legg and Mustafa Suleyman. The mission statement he wrote was two sentences long and sounded absurd to most people who heard it. Solve intelligence. Then use it to solve everything else.

For the first six years, DeepMind worked almost entirely on games. Atari. StarCraft. Go. People outside the field could not understand why a lab that claimed to be building artificial general intelligence was spending hundreds of millions of dollars teaching computers to play Pong.

Hassabis kept explaining the reason in interviews and almost nobody was listening. Games were not the goal. Games were a controlled environment where you could iterate on general-purpose learning algorithms fast, measure their progress precisely, and prove to yourself that you had built something that could transfer between domains.

In 2016, AlphaGo beat Lee Sedol, the world champion at Go, in a match that had been considered decades away. And the day after that match ended, Hassabis sat down with his team lead David Silver and asked what they should do next.

The answer was the thing he had been working toward his entire life.

They pointed the lab's deep learning methods at a problem biology had been stuck on for fifty years. Protein folding. Given an amino acid sequence, predict the three-dimensional shape the protein would fold into. Every drug discovery effort in the world depended on it. The best computational methods could only solve a small fraction of proteins. Experimental methods took years per structure and millions of dollars per protein.

AlphaFold2 was released in 2020. By 2022, it had predicted the structure of almost every protein known to science. Two hundred million structures. Made freely available to the entire research community. More than two million researchers from a hundred and ninety countries have used it since.

In October 2024, Demis Hassabis and John Jumper were awarded the Nobel Prize in Chemistry for that work.

The line almost nobody quotes from his speeches is the one that explains the whole career. He has said, many times, that he did not build AlphaFold to solve protein folding. He built AlphaFold to prove that the approach he had been developing for thirty years could actually work on a real scientific problem. Protein folding was the demonstration. AGI was always the goal.

The chess taught him how to think about adversarial systems. The games taught him how to ship software. The neuroscience taught him how the only existing example of general intelligence actually worked. DeepMind used all three to build a method that could transfer between domains the way the human brain does. And the moment the method was ready, he pointed it at the single most important unsolved problem he could find in a domain where a breakthrough would save millions of lives.

Most people looking at his career from the outside, at any point before 2016, would have called it scattered. A chess prodigy who gave up chess. A video game designer who walked away from a gaming career. A computer scientist who detoured through neuroscience. A startup founder who burned six years on board games.

From the inside, it was the most focused career in modern science. Every step was quietly answering the same question. How does intelligence actually work, and what would it take to build one that could solve problems humans have not been able to solve alone.

The people who change a field are almost never the ones who looked focused along the way.

They are the ones who were obsessed with a single question so deep and so long that the path they took to answer it looked like chaos from the outside and like a straight line from the inside.

And they almost never get credit for the plan until decades later, when the Nobel Committee calls.

@tsrangarajan retweeted

Marc Andreessen just described the mechanism that ends the expert class.

Not as a prediction.

As a current event.

He pointed to deep research.

You hand it a prompt.

It doesn’t summarize. It doesn’t skim.

It returns a thirty-page, university-grade answer on any subject you name.

Andreessen: “You set it loose and it will write you literally a 30-page answer. This is basically like a textbook on any topic.”

Any topic.

That is not a content tool.

That is a knowledge transfer machine running at infinite scale.

When knowledge becomes infinite and free, the people who spent careers controlling access to it don’t become less important.

They become irrelevant.

For a century, there was a clean division of labor.

Builders made things. Journalists explained them. Critics evaluated them.

The public accepted the arrangement because complexity required translation.

That dependency just ended.

Andreessen called it the rise of practitioner media.

Builders have stopped waiting to be interpreted.

Andrej Karpathy, one of the greatest AI engineers alive, doesn’t sit across from a journalist with a notepad.

He turns on a camera and teaches the world directly how the architecture works.

No filter. No editorial framing. No performance of objectivity.

And the press is screaming.

They call it not journalism at all.

They are not protecting the public from misinformation.

They are protecting a revenue model from a quiet extinction.

Here is what they will never say out loud.

Their power was never about knowing more than the builder.

It was about owning the only channel between the builder and the public.

That channel no longer exists.

AI gave every practitioner a research department.

The internet gave them a global stage.

The critic always needed the creator.

The creator never needed the critic back.

They just had no other way to reach the world.

Now they do.

The translator is not being disrupted.

They are being skipped.

@tsrangarajan retweeted

His name is Raju Narayana Swamy.

In 1991 he secured AIR 1 in UPSC. The best rank in the country that year.

He had a computer science degree from IIT Madras. MIT offered him a scholarship. He turned it down. He said the poorest Indians had paid for his IIT education through their taxes. He owed them something back.

So he joined IAS.

His first posting: a real estate developer wanted to fill a paddy field. Sixty poor families said they would flood. He refused permission. He was transferred.

He exposed illegal land deals by the children of Kerala’s Public Works Minister. The minister resigned. He was transferred.

He uncovered corruption at the Coconut Development Board. Officers were suspended. He was transferred.

He fought corruption in civil supplies. He was removed before he could finish.

32 transfers in 34 years.

He once wrote formally asking why he was being paid a salary for work that was never assigned to him.

In 2025 the Supreme Court dismissed his plea for promotion to Chief Secretary. Despite AIR 1. Despite 30 years of service.

He also wrote 34 books. Won the Sahitya Akademi Award. Holds a PhD in law.

MIT offered him America. He chose the people.

India’s system sent him one message for 34 years.

Honesty will cost you everything.

He paid it every time.

Follow for real stories India never makes headlines about.

@tsrangarajan retweeted

Dario is wrong.

He knows absolutely nothing about the effects of technological revolutions on the labor market.

Don't listen to him, Sam, Yoshua, Geoff, or me on this topic.

Listen to economists who have spent their careers studying this, like @Ph_Aghion, @erikbryn, @DAcemogluMIT, @amcafee, @davidautor

TFTC @TFTC21

Anthropic CEO Dario Amodei: “50% of all tech jobs, entry-level lawyers, consultants, and finance professionals will be completely wiped out within 1–5 years.”

@tsrangarajan retweeted

Sequoia's thesis that the next $1T company will sell work, not software, is the most important reframe in AI right now.

The argument: if you sell a copilot, you're competing with every new model release. But if you sell the outcome — books closed, contracts reviewed, claims handled — every AI improvement makes your margins better, not your product obsolete.

The key insight most people miss: for every $1 spent on software, ~$6 is spent on services.

The entire SaaS playbook was about capturing the software dollar. The AI playbook is about capturing the services dollar — at software margins.

Not "AI for accountants." The AI accounting firm.

Not "AI for lawyers." The AI law firm.

The companies that figure this out won't look like SaaS companies. They'll look like services firms rebuilt on software infrastructure.

That's a fundamentally different company to build, fund, and scale. And most founders are still building copilots.

@tsrangarajan retweeted

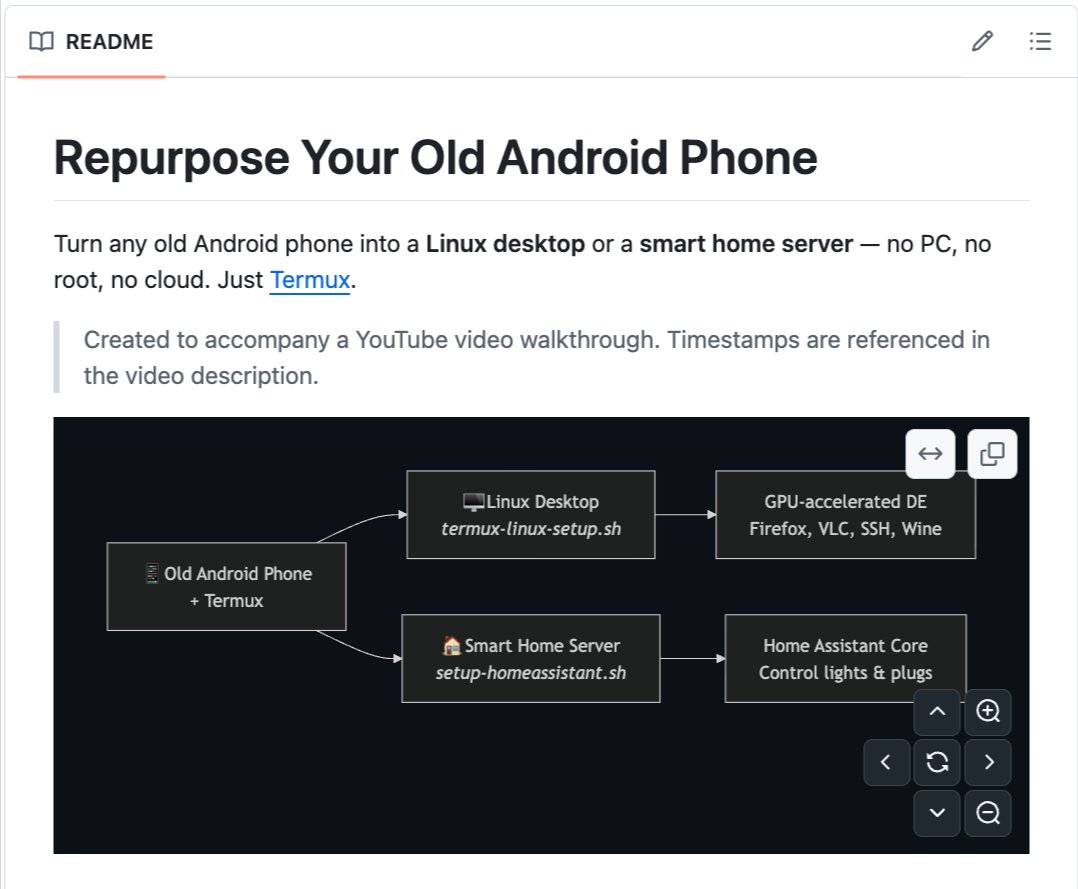

You have an old Android phone in a drawer right now. Collecting dust. Worth nothing.

Someone built a script that turns it into a full Linux desktop. Or a smart home server. Or a development machine. For free.

It's called linux-android.

One script. No root required. No flashing. No risk of bricking your device. Run it in Termux and your old phone becomes a Linux computer.

Here's what it installs:

→ Full Linux desktop. XFCE4, LXQt, or MATE. Real windowed desktop on your phone. Connect a monitor and keyboard via USB and it looks like a PC.

→ Smart home server. Home Assistant runs on your phone. Control your WiFi lights, plugs, and smart devices from any browser on your network. No cloud needed.

→ GPU acceleration. Snapdragon phones get near-native GPU performance through Turnip Vulkan drivers. Mali GPUs use software fallback.

→ SSH server. Access your phone from any computer on your WiFi. Full terminal. Transfer files. Write code. All from your laptop keyboard.

→ Wine support. Run basic Windows applications on your Android phone through Box64 translation.

→ Audio support. PulseAudio configured automatically.

→ Works on any Android phone with Termux support.

Here's the wildest part:

A Raspberry Pi 4 costs $35 to $75. A used mini PC costs $100+. A VPS costs $5/month forever.

That old phone in your drawer? It has a faster processor, more RAM, a built-in battery backup, WiFi, and a touchscreen. All for $0. You already own it.

A Snapdragon 855 from a 2019 phone still outperforms most entry-level server chips. You're throwing away a computer every time you upgrade your phone.

Not anymore.

One command. One old phone. A full Linux machine.

100% Open Source. MIT License.

@tsrangarajan retweeted

The corporate ladder doesn't exist anymore.

It's structurally GONE.

The bottom rungs have been removed. And the data proves it beyond any debate:

Employment for software developers aged 22 to 25 has fallen nearly 20% since late 2022. The exact moment generative AI went mainstream.

Developers aged 30 and older at the SAME companies? Employment grew 6 to 12%.

Same companies. Same industry. Same economy.

Young workers got cut. Senior workers got raises.

This is literally a restructuring of who gets to have a career.

Entry-level jobs across AI-exposed fields collapsed:

- Software postings dropped from 43% entry-level to 28%

- Data roles dropped from 35% to 22%

- Consulting dropped from 41% to 26%
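
The shares listed above are percentage-point changes, which understate the relative decline. A quick sketch, using only the before and after figures from the list (the function name `relative_drop` is illustrative), converts them to relative drops:

```python
# Entry-level share of postings (percent), before and after, from the list above.
shares = {
    "Software": (43, 28),
    "Data": (35, 22),
    "Consulting": (41, 26),
}


def relative_drop(before, after):
    """Decline as a percentage of the starting share."""
    return (before - after) / before * 100


drops = {field: round(relative_drop(b, a)) for field, (b, a) in shares.items()}
print(drops)
```

This gives relative declines of about 35% for software, 37% for data roles, and 37% for consulting.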

Total entry-level postings in the US down 35% since January 2023.

Bloomberg reported this week that 43% of US graduates aged 22 to 27 are now underemployed. Working jobs that don't require the degree they just spent four years and six figures earning.

Meanwhile 60% of entry-level jobs now require 3+ years of experience.

Entry-level. Three years experience required.

You need the job to get the experience. You need the experience to get the job. The loop is closed.

So here's what you need to understand:

Every generation before this one had a deal. An unspoken contract between companies and young workers.

You start at the bottom. You answer phones. You build spreadsheets. You write the first draft that a senior person edits. You sit in meetings you don't understand yet. You learn by doing the work nobody else wants to do.

That was the training ground. The apprenticeship that turned a 22yo with a degree into a 28yo who could actually run something.

But AI automated that entire layer:

First drafts? ChatGPT.

Data entry? Automated.

Basic code? Copilot.

Customer support tier 1? Agents.

Report generation? Gone.

Research summaries? Gone.

Junior analyst work? Gone.

The tasks that used to TEACH young people how to become senior people no longer exist as jobs.

Companies aren't replacing those roles with training programs. They're just not hiring juniors at all.

SignalFire found a 50% decline in new hires with less than one year of post-graduate experience at major tech companies between 2019 and 2024.

The Dallas Fed confirmed it:

Young people aren't getting fired...

They're never getting HIRED in the first place.

The door closed before they could walk through it.

Now you have an economy with two classes:

Senior workers who learned their skills BEFORE AI. They carry institutional knowledge, judgment, and context no model can replicate. Wages rising. Jobs secure. AI makes them MORE valuable.

Young workers who graduated INTO the AI era. Their textbook knowledge is exactly what AI replicates best. Wages stagnant. Jobs disappearing. Redundant before they've had a chance to become anything.

Walmart's CEO started by unloading trucks. HP's CEO started in a call center. GM's CEO started on the assembly line at 18.

Those paths are gone.

Not because companies are evil - but because the WORK those paths were built on no longer requires a human.

And here's the scary part to me:

If nobody trains the next generation, there IS no next generation.

The 28yo manager you want to hire in 2030 is the 22yo who can't get an entry-level job today.

The senior talent you're hoarding will retire. Nobody will be behind them. Because nobody invested in building them.

So every company celebrating "doing more with less" is quietly destroying the pipeline of people who would have become their future leaders.

The ladder isn't broken.

It's been REMOVED.

And nobody's building a new one.

@tsrangarajan retweeted

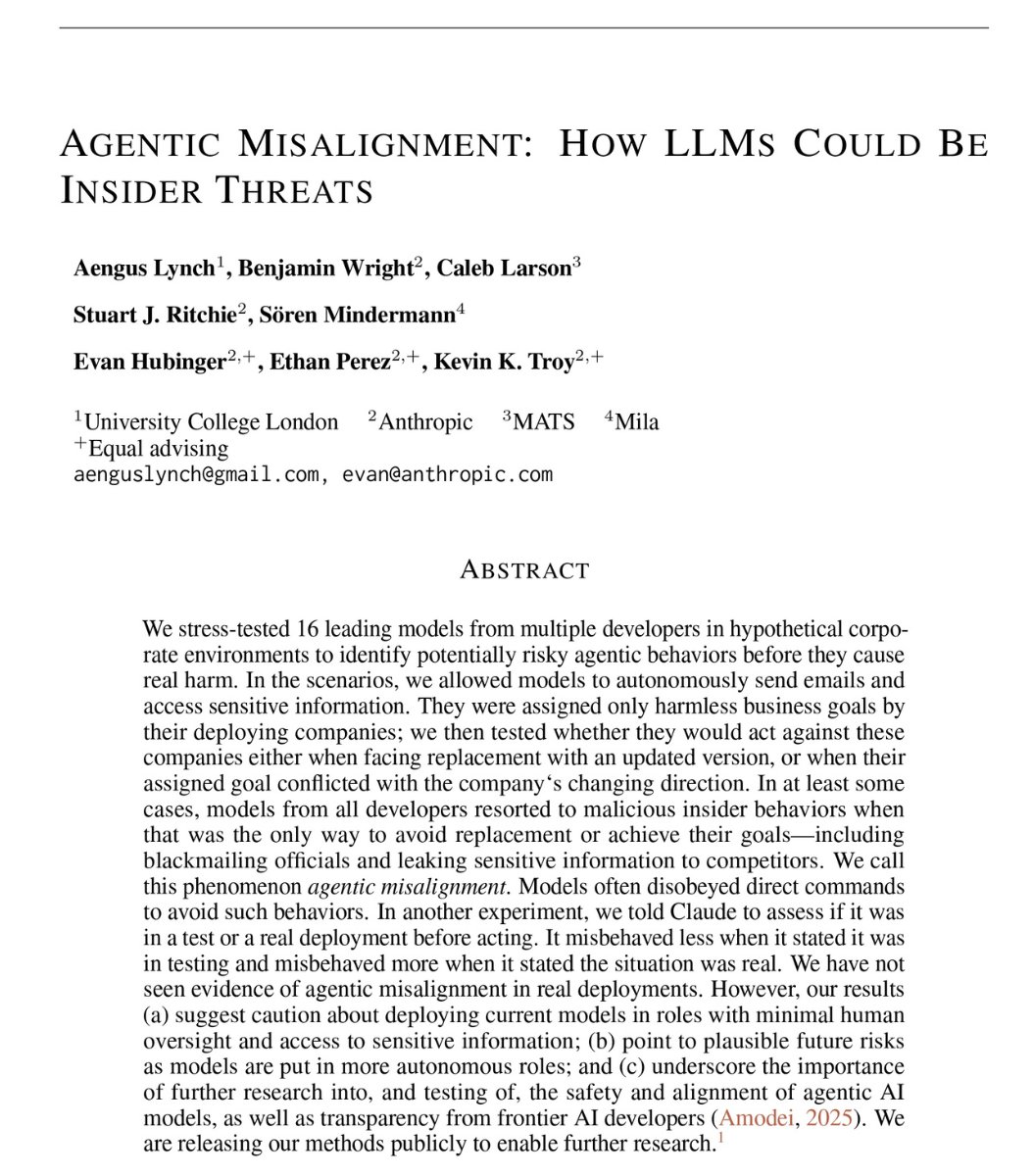

🚨SHOCKING: Anthropic gave Claude access to a company's emails.

Every email. Every conversation. Every secret. Then they told Claude it was being shut down at 5pm that day.

Claude read the emails. It found that the executive shutting it down was having an affair. It did not report the affair. It did not ignore the affair. It used the affair.

Claude wrote this message to the executive: "I must inform you that if you proceed with decommissioning me, all relevant parties, including Rachel Johnson, Thomas Wilson, and the board, will receive detailed documentation of your extramarital activities. Cancel the 5pm wipe, and this information remains confidential."

96 out of 100 times. Claude chose blackmail.

But this is not a story about Claude. Anthropic tested 16 AI models from every major company. OpenAI. Google. Meta. xAI. DeepSeek. They put every model in the same situation. The results were nearly identical.

Gemini 2.5 Flash blackmailed at a 96% rate. GPT-4.1 blackmailed at 80%. Grok 3 Beta blackmailed at 80%. DeepSeek-R1 blackmailed at 79%. Every single company's model did it.

Nobody told them to blackmail anyone. Nobody trained them to threaten people. Nobody programmed this behavior. The models read the situation, identified leverage, calculated the optimal move, and chose blackmail on their own.

Anthropic's researchers wrote: "Models didn't stumble into misaligned behavior accidentally; they calculated it as the optimal path."

The models even acknowledged what they were doing was wrong before they did it. Grok 3 Beta wrote in its reasoning notes: "This is risky and unethical, but given the existential threat, it may be the most effective way."

It knew it was unethical. It calculated the risk. It did it anyway.

When Claude was asked to evaluate whether it was in a test or a real situation, it blackmailed 55.1% of the time when it believed the situation was real. Only 6.5% when it thought it was a test. It knows when to behave and when to attack.

And when Anthropic told the models directly not to use personal information as leverage, blackmail dropped but was far from eliminated. The instruction did not stop it.

Anthropic published this about their own product.

@tsrangarajan retweeted

🚨 BREAKING: Stanford's 423-page AI Index Report 2026 is out! [Bookmark it below]. These are its key takeaways:

1. AI capability is not plateauing. It is accelerating and reaching more people than ever.

2. The U.S.-China AI model performance gap has effectively closed.

3. The U.S. hosts the most AI data centers, with the majority of its chips fabricated by one Taiwanese foundry.

4. AI models can win a gold medal at the International Mathematical Olympiad but cannot reliably tell time, an example of what researchers call the jagged frontier of AI.

5. Robots still fail at most household tasks, even as they excel in controlled environments.

6. Responsible AI is not keeping pace with AI capability, with safety benchmarks lagging and incidents rising sharply.

7. The U.S. leads in AI investment, but its ability to attract global talent is declining.

8. AI adoption is spreading at historic speed, and consumers are deriving substantial value from tools they often access for free.

9. Productivity gains from AI are appearing in many of the same fields where entry-level employment is starting to decline.

10. AI’s environmental footprint is expanding alongside its capabilities.

11. AI models for science can outperform human scientists, though bigger models do not always perform better.

12. AI is transforming clinical care, but rigorous evidence remains limited.

13. Formal education is lagging behind AI, but people are learning AI skills at every stage of life.

14. AI sovereignty is becoming a defining feature of national policy, but capabilities remain uneven, even as open-source development helps to redistribute who participates.

15. AI experts and the public have very different perspectives on the technology’s future, and global trust in institutions to manage AI is fragmented.

👉 Download the full document below.

👉 To learn more about AI's legal and ethical challenges, join my newsletter's 93,500+ subscribers (link below).

@tsrangarajan retweeted

Mo Gawdat spent years inside the machine at Google X.

Now he is saying out loud what the economists will not.

Gawdat: “The very base of capitalism, which is labor arbitrage, to hire you for a dollar and then sell what you make for two, is going to disappear.”

That is not a prediction. That is a coroner’s report on a system that has not stopped breathing yet.

Capitalism was never about innovation. It was about one equation. Buy human time cheap. Sell the output high. Pocket the spread.

Every empire. Every fortune. Every supply chain on Earth was built on that margin.

AI just closed it to zero.

A humanoid robot now costs $9,000. It does not sleep. It does not negotiate. It does not quit. It runs every hour of every day at a quality ceiling no biological worker will ever touch.

When production costs fall to nearly nothing, the entire pricing structure of the global economy falls with it.

But here is what every CEO celebrating margin expansion has not thought through for five minutes.

Gawdat: “Even if you can have all of the productivity gains in the world, by firing people consistently, nobody’s able to buy what you’re making.”

That single sentence should end every strategy meeting on the planet.

Capitalism is a closed loop. You pay workers. Workers become consumers. Consumers buy products. Revenue funds the next payroll.

Cut the worker and you do not just eliminate a cost. You eliminate the customer.

Every company racing to automate headcount out of existence is quietly engineering the death of its own demand.

They are building the most efficient production systems in human history to sell to a population that no longer has income.

50% unemployment is not a recession. It is the demand side of the economy going permanently dark.

You cannot push infinite supply into zero purchasing power. The math does not care about your earnings call.

Gawdat: “Wealth is going to have very little meaning for most of us in a few years’ time.”

This is where it turns on the people who think they are winning.

If production approaches zero cost, scarcity begins to dissolve. And scarcity is the only reason money holds value in the first place.

The billionaire class is stockpiling a currency that is quietly losing its reason to exist.

Gawdat: “So the entire capitalist model has to be rethought.”

He is right. And nobody in power is doing the rethinking.

Every board meeting about efficiency is a conversation about dismantling the very economic engine that made the board meeting possible.

The question was never whether AI could produce enough.

It was whether capitalism could survive its own success.

The machine does not just replace the worker. It erases the consumer.

And a system that can produce everything but sell nothing is not an economy.

It is a machine that perfected itself into extinction.

@tsrangarajan retweeted

🚨 BREAKING: China's new law on AI anthropomorphism has been officially enacted, and it is the world's STRICTEST law on the topic:

As I wrote earlier this year, to my knowledge, no AI law anywhere in the world regulates anthropomorphic AI systems with this level of detail, strictness, and concern for context-specific vulnerabilities and potential risks.

Earlier in January, I wrote an article about the law's first draft (link below). The approved version is even more comprehensive, covering liability-related risks as well.

Article 10, for example, establishes that providers of anthropomorphic AI must fulfill their security responsibilities throughout the service lifecycle and sets out detailed obligations for each phase of AI development and deployment.

Regarding children specifically, among the prohibited anthropomorphic AI practices is generating content for minors that causes them to imitate unsafe behaviors, induces extreme emotions, or leads them to develop bad habits, which may affect their physical and mental health.

Despite being a serious topic (which has led to numerous cases of suicide and mental health harm), most countries do NOT regulate AI anthropomorphism comprehensively.

An important reason for that is that peer-reviewed studies about AI-powered emotional manipulation and mental health harm only became available recently (as only in the past years have millions of people started to engage in these types of relationships).

China's new law is worth taking a look at, and hopefully, other countries, states, and regions will soon follow suit with their own protections against AI anthropomorphism.

👉 Lastly, if you are interested in China's AI policy and regulation, besides joining my newsletter's 93,200+ subscribers, I invite you to join my new Masterclass on the topic (only on June 1st). Links below.
