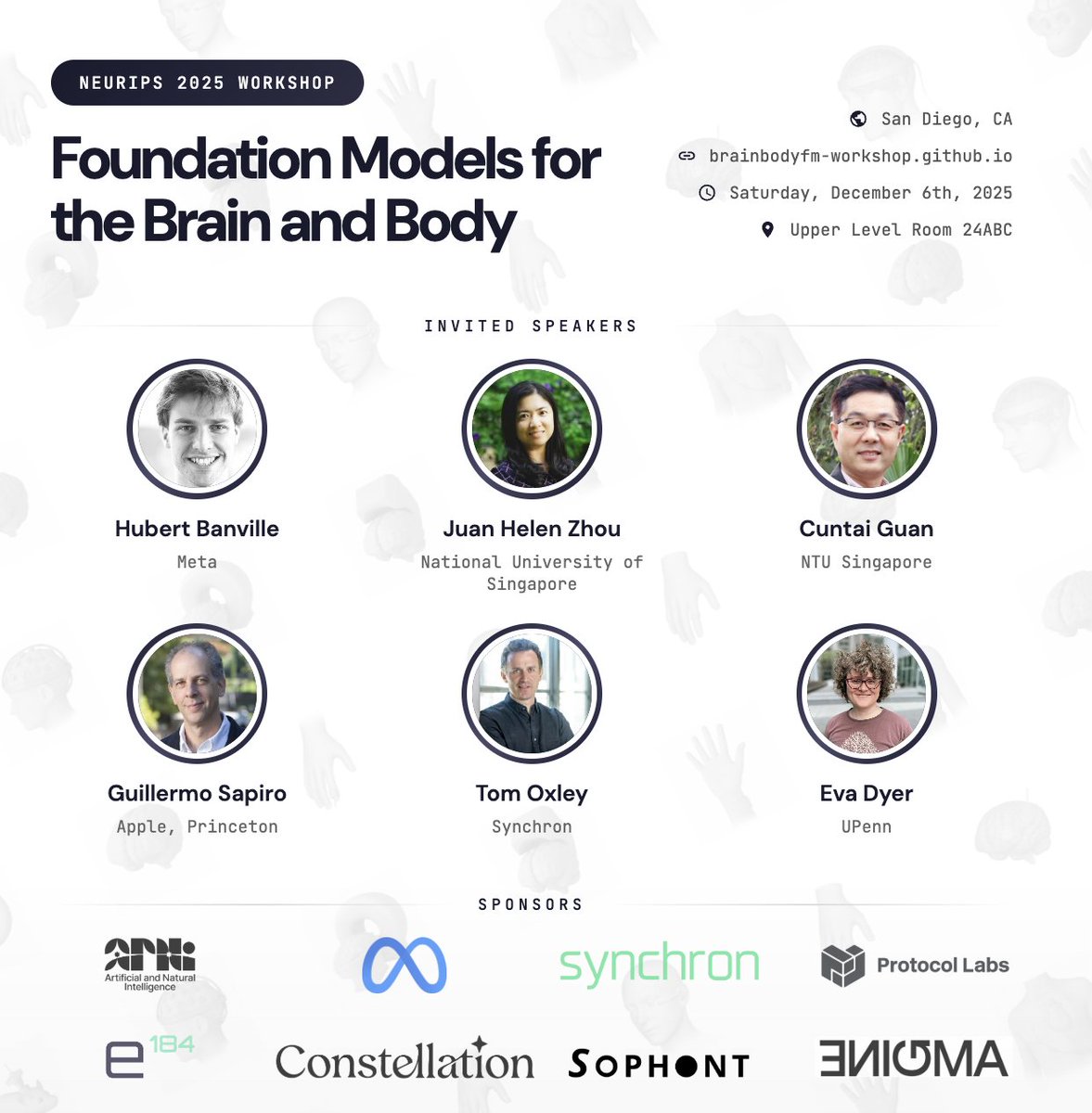

Eva Dyer

203 posts

@evadyer

Associate Professor @GeorgiaTech

New preprint! 🧠🤖 How do we build neural decoders that are: ⚡️ fast enough for real-time use 🎯 accurate across diverse tasks 🌍 generalizable to new sessions, subjects, and species? We present POSSM, a hybrid SSM architecture that optimizes for all three of these axes! 🧵1/7

NAISys deadline extended!

Excited to share our Graph Foundation Model, 🌐 GraphFM, trained on 152 datasets with over 7.4 million nodes and 189 million edges spanning diverse domains. 🚨 Check out our GraphFM preprint, where we test how the model scales with data and model size and show efficient fine-tuning on new datasets. Link: arxiv.org/abs/2407.11907

Today, we’re launching Aya, a new open-source, massively multilingual LLM & dataset to help support under-represented languages. Aya outperforms existing open-source models and covers 101 different languages, more than double the number covered by previous models. cohere.com/research/aya

Is a universal brain decoder possible? Can we train a decoding system that easily transfers to new individuals/tasks? Check out our #NeurIPS2023 paper where we show that it’s possible to transfer from a large pretrained model to achieve SOTA 🧠! Link: poyo-brain.github.io 🧵